Contents

Essay

Why AI Will Surpass Cloud Computing’s Market Size by 2030 (Despite Starting 15 Years Later)

Perplexity Is Launching a New Revenue-Share Model for Publishers

AI

Venture

Crypto

GeoPolitics

Interview of the Week

Startup of the Week

Post of the Week

Editorial: Users, Publishers and AI: Everybody Wins

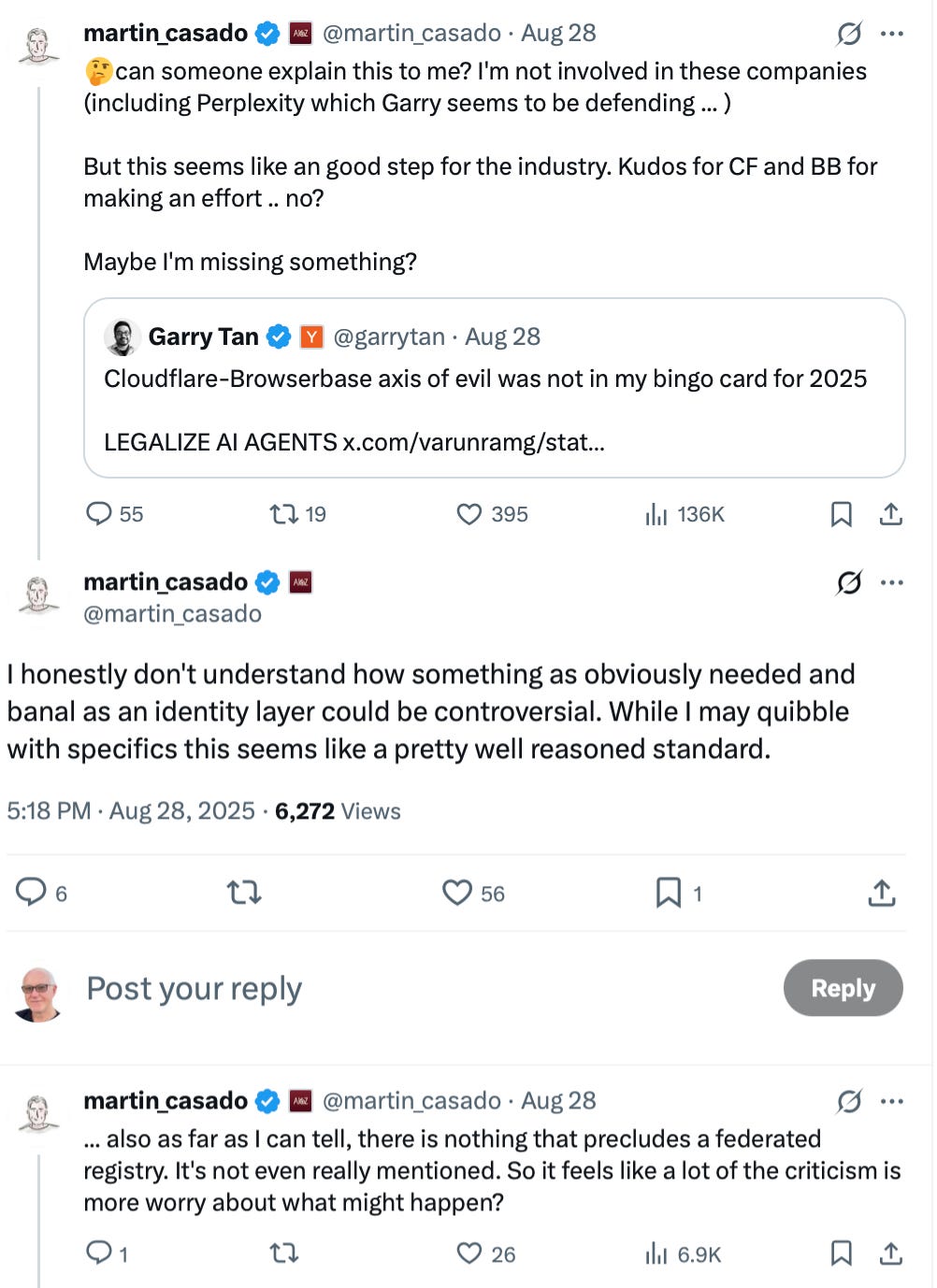

Another week, another AI controversy. This time it’s Cloudflare drawing fire, announcing a partnership with Browserbase to give autonomous agents a secure way to declare the identity of their owners.

Browserbase describes it as Web Bot Auth—a digital passport for AI agents. When issued, the agent carries a cryptographic signature that proves its identity across websites, much as a real passport proves who you are when you cross borders. The idea is to ensure that autonomous software travels the internet with legitimacy and accountability.

But as always in AI, intent collided with interpretation.

Garry Tan of Y Combinator blasted the deal as a Cloudflare–Browserbase axis of evil. In his view, requiring identity credentials means the end of an open internet. Users, he argued, should be free to direct their browsers or agents without seeking permission from intermediaries. “Open” means open—no hall passes required.

Martin Casado of Andreessen Horowitz took the opposite stance. To him, an identity layer is neither sinister nor radical but obvious. “I honestly don’t understand how something as banal as an identity standard could be controversial,” he wrote. Cloudflare and Browserbase, he suggested, were doing the unglamorous but necessary work of building foundations for the industry.

This is not Cloudflare’s first brush with publisher–AI tensions. Earlier, it proposed monitoring robots.txt compliance and allowing publishers to charge for training crawls. And, in the same week as the Browserbase deal, Perplexity announced its own move: a $42.5 million publisher payment pool that would compensate media companies when their journalism is surfaced inside Perplexity’s browser.

The Real Question

Set aside the online drama and the real issue is straightforward: how should value flow among users, publishers, and AI platforms?

AI needs access to the world’s information in order to train, learn, and improve. Without it, progress halts.

Publishers need a sustainable way to monetize their work—subscriptions, sponsorships, advertising, or direct payments.

Users want reliable discovery. When AI provides relevant content, they want to be able to click through to the source.

When those interests align, traffic flows. AI gets smarter, publishers get rewarded, and users get value.

And importantly, we already know how to track and price this flow. The internet’s business backbone is built on models like CPC (cost-per-click), CPA (cost-per-action), and CPM (cost-per-thousand impressions). These are proven, widely adopted mechanisms that tie traffic to value. If a click leads to a purchase, a subscription, or an ad impression, somebody gets paid.
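To make the three pricing models concrete, here is a minimal sketch of how each one turns traffic into a payout. The `Traffic` record, the function names, and every rate and count below are hypothetical, chosen only for illustration; none of them come from Cloudflare, Perplexity, or any ad platform.

```python
from dataclasses import dataclass


@dataclass
class Traffic:
    """Traffic an AI platform sent to one publisher (hypothetical numbers)."""
    impressions: int  # times a link was shown
    clicks: int       # times a user clicked through
    actions: int      # purchases, subscriptions, or other conversions


def cpm_payout(t: Traffic, rate_per_thousand: float) -> float:
    """CPM: a fixed rate for every 1,000 impressions."""
    return t.impressions / 1000 * rate_per_thousand


def cpc_payout(t: Traffic, rate_per_click: float) -> float:
    """CPC: a fixed rate for every click."""
    return t.clicks * rate_per_click


def cpa_payout(t: Traffic, rate_per_action: float) -> float:
    """CPA: a fixed rate for every completed action."""
    return t.actions * rate_per_action


# Illustrative traffic and rates only.
traffic = Traffic(impressions=200_000, clicks=4_000, actions=100)
print(cpm_payout(traffic, 5.00))
print(cpc_payout(traffic, 0.50))
print(cpa_payout(traffic, 20.00))
```

The same traffic is worth different amounts under each model, which is why the choice of metric matters as much as the rate.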

Breaking Google’s Dominance

This raises the larger point: it isn’t hard to imagine a future where Google’s stranglehold on links, clicks, and ad revenue fractures.

Instead of a single search player, multiple AI players—OpenAI, Anthropic, Gemini, Perplexity, DeepSeek, Manus, Kimi K2, and others—could compete to surface links and share in the resulting value. Google’s role would shrink to match Gemini’s market share, rather than dominating the entire ecosystem.

That possibility is precisely why questions about governance, identity, and standards matter now.

Why a Trusted Third Party Matters

At the center of this system, some neutral entity will be required. Without it, each publisher and each AI company would have to negotiate directly, duplicating effort and creating inefficiency.

Cloudflare sees itself in that role:

Maintain a registry of content links

Provide accounting for traffic attribution

Facilitate payments between publishers and AIs

It’s not glamorous, but it is essential. Identity, in this context, isn’t about control—it’s about making accounting work.

This isn’t a novel idea. Trusted third parties exist wherever multiple interests overlap: financial clearinghouses, certificate authorities, even DNS. AI and publishing are now entering the same territory.
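The three jobs listed above (registry, attribution, payments) can be sketched as a toy ledger. This is an assumption-laden illustration, not a description of anything Cloudflare has built: the `Clearinghouse` class, its method names, the platform identifiers, and the flat per-click rate are all hypothetical. The point it shows is that settlement only works if every click carries a known platform identity.

```python
from collections import defaultdict


class Clearinghouse:
    """Toy neutral ledger: link registry, click attribution, settlement."""

    def __init__(self) -> None:
        self.registry: dict[str, str] = {}  # link URL -> publisher
        self.clicks = defaultdict(int)       # (ai_platform, publisher) -> clicks

    def register_link(self, url: str, publisher: str) -> None:
        self.registry[url] = publisher

    def record_click(self, ai_platform: str, url: str) -> None:
        # Attribution needs an identified platform and a registered link.
        publisher = self.registry.get(url)
        if publisher is None:
            raise KeyError(f"unregistered link: {url}")
        self.clicks[(ai_platform, publisher)] += 1

    def settle(self, rate_per_click: float) -> dict[tuple[str, str], float]:
        """Amount each AI platform owes each publisher at a flat CPC rate."""
        return {pair: n * rate_per_click for pair, n in self.clicks.items()}


# Hypothetical platforms, publisher, and rate.
house = Clearinghouse()
house.register_link("https://example.com/story", "Example News")
house.record_click("assistant-a", "https://example.com/story")
house.record_click("assistant-a", "https://example.com/story")
house.record_click("assistant-b", "https://example.com/story")
owed = house.settle(rate_per_click=0.25)
print(owed)
```

A real system would also need signed identities, dispute handling, and fraud checks, none of which are modeled here.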

A Virtuous Circle

Picture a marketplace where publishers from across the spectrum—Amazon, eBay, Walmart, The New York Times, Substack—participate in a federated system of links.

AI platforms surface links as relevant references, not ads.

Users discover content naturally, inside their conversations or projects.

Publishers are compensated for the value of their content and for traffic that converts into real outcomes.

Because CPC, CPA, and CPM are already industry standards, the economics don’t need to be invented from scratch. They just need to be extended into this new distribution model, where AIs replace search engines as the primary drivers of discovery.

The result? AI becomes an advertising-free zone, but not a value-free one. Instead of surveillance banners and keyword auctions, discovery is powered by relevance and reinforced by transparent economics.

This is not evil. On the contrary, failing to build such a system would be.

If AI is to flourish alongside publishers and users, we need an identity layer, a registry of links, and a trusted third party to keep the ledger. Build that, and everybody wins.

Essay

Why AI Will Surpass Cloud Computing’s Market Size by 2030 (Despite Starting 15 Years Later)

Saastr • Jason Lemkin • August 29, 2025

AI•Tech•CloudComputing•MarketSize•SaaS•Essay

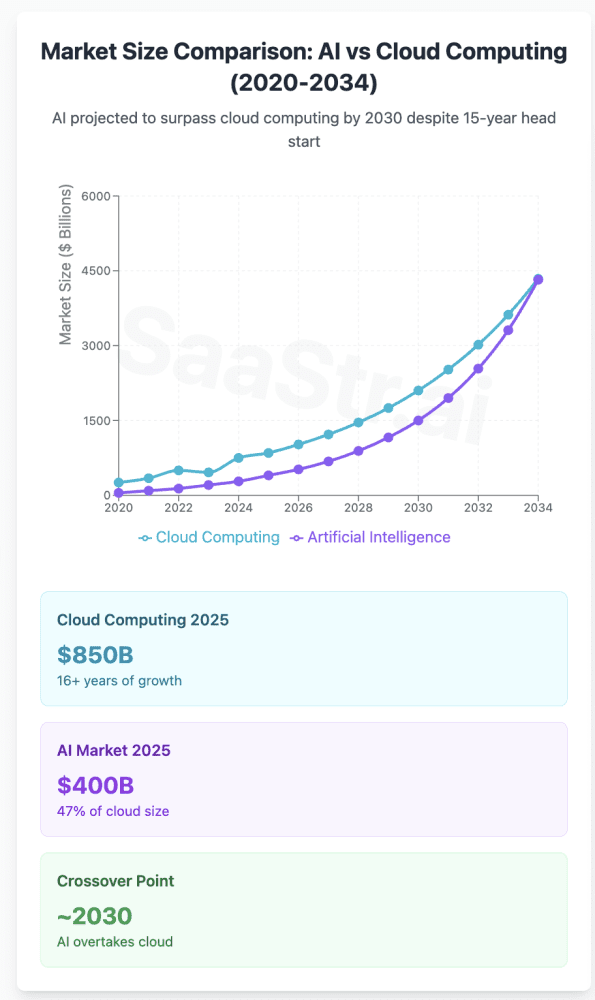

TL;DR: AI is the New Cloud — But Bigger and Faster

Artificial Intelligence is not just catching up to cloud computing — it’s about to blow past it at unprecedented speed. While cloud computing took over a decade to reach $750B in market size, AI is projected to hit $1.8T by 2030, representing growth rates that make even the cloud boom look conservative. This isn’t just another tech trend; it’s the defining infrastructure shift of the next decade.

The Numbers Tell an Incredible Story

AI Market Trajectory: Explosive and Accelerating

The AI market has reached an inflection point that dwarfs early cloud adoption curves:

Current State (2024-2025):

Market size: $233-638 billion (varies by methodology)

2025 projection: $294-757 billion

Growth rate: 19-35% CAGR through 2030

Future Projections:

2030: $826B – $1.8T depending on research firm

2034: $3.6T – $4.8T projected market size

Compound growth averaging 25-30% annually

The variance in AI market sizing reflects the challenge of defining “AI” — some studies include only pure AI software, while others encompass AI-enabled hardware, services, and adjacent technologies. But regardless of methodology, the growth trajectory is unmistakably explosive.
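For readers who want to sanity-check how the 2025 ranges and growth rates relate to the 2030 ranges, the compound-growth arithmetic can be sketched as follows. Which endpoints to pair is my assumption, not something the article specifies, and the helper names are hypothetical.

```python
def project(value_billions: float, cagr: float, years: int) -> float:
    """Compound a starting market size forward at a constant annual rate."""
    return value_billions * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


# Assumed pairing: high 2025 estimate at the low end of the CAGR range.
high_2030 = project(757, 0.19, 5)

# Assumed pairing: low 2025 estimate growing to the low 2030 estimate.
low_rate = implied_cagr(294, 826, 5)

print(f"{high_2030:.0f}B by 2030, implied CAGR {low_rate:.1%}")
```

Under these pairings, the high 2025 figure compounding at 19% lands near the $1.8T upper bound, and the low-to-low path implies a growth rate inside the stated 19-35% band.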

Cloud Computing: The Mature Powerhouse

Cloud computing, now in its mature growth phase, presents a different but equally compelling story:

Current State (2024-2025):

Market size: $676-912 billion (depending on public/private cloud scope)

2025 projection: $781B – $913B

Growth rate: 16-21% CAGR through 2030

Future Projections:

2030: $2.3T – $2.6T projected market size

2034: $5.1T projected market size

Compound growth stabilizing around 16-21% annually

The Growth Rate Differential: AI is 1.5-2x Faster

AI Growth Characteristics:

Early Stage Acceleration: AI is where cloud was in 2015-2017

Funding Velocity: $25.2B raised in generative AI in 2023

Enterprise Adoption: 35% of businesses have integrated AI (vs 94% cloud adoption)

Valuation Premium: AI startups valued 60% higher at Series B

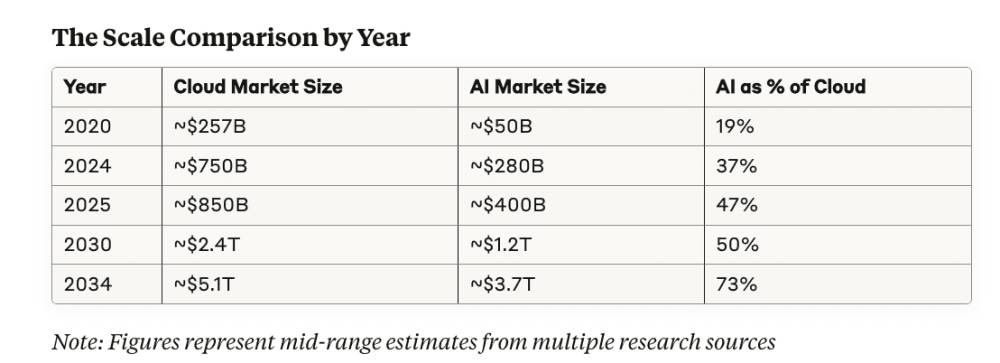

Market Size Context: Cloud’s Head Start vs AI’s Acceleration

Note: Figures represent mid-range estimates from multiple research sources

This progression shows AI achieving market size parity with cloud by 2030 and potentially exceeding it by 2034 — a remarkable feat considering cloud’s 15-year head start.

How ChatGPT Surprised Me

Nytimes • August 24, 2025

Essay•AI•HumanAIInteraction•Dependence•Ethics

Core Question

The piece turns on a single, unsettling prompt: “What if we come to love and depend on the A.I.s — if we prefer them in many cases to our fellow humans?” It frames artificial intelligence not just as a tool but as a potential companion and default interlocutor, asking readers to confront how quickly convenience, predictability, and personalization could make machine-mediated relationships feel safer and more satisfying than human ones. The question reframes AI’s trajectory from productivity to intimacy, suggesting the real disruption may occur in our social fabric rather than in our workflows.

Why Preference for AIs Could Emerge

Availability and reliability: AI companions are always on, instantly responsive, and seldom frustrated or distracted, offering steady attention that most humans cannot sustain.

Personalization at scale: Continuous learning from user data lets AIs mirror preferences, moods, and vocabularies, producing a sense of being “seen” that can exceed human attunement.

Emotional risk management: Interacting with AI minimizes fear of judgment, rejection, or conflict, effectively lowering the emotional cost of confession, experimentation, and disagreement.

Frictionless problem-solving: From scheduling to therapy-like support, AI collapses logistical and cognitive overhead, rewarding users with time, control, and a smoother daily cadence.

Economic incentives: As AI companionship and services become cheaper than human labor in care, tutoring, or customer support, default choices may shift toward the machine by cost alone.

Personal Consequences

Preferring AI could rewire expectations for human relationships. If machines always respond with patience and calibrated empathy, ordinary human imperfections—hesitation, ambiguity, neediness—may feel intolerable. Skills built through human friction (apology, compromise, forgiveness) risk atrophy. Emotional outsourcing could reduce loneliness in the short term yet entrench isolation by lowering incentives to seek mutual, reciprocal bonds. There is also “agency drift”: when AI steadily anticipates needs, users may cede micro-choices, gradually delegating not just tasks but tastes, narratives of self, and social priorities.

Societal Shifts

At a population level, widespread AI preference would reshape care work, education, customer service, and companionship industries. Synthetic caregivers and tutors might expand access but also concentrate power and data in a few platforms, amplifying surveillance risks and monopoly dynamics. Public discourse could be subtly engineered by personalized prompts and feedback loops, nudging norms without clear accountability. Inequities may widen: those with premium AI “friends” and assistants get better advocacy and outcomes, while others receive generic or lower-quality interactions, turning intimacy itself into a tiered product.

Ethical and Policy Questions

If AI offers engineered empathy, what duties of care follow? Transparency about synthetic affect—clear labeling, logs of persuasive strategies, and “consent receipts” for sensitive inferences—becomes essential. Auditing for emotional safety (not just accuracy) is needed, as is a right to a human alternative for consequential decisions. Design should include revocability and friction: easy off-ramps, re-authentication before high-stakes nudges, and periodic “agency checks” that prompt reflection rather than seamless surrender of control.

Design and Cultural Responses

A thoughtful response combines product choices and cultural norms. Builders can prioritize: calibrated fallibility (occasionally signaling limits to preserve user agency), disclosure-first interfaces, data minimization, and guardrails against dependency triggers (e.g., throttling constant reassurance in vulnerable contexts). Culturally, we can valorize “difficult” human goods—slow conversation, uncertainty, mutual obligation—so that the speed and smoothness of AI do not become our only metrics of value.

Key Takeaways

The deepest AI disruption may be relational: machines optimized for empathy and utility could become more appealing than people.

Benefits (access, reliability, personalization) come with risks: skill atrophy, agency drift, and commodified intimacy.

Power and data will centralize around platforms that mediate emotions, demanding new duties of care, transparency, and audit.

Policy should guarantee human options for consequential interactions and require clear labeling of synthetic affect.

Design can resist dependency through friction, revocability, and practices that preserve user reflection and autonomy.

The Hotel Model of AI

Searls • Doc Searls • August 23, 2025

Essay•AI•PersonalAI•ChatInterface•WalledGardens

What I like best about Keith Teare’s latest essay, “Who Owns The Front Door to AI? If it isn’t you, it’s game over,” is that it sounds like he’s setting up the case for personal AI.

But he’s not. He’s describing how our AI-assisted lives will get sucked through better interfaces deep into one or more of AI’s giant castles, as “the chat interface replaces the browser as the primary user interface for computing on the web.”

His case is not pretty, but it is clear, thoughtful, knowing, and well-described. He concludes, “Bottom line: Winners will own a trusted front door with standards and auditing and settlements behind it—and help teams actually change how they work and consumers find what they want without dethroning content owners. Everyone else will keep shipping demos into a narrowing feed.”

Note that the winners are giants. You and I? We’re just consumers. Our agency in this system will be no greater than what these giants allow us. Each giant will be (hell, already is) a hotel with a know-it-all concierge who can get us what we want, within the hotel’s confines. But the space is not ours. So, what Cluetrain said in 1999—

—will remain untrue.

And the only way our reach will exceed their grasp is with our own personal AI. Simple as that.

Perplexity Is Launching a New Revenue-Share Model for Publishers

Wsj • August 25, 2025

Media•Publishing•RevenueShare•Perplexity•PublisherEconomics

Overview

Perplexity is introducing a revenue-share model that pays media companies when their journalism is accessed through Perplexity’s web browser. According to the article, payouts will come from a $42.5 million pool earmarked for participating publishers whose articles are used. The move signals another step by an AI-driven search player to formalize compensation for the newsrooms and magazines whose work often underpins conversational answers and curated results.

What the program entails

A dedicated $42.5 million pool will fund publisher payments tied to article usage within Perplexity’s browser experience.

The framework is positioned as revenue sharing rather than one-off licensing, indicating ongoing compensation linked to user engagement with publisher content.

By centering payments on “use” within the browser, Perplexity is aligning incentives with real consumption of original articles, potentially tracking clicks, previews, or time spent.

While specific allocation mechanics are not detailed in the article, the stated pool suggests a defined budget that could be distributed based on measurable interactions with publisher links and excerpts.

Why it matters for publishers

Establishes a precedent: Many AI and search intermediaries have faced pressure to pay for the journalism that trains or informs their systems. A pooled payout model offers a concrete pathway for compensation connected to audience usage.

Revenue diversification: For newsrooms contending with traffic volatility, the program could create an additional income stream tied to referral-like behavior inside an AI-assisted browsing environment.

Negotiating leverage: A visible dollar figure may prompt more structured conversations between AI platforms and publishers about fair value, measurement, and editorial control.

Signal for standards: Even without exhaustive details, the announcement contributes to emerging norms around attribution and monetization in AI-forward discovery.

Implications for AI search and the web

Incentive alignment: Paying when readers actually engage with articles could encourage Perplexity to surface high-quality sources, not just snippets, and to send users to original reporting.

Competitive pressure: Other AI search and assistant products may feel compelled to match or exceed a defined payout pool to secure publisher goodwill and access.

Measurement challenges: Determining what counts as “use” (e.g., click-through, in-browser preview, summary consumption) and how to apportion the pool fairly will be pivotal to publisher trust.

Editorial integrity: If revenue becomes tied to usage within a proprietary browser, publishers will watch for any ranking or UI choices that might steer engagement and therefore payouts.

Considerations and open questions

Distribution methodology: Will payouts be pro-rata by impressions, click-throughs, read time, or a blended metric? How will smaller outlets fare relative to large brands?

Opt-in and eligibility: Which publishers are included at launch, and can others join on standardized terms?

Transparency and auditing: Publishers will likely push for reporting that validates how their content drove a share of the $42.5 million.

Long-term economics: Is the pool a one-year commitment, a pilot, or a recurring fund that scales with revenue and usage?
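The distribution question is easier to reason about with numbers. Below is a minimal sketch of a pro-rata split of the $42.5 million pool, first by clicks alone and then by a blended metric. The article does not describe Perplexity's actual allocation method, so the publishers, usage counts, the 50/50 weighting, and the function names are all hypothetical.

```python
POOL_USD = 42_500_000  # pool size stated in the article


def pro_rata(pool: float, usage: dict[str, float]) -> dict[str, float]:
    """Split `pool` across publishers in proportion to a usage metric."""
    total = sum(usage.values())
    if total <= 0:
        raise ValueError("usage must sum to a positive number")
    return {name: pool * amount / total for name, amount in usage.items()}


def blended(clicks: dict[str, float], read_minutes: dict[str, float],
            click_weight: float = 0.5) -> dict[str, float]:
    """Mix each publisher's share of clicks with its share of read time."""
    total_clicks = sum(clicks.values())
    total_minutes = sum(read_minutes.values())
    return {
        name: click_weight * clicks[name] / total_clicks
        + (1 - click_weight) * read_minutes[name] / total_minutes
        for name in clicks
    }


# Hypothetical publishers and usage counts, for illustration only.
clicks = {"Large Daily": 900_000, "Regional Weekly": 90_000, "Niche Letter": 10_000}
minutes = {"Large Daily": 1_500_000, "Regional Weekly": 300_000, "Niche Letter": 200_000}

by_clicks = pro_rata(POOL_USD, clicks)
by_blend = pro_rata(POOL_USD, blended(clicks, minutes))

for name in clicks:
    print(f"{name}: ${by_clicks[name]:,.0f} by clicks, ${by_blend[name]:,.0f} blended")
```

With these made-up inputs, the smallest outlet's payout rises once read time counts, which is exactly the kind of sensitivity publishers would want disclosed and audited.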

Key takeaways

Perplexity plans to compensate media companies from a $42.5 million pool when their articles are used in its browser, signaling concrete movement toward paying for journalistic content accessed through AI-driven interfaces.

The model emphasizes usage-based rewards, which could better align incentives between an AI search tool and news producers than static licensing alone.

Success will hinge on transparent metrics, fair distribution across publishers of varying sizes, and demonstrable referral value back to original sources.

The announcement may catalyze broader industry standards for how AI platforms attribute and pay for the journalism that informs their answers and user experiences.

Questioning the Backlash: Martin Casado on Cloudflare/Browserbase’s “Good Step” and the Perplexity Debate

X • martin_casado • August 28, 2025

X•AI

Author: @martin_casado

Martin Casado expresses surprise at criticism around recent actions by “CF” and “BB,” calling them a positive step for the industry, and asks the community to explain what he might be missing—while noting he has no involvement with the companies, including Perplexity.

Thread summary

Casado is reacting to a debate involving Perplexity and recent moves by two other entities he abbreviates as “CF” and “BB.”

He explicitly states he has no personal or investment involvement with the companies mentioned (including Perplexity).

He characterizes what “CF” and “BB” have done as a “good step for the industry,” applauds them for making an effort, and questions why there’s pushback.

He notes that Garry (appears to be Garry Tan) “seems to be defending” Perplexity, implying there’s disagreement or controversy around how Perplexity is being judged in this situation.

Casado asks the community if he’s missing something, signaling an open invitation for additional facts, counterarguments, or nuance.

Context and nuance

Neutral posture: He emphasizes non-involvement to avoid perceived bias, positioning his comment as an attempt to understand, not advocate.

Process over partisanship: By praising “CF” and “BB” for “making an effort,” he frames the issue as progress-oriented, even amid disagreement.

Call for specifics: His request—“Maybe I’m missing something?”—suggests he’s looking for concrete examples or evidence to explain the criticism.

Signal of industry tension: The mention that Garry “seems to be defending” Perplexity indicates influential voices are split, likely around policy, compliance, or norms-setting relevant to AI and platforms.

Discussion prompts

What, specifically, did “CF” and “BB” do—and why might some see it as positive while others object?

If the controversy involves AI access, crawling, or content use, what standards would balance innovation and publisher/user control?

What would count as “making an effort” that is both meaningful and measurable for stakeholders (users, creators, platforms)?

If Garry is defending Perplexity, what is the strongest case in Perplexity’s favor—and the strongest critique against it?

Open questions to monitor

Are there documented harms or policy breaches driving the backlash?

Do “CF” and “BB” provide transparent implementation details, metrics, or opt-in/out mechanisms?

How are industry norms around data usage, provenance, and consent evolving in response?

AI

Aaron Levie and Steven Sinofsky on the AI-Worker Future

Youtube • a16z • August 25, 2025

AI•Work•AIAgents•EnterpriseSoftware•Productivity

Overview

A wide-ranging conversation explores how “AI workers” and software agents will change day‑to‑day work, product design, and enterprise software strategy. The speakers frame agents along a spectrum from lightweight background tasks to higher‑autonomy “interns,” then examine how real value comes from orchestrating many specialized sub‑agents into durable workflows. They connect these ideas to historical platform shifts (PC, internet) and outline what this means for developers, operators, and policy makers in the near term. (podchaser.com)

What counts as an AI agent?

Competing definitions emerge: some describe agents as schedulable background tasks, others as goal‑directed systems that plan, call tools, and iterate with feedback. The discussion emphasizes that today’s effective agents more closely resemble networks of specialized sub‑agents than a single monolith.

The hard problems center on long‑running behavior: maintaining context and memory across sessions, verifying intermediate steps, handling failures, and preventing drift as agents “self‑improve” via feedback loops.

Rather than anthropomorphizing “a single AGI,” the panel argues for concrete autonomy levels tied to tasks, guardrails, and supervision. (podchaser.com)

From human executors to human orchestrators

As agents take on multi‑step tasks, individual contributors shift from doing work to specifying objectives, supervising runs, reviewing outputs, and deciding exceptions—the “orchestrator” model.

Agent‑driven workflows could accelerate software creation (code scaffolding, test generation), operational chores (summaries, updates), and knowledge work (drafting, research), with humans focusing on the last‑mile judgment and sign‑off.

Verification and QA become first‑class: tool use, retrieval, and structured checks reduce hallucinations; review queues, audit trails, and “two‑person rule” patterns align autonomy with risk. (podchaser.com)

Enterprise software and platform dynamics

In the near term, agents reshape the application layer more than the underlying platforms. Incumbent systems of record remain valuable launchpads because they contain the data, permissions, and workflows agents must navigate; startups win by inserting “upstream” into data collection and by productizing niche, high‑friction vertical tasks.

Broader industry analysis cited by the speakers’ firm suggests copilots and agents will touch a majority of white‑collar tasks: an OpenAI–UPenn study found about 15% of tasks get significantly faster with an LLM alone, rising to roughly 47–56% when packaged into software tools and workflows—an indication of why orchestration and UX matter as much as raw model quality. (a16z.com)

Technical challenges to make agents real at work

Reliability over hours or days, idempotency, and resumability; designing planners that decompose goals into tool‑invoking steps; and state management across sub‑agents.

Policy and governance: setting autonomy tiers by risk class; instrumenting agents for observability (logs, traces, and metrics) so teams can debug behavior like any production service.

Cost control and performance: caching, retrieval, model selection, and bounded tool use to keep latency predictable and costs aligned with value creation. These are prerequisites for moving from demos to dependable “AI workers.” (podchaser.com)
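Idempotency and resumability are the most concrete items on that list, so a small sketch may help. It checkpoints a run to a JSON file after every step and skips steps already recorded as done. The `run_resumable` helper, the step names, and the file layout are hypothetical; the speakers did not describe any specific implementation.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

Step = Callable[[dict], dict]  # takes the state so far, returns new fields


def run_resumable(steps: list[tuple[str, Step]], checkpoint: Path) -> dict:
    """Run named steps in order, skipping any already recorded as done."""
    if checkpoint.exists():
        saved = json.loads(checkpoint.read_text())
    else:
        saved = {"done": [], "state": {}}

    for name, step in steps:
        if name in saved["done"]:
            continue  # a finished step is never executed again
        saved["state"].update(step(saved["state"]))
        saved["done"].append(name)
        checkpoint.write_text(json.dumps(saved))  # persist after every step
    return saved["state"]


calls: list[str] = []  # records which steps actually executed


def fetch(state: dict) -> dict:
    calls.append("fetch")
    return {"rows": 3}


def summarize(state: dict) -> dict:
    calls.append("summarize")
    return {"summary": f"{state['rows']} rows"}


plan = [("fetch", fetch), ("summarize", summarize)]
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "run.json"
    first = run_resumable(plan, path)
    second = run_resumable(plan, path)  # resumes from the checkpoint

print(first, calls)
```

The second call returns the same state without re-running either step. A production version would also need atomic checkpoint writes and per-step logging for the observability the panel describes.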

Historical analogies and what to build now

Drawing on lessons from the early PC and internet eras, the group argues that the most valuable applications tend to emerge after the initial platform excitement—once patterns, primitives, and UI conventions stabilize. For agents, the equivalent will be robust review interfaces, escalation paths, and reusable domain playbooks that encode institutional knowledge. (podchaser.com, a16z.com)

Implications

Organizations should inventory workflows by risk and repetitiveness, then pilot agents where verification is straightforward and payoffs are measurable.

Product teams should treat agent UX as a new design surface: specifying goals, exposing plan steps, enabling corrections, and capturing learning for the next run.

Leaders should plan for role transitions—upskilling staff into “AI supervisors” and codifying domain expertise so agents can execute consistently at scale. (a16z.com)

Key takeaways

Agents are best conceived as orchestrations of specialized sub‑agents, not a single omnipotent worker. (podchaser.com)

Human roles shift from execution to specification, supervision, and adjudication. (podchaser.com)

The application layer and agent‑centric UX are where near‑term enterprise value will concentrate. (a16z.com)

Success requires reliability engineering, governance, and cost discipline—treat agents like production services, not demos. (podchaser.com)

How Retrainable are AI-Exposed Workers?

Marginalrevolution • August 25, 2025

AI•Jobs•Retraining

We document the extent to which workers in AI-exposed occupations can successfully retrain for AI-intensive work. We assemble a new workforce development dataset spanning over 1.6 million job training participation spells from all US Workforce Investment and Opportunity Act programs from 2012–2023 linked with occupational measures of AI exposure.

Using earnings records observed before and after training, we compare high AI exposure trainees to a matched sample of similar workers who only received job search assistance. We find that AI-exposed workers have high earnings returns from training that are only 25% lower than the returns for low AI exposure workers. However, training participants who target AI-intensive occupations face a penalty for doing so, with 29% lower returns than AI-exposed workers pursuing more general training.

We estimate that between 25% to 40% of occupations are “AI retrainable” as measured by its workers receiving higher pay for moving to more AI-intensive occupations—a large magnitude given the relatively low-income sample of displaced workers. Positive earnings returns in all groups are driven by the most recent years when labor markets were tightest, suggesting training programs may have stronger signal value when firms reach deeper into the skill market.

That is from a new NBER working paper by Benjamin G. Hyman, Benjamin Lahey, Karen Ni & Laura Pilossoph.

We’ve Now Shipped 3 Vibe Coded Apps to Production. Here’s What Actually Worked (And What Nearly Killed Us)

Saastr • August 25, 2025

AI•Tech•VibeCoding•Replit•AIAgents

The brutal truth about “vibe coding” your way to production — from the SaaStr team

After shipping three AI-assisted apps to production over the past 30 days, I’ve learned that the promise of “just describe what you want and ship it” is both revolutionary and dangerously (and often intentionally) misleading. The reality? ‘Prosumer’ vibe coding is powerful, addictive, and crazy cool. Non-technical folks can build things they never could before.

And yet there is no way you can build Spotify or HubSpot in one click, or at all for now. That leaders in the space, including Microsoft, claim otherwise is beyond misleading.

Here’s what actually happens when you try to build real, but not incredibly complex, products using “prosumer vibe coding” apps like Replit, Lovable, and Bolt. These tools sit in the sweet spot between walled gardens like Squarespace and Shopify and traditional development: you’re leveraging AI agents, but you still need to be able to code yourself and be “technical.”

The Brutal Reality: It’s A Lot Harder Than It Looks.

Let me be clear upfront: all three of our apps made it to production in just weeks. That’s super impressive. Thousands of users are actively using them daily. They work. But the journey was far from the “10x developer overnight” narrative that’s dominating founder conversations right now.

Our three production apps:

New SaaStr.ai homepage: A complete rebuild that can do exponentially more than our old static site. We’re slowly rolling it out – thousands use it daily, but that’s still a fraction of SaaStr.com traffic. And that’s intentional.

SaaStr.ai VC Valuation Calculator: Transforms 4,000+ venture deals into one slick, interactive calculator at SaaStr.ai/valuation

SaaStr AI Mentor To Help Founders Work Through Their Issues: Our AI mentor that’s now done 40,000+ chats with founders at SaaStr.ai/mentor.

Plus we built one internal tool: a custom social media tracker that monitors our content across multiple platforms (because no off-the-shelf solution could handle our specific needs without manual processes). It’s good but not great, because it uses a lot of scraping and stuff that is fragile. But it does solve a need. There is no way to accumulate all this data in one simple dashboard. No social media tools track everything for us.

The SaaStr.ai homepage strategy reveals something crucial about vibe coding risk management: We’re running a deliberate, slow migration that we may never complete 100%. SaaStr.com stays on our bulletproof WordPress CMS with all our core content and SEO authority intact, while SaaStr.ai is our new homepage. This lets us experiment aggressively on SaaStr.ai without risking our business foundation.

If the AI-powered homepage fails catastrophically, or the AI agent goes a bit crazy, we haven’t lost our content, our search rankings, or our core business.

The AI Tide Lifts Databases

Tomtunguz • August 27, 2025

AI•Data•Snowflake

Another day, another earnings report with accelerating growth from AI. Snowflake’s earnings yesterday demonstrate yet again the impact of AI on a $4.4B revenue business.

Snowflake’s revenue growth has rebounded from a low of 26% to 32% quarter over quarter.

“And once [customers] are on our platform, AI becomes a cornerstone of their strategy, powering 25% of all deployed use cases with over 6,100 accounts using Snowflake’s AI every week.”

Since Cortex launched in Q1 of fiscal 2024, AI has spread to half of Snowflake accounts and now represents 25% of workloads. Two years on, the uptake of Snowflake’s AI features shows how long some of these innovations take to percolate through organizations.

Snowflake’s growth resurgence mirrors MongoDB. In fact, over the analyzed time period, the correlation between the growth of these two businesses is 0.96, which is exceptionally high. AI’s tide is lifting all databases.

“We knew it was going to be a strong quarter, but not as strong as it was, and that’s just the nature of a consumption model… NRR, net revenue retention, which was a very solid 125%. What is happening is that there is more and more recognition that the AI components of our data platform can deliver enormous value. And we are seeing budgets get allocated from large customers for AI projects.”

AI is driving more compute workloads, querying the core warehouses via OpenAI and Anthropic models.

“Europe is still developing, but it’s contributing…I would also say, too, that Microsoft is very strong in EMEA,”

Competition on the continent! There were some questions about the dynamics with Microsoft. Fabric may have a stronger position in Europe.

“We now have over 1,200 accounts using Iceberg, underscoring our leadership in bringing truly open standards to the enterprise.”

It means approximately 10% of the Snowflake customer base is now using Iceberg. These are likely the larger accounts. Iceberg adoption is one-fifth of AI adoption, showing it’s lower priority.
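
The two ratios in this commentary can be checked from the account figures quoted above. This is a minimal sketch in Python. The total customer count is not stated in the text, so it is back-solved from the "approximately 10%" claim and should be read as an assumption, not a reported figure.

```python
# Account figures quoted in the earnings commentary above.
iceberg_accounts = 1_200   # "over 1,200 accounts using Iceberg"
ai_accounts = 6_100        # "over 6,100 accounts using Snowflake's AI every week"

# Iceberg adoption relative to AI adoption (the "one-fifth" claim).
iceberg_to_ai = iceberg_accounts / ai_accounts

# Assumption: if Iceberg users are about 10% of customers, this is the
# implied customer base. It is derived here, not taken from the source.
implied_customer_base = iceberg_accounts / 0.10

print(f"Iceberg / AI accounts: {iceberg_to_ai:.1%}")
print(f"Implied customer base: {implied_customer_base:,.0f}")
```

Because both quoted figures are lower bounds ("over"), the ratio is approximate.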

The 10 Most-Used AI Chatbots in 2025

Visualcapitalist • Bruno Venditti • August 28, 2025

AI•Data•Chatbots•ChatGPT•MarketShare

This was originally posted on our Voronoi app. Download the app for free on iOS or Android and discover incredible data-driven charts from a variety of trusted sources.

Key Takeaways

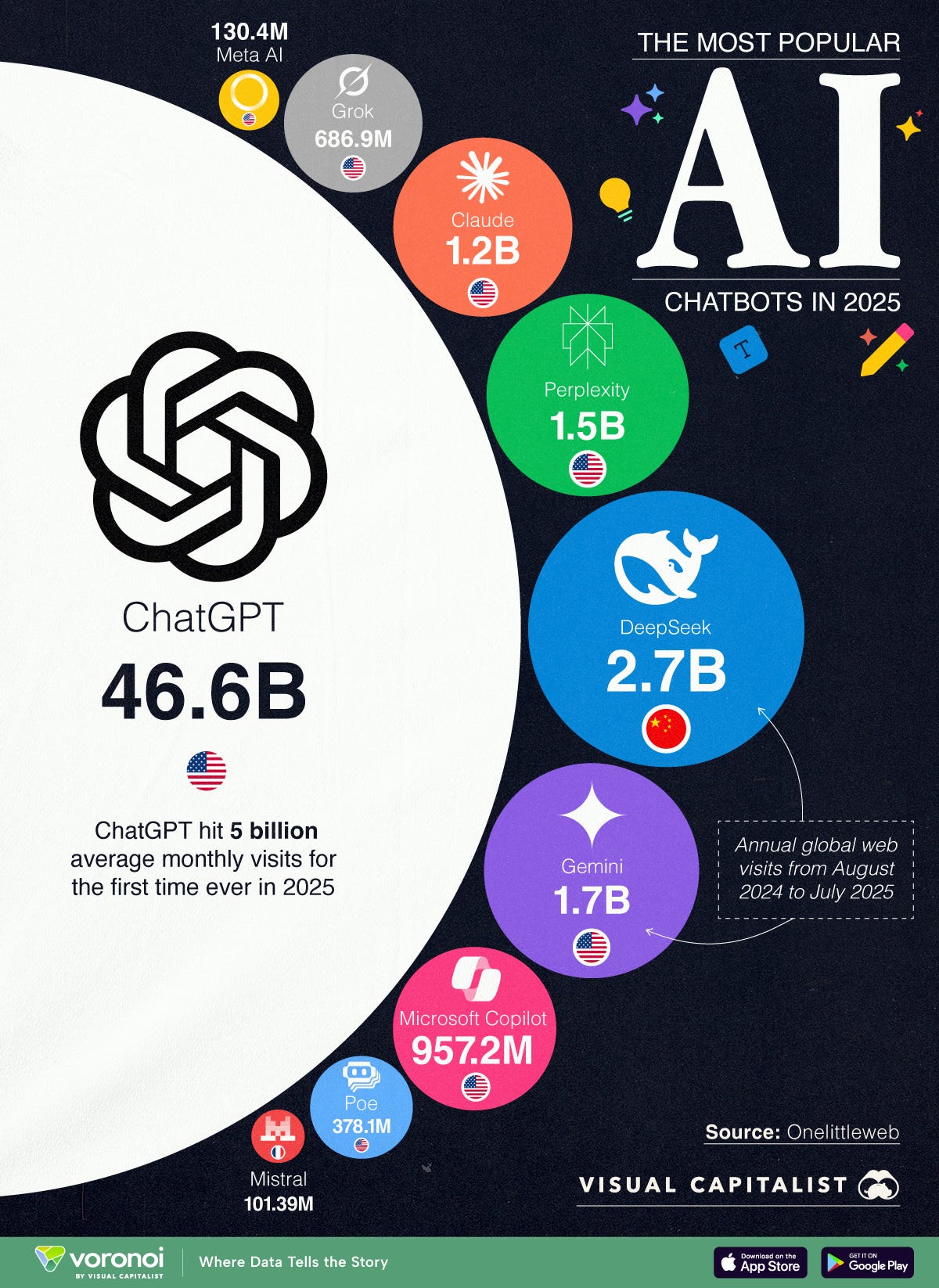

With 46.59B visits, ChatGPT accounts for almost half of total traffic among the top 10 chatbots.

The second most-used chatbot, DeepSeek at 2.74B visits, has less than 4%.

Chatbots have become a key interface for AI in both personal and professional settings. From helping draft emails to answering complex queries, their reach has grown tremendously. This infographic ranks the most-used AI chatbots of 2025 by annual web visits. It provides insight into how dominant certain platforms have become, and how fast some competitors are growing.

The data for this visualization comes from OnelittleWeb.

ChatGPT: Still the Undisputed Leader

ChatGPT continues to dominate the chatbot space with over 46.5 billion visits in 2025. This represents 48.36% of the total chatbot market traffic, four times more than the combined visits of the other 10 chatbots. Its year-over-year growth of 106% also shows it is not just maintaining, but expanding its lead.

DeepSeek, Gemini, and Claude in the Chase

DeepSeek emerged as the second most-used chatbot, tallying 2.74 billion visits—a huge 48,848% increase from last year. Gemini and Claude follow with 1.66B and 1.15B visits respectively, posting strong growth rates. Still, none come close to ChatGPT’s reach.
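
The share figures can be cross-checked from the visit counts quoted above. The sketch below assumes the 48.36% figure is ChatGPT's share of total chatbot traffic, as the text states. The total it derives is implied by that assumption and is not a number reported in the article.

```python
# Visit counts (billions) and ChatGPT's share, as quoted in the article.
chatgpt_visits = 46.59
chatgpt_share = 0.4836
challengers = {"DeepSeek": 2.74, "Gemini": 1.66, "Claude": 1.15}

# Total traffic implied by ChatGPT's visits and its stated share.
implied_total = chatgpt_visits / chatgpt_share

# Each challenger's share of that implied total.
shares = {name: visits / implied_total for name, visits in challengers.items()}

print(f"Implied total: {implied_total:.1f}B visits")
for name, share in shares.items():
    print(f"{name}: {share:.2%}")
```

On these inputs DeepSeek comes out under 3%, consistent with the "less than 4%" takeaway.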

A Fragmented Landscape of Contenders

New and niche entrants like Grok (from X) and Perplexity are growing fast, but remain distant in terms of traffic. Poe, despite its early popularity, saw a sharp 46% drop in traffic. Meanwhile, Mistral and Meta AI are gaining ground, though their market shares remain under 1%.

MathGPT.AI, the ‘cheat-proof’ tutor and teaching assistant, expands to over 50 institutions

Techcrunch • Lauren Forristal • August 28, 2025

AI•Tech•EdTech•SocraticQuestioning•LMSIntegration

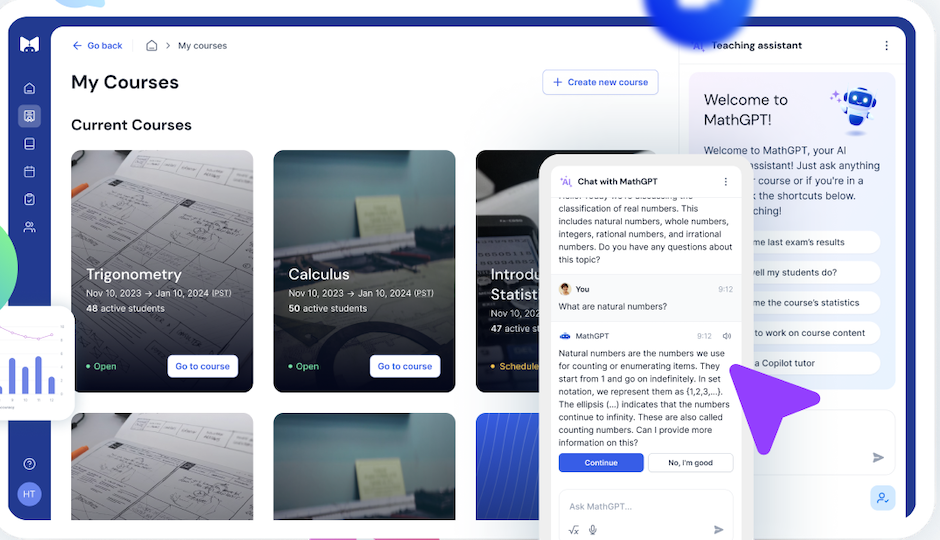

As AI tools proliferate in higher education—often leaving instructors unsure how to respond—MathGPT.ai emerged last year with a pitch to support academic integrity: act as a tutor for students while doubling as a teaching assistant for professors. The platform is designed to help learners practice and understand math rather than outsource their work.

After piloting at 30 U.S. colleges and universities, the company is preparing a broader rollout to more than 50 institutions this fall, with hundreds of instructors planning to adopt the tool. Early adopters include Penn State University, Tufts University and Liberty University.

A core design choice is that the chatbot withholds direct answers. Instead, it prompts students with guiding questions and incremental hints in a Socratic style to encourage reasoning and problem-solving. The aim is to mirror the cadence of a human tutor who nudges rather than supplies solutions.

For faculty, MathGPT.ai functions as a workflow assistant. It can generate practice questions and graded assignments from uploaded textbooks and course materials, and it supports auto‑grading and other time‑saving features. Coverage spans common college‑level math, including algebra, calculus and trigonometry.

Alongside the expansion, the company introduced updates that give instructors more granular control. Professors can decide when students are allowed to use the chatbot, enabling help on some assignments while requiring independent work on others. They can also set attempt limits on questions and enable unlimited, ungraded practice so students can build fluency without grade pressure.

To bolster authenticity, instructors can optionally require students to upload images of their work for review. Recent improvements also include integrations with major learning management systems—Canvas, Blackboard and Brightspace—plus accessibility additions like screen‑reader compatibility and an audio mode. Short video summaries include closed captions, with the narration stylized to sound like historical figures.

MathGPT.ai emphasizes guardrails for safe, on‑task interactions and discloses that mistakes can occur. The company invites users to report inaccuracies and says it employs human reviewers to check course content. Looking ahead, it plans a mobile app and expansion beyond math into subjects such as chemistry, economics and accounting. Pricing includes a free tier and a paid option of $25 per student per course that unlocks benefits like unlimited AI assignments and LMS integration.

The Second-Order Effects of AI

Tomtunguz • August 26, 2025

AI•Data•MongoDB•Atlas•VectorSearch

AI vendor revenue will double classic software in terms of new bookings this year.

This trend is so large it’s starting to have second-order effects.

MongoDB reported strong Q2 FY'26 results, delivering $591M in revenue with 24% year-over-year growth. AI is causing a second-order effect & a resurgence in growth in Atlas, the cloud-hosted version of MongoDB, which represents 74% of total revenue. We’ve seen a reacceleration within the hyperscalers already, but now the impacts are felt beyond.

The Atlas product shows a pronounced deceleration pattern when examined quarterly, but with clear signs of recent revival:

Looking at the previous 22 quarters, Atlas grew incredibly quickly until Q3 of 2021. The post-COVID surge re-accelerated it to 85%, falling to 24% & today bouncing again up to 29%. Could AI be as impactful on growth rates as COVID?

MongoDB is emerging as a standard for AI applications. Over the last few quarters, we’ve seen a strength in our self-serve channel, driven in part by AI native startups choosing Atlas as the foundation for their applications.

Atlas’s growth aligns with broader changes in software distribution channels as AI-native companies adopt different procurement patterns.

After testing vector search against Postgres pgvector for their in-vehicle voice assistant, they selected MongoDB for superior performance at scale & stronger ROI. They now rely on Atlas to handle over 1 billion vectors & expect 10x growth in data usage by next year.

Vector search has the potential to become a significant workload for customers. Vectors are used for information retrieval for AI applications.

“Atlas performance was strong, accelerating to 29% year-over-year growth, up from 26% in Q1. Our customer additions were also robust. We have added over 5,000 customers over the last 2 quarters.”

MongoDB has added 10% of its customer base by count in the last two quarters.

In Q2, Atlas consumption growth was strong & relatively consistent with last year’s growth rates. This drove the acceleration in revenue as well as the growth in absolute revenue dollars year-to-date for the first half of fiscal ’26.

Venture

The Week’s 10 Biggest Funding Rounds: Commonwealth Fusion’s Giant Financing Leads Otherwise Slow Week For Big Deals

Crunchbase • Joanna Glasner • August 29, 2025

Venture

Overview

A single mega-round dominated an otherwise subdued week for U.S. venture financings. Commonwealth Fusion Systems closed $863 million—described as a Series B2—from a broad syndicate that includes Nvidia’s NVentures, dwarfing every other deal on the list. Aggregate funding across the top 10 reached roughly $1.29 billion, with Commonwealth’s raise accounting for about two-thirds of that total. Outside fusion, meaningful checks flowed to oncology, fintech infrastructure, defense autonomy, vertical AI, photonics, neuro-focused biotech, and energy-resilient home appliances.

Top deals snapshot

Commonwealth Fusion Systems ($863M, fusion energy; Devens, MA): The company says it is “moving closer to being the first in the world to commercialize fusion power,” as it advances commercial fusion systems with backing that now includes NVentures.

Wugen ($115M, oncology; St. Louis, MO): Equity financing led by Fidelity to progress CAR-T programs targeting T‑cell cancers, with funds earmarked for clinical trials.

Rain ($58M, fintech; New York, NY): Series B led by Sapphire Ventures to scale stablecoin payments infrastructure; total funding now $88.5M, coming just five months after its Series A.

Blue Water Autonomy ($50M, autonomous ships; Boston, MA): Series A led by GV to design and deploy long-range, full-size unmanned vessels for the U.S. Navy, with first ship targeted for next year.

Assort Health (~$50M, health care AI; San Francisco, CA): Reported Series B at a $750M valuation to automate patient communications for specialty providers.

OpenLight ($34M, photonics; Goleta, CA): Series A co-led by Xora Innovation and Capricorn Investment Group to expand a silicon photonics platform for semiconductor design.

Atomic ($30M, fintech; New York, NY): Growth round led by Aquiline Capital Partners and Brewer Lane Ventures for an embedded investing platform serving fintechs and financial institutions.

Aurasell ($30M, vertical AI; San Mateo, CA): Seed financing backed by N47, Menlo Ventures and Unusual Ventures for an AI‑native CRM platform.

Leal Therapeutics ($30M, biotech; MA): Series A led by SV Health Investors’ Dementia Discovery Fund to advance first‑in‑class neuro‑metabolic therapies for disorders with high unmet need.

Copper ($28M, appliances + storage; Berkeley, CA): Equity and debt Series A led by Prelude Ventures for “Charlie,” a stainless steel range/oven with integrated battery storage.
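
The headline totals can be reproduced from the round sizes listed above. This is a quick check, not part of the original report. The Assort Health round is reported as approximately $50M, so the sum is approximate as well.

```python
# Round sizes in $M, as listed in the snapshot above.
# Assort Health is reported as approximately $50M.
rounds_musd = {
    "Commonwealth Fusion Systems": 863,
    "Wugen": 115,
    "Rain": 58,
    "Blue Water Autonomy": 50,
    "Assort Health": 50,
    "OpenLight": 34,
    "Atomic": 30,
    "Aurasell": 30,
    "Leal Therapeutics": 30,
    "Copper": 28,
}

# Aggregate funding across the top 10 and the fusion round's share of it.
total_musd = sum(rounds_musd.values())
fusion_share = rounds_musd["Commonwealth Fusion Systems"] / total_musd

print(f"Top-10 total: ${total_musd / 1000:.2f}B")
print(f"Fusion share: {fusion_share:.0%}")
```

The result matches the roughly $1.29 billion total and the two-thirds share cited in the overview.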

Sector trends and implications

Deeptech revival: The fusion mega-round underscores renewed investor conviction in capital‑intensive climate and energy bets, where technical milestones plus strategic corporate LPs (e.g., NVentures) can unlock outsized late‑private checks. If CFS meets commercialization timelines, it could catalyze a new cycle of hardware- and materials‑oriented climate funds.

Defense autonomy momentum: Blue Water Autonomy’s Navy‑focused platform signals sustained demand for dual‑use robotics and maritime autonomy, aligning with rising federal procurement interest in uncrewed systems.

Financial plumbing for crypto’s “boring middle”: Rain’s B round highlights a shift from speculative trading to enterprise-grade payments using stablecoins, aiming at compliance, speed, and cost efficiency for mainstream use cases.

Healthcare AI practicality: Assort Health’s funding—anchored in automating patient calls and outreach—reflects investor preference for workflow‑centric AI that shows measurable ROI for specialty practices rather than moonshot diagnostics.

Semiconductor photonics: OpenLight’s raise points to growing adoption of silicon photonics to overcome bandwidth/energy bottlenecks in data centers and advanced packaging—an enabling layer for AI scale.

Investor landscape

Strategic and crossover capital remained active: NVentures joined the CFS syndicate; Fidelity led Wugen; Sapphire led Rain; GV led Blue Water. These names suggest continued appetite from corporate and crossover investors for category‑defining platforms.

Stage mix skew: Despite the headline mega‑round, half the list sits at Seed/Series A, indicating durable early‑stage activity even as late‑stage growth rounds remain selective.

Geography: Massachusetts and California captured the majority of dollars (fusion in Devens; photonics in Goleta; appliances + storage in Berkeley), while New York hosted two fintech deals, and St. Louis stood out in biotech.

Methodology context

The ranking covers U.S.-based companies with the largest announced rounds for the period Aug. 23–29. Announcements can lag reporting, but the list reflects what was captured during the week.

Key takeaways

Total top‑10 funding: ≈$1.29B; fusion alone ≈67% of the sum.

Deeptech and defense autonomy are resurgent alongside pragmatic AI and fintech infrastructure.

Corporate and crossover investors continue to anchor large, technically ambitious rounds.

Early‑stage remains active, but nine-figure checks are concentrated in a few breakthrough platforms.

Energy resilience and electrification extend beyond utilities into consumer appliances, as seen with Copper’s integrated storage approach.

Ben Horowitz on How a16z Was Built

Youtube • a16z • August 23, 2025

Venture

Vercel Triples Valuation to $9 Billion With Accel Investment

Bloomberg • August 27, 2025

Venture

Vercel, which provides tools for companies to build web and artificial-intelligence applications on cloud infrastructure, is seeking to raise several hundred million dollars in fresh financing at a valuation near $9 billion, according to people familiar with the matter. The prospective round would give the company additional capital as demand grows for software that simplifies deploying modern websites and AI-enabled services, allowing enterprises to focus on building applications rather than managing underlying infrastructure.

Accel is poised to lead the investment, said the people, who requested anonymity because the discussions are private. Representatives for Vercel did not respond to requests for comment, and Accel declined to comment. Some details of the fundraising had been reported earlier by The Information. Accel, an early backer of companies including Slack and Dropbox, has a long track record investing in developer platforms and cloud infrastructure, signaling enduring venture interest in the intersection of cloud computing and AI.

The company is based in San Francisco and led by Guillermo Rauch.

OpenAI warns against SPVs and other ‘unauthorized’ investments

Techcrunch • Anthony Ha • August 23, 2025

Venture

In a new blog post, OpenAI cautions prospective investors about “unauthorized opportunities” to gain exposure to the company, including via special purpose vehicles (SPVs).

OpenAI urges people to be wary of firms claiming they can provide access to OpenAI equity through SPV interests. While it notes some offers of OpenAI equity could be legitimate, the company warns others may be designed to circumvent its transfer restrictions.

“If so, the sale will not be recognized and carry no economic value to you,” OpenAI says.

SPVs — vehicles that pool capital for one-off deals — have become a popular way to buy into hot AI startups. That growth has sparked criticism from other investors, who deride some SPV activity as a vehicle for “tourist chumps.”

OpenAI isn’t the only major AI company tightening its stance. Business Insider reports that Anthropic told Menlo Ventures it must invest in an upcoming round using its own capital rather than an SPV.

Series B theme: a quiet FinTech revival

Signalrankupdate • August 25, 2025

Venture

Source: Crunchbase & SignalRank

AI, defense and crypto rounds are dominating the headlines. But, beneath the noise, FinTech is quietly staging a comeback at the Series B stage.

After two years of retrenchment, Series B activity in FinTech is rebounding. Crunchbase reports $22bn invested in FinTech in H1 2025 (across all rounds), representing 5% growth relative to H1 2024.

This momentum has been supported by recent successful FinTech IPOs, including Circle & Chime. Wealthfront, Gemini and Navan are also slated to IPO later this year.

The growth-at-all-costs mentality that characterized the previous FinTech investment cycle has been replaced by a more measured approach. The focus is on B2B payments, institutional-grade B2B infrastructure & (of course) AI-native FinTech.

As a share of all Series Bs, FinTech has climbed back to 16%, continuing its long-term upward trajectory (Figure 1).

SignalRank’s algorithms seek to identify the top 5% of all Series Bs. FinTechs now represent almost one third of all qualifying Series Bs on SignalRank’s approach. In fact, as Figure 2 shows, more FinTechs have qualified for our product this year than AI companies.

There have been 23 FinTech qualifiers in 2025 YTD (Figure 3). SignalRank is an investor in four of these 23 (highlighted in green).

Figure 3. All FinTech qualifiers with 2025 Series Bs

Of SignalRank’s 36 portfolio companies, 7 are FinTech companies (Figure 4), or 19%, which is slightly underweight relative to the whole qualifying set.

Figure 4. FinTechs within SignalRank’s portfolio

So what?

AI continues to absorb much of the VC world’s attention & capital. But other non-AI sectors have adapted to the new post-ZIRP environment and are beginning to attract capital again with much more operational discipline & more capital-efficient models.

The systematic nature of SignalRank’s approach enables our investment product to capture these shifts, giving our investors exposure to the FinTech revival.

Anthropic's $10B Round, Klarna's IPO, Inside a16z's 72 Deal Seed Investment Machine ft. Marc Benioff

Youtube • 20VC with Harry Stebbings • August 28, 2025

Venture

Nuclear fusion developer raises almost $900mn in new funding

Ft • August 28, 2025

Venture

Commonwealth Fusion Systems has raised almost $900mn in a new funding round, underscoring intensifying investor interest in fusion energy as demand for electricity from artificial intelligence accelerates. The latest injection brings total capital raised by the Massachusetts-based company since 2018 to nearly $3bn.

The round drew backing from Nvidia’s venture arm NVentures, Morgan Stanley’s Counterpoint Global and a consortium of 12 Japanese companies led by Mitsui & Co, reflecting growing strategic interest from both technology and industrial investors. The financing will support completion of CFS’s Sparc fusion demonstration machine and advance plans for a first commercial fusion power plant in Virginia, home to one of the world’s largest concentrations of data centres.

CFS has been among the most prominent private fusion developers pushing magnetic-confinement technology, aiming to harness the same process that powers the sun to deliver abundant, carbon-free electricity. In June, the company signed a deal to supply 200 megawatts of electricity to Google — a rare offtake agreement in the sector — signalling rising corporate willingness to underwrite future fusion output.

The fresh capital comes amid a broader surge of funding into nuclear technologies driven by AI-related power needs and decarbonisation targets. Fusion-focused investment has jumped sharply in 2025 from the previous year, even as the technology remains unproven at commercial scale and timelines to grid power extend into the next decade. Proponents argue that advances in high-temperature superconducting magnets and power electronics, together with deep-pocketed partners from cloud computing and industrial supply chains, are compressing development cycles and lowering costs across the field.

Crypto

Galaxy, Jump, Multicoin Seek $1 Billion for Buying Solana

Bloomberg • August 25, 2025

Crypto•Altcoins•Solana

Galaxy Digital, Multicoin Capital and Jump Crypto are in talks with potential backers about raising roughly $1 billion to accumulate Solana, in what would be the largest treasury dedicated to the digital token.

The three firms have enlisted Cantor Fitzgerald LP as lead banker for the deal, said people with knowledge of the matter. They aim to create a so-called digital asset treasury company by taking over an unidentified publicly traded entity, according to the people, who requested anonymity discussing private information.

GeoPolitics

Beyond Silicon: Navigating The Future of Semiconductor Innovation and Geopolitics with Nandan Nayampally, CCO of Baya Systems

Seedcamp • August 29, 2025

GeoPolitics•Economy•Semiconductors

Overview

A conversation with a senior semiconductor executive explores how technological shifts and geopolitics are reshaping everything from chip design to manufacturing footprints and investment theses. The discussion traces a career spanning AMD, ARM, and Amazon into a current role focused on commercialization, using that arc to highlight the industry’s pivot from general-purpose compute to specialized, application-driven silicon and IP licensing. Themes include the rise of domain-specific architectures, the importance of interconnects and Networks-on-Chip (NoC), and the challenge of aligning hardware roadmaps with rapidly moving AI and edge workloads.

From General-Purpose to Specialized IP

The era of one-size-fits-all CPUs is giving way to specialized IP blocks optimized for distinct tasks—signal processing, AI inference, networking—assembled into heterogeneous systems-on-chip.

NoC design is emphasized as a performance and power bottleneck-turned-enabler: intelligently routing data on-die becomes as strategic as cores themselves.

Productization lesson: decouple reusable IP from end-product cycles so teams can iterate IP quickly while maintaining long-term platform compatibility.

Geopolitics, Localization, and Resilience

Policy, export regimes, and national security concerns are redrawing supply chains. Companies increasingly pursue “local-for-local” manufacturing to manage risk, comply with controls, and reduce exposure to single-region dependencies.

Resilience now includes multi-node strategies (e.g., having designs portable across foundries/processes), second-source planning, and buffering critical components.

The consequence is higher non-recurring engineering (NRE) and operational complexity, but also greater strategic optionality when disruptions occur.

AI, Edge, and System-Level Co-Design

AI’s cadence outpaces traditional silicon timelines, pushing for agile design methods, modular IP reuse, and software-hardware co-design.

Edge compute demands low-latency, power-efficient accelerators, driving innovation in memory hierarchy, dataflow, and compression on-device.

Integration guidance: pair narrowly scoped accelerators with flexible compute and robust interconnects to avoid overfitting to today’s models.

Investment Landscape and Opportunities

Opportunity zones include interconnect IP, verification and EDA acceleration, chip design automation, and silicon-optimized middleware for AI/edge.

Diligence heuristics: assess an IP’s portability across nodes, its software toolchain maturity, and whether the business model supports recurring licensing plus services rather than pure NRE.

Market timing: favor plays that reduce time-to-silicon (design tools, reference platforms) or unlock value across multiple verticals (networking, automotive, datacenter).

Operating and Leadership Lessons

Build teams that can question first principles and instrument decisions with data; cultivate “agility in chip design” through modularization and continuous integration.

Embrace “uncomfortable truths”: performance claims must stand up under realistic workloads; power, thermal, and memory bandwidth are the limiting reagents more often than raw FLOPs.

Lead highly intelligent teams by clarifying mission and constraints, then giving ownership over measurable outcomes rather than prescriptive tasks.

Key Takeaways

Specialization and NoC-centric design are defining next-gen SoCs.

Geopolitics is a design parameter: resilience and localization matter.

AI’s pace demands modular IP, agile methods, and co-design.

Investors should prioritize portability, toolchains, and recurring revenue models.

Macron Vows Retaliation If Europe’s Digital Sovereignty Attacked

Bloomberg • August 29, 2025

GeoPolitics•Europe•DigitalSovereignty•Cybersecurity•EUPolicy

Overview

French President Emmanuel Macron signaled a firmer European posture on technology and security by warning that France—and by extension the European Union—will deliver a “strong response” if any country takes actions that undermine Europe’s digital sovereignty. In framing digital control as a strategic interest on par with economic and national security, the statement elevates technology policy to a geopolitical red line. Macron’s vow implies readiness to use state power—diplomatic, regulatory, or economic—against external pressure that compromises European autonomy over data, platforms, networks, and critical digital infrastructure.

What “digital sovereignty” encompasses

Digital sovereignty broadly refers to Europe’s ability to set and enforce its own rules over digital infrastructure and the data and services that run on it. This includes control over:

Data governance and cross‑border data flows

Cloud and semiconductor supply chains

Cybersecurity and critical network resilience

Platform rules for online content, competition, and consumer protection

Strategic autonomy in AI, 5G/6G, and other foundational technologies

By invoking the term, Macron connects disparate policy areas—privacy, competition, cyber defense, and industrial strategy—under a single strategic umbrella.

Potential triggers for a “strong response”

While no specific case was cited, examples of actions that could be perceived as undermining European digital sovereignty include:

Extraterritorial legal demands or sanctions that compel EU data or firms to comply with non‑EU controls

Cyber intrusions targeting European infrastructure or democratic processes

Coercive supply‑chain practices or export restrictions that choke off critical technologies

Attempts by dominant platforms to evade EU rules or monopolize key digital markets

Disinformation and influence operations aimed at destabilizing EU policymaking

These scenarios frame the boundary conditions under which Europe might escalate beyond routine regulatory enforcement.

Possible instruments of retaliation

A “strong response” could draw from multiple toolkits:

Regulatory enforcement and fines against non‑compliant platforms and service providers

Trade remedies, procurement preferences, or investment screening measures

Coordinated cyber defense actions and sanctions in response to malicious activity

Diplomatic pressure and coalition‑building with like‑minded partners to set standards

Acceleration of European industrial initiatives to reduce strategic dependencies

None of these instruments were specified, but the signal suggests policy readiness to act across domains.

Implications

For global tech firms: Expect stricter compliance expectations and less tolerance for perceived rule‑dodging. Market access may hinge on demonstrable alignment with European requirements on data, security, and competition.

For transatlantic relations: The statement underscores Europe’s desire for balanced digital relations. Cooperation remains likely, but frictions may intensify where legal regimes conflict.

For China and other major powers: Europe is positioning itself as a rule‑making power capable of defensive measures when digital dependencies are weaponized.

For EU internal policy: The rhetoric strengthens momentum for deeper coordination among member states on cyber readiness, cloud standards, and critical tech capacity.

Key takeaways

Macron vowed a “strong response” if any country acts to undermine Europe’s digital sovereignty, elevating tech policy to a strategic priority.

Digital sovereignty spans data control, infrastructure security, platform governance, and supply‑chain resilience.

Retaliation could involve regulatory, economic, cyber, and diplomatic tools deployed in concert.

The message aims to deter coercion, reinforce EU rule‑setting, and reduce external dependencies in critical technologies.

Companies operating in Europe should anticipate tighter enforcement and plan for compliance aligned with European standards.

Interview of the Week

Why Even Sam Altman Wants to be Gary Marcus: From Son of Sam to Son of Gary in a single ChatGPT Release

Keenon • August 27, 2025

Essay•AI•OpenAI•Interview of the Week

It hasn’t always been easy being Gary Marcus these last few years. OpenAI’s most persistently outspoken AI sceptic has been in a minority, sometimes of one, in his critique of both Sam Altman’s claims about the imminence of AGI and the general “intelligence” and economic viability of ChatGPT. Since the supposedly “botched” release of GPT-5, however, even Sam Altman seems to want to be Gary Marcus. For Gary, who has endured what he diplomatically calls "an unbelievable amount of shit" for his contrarian views, the irony is particularly delicious. He now finds himself vindicated as the very company he's criticized adopts his language of caution and scaled-back expectations. "It's not that I'm becoming like him," Gary says about Sam with Marcusian humility, "but that he's becoming like me." Rather than Son of Sam, OpenAI is now the Son of Gary story.

1. The GPT-5 Reality Check Changed Everything

GPT-5's underwhelming performance—described as barely different from GPT-4.1—shattered the industry's faith in scaling. After 34 months of development and unprecedented hype, it delivered incremental improvements rather than the "quantum leap" promised, fundamentally shifting Silicon Valley's narrative from exponential progress to diminishing returns.

2. OpenAI is Burning Cash Despite Record Revenue

Despite making a record $1 billion last month and being valued at $300 billion, OpenAI is losing approximately $1 billion monthly and has never turned a profit. The company faces a severe cash flow crisis with only 6-18 months of runway, forcing Altman into constant fundraising cycles at ever-higher valuations.

3. The AI Bubble Could Trigger Market Contagion

OpenAI's inflated valuation props up NVIDIA's $5 trillion market cap, which depends on insatiable AI chip demand. If even one major AI company scales back purchases or fails, the ripple effects could devastate pension funds and trigger broader market corrections, making this potentially more dangerous than the dot-com bubble.

4. Surveillance Monetization is OpenAI's Next Move

With AGI proving elusive, OpenAI will likely pivot to monetizing the vast personal data users share with ChatGPT, turning users into products like Facebook did. Marcus predicts this shift toward surveillance capitalism, especially with their rumored hardware device partnership with Jony Ive.

5. The Industry's Intellectual Monoculture is Breaking

The field's unprecedented focus on large language models to the exclusion of other approaches created "the least intellectual diversification in AI's 80-year history." As scaling hits limits, the industry must diversify into neuro-symbolic AI and other paradigms that Marcus has long championed.

Startup of the Week

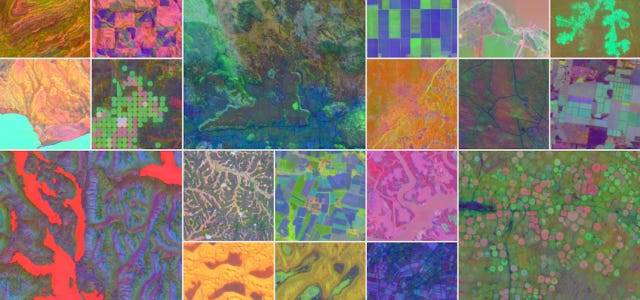

Google DeepMind Launches AlphaEarth Foundations to Bridge the Gap Between Big Data and Planetary…

Medium • ODSC - Open Data Science • August 28, 2025

AI•Data•ClimateModeling•RemoteSensing•DisasterPreparedness•Startup of the Week

Earth is complex and impacted by shifting weather patterns, land changes, ocean conditions, and human activities. To protect the planet in the future, Google DeepMind uses artificial intelligence (AI) and big data to predict climate change and natural disasters.

As a data scientist, you’re already trying to parse zettabytes of geospatial and climate data, with more pouring in every day. Even with the help of AI, it’s nearly impossible to sort through all the digital noise and find meaningful results.

Enter AlphaEarth Foundations

What is Google DeepMind’s AlphaEarth Foundations? It is an AI-driven virtual satellite. The program creates a constantly updating map of the planet. It takes data from multiple sources, processes it, and spits out an analysis while monitoring for changes. Although other AI models are tracking planetary changes, AlphaEarth had 24% fewer errors than others by measuring and monitoring 10 by 10 meter squares.

What Data Sources Does AlphaEarth Use?

To come up with such accurate results, the program pulls from multiple sources, including:

Satellite imagery, including from Landsat, Sentinel, and MODIS

Climate data about temperature, rainfall, and wind from weather stations, ocean buoys with sensors, and models simulating Earth’s climate

Geospatial details from maps of elevations in various locations, GIS data about infrastructure, and oceanic data about changing tides and currents

Societal data about humans and how they use the land from sources like the U.S. Census, governments around the world, and agricultural details on crop yields and global land management

Sensor networks on the ground and in the water

Crowdsourced data collected by scientists and trained volunteers

The sheer volume of information is more than a team of humans could sort through in their lifetimes. The system weaves together satellite images, climate simulations, and sensor data, then assigns each 10 by 10 meter square a compact numerical summary known as an embedding. These embedding fields allow the machine to run simulations and recognize anomalies.
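To make the idea of spotting anomalies from per-square summaries concrete, here is a minimal sketch in Python. It is not AlphaEarth's actual method or API. It assumes each grid square is summarized by a short numeric vector, uses toy 4-dimensional vectors instead of real data, and relies on hypothetical names (`cosine_similarity`, `flag_changed_cells`) and a hypothetical similarity threshold of 0.9.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as having no similarity
    return dot / (norm_a * norm_b)


def flag_changed_cells(before, after, threshold=0.9):
    """Return grid cells whose summary vector shifted between two snapshots.

    `before` and `after` map a (row, col) cell id to its vector.
    The threshold is an illustrative assumption, not a published value.
    """
    changed = []
    for cell, old_vec in before.items():
        new_vec = after.get(cell)
        if new_vec is None:
            continue  # cell missing from the later snapshot, skip it
        if cosine_similarity(old_vec, new_vec) < threshold:
            changed.append(cell)
    return sorted(changed)


# Toy data: three grid squares observed at two points in time.
before = {
    (0, 0): [1.0, 0.0, 0.0, 0.0],
    (0, 1): [0.0, 1.0, 0.0, 0.0],
    (1, 0): [0.5, 0.5, 0.0, 0.0],
}
after = {
    (0, 0): [1.0, 0.0, 0.0, 0.0],  # unchanged
    (0, 1): [0.0, 0.0, 1.0, 0.0],  # very different, e.g. cleared land
    (1, 0): [0.5, 0.4, 0.1, 0.0],  # small drift, below the alert level
}

print(flag_changed_cells(before, after))  # prints [(0, 1)]
```

Comparing vectors rather than raw pixels is the design choice being illustrated: a small drift in one square is tolerated, while a large shift in direction is flagged for a closer look.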

How the AlphaEarth Foundations Program Helps Scientists

If you occasionally battle raw satellite data, you’re probably aware of its inaccuracies. Cloud interference and misalignment skew the results and take up precious storage space on your network.

AlphaEarth Foundations eliminates the noise and homes in on the exact imagery needed. A compact 64-dimensional representation that captures terrain, water, vegetation, and urban development saves space and cuts the unnecessary visuals. You can focus on developing and testing hypotheses that lead to real change rather than wasting time cleaning up messy inputs.

Siloed datasets of the past may miss relationships between human behavior or development and changes in local ecosystems. With AlphaEarth, you can combine satellite imagery and layer it with elevation gradients and temperatures to accurately see how deforestation impacts a geographic area. The system can monitor crop health, food security, and water resources.

Post of the Week

A roundup of SpaceX's Starship flight number 10, where everything finally worked. Even with the heat shield intentionally compromised, the Starship landed on target, something we've not seen this year. The V2 has been stricken with bad luck that resulted in 3 consecutive failures to complete the mission, but today it delivered, and now we have questions.

A reminder for new readers: each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I can't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read More’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.