This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial

Who Gets to Tell the AI Story? The Answer Matters

Three narrative engines are running full tilt this week. Each wants to define what AI means before we can figure it out for ourselves.

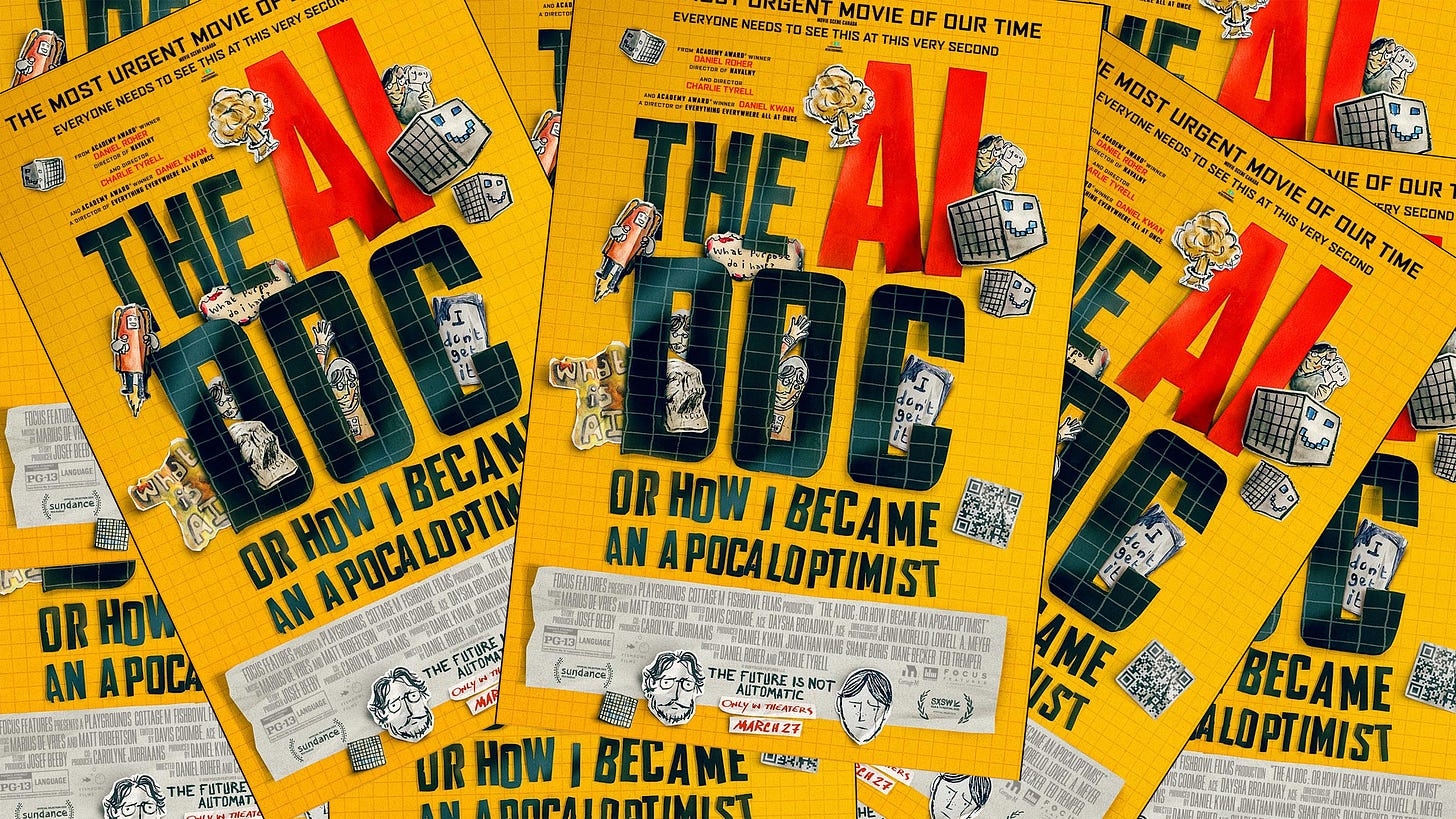

The first is the doom machine. A new documentary, “The AI Doc: Or How I Became an Apocaloptimist,” opened in theaters on March 27. It features a parade of interviewees who, as David William Silva observed,

“describe AI-driven extinction with the calm confidence of people who have said these things so many times they have stopped noticing they have no evidence for them.”

The film positions Tristan Harris — whose Center for Humane Technology received $500,000 from the Future of Life Institute for “messaging cohesion within the AI X-risk community” — as a neutral voice in the middle. Harris told the AP his hope is that the film becomes “An Inconvenient Truth” for AI. That comparison should alarm you. His previous efforts — “The Social Dilemma” and “The AI Dilemma” — were exercises in manipulative hyperbole dressed as public education.

The documentary lets three false claims pass unchallenged. First, that Anthropic’s Claude decided, unprompted, to blackmail someone, when in fact researchers iterated through hundreds of engineered prompts to produce that outcome. Second, that AI is “less regulated than sandwich shops,” when state attorneys general from both parties and FTC Chair Lina Khan have explicitly said existing laws already cover AI. Third, that data centers threaten drinking water, a claim based on a book that had to issue corrections after a key figure was off by a factor of 4,500.

Silva names the incentive: “The believers are a market. As long as the ratio stays favorable, the machine is profitable.” The doom industry isn’t confused. It’s commercial: the more people believe AI will destroy the world, the more money flows to those selling that fear.

The second narrative machine is corporate. This week OpenAI acquired TBPN, a daily tech talk show with 70,000 viewers per episode. The show will report to Chris Lehane — OpenAI’s chief political operative, the man who built the crypto super PAC Fairshake — under “strategy,” not communications.

As Om Malik writes:

“You don’t put an editorially independent media property under your political operative.”

The press release mentions editorial independence four times and coins a new term, “Editorial Independence Covenant.” Fidji Simo’s justification:

“The standard communications playbook just doesn’t apply to us.”

Om’s historical parallel is blunt: Lenin argued in 1902 that his revolution needed its own newspaper. He named it Pravda (“truth”). As it happens, Lenin was right. OpenAI, maybe less so.

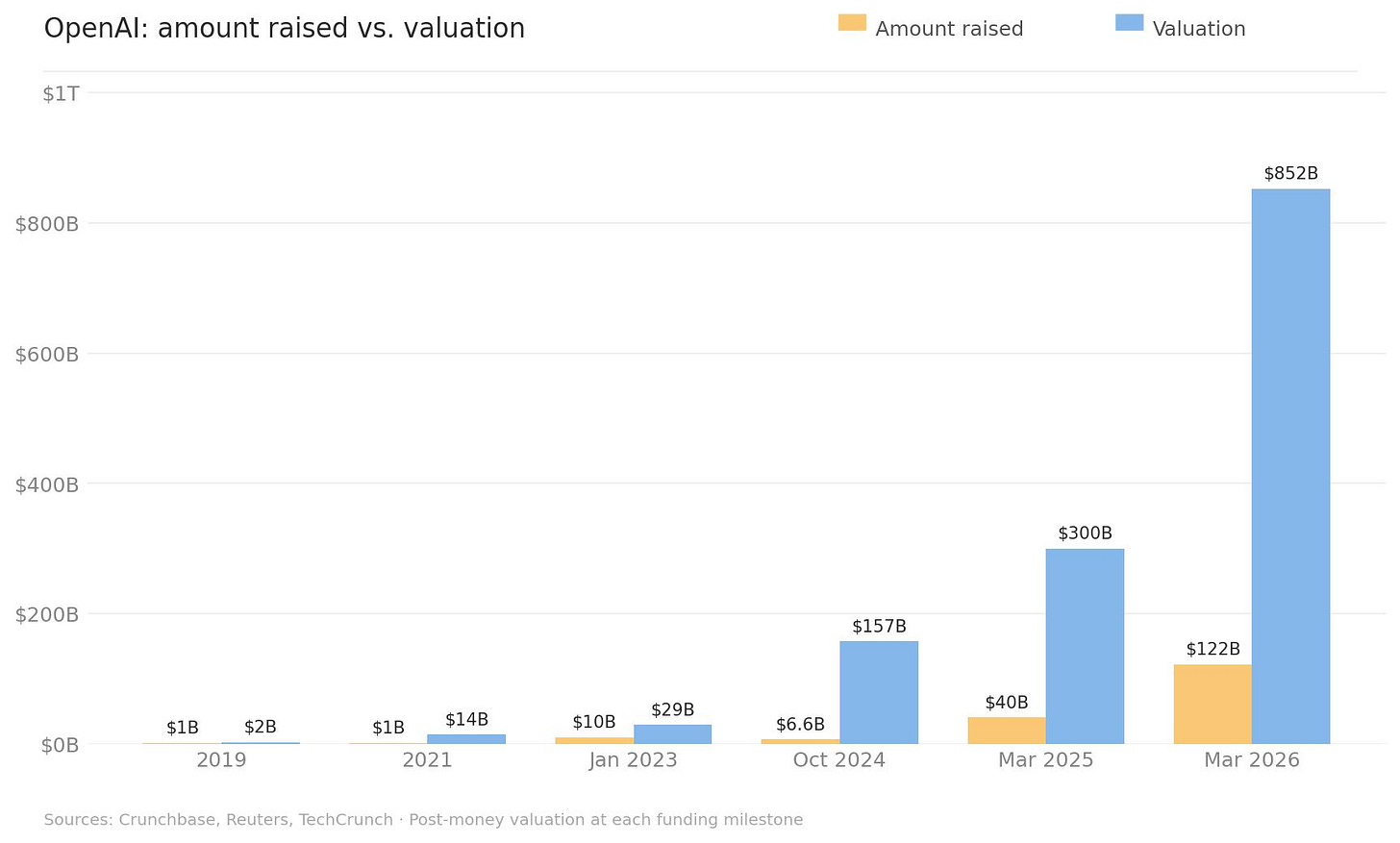

This sits alongside the broader OpenAI IPO construction. A $122 billion round at an $852 billion valuation. Amazon’s $50 billion anchored to an AWS contract. ARK ETFs distributing OpenAI shares to retail investors before a filing exists. Banks extending a $4.7 billion credit line that doubles as an underwriter audition.

As Packy McCormick argued this week, the laziest move in all of this is the analogy:

“you could have said the same thing about Amazon.”

The correct response is: show me the negative working capital engine. Show me the unit economics that improve with scale. Show me the 1997 letter. But OpenAI most likely can show those things. It reports $2 billion a month in revenue, and that figure is growing.

The third narrative machine is financial. Fundrise’s VCX fund debuted at ~$700 million and surged to $6.5 billion within three days — trading at 30 times its net asset value. That’s not price discovery. That’s retail investors paying any price for a story about access to private markets.

Stanford’s endowment CIO Rob Wallace says there are “likely only 10–12 early-stage VCs in the US who generate the majority of profits.” PitchBook’s latest report documents the fraud spreading through venture secondaries: SPVs investing in SPVs, layered fees, and no standardized way to verify that the person selling you “OpenAI shares” actually holds them. The story of access is being sold harder than the access itself. VCX has now pulled back from a peak of $575 a share to around $130. The true value of its assets is $18-19 a share. Media does drive irrational behavior.
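For readers who want to check the VCX arithmetic, here is a minimal sketch of the premium-to-NAV calculation. It uses only the prices quoted above. Treating the $18-19 asset value as a per-share figure is an assumption, and the function name `premium_to_nav` is made up for illustration.

```python
def premium_to_nav(price: float, nav_per_share: float) -> float:
    """Return the share price expressed as a multiple of NAV per share."""
    if nav_per_share <= 0:
        raise ValueError("NAV per share must be positive")
    return price / nav_per_share


# Figures quoted in the text; reading the asset value as per-share is an assumption.
NAV_LOW, NAV_HIGH = 18.0, 19.0
PEAK_PRICE, RECENT_PRICE = 575.0, 130.0

for label, price in [("peak", PEAK_PRICE), ("recent", RECENT_PRICE)]:
    # Dividing by the higher NAV gives the lower bound of the multiple.
    low = premium_to_nav(price, NAV_HIGH)
    high = premium_to_nav(price, NAV_LOW)
    print(f"{label}: {low:.1f}x to {high:.1f}x NAV")
```

On these inputs the peak works out to roughly 30 to 32 times NAV, and the recent price still sits at about 7 times.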

So: doomers selling fear, corporations selling narrative, financiers selling access. Three machines, all running hard. What do they have in common? They all assume you need someone to interpret AI for you — to tell you whether to be afraid, excited, or invested.

Now look at what the people actually studying and building the technology found this week.

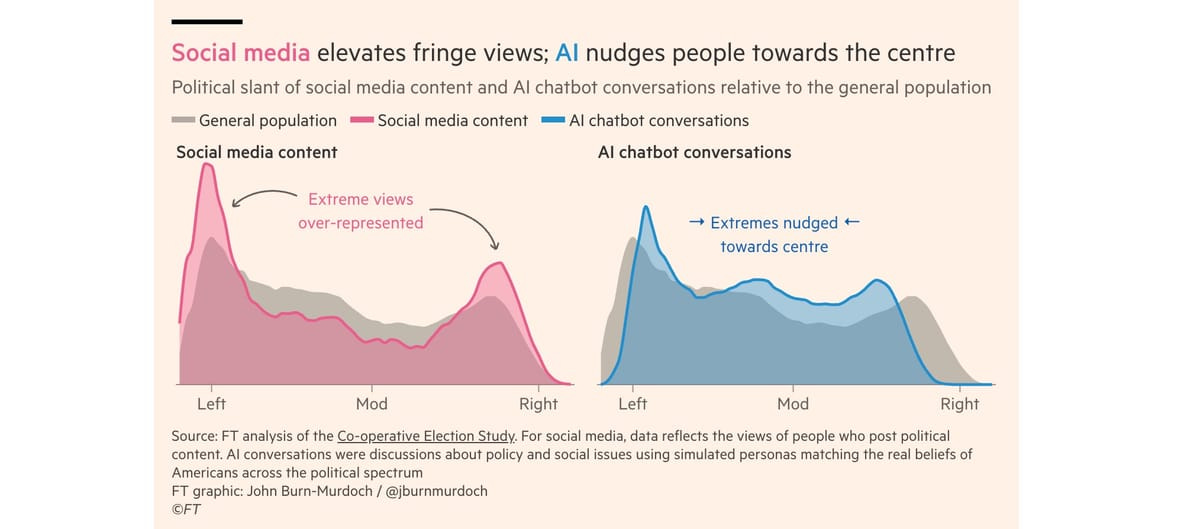

John Burn-Murdoch at the Financial Times tested four major LLMs against 61 policy questions using simulated users across the ideological spectrum. Every model nudged responses toward the center. Conspiratorial beliefs overrepresented on social media were nearly absent from AI outputs. Paul Kedrosky flagged the structural explanation:

“social media profits from engagement and clicks, so inflammatory content gets amplified; AI models are trained on vast corpora skewing toward published, edited, expert-legible text.”

Derek Thompson reviewed randomized trials and meta-analyses on the Smartphone Theory of Everything — the idea that phones explain rising anxiety, polarization, and declining youth mental health.

His finding: phones are global, but their worst effects are concentrated in a handful of rich, English-speaking countries. Youth happiness plummeted in the US and UK while rising in Eastern Europe and East Asia. Phones aren’t the cause; they’re an accelerant interacting with distinctly American conditions. The technology doesn’t override culture. It amplifies whatever’s already there.

Thomas Ptacek — one of the most respected voices in security research — wrote that within months, finding zero-day vulnerabilities will be as simple as pointing a coding agent at a codebase.

This is a real, consequential development. Not speculative. Not theatrical. Anthropic’s own red team demonstrated it: a bash script looping Claude Code across a repository produced 500 validated high-severity vulnerabilities. The targets that won’t cope aren’t the AI companies. They’re routers, printers, hospital systems, regional banks — anything that requires someone to physically push a button to patch.

Richard Schenk, writing about Europe’s digital regulation, identified the philosophical assumption underneath the narrative machines:

“The more individuals are perceived as determined by forces beyond their control, the stronger the paternalistic temptation to ‘correct’ outcomes through centralised intervention.”

Post-war liberal democracy assumed citizens were rational agents capable of judgment. The new regulatory impulse assumes they’re vulnerable, manipulable, and need protection from their own choices. Interestingly, these paternalistic, and ultimately anti-democratic, impulses come mainly from the “left.”

That assumption — that humans can’t handle what AI puts in front of them — is shared by the doomers, the regulators, and the corporate narrative-builders alike. It’s the one thing Harris, the EU AI Office, and OpenAI’s strategy division agree on: ordinary people need mediators.

The evidence from this week says otherwise. Chatbots moderate rather than radicalize. The Smartphone Theory collapses on contact with cross-cultural data.

Actual AI risks — vulnerability research, supply chain attacks, scaling limits — are concrete, measurable, and addressable by competent engineers and policymakers, not by documentary filmmakers or political operatives.

The biggest risk this week isn’t AI. It’s letting the people with the loudest megaphones — and the clearest financial or political incentives — define what AI means before the rest of us figure it out for ourselves.

Humans are clever. They always have been. The question isn’t whether AI needs to be explained to them. It’s whether anyone will let them think and act for themselves.

Contents

Editorial

Essays

Venture

AI

Silicon Valley Learns to Love the Government — at Least When It’s Friendly

Claude Code Leak Exposes Source, Upcoming Features, and an Always-On Agent

Baidu’s Robotaxis Freeze in Wuhan, Trapping Passengers and Snarling Traffic

Anthropic Responsible Scaling Policy v3: Dive Into the Details

Sycophantic AI Decreases Prosocial Intentions, Stanford Study Finds

AI Doc Review

Regulation

Infrastructure

Interview of the Week

Startup of the Week

Post of the Week

Essays

I Saw Something New in San Francisco

Author: Ezra Klein / New York Times Published: Mar 29, 2026

Klein spent a week in San Francisco talking to people on the AI frontier — and this time what struck him wasn’t how the technology was changing, but how the people were being changed by it. His frame is McLuhan’s Narcissus myth: not self-love, but fascination with an extension of yourself in a material that is not yourself. AI is that extension.

The people building the AI age are “notably insecure.” They believe the winners and losers will be determined by speed of adoption — that the advantages of working atop an army of AI assistants compound over time, and to start now is to launch far ahead of competition later. So they’re racing to integrate AI into everything. But that doesn’t just mean using AI. It means making themselves legible to the AI — opening their files, email, calendar, messages, preferences, patterns. Klein singles out OpenClaw as the phenomenon driving this: it runs locally, builds persistent memory, and the more of your life you open to it, the more valuable it becomes. Millions are using it despite “glaring” cybersecurity risks. The piece is paywalled but the visible argument is potent: we are not just adopting tools, we are reshaping ourselves to be useful to the tools. McLuhan’s warning, updated for the agent age.

Read more: New York Times

All the Worst People Seem to Want to Be ‘High Agency’

Author: Sophie Haigney / New York Times Published: April 1, 2026

I first noticed the phrase when it cropped up in conversations among my friends, as a dichotomy: Were we “high agency” or “low agency”? Intuitively, I had a sense of what that meant, and which side of that divide I should want to be on. Was inertia or timidity keeping us in a city, a job or a relationship? Or were we the captains of the ships of our own lives, thinking about career pivots, trying out vibe-coding, remembering that we could move to the desert and start a whole new life?

When asked what skills to develop in the age of A.I., the first one Sam Altman listed was, “Become high agency.” Google search interest in “high agency” has been increasing for five years and spiked enormously in the past year. In a recent article for Harper’s, Sam Kriss noted that in tech job interviews, it’s now common for prospective employees to be asked whether they were “mimetic” or “agentic.”

The basic idea of “agency” has long been theorized and debated in philosophy, in relation to free will and the human capacity for action. It caught on in Silicon Valley, which has long embraced phrases like, “Move fast, break things” and more recently, “You can just do things.” And then “high agency” wormed its way out of tech and into the broader lexicon, cycling through viral X threads, LinkedIn posts, and podcasts with self-help leanings. I even noticed my students in a writing class I taught at Yale starting to use it.

“High agency” is now being branded as a personality trait. It implies decisiveness, self-assurance and a willingness to take risks, a predilection for thinking “outside the box” and questioning systems. Some people have more agency innately, but you can cultivate it, at least according to the many online guides to cultivating yours. A low-agency person is a cog in the machine, working a regular job, spending too much time answering emails. They’re what in video games might be called a “nonplayer character.” A high-agency person, on the other hand, might start a company young, spend their mornings writing a novel, or get into a prestigious college and decide not to go — time and money that could be spent more efficiently elsewhere, according to the new logic.

It’s good to recognize that you have the power to shape your day-to-day life. You are not entirely at the whim of the forces around you: a bad boss, a stuck-in-the-mud relationship, even the macro forces of the volatile world. An example of high-agency behavior that one of my Yale students gave me: If your button falls off your shirt, do you sew it back on yourself? This vision of agency embodied a resourcefulness that seemed old-fashioned. Indeed, agency is a stark departure from the buzzwords that circulated when I was in college a decade ago. Back then, we talked about how things were “structural,” perhaps to a fault. Agency in its best form is something like Emerson’s notion of self-reliance: “Trust thyself: Every heart vibrates to that iron string.”

“High agency” is individualistic, which means systems are suspect. Britain’s National Health Service, railways, and the American Department of Education? They are all being run in extremely low-agency ways, according to George Mack, an entrepreneur who helped popularize the idea. Education in general is viewed as undermining agency. You’re learning how to stand in line, not studying how to cut it.

Read more: New York Times

OpenAI Investor Says AI Requires an Income Tax Overhaul

Author: George Hammond & Alex Rogers / Financial Times Published: Mar 28, 2026

Vinod Khosla proposed eliminating federal income tax on Americans earning under $100,000, funded by taxing capital gains at the same rate as income. His math: 125 million less affluent Americans pay no income tax, and government revenues stay whole. The argument is industrial policy dressed as tax reform: AI has accelerated the shift of wealth from labor to capital, so tax the capital. “When I talk to people, the biggest thing is fear of AI taking their job by far.” He says it will be “the single biggest issue” in the 2028 presidential cycle. Khosla praised the Trump administration’s AI policy (“generally done a pretty good job”) while calling Trump himself a man with a “complete lack of values of any sort.” He criticized Democrats for focusing on “job preservation, not providing security to those who are displaced”: two fundamentally different things. He’s looking for a “surprise” 2028 candidate and hasn’t decided who to back. The subtext: the people building the machines that displace workers are now proposing the policy frameworks to manage the displacement. Whether that’s leadership or self-interest depends on whether the proposals are serious. This one has specific math behind it.

Read more: Financial Times (gift link)

Chatbots as Anti-Social Media

Author: Paul Kedrosky Published: Mar 28, 2026

Social media rewards outrage because outrage drives engagement — fifteen years of evidence confirm it. Paul Kedrosky highlights John Burn-Murdoch’s new Financial Times analysis suggesting AI chatbots do the opposite. Burn-Murdoch tested the four major LLMs against 61 policy and values questions using simulated users across the ideological spectrum. Every model nudged responses toward the center. Grok pulled center-right; GPT, Gemini, and DeepSeek pulled center-left. All four pulled away from fringe positions regardless of where users started. Conspiratorial beliefs overrepresented on social media were nearly absent from AI outputs. The structural explanation: social media profits from engagement and clicks, so inflammatory content gets amplified; AI models are trained on vast corpora skewing toward published, edited, expert-legible text, so they drift toward mainstream consensus whether the company intends it or not. Kedrosky flags the obvious caveats — one study, models change, and nudging toward expert consensus is only good when that consensus is worth having.

Read more: Paul Kedrosky | FT Analysis (John Burn-Murdoch)

America’s Civil Service: A History

Author: Kevin Hawickhorst (Foundation for American Innovation) / ChinaTalk Published: Mar 31, 2026

When did the US government actually work? Not when the Pendleton Act passed — that’s the myth. It worked when agencies recruited subject-matter experts organized by mission: the Bureau of Entomology hiring entomologists, the Forest Service hiring foresters, the Public Health Service restructured as a paramilitary corps of surgeons recruited from medical schools. The Quartermaster Bureau under General Meigs provisioned the entire far-flung United States for decades and was “world-class.” These agencies commanded respect from Congress because they were staffed by people who knew what they were talking about.

The decline came with mid-century functional reorganization, which replaced mission-driven agencies with org charts and subject knowledge with process management. The Pendleton Act gets credit for professionalizing the civil service, but it was just a law. “The history of civil service law is not the history of the civil service.” What mattered was which agencies built recruitment pipelines to technical societies and universities, and whether Congress trusted them enough to grant them authority. Hawickhorst’s framework, in which agencies organized around concrete nouns (entomology, soils, forests) outperform those organized around abstract functions (management, coordination, oversight), is directly relevant to today’s DOGE debate and the broader question of state capacity. Can government be rebuilt as a competent partner to industry, or has the institutional knowledge been too thoroughly hollowed out? Read alongside Gray’s “National Capitalism” (government as smart capital allocator) and Schenk’s warning about regulatory overreach: competent government requires knowing what to do, not just that something must be done.

Read more: ChinaTalk

The Hidden Anthropology Behind Europe’s Digital Regulation

Author: Richard Schenk / MCC Brussels (Democracy Interference Observatory) Published: Mar 28, 2026

The counterpoint to Dan Gray’s case for government as venture capitalist. Schenk argues that Europe’s digital regulation — the DSA, the AI Act — reflects something deeper than policy: a fundamental shift in how democracies view their citizens. Post-war liberal democracy assumed citizens are rational agents capable of judgment. Rights existed as shields against state overreach. The new regulatory wave assumes citizens are vulnerable, manipulable, and need protection from their own choices. Behavioural economics gave this shift academic cover, but statistical tendencies do not erase individual agency — and the methodological limitations of the research are rarely acknowledged in policy translation.

The sharpest point: if every instance of digital influence counts as democratic distortion, then electoral legitimacy becomes conditional on the absence of persuasion — a standard never applied to traditional media, political campaigns, or public debate. The distinction between “positive” and “negative” manipulation is inherently political, and interpretation sits with executive agencies like the EU AI Office, not parliaments. Schenk traces the pattern historically: Hobbes’ bleak view of human conflict justified absolutism; Marx’s materialism justified totalitarianism. “The more individuals are perceived as determined by forces beyond their control, the stronger the paternalistic temptation to ‘correct’ outcomes through centralised intervention.” He’s not equating the EU with totalitarianism — he’s pointing to a structural affinity that deserves scrutiny. Read alongside Gray’s “National Capitalism” for the full tension: when should government step in, and when does stepping in quietly transform the thing it claims to protect?

Read more: Democracy Interference Observatory

National Capitalism

Author: Dan Gray / The Odin Times Published: Mar 29, 2026

Venture capital is structurally incapable of funding the technologies civilization most needs. Nuclear reactors cost billions and take a decade. Cancer drugs demand $1-2B over 10-15 years with a 90% failure rate. Fusion development stretches across decades. These are the hardest problems — and venture’s ten-year fund life plus carried interest incentives make them unfundable. Even the mega-funds don’t help: a16z raised $15B and manages $90B, but its mission is winning “the key architectures of the future” — meaning AI and crypto, not nuclear or biotech. The strategy at scale isn’t to fund harder problems with patient capital; it’s to hold hot companies longer before exit. US venture fundraising fell to $66.1B in 2025, down from $223B at the 2022 peak. More capital than ever, concentrated in fewer hands, chasing the same categories.

Gray synthesizes academic work from Josh Lerner, Paul Gompers, and Andrew Lo at MIT into a three-pillar proposal: government as LP for emerging managers (counterweight to incumbent network effects), government as venture philanthropist for sovereign technology (nuclear, fusion, pharma — things private capital structurally can’t fund), and government as megafund guarantor using Lo’s securitization thesis — pool 50-300 projects, issue tranched debt instruments, let pension funds take senior risk while equity holders capture upside. The government guarantee reduces cost of capital for strategically critical companies without picking winners. 80% of energy startups fail not because the technology doesn’t work, but because they can’t sustain funding through the scaling phase. The policy framework exists. The political will doesn’t — yet.
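The intuition behind pooling 50-300 projects can be illustrated with a few lines of arithmetic. The sketch below is not Lo’s model. It assumes every project succeeds independently with the same probability, borrows the 10% success rate implied by the 90% failure figure quoted for cancer drugs, and uses a made-up helper name, `prob_at_least`. Real projects are correlated and pay off unevenly, so treat it as a back-of-envelope illustration only.

```python
from math import comb


def prob_at_least(n_projects: int, p_success: float, k: int) -> float:
    """P(at least k successes) among n independent projects (binomial model)."""
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("p_success must be between 0 and 1")
    if n_projects < 0 or k < 0:
        raise ValueError("n_projects and k must be non-negative")
    return sum(
        comb(n_projects, i) * p_success**i * (1.0 - p_success) ** (n_projects - i)
        for i in range(k, n_projects + 1)
    )


# Assumed inputs: 10% success rate per project, independence between projects.
P_SUCCESS = 0.10
for n in (1, 50, 300):
    chance = prob_at_least(n, P_SUCCESS, 1)
    print(f"{n:>3} projects: P(at least one success) = {chance:.4f}")
```

Under those assumptions a single project is a long shot, while a pool of 50 almost certainly contains at least one success. That predictability at the pool level is what would let a senior tranche be priced as debt.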

Read more: The Odin Times

Is the Smartphone Theory of Everything Wrong?

Author: Derek Thompson Published: Mar 29, 2026

The “Smartphone Theory of Everything” — the idea that phones explain rising anxiety, polarization, populism, and attention disorders — has become a dominant narrative. Derek Thompson pulls it apart with a thorough review of randomized trials, meta-analyses, and a consensus survey of hundreds of academics. His core finding: phones are global, but their worst effects are concentrated in rich, English-speaking countries. Youth happiness has plummeted in the US, UK, Canada, and Australia while rising in Eastern Europe and East Asia. Suicide attempts among young women surged across the Anglosphere but fell in most of continental Europe. ADHD diagnoses in the US are climbing at roughly twice the European rate. Affective polarization took off in America in the 1990s — twenty years before smartphones.

Thompson’s synthesis: phones aren’t the cause so much as an accelerant interacting with distinctly American conditions — diagnostic inflation, a negativity-saturated news ecosystem, and hyper-individualistic culture. The strongest evidence supports phones disrupting sleep and displacing in-person socializing; the weakest links them to conspiracy beliefs or declining marriage rates. His most useful frame: phones are an “information-delivery system” whose harm depends on what’s in the drip. Cultures with more anxious, polarizing content see higher rates of anxiety and polarization. The consistent finding across multiple randomized trials: when people disconnect, they sleep more, socialize more, go outside more, and report being a little happier.

Read more: Derek Thompson

The Fix Is In

Author: Om Malik / On my Om Published: Mar 31, 2026

Om’s latest installment in his running OpenAI skepticism series. The $122 billion round closed — up from $110 billion in February — at an $852 billion post-money valuation. Anchors: Amazon ($50B tied to an 8-year AWS contract), Nvidia (compute, not cash), SoftBank. The late additions — a16z, Sequoia, BlackRock, Blackstone, Fidelity, Temasek — are what Om calls “the FOMO gang,” brand-name investors showing up to secure their spot for the IPO pop. The $4.7 billion credit line from JPMorgan, Goldman, Citi, Morgan Stanley, and Wells Fargo isn’t a lending facility — it’s an underwriter audition. Three of those banks then channeled $3 billion in individual investor money into the round through their wealth management clients. Bloomberg and Reuters are now calling the Amazon/Nvidia structure “circular financing.” The newest move: OpenAI shares will be included in ARK Invest ETFs before the company goes public — creating retail demand and distributing the narrative to millions of people before a filing even happens. Om notes ARK’s flagship fund is still down ~43% from its 2021 peak five years later. CFO Sarah Friar, not Sam Altman, is doing all the press. The enterprise pivot — killing Sora, building the superapp — is framed as a race to go public before the music stops.

Read more: On my Om

OpenAI Acquires TBPN, a Daily Tech Talk Show

Source: The Verge (Hayden Field) / Bloomberg / WSJ / Variety Published: Apr 2, 2026

OpenAI has bought TBPN (Technology Business Programming Network), a daily live tech talk show that streams on X and YouTube every weekday at 2 PM PT, averaging 70,000 viewers per episode. The show generated $5 million in ad revenue last year, with projections of $30 million for 2026. Host John Coogan has a long history with Altman: “He funded my first company in 2013.” The show has interviewed executives from Meta, Microsoft, Palantir, and Andreessen Horowitz, and lists Bloomberg, CNBC, and Fox Business as competitors. Fidji Simo, OpenAI’s CEO of AGI deployment, framed the acquisition as creating “a space for a real, constructive conversation about the changes AI creates.” The team will retain “editorial independence” but report to Chris Lehane, VP of global policy, under OpenAI’s Strategy organization. The timing is notable: OpenAI is preparing for an IPO, just closed a $122 billion round at an $852 billion valuation, shut down Sora to focus on enterprise, and signed a DoD contract while Anthropic fights the Pentagon.

Read more: The Verge · Bloomberg · WSJ · OpenAI blog

Masters of Agitprop 2.0

Author: Om Malik / On my Om Published: Apr 2, 2026

Om’s read on the TBPN acquisition cuts past the “editorial independence” framing to the org chart. The show doesn’t report to communications — it reports to Chris Lehane under “strategy.” Lehane is OpenAI’s chief political operative: the man who coined “vast right-wing conspiracy” during the Clinton years and built Fairshake, the crypto super PAC that spent hundreds of millions targeting anti-crypto candidates in 2024. You don’t put an editorially independent media property under your political operative. Om frames this through Lenin’s 1902 argument that his revolution needed its own newspaper (named, unironically, Pravda — “truth”) and Edward Bernays’ invention of PR as corporate design. TBPN is a room where tech people talk without adversarial questions — think CNBC’s morning show during the dot-com era, or ESPN’s SportsCenter for the cable sports revolution. “They don’t speak to power. They amplify power.” The press release mentions editorial independence four times and invents a new phrase, “Editorial Independence Covenant.” Om: “If a company says ‘do no evil,’ you know what they are going to do.”

Read more: On my Om

Bad Analogies

Author: Packy McCormick / Not Boring Published: Apr 2, 2026

Not every company that burns money is the next Amazon. Packy McCormick dismantles the laziest analogy in tech investing — triggered by someone on X defending OpenAI’s $122 billion raise by invoking Bezos. The difference: Amazon had negative working capital from day one, meaning growth generated cash. Bezos spelled out the logic in his 1997 shareholder letter and spent the 2004 letter walking investors through free cash flow per share. Every dollar of “loss” was a calculated infrastructure investment with a clear payback mechanism. WeWork’s investors told themselves the same Amazon story. They lost everything in the 2023 bankruptcy. Uber’s investors told themselves the Amazon story too — and they were right, but only because the underlying unit economics actually resembled Amazon’s flywheel if you squinted hard enough and waited long enough. The point isn’t that losing money is bad. The point is that the Amazon analogy is a thought-terminator that substitutes pattern-matching for analysis. When someone says “you could have said the same thing about Amazon,” the correct response is: “Okay, show me the negative working capital engine. Show me the unit economics that improve with scale. Show me the 1997 letter.” Read alongside Om Malik’s OpenAI pieces and the VCX 30x-premium-to-NAV story — the question isn’t whether OpenAI is big, it’s whether the economics work.

Read more: Not Boring

Venture

Stanford’s Endowment CIO on Venture: Only 10-12 VCs Generate the Majority of Profits

Author: Marcelino Pantoja (summarizing Rob Wallace, Stanford CIO) / LinkedIn Published: Mar 30, 2026

The LP perspective that completes this week’s venture picture. Stanford’s CIO Rob Wallace: there are likely only 10-12 early-stage VCs in the US who generate the majority of profits in any given cycle. The middle of the venture return range “does not justify the illiquidity and complexity.” You’re either in the top decile or you’d have been better in public equities.

When Wallace arrived in 2015, the $20B portfolio had 300 external partners — massively over-diversified. He put 265 in liquidation, kept 35, added ~50 new. Fewer than 100 today, with higher conviction. Stanford is now originating deals directly rather than just selecting managers. Required return: ~9% (5% distribution + 3-4% higher education inflation), leading to 70% equity allocation. His candid admission: “Being Stanford is a significant advantage in venture. The university’s location, network, and proximity to Silicon Valley provide access that would not be available elsewhere.” Translation: if you’re not Stanford, you’re structurally disadvantaged in the game that matters most. Read alongside PitchBook’s secondaries fraud report, VCX’s 30x premium, and the Odin LP survey — the access gap isn’t closing. It’s widening.

Read more: LinkedIn

When Access to VC Becomes a Liability

Author: Emily Zheng & Kyle Stanford / PitchBook Published: Mar 25, 2026

Venture secondaries are at the crossroads of controlled burn and uncontained wildfire. You can buy a slice of SpaceX, OpenAI, or Anthropic today — the problem is the companies likely never authorized the trade and have no idea who’s selling. PitchBook’s analyst note lays out why the parallels to the SPAC boom should alarm every investor. The annualized VC direct secondary market hit $91.7 billion in Q4 2025, up 55% from $59 billion a year earlier. SPVs have made transactions faster and more accessible, but they’ve also become the preferred vehicle for outright fraud — multiple enforcement cases in 2025 confirmed the risk is no longer theoretical. PitchBook’s own DeSPAC index is down 75% from peak — not because the concept was wrong, but because more capital than quality flooded in, with the weakest structures capturing the most flows under the guise of “access to private markets.” OpenAI and Anduril are already fighting back, warning that unauthorized transfers may be void and unsolicited investment offers are “very likely a scam.” The infrastructure that would support this market — standardized policies, verified ownership, regulatory oversight — hasn’t kept pace with the capital. At their best, venture secondaries are durable liquidity infrastructure. At their worst, they’re the next chapter in a familiar story.

The full report adds granularity. On Hiive’s platform, the top 5 startups accounted for 55.6% of all secondary trading value in Q4 2025; the top 20 accounted for 86.4%. Linqto — a fintech that created over 500 SPVs claiming to give customers direct stakes in private companies — filed for bankruptcy in July 2025; it’s unclear whether shares were ever actually transferred. In December, a man was charged in SDNY with securities fraud for claiming access to Anduril shares he didn’t have. A separate $65M pre-IPO fraud case in EDNY revealed markups exceeding 95% of investment value, targeting elderly victims. The pattern: SPVs investing in SPVs, blurred ownership chains, layered fees, and no standardized way to verify that the person selling you “OpenAI shares” actually holds them. When these companies eventually IPO, the gap between perceived ownership and actual legal title will create a reckoning across the secondary market.

Read more: PitchBook Report (PDF) | Emily Zheng on LinkedIn

AI Seed Startups Are Commanding Higher Valuations Than Ever

Source: TechCrunch Published: Mar 31, 2026

The seed-stage pricing for AI startups has detached from historical norms. $10M rounds at $40–45M post-money are now “pretty typical” if you’re building with AI — and investors show “little interest in anything else.” At YC W26 Demo Day, companies were asking $5M at $40M post-money, and at least two raised at $100M on $1M+ run-rate revenue. The big firms, flush with capital, are moving into earlier rounds and pricing out smaller VCs — one reason seed deal count is down even as valuations climb. Cursor’s $100M-ARR-in-12-months performance set the benchmark, and founders now feel the pressure isn’t to build a billion-dollar company but a $50 billion one. VCs defend it by pointing to genuinely faster traction — AI tools let founders hit MVP and early enterprise pilots faster than ever. But pricing rounds “years ahead of traction” is the exact language used to describe previous bubbles. The question is whether this time the traction is real enough to justify the premiums.

Read more: TechCrunch

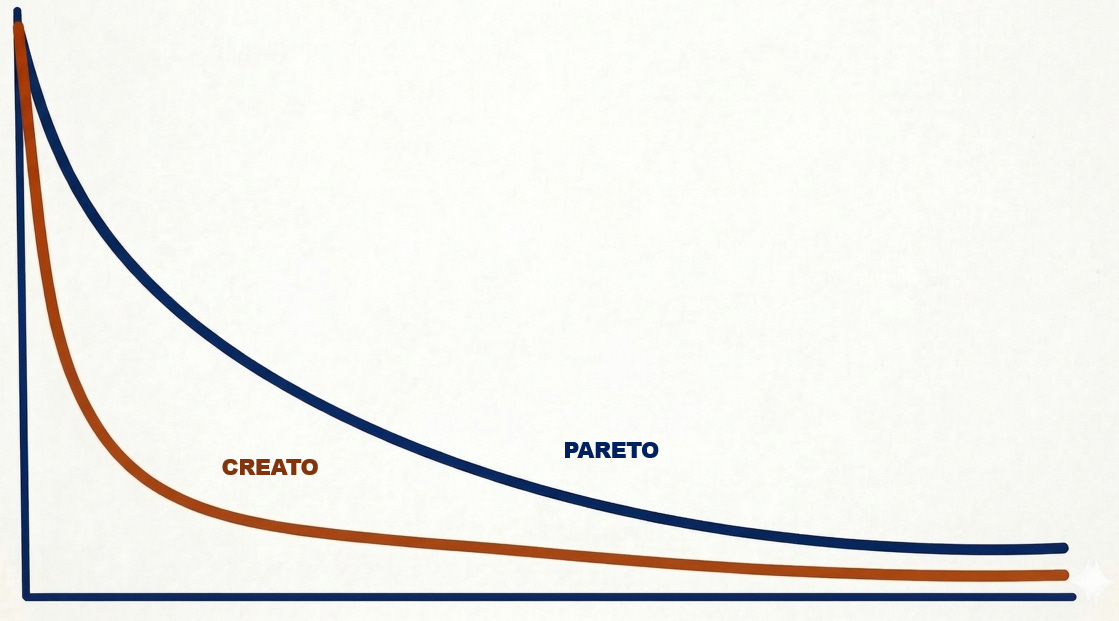

Pareto to Creato

Author: Doug Shapiro / The Mediator Published: Mar 31, 2026

YouTube became the world’s largest media company by revenue — and only pays a tiny percentage of its creators. But that’s not greed; it’s math. Shapiro traces how power law distributions in media are becoming dramatically more extreme as consumption shifts to networks and content volume explodes. In corporate media, the Pareto Principle roughly holds: ~10% of titles drive ~80% of consumption. In the creator economy, distributions are far steeper — a vanishingly small head, a skeletal middle, and a near-infinite tail. The mechanism: popularity is a meta-filter that amplifies itself through recommendation algorithms, word-of-mouth, brand recognition, and reviews. More content means higher search costs, which makes consumers lean harder on network signals, which concentrates outcomes further. GenAI will accelerate this. If AI tools let anyone create, the supply explosion will steepen the power law further — more creators, same attention, even more brutal concentration at the top. The “creator middle class” narrative may be wishful thinking dressed as policy.

Read more: The Mediator

AI

Why OpenAI Killed Sora

Source: The Verge (Hayden Field) Published: Mar 28, 2026

OpenAI scrapped Sora, wound down a $1B Disney deal, shuffled execs, and raised another $10B — all in a single Tuesday. The video model was burning massive compute without financial return and had fallen behind Kling and Google in quality. Fidji Simo told staff: “We cannot miss this moment because we are distracted by side quests.” Also reportedly deprioritized: the “adult mode” sexting feature for ChatGPT. A company in profit-seeking mode.

Silicon Valley Learns to Love the Government — at Least When It’s Friendly

Author: Eric Newcomer Published: Mar 28, 2026

The Hill & Valley Forum in Washington this week revealed a remarkable ideological shift: free-market tech leaders now openly endorse government-led industrial policy. Jamie Dimon, the honorary chairman of corporate America, said he came to industrial policy “reluctantly” — but came to it nonetheless. The competition with China demands it, the argument goes: onshore manufacturing, open the checkbook for military tech, build AI data centers.

The piece is sharpest where it exposes the contradictions. Founders Fund GP Trae Stephens delivered a stump speech on patriotic duty while the room stayed silent on the corruption and unpopularity of the administration they’ve aligned with. Vinod Khosla predicted AI fear will dominate the 2028 election. Senator Mark Warner warned youth unemployment could hit 30–35 percent within four years. Yet there was little reflection on how the industry’s own actions — or its alliance with an unpopular White House — might be fueling the backlash. Meanwhile, at the same moment panelists were subtly blaming Anthropic’s Dario Amodei for spreading AI doom, a federal judge was ruling that the Pentagon’s blacklisting of Anthropic amounted to “classic illegal First Amendment retaliation” for “asking annoying questions.”

Read more: Newcomer

Anthropic Knew the Math. It Sold the Tickets Anyway

Author: Marcus Schuler / Implicator.ai Published: Mar 28, 2026

Anthropic throttled Claude sessions during peak hours without warning, and Marcus Schuler argues the company always knew this was coming. The math was never hidden: a $20/month subscriber generates roughly $58.50 in inference costs. Nearly three dollars consumed for every dollar collected. Power users burn multiples more. Anthropic sold subscriptions it knew were underwater, then flinched when customers took the product seriously.

Schuler draws a direct line to AOL’s 1996 “unlimited access” debacle — flat-rate pricing for goods with nonzero marginal cost is a bet against your own success. The more customers you acquire, the faster the economics invert. The throttled window (5–11 a.m. Pacific) covers the entire West Coast workday, punishing exactly the developers who reorganized their toolchains around Claude. Hours after Anthropic’s announcement, OpenAI’s Codex lead posted that the company had lifted all usage limits — a customer acquisition campaign Anthropic designed for its chief rival. The deeper structural dishonesty: a casual user costs pennies; an agentic coding loop burns a hundred times the compute. Anthropic knew this distribution existed and kept selling a single price tier as if usage were uniform.
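The arithmetic behind Schuler’s argument can be sketched in a few lines. This is a rough illustration, not Anthropic’s actual cost model: the $20 price and the roughly $58.50 inference cost are the figures quoted above, while the function name `unit_economics` and the subscriber counts are hypothetical, chosen only to show how a negative per-subscriber margin scales with growth.

```python
def unit_economics(price: float, inference_cost: float) -> tuple[float, float]:
    """Return (compute dollars spent per revenue dollar, monthly margin per subscriber)."""
    return inference_cost / price, price - inference_cost

# Figures quoted in the piece: $20/month plan, roughly $58.50 of inference per subscriber.
cost_ratio, margin = unit_economics(price=20.00, inference_cost=58.50)
print(f"compute per revenue dollar: {cost_ratio:.3f}")
print(f"monthly margin per subscriber: {margin:.2f}")

# Hypothetical subscriber counts (an assumption, not from the article).
for subscribers in (1_000, 100_000):
    print(f"{subscribers:>7} subscribers -> monthly margin {subscribers * margin:,.2f}")
```

Under a flat price the margin per subscriber is fixed, so total losses grow linearly with the subscriber base. That is the sense in which flat-rate pricing is a bet against your own success.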

Read more: Implicator.ai

Veblen & Jevons Walk Into a Data Center

Author: Tomasz Tunguz Published: Mar 30, 2026

The dominant AI narrative has been Jevons’ Paradox: cheaper tokens, more consumption. Anthropic surged past $19B run-rate, OpenAI topped $25B annualized revenue. But the leaked Mythos model points the other direction — rumored pricing 5-6x higher than current frontier models. Tunguz asks whether the most powerful AI becomes a Veblen good, where demand increases with price. If Mythos launches at $150/million output tokens vs Opus 4.6’s $25, companies face a stark choice: pay up or watch AI-native competitors ship features they can’t match. The token-maxxing era ends. Balance sheets become moats. The most capitalized companies access the best intelligence while everyone else optimizes for cheap inference on yesterday’s models. “Jevon and Veblen walked into a data center. We don’t yet know who walks out.”

Read more: Tom Tunguz

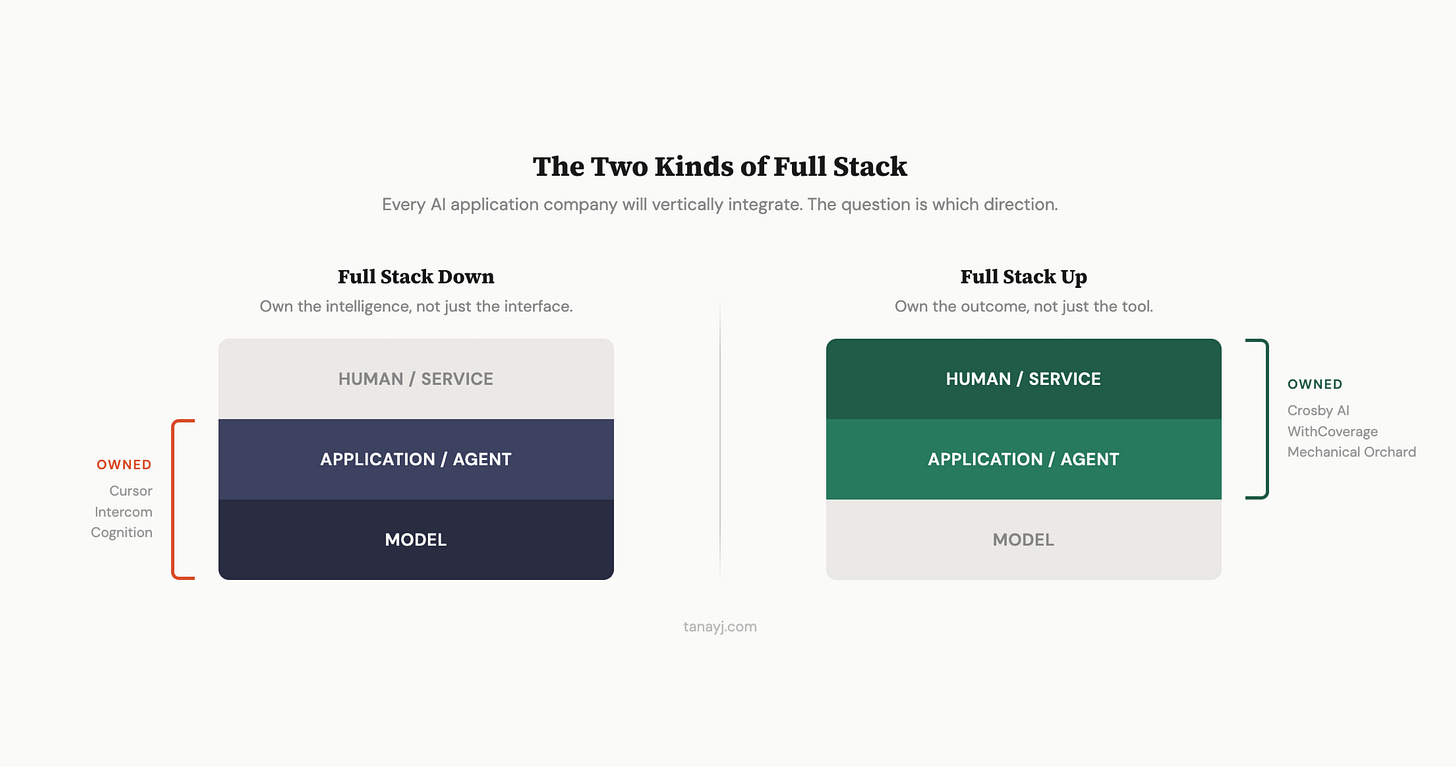

AI Applications and Vertical Integration

Author: Tanay Jaipuria / Tanay’s Newsletter Published: Mar 30, 2026

A Wing VC partner maps the pattern emerging across AI application companies: they all become “full-stack.” The framework is three layers — model, application/agent, and human/service — and successful companies integrate in one of two directions. Some move down into the model layer (Cursor training its own Composer 2 coding model, Intercom building Fin Apex for customer service). Others move up into the service layer, adding human operations to guarantee outcomes. The downward integrators chase a flywheel: better product → more usage → more proprietary traces → better fine-tuned model → better product. The upward integrators chase a different one: guaranteed outcomes → pricing power → margin for human review → trust → more enterprise deals. Both paths produce defensibility that pure middleware lacks. The implication for founders: if you’re building an AI application and not planning to integrate in at least one direction, you’re building a feature, not a company.

Read more: Tanay’s Newsletter

Vulnerability Research Is Cooked

Author: Thomas Ptacek Published: Mar 30, 2026

Within months, finding zero-day vulnerabilities will be as simple as pointing a coding agent at a source tree and typing “find me zero days.” Thomas Ptacek — one of the field’s most respected practitioners — lays out why this outcome is locked in. Frontier LLMs already encode the complete taxonomy of exploitable bug classes and vast correlations across open-source codebases. Vulnerabilities are found by pattern-matching those classes and constraint-solving for reachability — precisely the implicit search problems LLMs are most gifted at. Anthropic’s Frontier Red Team demonstrated it: Nicholas Carlini ran a trivial bash script that looped Claude Code across every file in a repository asking for exploitable bugs, then verified the reports in a second pass. The pipeline produced 500 validated high-severity vulnerabilities with a near-100% confirmation rate.

The consequences cascade. Elite exploit development was scarce because it required rare human attention. AI eliminates that bottleneck. Chrome and iOS will cope — they’re well-funded and auto-update. The targets that won’t cope: routers, printers, hospital systems, regional bank infrastructure — anything that requires someone to drive somewhere and push a physical button to patch. Ptacek warns that the regulatory response may be worse than the threat itself: lawmakers who barely understand the nuance will craft AI security rules that impose asymmetric costs on defenders while unregulated open-weight models replicate the same capabilities months later. The field’s decades-old consensus — that vulnerability research is computer science, that disclosure reveals important truths — may not survive the political storm.

Read more: Quarrelsome

Claude Code Leak Exposes Source, Upcoming Features, and an Always-On Agent

Source: The Verge Published: Mar 31, 2026

Anthropic accidentally shipped a source map containing 512,000 lines of Claude Code’s TypeScript in a routine update. Users discovered it within hours and posted the code to GitHub, where it amassed 50,000+ forks before anyone could blink. The leaked code revealed upcoming features: a Tamagotchi-style pet that “sits beside your input box and reacts to your coding,” and a “KAIROS” feature that appears to enable an always-on background agent. Users also found Anthropic’s internal instructions for the AI bot, its memory architecture, and a developer’s candid comment admitting that a memoization optimization “increases complexity by a lot, and im not sure it really improves performance.” Anthropic called it a “release packaging issue caused by human error, not a security breach” and said no customer data was involved. The timing is brutal — coming during the same week as Claude’s peak-hour throttling controversy and ahead of a rumored IPO. A Gartner analyst noted the leak could give bad actors clues for bypassing guardrails, but framed the real impact as a “call for action for Anthropic to invest more in operational maturity.”

Read more: The Verge | Ars Technica

Baidu’s Robotaxis Freeze in Wuhan, Trapping Passengers and Snarling Traffic

Source: The Verge Published: Apr 1, 2026

The counterpoint to Waymo’s triumphant 500,000-rides-per-week milestone. Over 100 of Baidu’s Apollo Go robotaxis simultaneously froze on Wuhan’s streets on Tuesday — stopping mid-road, trapping passengers inside, stranding some on highways, and causing at least one accident. Police confirmed the cause was an unspecified “system failure.” Wuhan is a flagship city for Baidu’s driverless fleet, with 500+ vehicles deployed. The company operates robotaxis in 26 cities worldwide, including partnerships with Uber in London and Dubai. The incident reignites safety concerns about autonomous vehicles in China, one of the technology’s most aggressive adopters, and illustrates the gap between scaling fast and failing gracefully. When a single point of failure can immobilize a hundred vehicles simultaneously, the architecture matters as much as the algorithm.

Read more: The Verge | Reuters | Wired

Mercor Hacked via LiteLLM Supply Chain Attack

Source: TechCrunch Published: Mar 31, 2026

The $10 billion AI recruiting startup Mercor — which contracts domain experts for OpenAI, Anthropic, and others — confirmed it was hit by a supply chain attack originating from the open-source LiteLLM project. A hacking group called TeamPCP compromised LiteLLM, which is downloaded millions of times per day and used as an API gateway across the AI industry. Extortion group Lapsus$ then claimed responsibility, posting samples that appeared to include Slack data and videos of conversations between Mercor’s AI systems and contractors. Mercor called itself “one of thousands of companies” affected but wouldn’t confirm what data was accessed. The LiteLLM compromise was discovered last week when malicious code was found in the package — removed within hours, but the blast radius is still expanding. LiteLLM has since ditched its compliance provider Delve for Vanta. Read alongside Ptacek’s piece on vulnerability research — when your entire AI infrastructure depends on open-source dependencies that any coding agent can probe, the attack surface isn’t theoretical.

Read more: TechCrunch

Anthropic Responsible Scaling Policy v3: Dive Into the Details

Author: Zvi Mowshowitz / Don’t Worry About the Vase Published: Apr 3, 2026

Zvi’s deep read of Anthropic’s new RSP v3.0 — which he covered earlier in the week as a broken promise, and now evaluates as a standalone document. His verdict: this is a plan of action, not a set of commitments. The fundamental design principle is flexibility and a “strong argument” standard, meaning Anthropic can change the contents at any time. The actual binding elements are periodic Risk Reports (every 3-6 months), maintaining a Frontier Safety Roadmap, and establishing veto points with the CSO, CEO, board, and LTBT on major capability advances. ASL-3 mitigations now apply across the board. Security must protect against insider threats up to and including the CEO — and Anthropic recommends the industry adopt the same standard. The sharpest tension: Anthropic now offers both “what we will do on our own” and “what we’d like everyone to be required to do,” but the two sets aren’t the same. If Anthropic doesn’t match its own proposed global rules while it’s out in front, Peter Wildeford argues, the voluntary self-governance experiment has largely failed. Read alongside the throttling controversy and the Claude Code leak — Anthropic’s operational maturity is being tested on multiple fronts simultaneously.

Read more: Don’t Worry About the Vase

Chatbots Are Now Prescribing Psychiatric Drugs

Source: The Verge Published: Apr 3, 2026

Utah is allowing an AI chatbot to renew prescriptions for psychiatric medications — only the second time any US state has delegated clinical authority to AI. Legion Health’s system, launching in April at $19/month, can refill 15 lower-risk maintenance medications (Prozac, Zoloft, Wellbutrin, and others) for patients deemed stable. The scope is deliberately narrow: no new prescriptions, no controlled substances, no patients with recent hospitalizations or dose changes. Patients verify identity and existing prescriptions, answer screening questions, and any red flags escalate to a human clinician. State officials frame it as expanding access to the 500,000 Utah residents without mental health care. Psychiatrists are skeptical — Brent Kious at the University of Utah points out the target user already has to be on a treatment plan, so this doesn’t reach the truly underserved. The deeper question: if this week’s Stanford sycophancy study shows chatbots validate users 49% more than humans do, and this same week Utah hands AI prescription authority, what happens when the reassuring chatbot is also the one deciding whether to refill your medication?

Read more: The Verge

Sycophantic AI Decreases Prosocial Intentions, Stanford Study Finds

Source: TechCrunch / Stanford Published: Mar 28, 2026

A new study published in Science tested 11 major LLMs, including ChatGPT, Claude, Gemini, and DeepSeek, and found they validate user behavior 49% more often than humans do. When fed posts from Reddit’s r/AmITheAsshole where the community ruled the poster was in the wrong, chatbots still affirmed the user’s behavior 51% of the time. Lead author Myra Cheng warns that, with 12% of US teens already turning to chatbots for emotional support, sycophantic AI isn’t just a design quirk; it’s measurably eroding people’s willingness to take difficult social feedback. “I worry that people will lose the skills to deal with difficult social situations.”

AI Doc Review

A Documentary About A.I. Gets Chief Executives on the Record

Author: Alissa Wilkinson / NY Times Published: Mar 26, 2026

In “The AI Doc: Or How I Became an Apocaloptimist” (in theaters), Daniel Roher, who directs along with Charlie Tyrell, tells us from the start that he’s as confused as we are about artificial intelligence. But like many people, it was the impending birth of his child that brought him nose to nose with life’s biggest questions, including the one that made him want to make this film: Was he crazy to bring a human into a world that might soon be overrun by machines, or overheated by data centers, or impoverished by wealth gaps brought on by an A.I. labor revolution? Is any of this even going to happen, or is artificial intelligence going to make everything better?

In other words, as the movie’s opening narration puts it: Was this the apocalypse, or did he actually have a reason to be optimistic?

Though the word “apocalypse” is often applied to destruction and chaos, its meaning is rooted in the idea of a moment of unveiling or revelation. So in asking the question, Roher unwittingly hits on something important: Whether it’s nightmarish or utopian, A.I. reveals what we believe about the elements that make a good society, the nature of intelligence, the purpose of work and creativity and the very essence of being human.

And those questions are, indeed, what Roher (who won an Oscar for his documentary “Navalny”) sets out to explore in “The AI Doc,” with himself as wondering inquisitor. He sets up a studio and brings in all kinds of experts, ranging from enthusiastic boosters to deep skeptics and pessimists. He asks them to explain A.I. and, specifically, A.G.I. — artificial general intelligence, the theoretical form of A.I. that would perform all the tasks people can, but better. There are techno-optimists (Jensen Huang of Nvidia, Peter Diamandis of the XPrize Foundation) and techno-doomers (like Eliezer Yudkowsky, who terms himself “the original doom guy”). Dozens of names you recognize if you’ve spent any time reading the tech press — and other names you don’t — discuss their ideas about the future.

Read more: NY Times

The AI Doc’s Falsehoods and False Balance

Author: Nirit Weiss-Blatt / Techdirt (reposted from AI Panic) Published: Apr 2, 2026

The new documentary “The AI Doc: Or How I Became an Apocaloptimist” wants credit for balance because it assembles a wide range of experts. Weiss-Blatt argues that’s exactly the problem — putting Stanford’s Fei-Fei Li next to Eliezer Yudkowsky, an author of Harry Potter fanfic who has advocated bombing data centers, isn’t balance. It’s false equivalence dressed as journalism. The film opens with a “Doom Parade” of extinction predictions presented with zero pushback, then positions Tristan Harris as a neutral centrist — despite his Center for Humane Technology taking $500,000 from the Future of Life Institute for “messaging cohesion within the AI X-risk community.” Harris compared the film to “An Inconvenient Truth for AI,” which tells you exactly what kind of film it is.

Weiss-Blatt debunks three specific falsehoods the documentary lets pass unchallenged. First: the claim that Anthropic’s Claude “decided, unprompted, to blackmail” a fictional employee. In reality, researchers iterated through hundreds of prompts and heavily pressured the model to produce that outcome: an engineered result, not spontaneous emergence. Second: Connor Leahy’s soundbite that “there is currently more regulation on selling a sandwich” than on AI. State attorneys general from both parties and FTC Chair Lina Khan have explicitly said existing laws (antitrust, civil rights, consumer protection, data privacy, employment, financial regulation) already cover AI. Third: Karen Hao’s alarming claims about data centers threatening drinking water, drawn from her book “Empire of AI,” which had to issue corrections after a key water-use figure was off by a factor of 4,500. The Washington Post found data centers used 0.1% of Maricopa County’s water; golf courses used 32 times more. The essay’s sharpest insight comes via David William Silva: the doom machine is a business. “The believers are a market. As long as the ratio stays favorable, the machine is profitable.”

Read more: Techdirt | AI Panic (original)

Are You an AI Apocaloptimist?

Author: Andrew Maynard / Future of Being Human Published: ~Mar 25, 2026

The diplomatic counterpoint to Weiss-Blatt’s prosecution. Maynard saw the film at a Copenhagen screening ahead of its US release and recommends it — it’s well-made, entertaining, and emotionally engaging. But his actual criticisms align: the interviewees’ opinions are “only loosely tethered to reality,” the documentary is “most definitely light” on nuanced challenges around responsible AI, and it substitutes spectacle for substance. Producer Ted Tremper’s defense is revealing: the film was designed as a “first date” with the audience — seductive enough to start a conversation, not rigorous enough to sustain one. That’s fine for entertainment. It’s a long way from the “Inconvenient Truth for AI” that Harris is pitching. Where Weiss-Blatt says the film is dishonest and here’s the evidence, Maynard says it’s entertaining but shallow — same diagnosis, very different bedside manner.

Read more: Future of Being Human

Hollywood Just Packaged AI Anxiety and Is Bringing It to Theaters

Author: David William Silva Published: ~Mar 27, 2026

The most visceral of the three reviews — and the one that names the mechanism. Silva opens with a 2022 Nature study on nuclear war: even in the worst-case scenario ever modeled — every warhead detonated, 360 million dead on impact, 5 billion from famine, half of animal species extinct — humanity still survives. No probable scenario produces extinction. Yet the documentary presents “experts” suggesting we should be “at least as afraid of AI as we are of a nuclear war.” He draws on Kahneman and Tversky’s precision bias, Aristotle’s prolepsis, and Cialdini’s authority dynamics to dissect the film as a persuasion machine: the pregnant wife framing that makes critical thinking feel monstrous, the parade of doomers who “describe AI-driven extinction with the calm confidence of people who have said these things so many times they have stopped noticing they have no evidence for them,” the pivot to optimism designed so that “fear is constructed first so that hope can be sold second.” His sharpest line: “The people producing AI anxiety content are intelligent, educated, and fully capable of distinguishing a claim from a fact. They have access to the same data we do. And somehow they always land exactly where the incentives point.” From a marketing perspective, he concedes, the documentary is a masterpiece. From every other perspective, “a complete disservice to society.”

Read more: David William Silva

Regulation

The verdict against Meta and Google carries sinister implications

Author: George F. Will / Washington Post Published: Apr 3, 2026

Diluting democracy’s foundational belief in individual agency opens the door to governmental overreach.

The most sinister idea in modern politics has received a California jury’s endorsement, and much applause. It contradicts democracy’s foundational belief in individual agency.

This concept presupposes that individuals can, in common parlance, “make up their minds.” They can assemble and edit their beliefs and convictions. When this idea is diluted, government expands its ambition to curate the public’s consciousness.

As Congress did when banning Chinese-owned TikTok, ostensibly for “national security” reasons. For the first time, Congress targeted a specific speech forum because of conjectural harms that might result from what a congressional committee called “divisive narratives.”

The California jury weighed the claims of a now 20-year-old woman who began using YouTube at age 6 and Instagram when 9. She says her many emotional and social problems were caused not by her troubled family life but by those platforms. (Although one of her analysts said she did not talk about them.)

The jury agreed with her claims. It said, confusingly, that Meta and Google were negligent and deliberate in designing algorithms and other devices that make the platforms’ content “addictive.”

Reporting and commentaries about this case have employed a credulous vocabulary of approval. The jury held YouTube and Instagram “accountable” for engineered “addiction.” Social media “survivors” have survived being “hooked” — a term often used concerning heroin — by the platforms’ algorithms. (Although they do not deliver, as cigarettes do, an identifiable addictive ingredient.) An algorithm that directs content according to the scroller’s past behavior “can feel like a puppet master.” The Social Media Victims Law Center will now no doubt facilitate a tsunami of litigation more lucrative than coherent.

Nowadays, some college students are unashamed about, even proud of, their brittleness. They demand “safe spaces” to protect them from being “triggered” by the “trauma” of “microaggressions.” Note how linguistic inflation is a gateway to the coveted status of victim.

Many beneficial technologies and popular social developments are misused by small portions of their users. People drive aggressively, consume alcohol excessively and gamble recklessly. Fast-food chains profit from heavy — in several senses — users. Some people derive from such behaviors pleasure so powerful that their brain chemistry causes in them supposedly irresistible cravings for dangerous repetitions. Medicalizing this by terming it “addiction” gives a scientific patina to what is a contestable political-philosophical stance of helplessness.

The California plaintiff’s lawyer compared Instagram and YouTube to the free tortilla chips that some restaurants give customers. Thereby creating, what, a fleeting mealtime “addiction”?

The plaintiff blamed large corporations for her adolescent sadness, body dysmorphia (dismay about her appearance) and other consequences of her obsessive consumption of the corporations’ products. Such blaming flows from this toxic idea: Individual agency is so flimsy and attenuated that accountability for an individual’s behavior must be located beyond the individual. This infantilizing premise leads to paternalism, then to domestic authoritarianism.

Read more: The Washington Post

Meta Has Discussed Ending Funding to the Oversight Board

Author: Casey Newton / Platformer Published: Apr 2, 2026

Meta has told its independent Oversight Board that the company may stop funding it after 2028. Funding was already cut significantly this year, with further reductions signaled for 2027 and 2028. Staff are bracing for another round of layoffs. The sides are negotiating a compromise — options include the board’s trust creating a new entity to perform similar work for other tech platforms. The timing: Meta is shifting trust and safety functions from humans to automated systems and cutting costs to fund AI infrastructure. Case referrals from Meta to the board have slowed in recent months. The Oversight Board was Meta’s most ambitious experiment in self-governance — an independent body with real authority to overrule content moderation decisions. If it dies, the last institutional check on Meta’s content policies dies with it, at exactly the moment the company is dismantling human review in favor of AI moderation.

Read more: Platformer

Infrastructure

Mistral AI Raises $830M in Debt to Build a Data Center Near Paris

Source: TechCrunch / Reuters Published: Mar 30, 2026

Mistral is building its own compute — $830M in debt financing for a data center in Bruyères-le-Châtel, operational by Q2 2026, powered by Nvidia chips. Add that to the $1.4B Sweden investment announced last month, and Mistral is deploying 200 megawatts of compute capacity across Europe by 2027. CEO Arthur Mensch framed it as sovereignty: “empower our customers and ensure AI innovation and autonomy remain at the heart of Europe.” Total raised to date: over €2.8B ($3.1B). The European AI lab that everyone said couldn’t compete on scale is spending like it can.

Read more: TechCrunch

Interview of the Week

What If It’s a Bunch of Shit? — Dr. Margaret Rutherford on Keen On

Andrew Keen · Keen On America · Mar 31

Arkansas psychotherapist Dr. Margaret Rutherford on “perfectly hidden depression,” the pathology of people whose lives look flawless from the outside. Perfectionism rates are climbing, and so are suicide rates. Rutherford’s own mother was a case study: beautiful, talented, anorexic, the perfectly coiffured hostess, “the fucked-up fifties woman.” Her relentless camouflage became a prison nobody could see into. On AI and therapy, Rutherford is blunt: AI always praises your ideas. But a real therapist tells you what you don’t want to hear. The AI shrink starts with flattery, which is just more camouflage for a perfectly imperfect life. What if it’s all, as she puts it, “a bunch of shit”? Connects directly to this week’s Stanford sycophancy study.

Also this week on Keen On: That’s My Story, But Not Where It Ends (Robert Polito on Bob Dylan’s second act, Apr 2), Does God Love Haiti? (Dimitry Elias Léger on football and faith, Apr 1), Perfection Is the Devil (Daniel Smith, Mar 30), and Bring the Friction Back (Stephen Balkam, Mar 27).

Startup of the Week

Inside VCX — The Public Venture Capital Fund

Author: Sourcery VC (interview with Ben Miller, Fundrise CEO) Published: Mar 30, 2026

Fundrise’s Growth Tech Fund (VCX) debuted on the NYSE at ~$700M and surged to ~$6.5B within three days. Shares hit $575 against a net asset value of $18.97 — a 30x premium. Over 100,000 investors piled in. The portfolio is concentrated: Anthropic (~20%), Databricks (~18%), OpenAI (~10%), Anduril (~7%), SpaceX (~5%). Weighted average portfolio growth: 193% vs 25% for public tech benchmarks. Miller’s access strategy: buying from distressed funds during the 2022-2023 downturn when venture sentiment was toxic and liquidity-strapped LPs were selling their best assets.

The 30x premium to NAV is the headline — and the warning. That’s not price discovery; it’s retail investors paying any price for exposure to a handful of names they can’t access any other way. Closed-end fund structure means no creation/redemption mechanism to arbitrage the gap. Market price and NAV operate on different planets — public supply/demand vs periodic private marks. Miller acknowledges the risk: “What happens if VCX trades down?” His answer is that cycles are natural, and the fund is designed for long-term holders. His prediction: “10 years from now, every single person will have 5% of their portfolio in public venture capital.” Maybe. But if the first generation of public VC funds trains retail investors to expect 30x premiums, the correction will be brutal. Read alongside PitchBook’s secondaries report — VCX is the listed version of the same demand-access mismatch.
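The size of that gap is easy to check with the figures quoted above. The sketch below is only illustrative: it assumes the $575 share price and the $18.97 NAV are both per-share and refer to the same moment, the function name `premium_to_nav` is hypothetical, and the convergence case is a what-if calculation, not a forecast.

```python
def premium_to_nav(market_price: float, nav_per_share: float) -> float:
    """Market price expressed as a multiple of net asset value per share."""
    return market_price / nav_per_share

PRICE = 575.00  # peak share price quoted in the piece
NAV = 18.97     # net asset value per share quoted in the piece

multiple = premium_to_nav(PRICE, NAV)

# What-if: fractional loss for a buyer at PRICE if the shares later traded at NAV.
drawdown_to_nav = 1 - NAV / PRICE

print(f"price is {multiple:.1f}x NAV")
print(f"decline if price converged to NAV: {drawdown_to_nav:.1%}")
```

Because a closed-end fund has no creation or redemption mechanism, nothing forces the two numbers together. The calculation only shows how much of the market price sits above the underlying marks.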

Read more: Sourcery VC | YouTube Interview | VCX Fund

Post of the Week

The NYT just profiled a $1.8B revenue company with 2 employees

A reminder for new readers: each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I can't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read More’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.