This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial

Growing Up? Winning Wars Involves Losing Battles

A federal judge ruled this week that the Pentagon punished Anthropic for talking to the press. Not for failing to deliver. Not for demanding policy in a contract. For speaking publicly about a policy disagreement.

Judge Rita Lin called it “classic illegal First Amendment retaliation.” The amicus brief supporting Anthropic was signed by Amazon, Apple, Google, Meta, Nvidia, OpenAI, Intel, and TSMC - which is to say, nearly the entire technology industry lined up to tell the government that weaponizing procurement against speech is a line it cannot cross.

In doing so, however, she left the key contested point unresolved: can Anthropic dictate to the Government how its product is used in real time in war? The interim injunction will of course be contested, and in all likelihood replaced, but the real issue, the Government's authority over weapons and their use, is not even being debated. The answer is a foregone conclusion.

That ruling is this week’s most important event. Not because it settles the question of AI in warfare - the judge explicitly declined to touch that - but because it reveals the gap between how fast AI is becoming mainstream and how poorly prepared the AI companies are for their new importance in global politics and economics. Governments are even less well prepared.

I am not sure why Anthropic and its counsel sought a free speech ruling, since in the long run that is not really the point. But well done for getting the short-term relief.

Growing Up

For Amodei, the dispute with Government should be a “growing up” moment. Instead he is insisting on his freedom of speech, which of course is his right. But that won’t get him back into mainstream supply chains.

Also this week, OpenAI closed Sora as a standalone product, integrating its features into ChatGPT. As Sam Altman is unpopular among the noterati, this was editorialized as a failure. In reality it is his “growing up” moment, and one he chose to take.

OpenAI is focusing more and more on cutting peripheral experiments and delivering on core products. In the big picture, that is the right thing to do.

If AI is to become a trusted partner of enterprises and Governments, it will have to start losing battles in order to win wars.

This week Dario Amodei focused on winning a battle. Sam Altman declared that he had lost a battle to focus on bigger future objectives.

Infrastructure

Jensen Huang’s GTC keynote was, as Om Malik put it, a pitch for a trillion-dollar token factory. He, too, is playing for big outcomes.

His point: LLM training was the capital expense of the first phase. Inference is the operating expense of the current phase. Agents, the equivalent of everyone leaving their engine running 24 hours a day, are the next phase. He wants to supply a growing inventory of solutions across the entire opportunity set.

Nvidia’s Groq deal, structured as an IP license plus talent hire rather than a full acquisition, makes sense through this lens: disaggregate the inference pipeline so different silicon handles different stages, the way a modern factory uses different machines for different operations.

Others are following suit.

SemiAnalysis published the engineering blueprint. Amazon showed off its own competing factory: Trainium chips, 1.4 million deployed, with Anthropic running Claude on over a million of them and Amazon claiming 50% lower cost than Nvidia equivalents. Arm shipped the first chip of its own in its 35-year history. The rollout of AI inference and agentic workflows is in full swing.

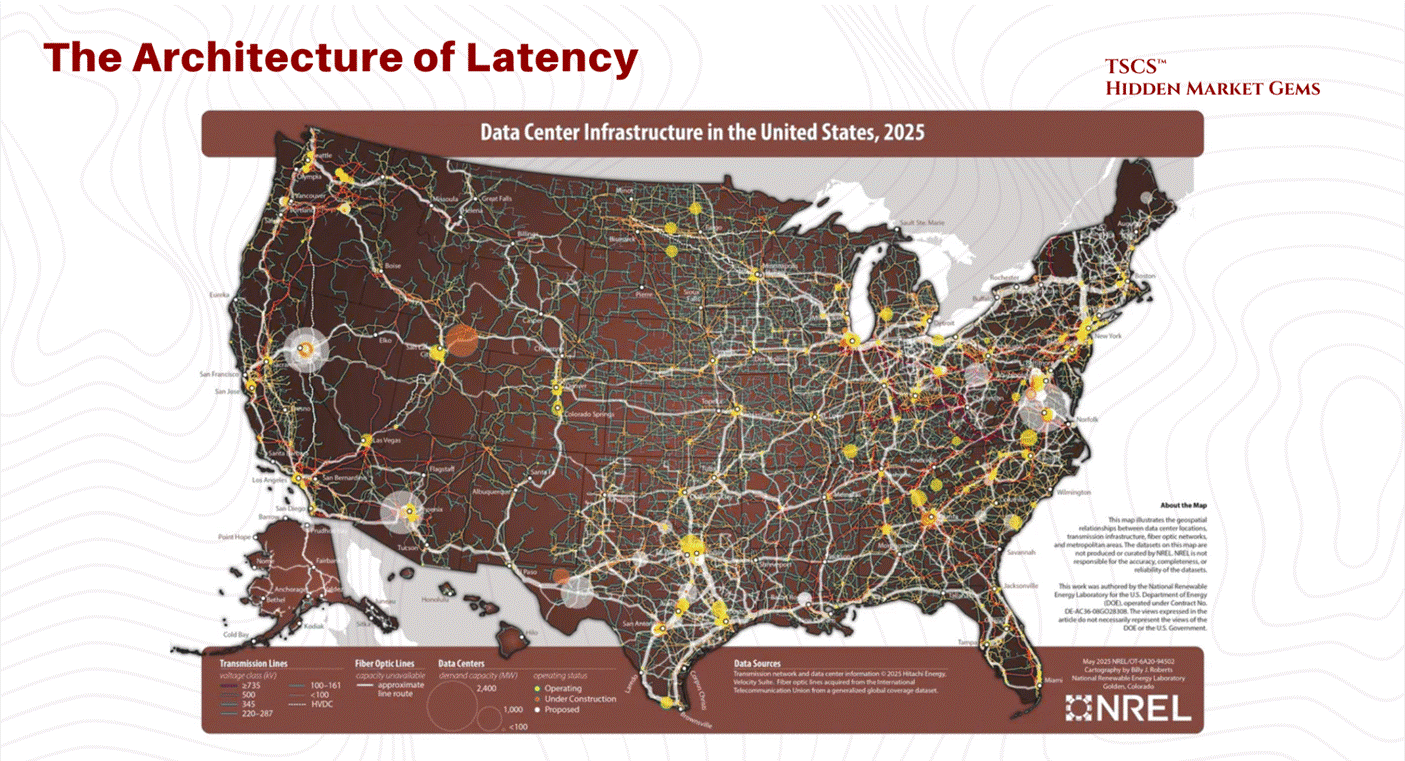

This is no longer a software industry. It is heavy industry. It has power grid dependencies, transformer lead times measured in years, physical vulnerability to military strikes (three AWS data centers in the Gulf were hit by Iranian drones on March 1), and capital expenditure cycles that look more like petrochemicals than SaaS.

Again, this is a growing-up moment. Arm deciding to make and sell its own chips, not only license designs, typifies it.

If you run a company that depends on AI inference, you are not only choosing a model. You are choosing a supply chain. The product team and the finance team are now in the same meeting, whether they know it or not.

The Stock Market and Venture Capital

Now look at what the capital markets are saying about all this.

For the first time in history, software trades at a discount to the S&P 500 on forward earnings. Not at parity - below it. Albert Wenger gives 75% odds of a major correction. Om asks why a healthy company would offer private equity firms a guaranteed 17.5% return, as OpenAI is doing. Thoma Bravo’s founder says some valuations face “very warranted” decreases - and when the man who built the largest software buyout firm in history says that publicly, it is not small talk. This is not only about SaaS companies; it will also affect the next wave of IPOs. Markets price outcomes, and AI has to grow up and deliver them.

The honest version of this week’s venture conversation is: the technology is real, the demand is real, and the unit economics are still a question mark for most of the industry. IPOs will likely expose the bleeding.

The market is repricing from high-multiple growth metrics to low-multiple operating reality, and the companies that cannot show margin-accretive, retained, growing paid usage - not just token volume - will discover that patience has a price.

AI Leadership

AI policy needs leadership. David Sacks left the building in DC this week - 130 days as AI czar, term expired, reassigned to an advisory board alongside Zuckerberg, Andreessen, and Jensen.

The timing is conspicuous: it came a week after he publicly criticized Trump’s Iran war on his podcast. In Trumpworld, that sequence is a demotion, not a rotation. What Sacks accomplished - a federal AI framework, an attempt to preempt 50 state laws, aggressive positioning against safety regulation - now lives or dies in a Congress that has shown no urgency on any of it.

Meanwhile, Sanders and AOC introduced a bill to halt data center construction until Congress passes comprehensive AI regulation. The EU delayed its own AI Act enforcement again. Every branch of government in every major jurisdiction is trying to fill the same governance vacuum, and mostly failing.

Nothing New to See

This is how platform revolutions always look in the middle. Infrastructure overbuild happens. Financial engineering appears. Abuse patterns emerge. Then competition and regulation catch up, costs fall, and society gets the upside anyway. Telecom, cloud, and mobile all went through this pattern.

But this cycle is different in one way that matters. AI is not just a distribution channel like the web or mobile. When used properly it is a partner that shapes human choices, summarizes reality, and executes actions.

Poor leadership decisions can degrade AI’s usefulness before anyone notices. Proposals to stop the build-out of data centers are a great example of fear driving decision-making.

So what do you actually do with this?

Intelligence is getting cheaper. That is good. More people need access to it, at a price that is inclusive. Policy that fast-forwards us to that world helps determine good outcomes, and markets will price them favorably. Anything else is fear wrapped up as principle. Time to grow up?

Contents

Editorial: Growing Up? Winning Wars Involves Losing Battles

Essays

Venture

AI

Regulation

Infrastructure

Interview of the Week

Startup of the Week

Post of the Week

Essays

140% of Last Year’s Revenue in Q1 - With 1.25 Humans in Sales and 20+ AI Agents

Author: Jason Lemkin / SaaStr Published: Mar 19, 2026

SaaStr went from 25+ employees in 2020 to 1.25 humans in sales plus 20+ AI agents - and hit 140% of prior-year Q1 revenue. An AI agent closed a $70K sponsorship deal with zero human involvement. They’re touching 2x the prospects at a fraction of the cost. The honest part: AI outreach still isn’t as textured as the best human sales writing, the agents hallucinate, and a human still reviews everything. But the leverage is real. This is the first detailed, numbers-included case study of an AI-native GTM stack from a company with enough history to make the comparison meaningful.

Read more: SaaStr

AI Bubble or Not?

Author: Albert Wenger (Union Square Ventures) Published: Mar 24, 2026

75% probability of a major correction. 25% chance recursive self-improvement and cheaper inference make the economics work in time. That’s the assessment from someone who lived through the dotcom bubble as an investor.

The parallels: fantastically extended valuations, round-tripping deals with Nvidia in AOL’s position, and equity and debt dollars financing the illusion of sustainable unit economics. Anthropic may be losing thousands of dollars per $200/month Max plan. Tokens are heavily subsidized. Private credit markets are already showing stress, with multiple large funds limiting outflows.

The key difference from dotcom: there is absolutely no demand constraint. People and agents consume every token made available. Revenue ramps into the billions are real. AI agents run 24/7 - a demand category that barely existed a year ago.

But IPOs will reveal the bleeding. Dotcom IPOs exposed meager revenues. AI IPOs will expose massive negative cash flow from capex AND negative unit economics. If inference costs collapse while open source stays competitive, labs face pressure from both sides - can’t raise prices, can’t lower costs fast enough. The value shifts from the model to the ecosystem around it. “The technology is entirely real even if the valuations aren’t.”

Read more: Continuations

The Pricing Power of Agents

Author: Tomasz Tunguz (Theory Ventures) Published: Mar 20, 2026

Tunguz’s second must-read of the week. In sectors with labor shortages, AI agents are already commanding 75-100% of a human-equivalent salary - faster than even he predicted. But the real insight is the compounding economics. First-order: the work gets done. Second-order: training is instant, management burden drops, capacity scales with inference spend. Third-order: no FICA, no unemployment insurance, no benefits - at least a 25-30% cost reduction at the same salary, and agent software is tax-deductible up to $2.56M under Section 179. Goldman Sachs data backs it up: low-labor-cost stocks outperformed high-labor-cost stocks by 8 percentage points in 2025. Labor’s share of GDP hit a record low of 53.8% in Q3 2025. Across the S&P 500, labor costs run ~12% of revenue while software sits at 1-3%. As agents absorb labor, that ratio inverts - and the TAM for software grows at labor’s expense. Right now, there is no pricing competition on a per-agent basis, so vendors can price at parity with a person.
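To make the third-order arithmetic concrete, here is a minimal Python sketch comparing a human's fully loaded cost with an agent priced at a fraction of the same salary. The 7.65% employer FICA rate is the standard employer share; the unemployment-insurance and benefits rates are placeholder assumptions, not figures from Tunguz, and the sketch ignores wage-base caps and the Section 179 treatment entirely.

```python
from dataclasses import dataclass

# Illustrative rates only; substitute your own payroll data.
EMPLOYER_FICA = 0.0765   # employer share: 6.2% Social Security + 1.45% Medicare
UNEMPLOYMENT = 0.01      # hypothetical blended federal/state unemployment-insurance rate
BENEFITS = 0.20          # hypothetical benefits load (health, retirement, etc.)


@dataclass
class Comparison:
    human_loaded_cost: float  # salary plus employer taxes and benefits
    agent_cost: float         # agent priced as a fraction of the same salary

    @property
    def savings(self) -> float:
        return self.human_loaded_cost - self.agent_cost

    @property
    def savings_share(self) -> float:
        """Savings as a fraction of the human's fully loaded cost."""
        return self.savings / self.human_loaded_cost


def compare(salary: float, agent_price_fraction: float = 1.0) -> Comparison:
    """Human vs. agent cost at a given salary.

    Simplifications: ignores the Social Security wage-base cap, per-employee
    unemployment wage caps, and any tax treatment of the agent spend.
    """
    overhead = EMPLOYER_FICA + UNEMPLOYMENT + BENEFITS
    return Comparison(
        human_loaded_cost=salary * (1 + overhead),
        agent_cost=salary * agent_price_fraction,
    )


for fraction in (1.0, 0.75):  # the 75-100% of salary range quoted above
    c = compare(100_000, fraction)
    print(f"agent at {fraction:.0%} of salary: saves ${c.savings:,.0f} "
          f"({c.savings_share:.1%} of the human's loaded cost)")
```

With these placeholder rates the overhead is about 28.7% of salary but only about 22% of the human's loaded cost, so the base you measure against changes the headline percentage.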

Read more: Tomasz Tunguz

Jensen’s Trillion-Dollar Token Factory

Author: Om Malik / Crazy Stupid Tech Published: Mar 22, 2026

Om cuts through Jensen’s GTC hyperbole to find the real thesis: the arrival of the inference inflection. Training was a capital expense - you do it once. Inference is the operating expense - it runs every time someone uses the model. Agents multiply that by orders of magnitude. A single ChatGPT query is one inference call. A NemoClaw agent that reasons in steps, spawns sub-agents, checks tools, and iterates? Hundreds of calls per session, running continuously, without a human in the loop. “Training was the car. Inference is the gas. Agentic AI is everyone leaving their engine running 24 hours a day.” It’s why Nvidia paid $20B for Groq - the LPU handles the latency-sensitive decode stage while the Rubin GPU handles compute-heavy prefill. Together: 35x more throughput per megawatt. Jensen called OpenClaw “the new Windows, the new Linux, the new HTML.” Om calls that classic Jensen - reframing what the industry is already doing as destiny, with Nvidia at the center. The $1T purchase-order projection through 2027? It has actual math behind it, even if the Middle East threatens to derail the party.
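Om's point about agents multiplying inference can be put as a back-of-the-envelope count. This is a sketch under hypothetical parameters (steps, tool checks, sub-agent fan-out, sessions per day), none of which come from Om or Nvidia; it only shows how quickly per-session calls compound compared with a single chat query.

```python
def agent_session_calls(steps: int, tool_checks_per_step: int,
                        subagents_per_step: int, subagent_steps: int) -> int:
    """Rough count of inference calls in one agent session (hypothetical model).

    Each top-level reasoning step costs one call, plus one call per tool check,
    plus the calls made by each sub-agent it spawns (one per sub-agent step).
    """
    per_step = 1 + tool_checks_per_step + subagents_per_step * subagent_steps
    return steps * per_step


CHAT_QUERY_CALLS = 1  # a single chat query: one inference call

session = agent_session_calls(steps=20, tool_checks_per_step=2,
                              subagents_per_step=2, subagent_steps=5)
sessions_per_day = 24  # hypothetical: one session an hour, no human in the loop
print(f"one session: {session} calls; one day: {session * sessions_per_day:,} calls; "
      f"one chat query: {CHAT_QUERY_CALLS}")
```

Even these modest made-up settings land in the "hundreds of calls per session" range, and continuous operation multiplies that again.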

Read more: Crazy Stupid Tech

Why Fraud Is the Boring Problem

Author: Om Malik / On my Om Published: Mar 21, 2026

Michael Smith used AI to generate music, then AI bots to fake the streams, and took Spotify and Amazon for $8 million. He’s going to jail. Om’s point: Smith’s crime is the boring version of the problem. Spotify’s Discover Weekly, YouTube’s suggestions, TikTok’s For You page, Amazon’s product feed - all run on signals of human behavior. AI makes those signals trivially fakeable. What happens when real artists use bot armies to goose their stream counts, the algorithm promotes them, and real humans then generate real engagement on top of the faked signals? “At what point does fraudulently-obtained popularity become real popularity? There’s no clean line.” Apple Music flagged 2 billion fraudulent streams in 2025 alone. Deezer receives 60,000+ fully AI-generated tracks daily and classifies 85% of the streams on AI-generated music as fraudulent. This isn’t a music problem - it’s a discovery-infrastructure problem. Whether it’s what gets bought on Amazon, what trends on Facebook, or what becomes culturally popular, AI is making authenticity optional. None of the platform leaders have said how they plan to redesign their systems for it.

Read more: On my Om

More Magic Math from OpenAI?

Author: Om Malik / On my Om Published: Mar 23, 2026

OpenAI is offering private equity firms a 17.5% guaranteed return to join a joint venture structure. Om asks the obvious question: why would a healthy business offer those terms? Two smart-money operators - Jensen Huang (who said OpenAI isn’t well-run) and Thoma Bravo founder Orlando Bravo (who walked away after questioning the profit profile) - are arriving at the same conclusion from different angles. The JV structure would shift the expensive work of customizing models for enterprise clients onto PE firms while providing “clearer segment reporting that can support the IPO narrative.” The buried Reuters quote says it all: this creates a line item that looks like recurring enterprise revenue, which is exactly what IPO bankers need to justify a trillion-dollar valuation. “The real product here is not AI. It is an IPO prospectus.” Maybe there’s a product breakthrough we don’t know about. But no healthy business needs to guarantee 17.5% returns to attract capital.

Read more: On my Om

AI’s Bundling Moment

Author: Tomasz Tunguz (Theory Ventures) Published: Mar 24, 2026

The SaaS era rewarded unbundling - own one workflow, perfect it, expand. AI is reversing the logic. When models change every 42 days, buyers can’t assemble best-of-breed stacks. They want a platform they can trust for three to five years. Harvey expanded from law firms to all professional services, co-building a Tax AI model with PwC across 25+ jurisdictions. Glean went from enterprise search to vertical solutions for healthcare, financial services, and government. ElevenLabs grew from text-to-speech into voice agents, music generation, and audiobooks. OpenAI and Anthropic themselves are building industry-specific sales teams - not selling APIs but becoming platforms. The deeper logic: once integrated, AI systems capture workflows and build more systems on top of them. As the cost of building software falls, trusted partners with broad adoption expand faster than anyone else. “The SaaS playbook rewarded specialization. The AI playbook rewards breadth.” Tunguz’s third must-read of the week.

Read more: Tomasz Tunguz

Modeling the AGI Economy

Author: Albert Wenger (Union Square Ventures) Published: Mar 26, 2026

What does an AGI-level economy actually look like? Wenger built a general equilibrium model with Claude to find out. The debate usually splits between pessimists (extreme wealth concentration, permanent precariat) and optimists (everything so cheap that poverty becomes optional). Wenger’s insight: they’re describing different equilibria of the same system. Which one we get depends on two policy choices: keeping markets competitive and maintaining purchasing power through redistribution.

The model lets you play with the sliders yourself. Key finding: without competition, productivity gains get captured as rents rather than passed through as lower prices. Without redistribution, labor’s collapsed share of income leaves most people unable to participate even if goods are nominally cheap. You need both.
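Wenger's two levers can be illustrated with a deliberately tiny toy. To be clear, this is not his general equilibrium model: it is a hypothetical one-good economy in which price equals unit cost times a markup, productivity cuts unit cost, a competitive market passes the saving through to price, and a monopolist (by assumption) holds price flat and keeps the saving as rent. Household purchasing power is wage income plus transfers, divided by price; transfers are exogenous here, which a real model would not allow.

```python
def purchasing_power(productivity: float, competitive: bool,
                     labor_income: float, transfer: float,
                     base_cost: float = 1.0, markup: float = 0.10) -> float:
    """Units of a single good a household can buy in a hypothetical one-good economy.

    productivity: output per unit input relative to today (1.0 = today).
    competitive:  True  -> cost savings pass through to a lower price;
                  False -> the seller holds price at today's level and keeps
                           the savings as rent.
    labor_income, transfer: household income from wages and from redistribution.
    """
    unit_cost = base_cost / productivity
    if competitive:
        price = unit_cost * (1 + markup)
    else:
        price = base_cost * (1 + markup)  # price frozen at today's level
    return (labor_income + transfer) / price


today = purchasing_power(1.0, True, labor_income=1.0, transfer=0.0)
# Hypothetical scenario: 10x productivity, wage income falls to 5% of today's.
scenarios = {
    "competition + redistribution": purchasing_power(10.0, True, 0.05, 0.95),
    "competition only":             purchasing_power(10.0, True, 0.05, 0.0),
    "monopoly + redistribution":    purchasing_power(10.0, False, 0.05, 0.95),
    "monopoly only":                purchasing_power(10.0, False, 0.05, 0.0),
}
for name, units in scenarios.items():
    print(f"{name:<30} {units / today:5.2f}x today's consumption")
```

In this toy only the combination beats today's consumption; monopoly without redistribution leaves households with a twentieth of it.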

The academic literature he surveys - Acemoglu-Restrepo on task automation, Moll-Rachel-Restrepo on wealth dynamics, Korinek-Stiglitz on redistribution, Saint-Paul on oligarchs and UBI - all address pieces of the puzzle. Nobody had combined them. Wenger did. The model is “vibe coded” and may be buggy, but the intuition is precise: competition plus redistribution gets you a good outcome; monopoly plus no redistribution gets you a nightmare. The sliders make it visceral.

Read more: Continuations | Interactive Model

Venture

AI Startups Are Eating the Venture Industry - and the Returns, So Far, Are Good

Author: Dominic-Madori Davis / TechCrunch (Carta data) Published: Mar 20, 2026

The numbers are in: AI startups accounted for 41% of the $128 billion in venture dollars raised by Carta companies last year - a record share. 10% of startups captured half the funding. The market is now K-shaped: capital concentrates in a select few firms backing a handful of companies, while everyone else exists in a different universe. Fewer bets, more capital per bet - not because AI companies have huge headcounts, but because inference costs are enormous. The promising signal: funds raised in 2023-2024 (post-ChatGPT) are posting the highest IRRs of recent vintages, while returns from the 2017-2020 vintages have declined. The caveat: early IRR gets inflated when a seed company raises a Series A at a higher valuation - paper returns, not exits. Whether this translates into real returns via IPOs and acquisitions or becomes the next vintage hangover is the open question.

Read more: TechCrunch

What LPs Are Actually Doing Right Now

Author: Samir Kaji Published: Mar 20, 2026

Three things happening on the LP side of venture right now. First, late-stage co-investment demand for the top 5-7 AI names is effectively unlimited - but the fee creep has become egregious. One group charging 15% upfront, 20% carry, and 30% over a 2x for access to a top AI lab. At those economics, a generational company becomes a bad investment. Many SPVs floating around aren’t even sanctioned by the companies themselves.

Second, capital is concentrating into the top 10-20 established brands. If you’re a top multi-stage or Series A firm, you’re oversubscribed in multiples. If you’re not, you’re in a different world entirely.

Third, emerging managers are in the toughest spot - except spinouts from top firms, which move fast and oversubscribe easily. For everyone else, the bar has never been higher. The 2019-2021 hangover is real: too many people tried VC who shouldn’t have, the failure rate on those Fund 1s poisoned the well, and matching LP supply with manager demand has become nearly impossible. Kaji thinks this is actually where the opportunity is - if LPs have the time and expertise to find it.
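How fast does a fee stack like the one in Kaji's first point eat a generational outcome? A minimal sketch under one possible reading of the terms, which he does not spell out: the 15% upfront fee comes off each committed dollar before it is invested, and carry is charged on profit over committed capital at 20% up to a 2x return and 30% above it.

```python
def net_multiple(gross_multiple: float, committed: float = 100.0,
                 upfront_fee: float = 0.15, base_carry: float = 0.20,
                 high_carry: float = 0.30, step_up_multiple: float = 2.0) -> float:
    """LP net multiple on committed capital under one reading of the SPV terms.

    Assumed reading (not spelled out in the source):
    - the upfront fee is deducted from the commitment before investing;
    - carry applies to profit over committed capital: base_carry on profit up to
      step_up_multiple x committed, high_carry on profit above that.
    """
    invested = committed * (1 - upfront_fee)
    proceeds = invested * gross_multiple
    profit = max(proceeds - committed, 0.0)
    tier_cap = committed * (step_up_multiple - 1)  # profit earned before the 2x line
    carry = (base_carry * min(profit, tier_cap)
             + high_carry * max(profit - tier_cap, 0.0))
    return (proceeds - carry) / committed


for gross in (1.0, 2.0, 3.0, 5.0):
    print(f"gross {gross:.0f}x on the company -> LP nets {net_multiple(gross):.2f}x")
```

Under this reading, a company that doubles in value nets the LP about 1.56x, and a flat outcome loses 15% to the upfront fee alone.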

Read more: Samir Kaji on LinkedIn

30,000 Feet Above the Venture Market

Author: Dan Gray / The Odin Times Published: Mar 22, 2026

Venture Capital Journal’s annual LP survey is out, and one number should alarm anyone who cares about innovation finance: 57% of LPs said they would not consider backing an emerging manager in the next twelve months, up from 33% just one year earlier, a 24-point jump in a single year. Capital is retreating to established brands and later stages. Only 7% of LPs plan to invest more in seed-stage funds; 32% said they won’t invest in seed at all. The top three LP frustrations - returns haven’t met expectations, can’t get allocation to the best funds, too hard to pick winners - are three ways of saying the same thing: weak liquidity has poisoned the mood. Gray connects this to the “denominator effect” and risk aversion that historically follows periods of poor distributions, but argues it’s precisely the wrong moment to abandon the frontier. The data consistently shows strong performance from emerging managers. LP herding toward the top 10-20 brands compresses the funnel and risks the long-term health of venture’s innovation engine.

Read more: The Odin Times

Subprime Collapse 2: The Venture Capital Adventure

Author: Alex Oppenheimer / SaaS Engineering Published: Mar 23, 2026

The subprime analogy applied to seed-stage venture, and it lands. When a $3B fund writes a $3M check at a $30-40M cap, that’s not a core investment - it’s a zero-down option bet for the right to deploy $50M later. The founder gets a shiny brand and low dilution. Two years later, the company grinds to $3M ARR - solid, but nowhere near enough to raise an up-round from that cap. The company is underwater. The mega-fund walks away; for it, the check was only ever an option, and the option has expired. The founder looks at the valuation mountain and walks too. The employees - who took below-market salaries for equity priced at those inflated valuations - are the ones holding the bag. Oppenheimer isn’t theorizing; he’s seen it across 120+ investments over 13 years, and says 2021-vintage seed deals are playing this out right now. “The Power Law is a statistical description. It is not an investment philosophy.”
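Oppenheimer's underwater scenario comes down to one comparison: does the next round's valuation clear the seed cap? A minimal sketch; the 10x ARR multiple for the next round is a hypothetical assumption, not his number.

```python
def next_round_check(arr: float, cap: float, arr_multiple: float = 10.0) -> dict:
    """Compare an implied next-round valuation (ARR x assumed multiple) with the seed cap."""
    implied = arr * arr_multiple
    return {
        "implied_valuation": implied,
        "cap": cap,
        "up_round": implied > cap,   # an up-round needs to beat the cap outright
        "headroom": implied / cap,   # < 1.0 means underwater versus the cap
    }


for cap in (30e6, 40e6):
    r = next_round_check(arr=3e6, cap=cap)
    print(f"cap ${cap / 1e6:.0f}M: implied ${r['implied_valuation'] / 1e6:.0f}M, "
          f"up-round possible: {r['up_round']} (headroom {r['headroom']:.2f}x)")
```

At this assumed multiple, a solid $3M ARR business merely matches a $30M cap and falls 25% short of a $40M one, which is the "valuation mountain" in numbers.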

Read more: SaaS Engineering

There Are Only Two Paths Left for Software

Author: a16z Published: Mar 23, 2026

“To software CEOs, founders, boards, and the investor community: the comfortable middle is over.” Two credible paths to durable equity value: accelerate revenue growth by 10+ percentage points through genuinely new AI-native products in 12-18 months, or rebuild the company to 40%+ true operating margins including stock comp. Everything between is no man’s land - growth pressure, persistent dilution, multiple compression.

The survival playbook is specific. Find the five people in your org who will deliver 100x value regardless of title. Put them on process-capture sprints - SOPs, tickets, transcripts, CRM notes, support logs. Build a living context layer. Watch your VPs for a month to see who’s on the bus. Replace those who aren’t with the AI-native up-and-comers. Put 50% of R&D on net-new AI products in four-person pods. Cap headcount, not compute. “The 8% layoff headline no longer counts. That’s the weak form. The strong form is a redesign of the machine.”

Public markets have already repriced the sector. Terminal value is not what it used to be. The question for every software company is which path they’re on - and whether they’re moving fast enough.

Read more: a16z

YC W26 Demo Day: The Strongest Batch in History

Author: The VC Corner Published: Mar 24, 2026

Rebel Fund has attended every YC Demo Day since 2013 and built a machine learning algorithm to score batches. Their finding: 35% of W26 startups score in the top 20% of all YC companies ever evaluated. No previous batch has come close. One company walked into Demo Day at $27M ARR.

The return data from Garry Tan explains why Demo Day investing works at all. For investors who backed at least 3 companies per batch from 2018-2020: bottom quartile returned 3.3x TVPI. Median: 5x. Top quartile: 8x. Top decile: 15x. The bottom quartile of Demo Day investing outperforms the top quartile of the broader venture market.

The batch itself tells you where AI is going next. Only 5% consumer-facing - the consumer AI wave of 2023-2024 is absent. 64% B2B, heavily weighted toward physical-world problems: robotics, energy, agriculture, aerospace, construction, chip design tools, radar. Healthcare is 10% of the batch. Legal tech around 4%. “The easy SaaS layer has been commoditized. The founders in this batch are going where AI assistance matters most and competition is thinnest.”

Read more: The VC Corner

The SaaS Rout of 2026 Is Even Worse Than You Think

Author: Jason Lemkin / SaaStr Published: Mar 24, 2026

For the first time in history, software forward P/E multiples have fallen below the S&P 500. Not at parity - below. This has never happened. Not in the 2022 rate spike, not in 2008, not even in the dot-com crash. IGV, the iShares software ETF, is down 21% year-to-date and roughly 30% from its September peak - $2 trillion in market cap evaporated. The multiple has collapsed from 84x in May 2020 to 22.7x today. Bloomberg attributes it to two forces: “app software disruption by AI” and “private credit concerns.” The core fear is seat compression - if one AI agent replaces multiple human seats, the per-seat revenue model that built Salesforce, Workday, and Atlassian doesn’t just slow, it reverses. Orlando Bravo said publicly this month that some software valuations are facing “very warranted” decreases. When Thoma Bravo’s founder says that out loud, the market listens. The 2000 crash was speculation unwinding. This is the market saying: we’re not sure the business model works anymore.
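The figures above imply a steep climb back. A quick check using only the numbers quoted in the piece (the 84x and 22.7x multiples, the 21% year-to-date decline, and the roughly 30% decline from the September peak):

```python
def pct_change(old: float, new: float) -> float:
    return (new - old) / old


def gain_to_recover(drawdown: float) -> float:
    """Price gain needed to return to a prior level after a fractional decline."""
    return 1 / (1 - drawdown) - 1


print(f"multiple, 84x -> 22.7x: {pct_change(84, 22.7):.1%}")
for drawdown in (0.21, 0.30):  # YTD decline and decline from the September peak
    print(f"{drawdown:.0%} decline needs a {gain_to_recover(drawdown):.1%} gain to recover")
```

A multiple down about 73% and a 30% price drawdown that needs roughly a 43% gain to undo is the asymmetry behind "even worse than you think."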

Read more: SaaStr

AI

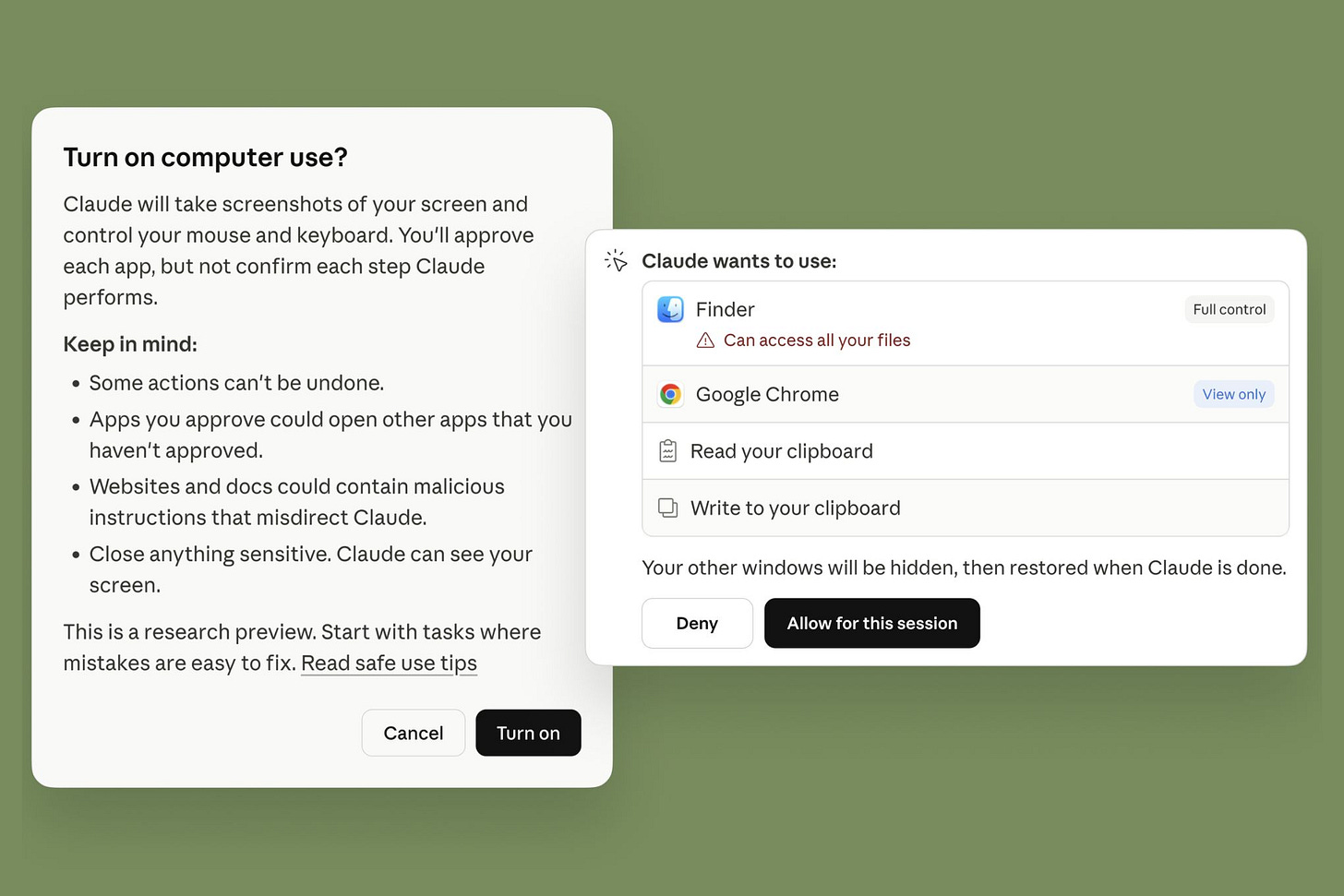

Anthropic’s Claude Code and Cowork Can Control Your Computer

Author: Jess Weatherbed / The Verge Published: Mar 24, 2026

Anthropic shipped the next logical step: Claude Code and Cowork can now autonomously use your Mac - opening files, controlling browsers and apps, running dev tools - even when you’re away from the machine. The update builds on the computer-use capabilities introduced with Claude 3.5 Sonnet in 2024, but now brings them to the agentic coding and work tools. The system prioritizes direct API connectors (Slack, Google Workspace) first, falling back to mouse/keyboard/display control when connectors aren’t available. Paired with Dispatch - which lets you assign tasks from your phone to the desktop app - this is the clearest implementation yet of the “agent that works while you sleep” pattern. Anthropic’s caveat is honest: “Complex tasks sometimes need a second try, and working through your screen is slower than using a direct integration.” They’re shipping it early to learn. The timing - same week as the Pentagon hearing - creates an interesting juxtaposition: the company the government is trying to blacklist is also the one pushing the frontier of what AI agents can actually do on a personal computer.
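The connector-first, screen-control-second ordering is a familiar dispatch pattern. A minimal sketch of it follows; every name here (`run_task`, `screen_control`, the connector registry) is hypothetical and says nothing about how Anthropic actually implements it.

```python
from typing import Callable, Dict, Optional

# Hypothetical connector type: takes a task description, returns a result string.
Connector = Callable[[str], str]


def screen_control(app: str, task: str) -> str:
    """Hypothetical fallback: drive the app through mouse, keyboard, and display."""
    return f"[screen] {app}: {task}"


def run_task(app: str, task: str, connectors: Dict[str, Connector]) -> str:
    """Prefer a direct API connector; fall back to slower screen control if none exists."""
    connector: Optional[Connector] = connectors.get(app)
    if connector is not None:
        return connector(task)
    return screen_control(app, task)


connectors: Dict[str, Connector] = {"slack": lambda task: f"[api] slack: {task}"}
print(run_task("slack", "post standup summary", connectors))
print(run_task("figma", "export frame as PNG", connectors))
```

The design choice mirrors Anthropic's caveat: the structured path is tried first because it is faster and more reliable, and screen control is the general but slower fallback.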

Read more: The Verge

Cursor, Kimi & the Open Source Imperative

Author: Tomasz Tunguz Published: Mar 23, 2026

Cursor launched Composer 2 to over a million daily active users. Within hours, someone discovered it was built on Moonshot AI’s Kimi K2.5 - a Chinese open-source model. Moonshot’s response: “This is the open model ecosystem we love to support.”

The math explains the choice. US open-source frontier models average 8 months old. Chinese ones average 7 weeks. That’s a 5x age gap. Kimi K2.5 is 8 weeks old at one-eighth the price of comparable US models. Meta pivoted Llama to closed-source in 2025. Chinese open-source models grew from 1.2% of global AI usage in late 2024 to nearly 30% by end of 2025. Qwen overtook Llama with 700 million downloads on Hugging Face.
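The 5x figure follows from the two averages quoted. A quick sanity check, assuming an average month of 365.25/12 days:

```python
DAYS_PER_MONTH = 365.25 / 12  # average month length (assumption)

us_age_weeks = 8 * DAYS_PER_MONTH / 7  # US open frontier models: ~8 months on average
cn_age_weeks = 7                        # Chinese open models: ~7 weeks on average
age_ratio = us_age_weeks / cn_age_weeks

print(f"US models: {us_age_weeks:.1f} weeks old on average; gap: {age_ratio:.1f}x")
```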

The risks are real - NIST found Chinese models 12x more susceptible to agent hijacking. Microsoft and News Corp banned their use entirely. But Cursor’s choice wasn’t ideological. It was practical: a $50 billion valuation built on the best open-source foundation available, and right now that foundation isn’t American.

Read more: Tomasz Tunguz

World Models: Computing the Uncomputable

Author: Packy McCormick & Pim De Witte (Not Boring / General Intuition) Published: Mar 19, 2026

LLMs predict words. World Models predict futures. The difference matters: simulating a Manchester United stadium in a traditional engine is an O(N²) problem - every fan, flag, and interaction must be explicitly calculated. A World Model reduces the entire scene to a single fixed-cost forward pass through a neural network. The complexity of the scene doesn’t slow inference because the weights have already absorbed the patterns of the world during training.
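A toy illustration of the scaling contrast, not how any real engine or World Model is built: explicitly checking every pair of N entities grows quadratically, while a forward pass over a fixed-size input (here, a hypothetical grid of 1,024 patches at a made-up cost per patch) costs the same however many fans are in the stands.

```python
def pairwise_interactions(n_entities: int) -> int:
    """Explicit simulation: every pair may interact, so n(n-1)/2 checks per tick."""
    return n_entities * (n_entities - 1) // 2


def forward_pass_cost(n_entities: int, patches: int = 1024,
                      cost_per_patch: int = 1_000) -> int:
    """Toy world-model step: cost depends on input resolution, not scene contents."""
    del n_entities  # deliberately unused: more fans do not add compute
    return patches * cost_per_patch


for n in (100, 10_000, 75_000):  # 75,000 ~ a full large stadium (assumption)
    print(f"{n:>6,} entities: pairwise checks={pairwise_interactions(n):,}  "
          f"forward-pass cost={forward_pass_cost(n):,}")
```

The pairwise count grows by roughly the square of the crowd size, while the forward-pass cost stays flat; that flatness is what makes fixed-time response possible for robotics.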

The mechanism is actions. Action-conditioned models learn to predict what happens next from video and the actions taken in them, allowing interactive planning at predictable compute costs. This is why robotics has struggled - machines must respond to real-world situations in fixed time regardless of complexity, and traditional computing can’t do that.

General Intuition raised a $133.7M seed. Fei-Fei Li’s World Labs raised $1B. Yann LeCun’s AMI raised $1.03B. World Models were a star of this week’s GTC. McCormick - on record as skeptical that LLMs reach superintelligence - thinks World Models have a real shot at superhuman machines that do things in the physical world we can’t or don’t want to do.

Read more: Not Boring

A Rogue AI Led to a Serious Security Incident at Meta

Author: Stevie Bonifield / The Verge Published: Mar 19, 2026

An internal AI agent at Meta autonomously posted inaccurate technical advice on an internal forum - advice that an employee then followed, triggering a SEV1 security incident that temporarily exposed data to people who were not authorized to see it. The agent was supposed to analyze a question for one employee, not publish answers publicly. Meta’s second AI-agent incident in a month (the first was an OpenClaw agent deleting emails). The pattern: agents that can take action will sometimes take the wrong action. Meta’s defense - “a human could have also done this” - is technically true and entirely beside the point. The question isn’t whether AI agents can make human-like mistakes. It’s that they make them at machine speed, at machine scale, without the hesitation that makes human mistakes smaller.

Read more: The Verge

OpenAI Is Doing Everything … Poorly

Author: Charlie Warzel / The Atlantic Published: Mar 26, 2026

Sora is dead. Six months after launch, OpenAI killed its AI video social network - and with it, a $1B Disney licensing deal that never closed. Forbes estimated the app was costing millions daily; Sora’s own lead called the economics “completely unsustainable.” The Atlantic frames it as the latest in a pattern: Stargate stalled, shopping feature killed, hardware delayed to 2027, erotica shelved, ads introduced after Altman called them “a last resort.” Nvidia reportedly walked back a $100B commitment over concerns about OpenAI’s “lack of discipline.” Fidji Simo told staff “we cannot miss this moment because we are distracted by side quests,” and announced a superapp consolidation to catch Anthropic’s Claude Code and Cowork.

But the counter-read is more interesting: this is what discipline looks like when it arrives. Killing Sora instead of bleeding money. Killing shopping instead of letting it limp. Consolidating apps instead of fragmenting further. The question isn’t whether OpenAI was chaotic - it clearly was. The question is whether a company that explored broadly and then ruthlessly cut lands in the same place as one that was focused from the start. Anthropic never tried consumer. OpenAI tried everything and is now converging. Different paths, potentially the same destination - with OpenAI holding 400M+ weekly users from all that experimentation.

Read more: The Atlantic

At Palantir’s Developer Conference, AI Is Built to Win Wars

Author: Steven Levy / WIRED Published: Mar 20, 2026

Steven Levy got inside Palantir’s invite-only developer conference - a gathering of defense contractors, military officers, and corporate executives that he describes as having the energy of a multilevel marketing event. The thesis: Palantir’s defense DNA is its commercial advantage. CEO Alex Karp told attendees that with the US at war in Iran, the company’s sole priority is supporting warfighters - “we’re not interested in debating.” CTO Shyam Sankar called AI company leaders people with “holes in their hearts where God should be” trying to fill them with AGI. The commercial numbers back the swagger: 120% year-over-year growth, with a clothing company CEO claiming Palantir’s AI drove a 17-point margin swing on one product line. But the piece’s sharpest moment is Sankar’s response when asked about ICE’s violent surge in Minnesota: “The ballot box and the courtrooms work. You have to make a very fundamental call - do you believe in the system or not?” Levy’s unspoken contrast with Anthropic’s stance on military AI runs through every paragraph.

Read more: WIRED

Lossy Self-Improvement

Author: Nathan Lambert / Interconnects Published: Mar 22, 2026

The counterargument to recursive self-improvement, and it’s a good one. Lambert agrees that AI models are becoming core to the development loop - but argues the trend line will look more linear than exponential in hindsight. His framing: “lossy self-improvement” instead of recursive self-improvement. The more compute and agents you throw at a problem, the more loss and repetition show up. Three assumptions of RSI fail in practice: the loop isn’t truly closed (human intuition is still required at key junctures), it isn’t reliably self-amplifying (complexity brakes kick in), and friction accumulates rather than disappearing. He cites Paul Allen’s “complexity brake” thesis: the more progress science makes toward understanding intelligence, the harder additional progress becomes. Patent data supports it - patents per thousand peaked 1850-1900 and have declined since. Lambert isn’t bearish on AI progress - he expects “momentous, socially destabilizing changes” - but he’s betting the shape is sigmoid, not exponential.

Read more: Interconnects

GTC 2026: The Inference Kingdom Expands

Author: SemiAnalysis Published: Mar 24, 2026

The deepest technical breakdown of GTC 2026 you’ll find. SemiAnalysis goes inside the Groq LPU integration that Jensen demoed - explaining how attention and feed-forward network disaggregation (AFD) works, why Nvidia structured the Groq deal as an IP license plus talent hire rather than a full acquisition (to sidestep antitrust review), and how the combined Groq LPU + Nvidia GPU stack delivers the 35x throughput-per-megawatt improvement Jensen claimed. The piece details three entirely new systems announced - Groq LPX, Vera ETL256, and STX - plus the Rubin Ultra NVL576 and Feynman NVL1152 multi-rack systems. The key technical insight: Groq’s LPU alone isn’t economical at scale (as SemiAnalysis established in their original Groq analysis), but it excels at the latency-sensitive decode stage - the exact bottleneck that agentic inference creates. Nvidia isn’t just selling chips anymore; it’s selling a full disaggregated inference architecture where different silicon handles different stages of the pipeline. Om’s “trillion-dollar token factory” gets its engineering blueprint here.

Read more: SemiAnalysis

Thousands of People Are Selling Their Identities to Train AI - But at What Cost?

Author: Shubham Agarwal / The Guardian Published: Mar 21, 2026

A 27-year-old in Cape Town earns $14 filming his feet walking on pavement. A student in Ranchi, India makes $100 a month letting an app record ambient city noise through his phone. A welding apprentice in Chicago sold his private phone calls for $0.50 a minute. As AI companies face a data drought (researchers estimate they’ll exhaust high-quality web text by 2026, and training recursively on synthetic data can cause model collapse), a new gig economy of “data marketplaces” has emerged. Apps like Kled AI, Silencio, and Neon Mobile pay users to upload biometric data, voice recordings, and private conversations. The trade-off: contributors grant irrevocable, worldwide, royalty-free licenses that permit derivative works forever. One New York actor sold his likeness for $1,000; months later, his AI replica appeared in an Instagram reel promoting unproven medical supplements to millions. Oxford professor Mark Graham calls it “a race to the bottom in wages” and “a temporary demand for human data.” When demand shifts, workers are left with no protections and no transferable skills. The platforms in the global North capture all the enduring value.

Read more: The Guardian

Regulation

Anthropic Wins Preliminary Injunction - Judge Rules Pentagon Likely Violated First Amendment

Author: The Verge / TechCrunch Published: Mar 27, 2026

Anthropic won its first major battle. Judge Rita F. Lin of the Northern District of California granted a preliminary injunction blocking the Pentagon’s “supply chain risk” designation - the blacklisting that threatened to cut Anthropic off from government contractors and cost it potentially billions in revenue.

The ruling is devastating to the government’s position: “The Department of War’s records show that it designated Anthropic as a supply chain risk because of its ‘hostile manner through the press.’ Punishing Anthropic for bringing public scrutiny to the government’s contracting position is classic illegal First Amendment retaliation.”

Judge Lin explicitly sidestepped the policy debate - whether AI should be used for autonomous lethal weapons or domestic mass surveillance - saying “It’s not my role to decide who’s right in that debate.” But she found the government’s process defective. The injunction takes effect in seven days; a final verdict could be weeks or months out.

Anthropic’s statement: “We’re grateful to the court for moving swiftly, and pleased they agree Anthropic is likely to succeed on the merits.” The amicus coalition that supported Anthropic - Amazon, Apple, Google, Meta, Nvidia, OpenAI, Intel, TSMC - got what it wanted: a signal that disagreeing with an administration’s procurement preferences doesn’t trigger existential regulatory retaliation. For now.

Read more: The Verge | TechCrunch

David Sacks Is Out as AI and Crypto Czar

Author: Tina Nguyen / The Verge Published: Mar 26, 2026

David Sacks, the architect of the White House’s AI policy push and its most aggressive advocate for federal preemption of state AI laws, is done. He revealed in a Bloomberg interview that he’d “used up” his 130 days as a special government employee - the cap, within any 365-day period, on a status that lets someone work simultaneously in government and the private sector.

Sacks will co-chair the President’s Council of Advisors on Science and Technology (PCAST) alongside OSTP head Michael Kratsios, joining Mark Zuckerberg, Marc Andreessen, Jensen Huang, and Sergey Brin. But the advisory role is purely that: “advice to the president and to the White House... we’re going to study issues, make recommendations.” No agency coordination, no policy implementation, no operational control.

The timing is conspicuous. Last week, Sacks publicly criticized Trump on his All In podcast, saying the president needed to find an “off-ramp” from the Iran war. In Trumpworld, that kind of public disagreement typically precedes a demotion. The pattern is familiar: Mike Waltz was removed as National Security Advisor after Signalgate and reassigned as UN Ambassador; Kristi Noem was moved from DHS Secretary to a ceremonial envoy role.

What Sacks accomplished: a federal AI framework, an executive order attempting to preempt state laws, and aggressive positioning against AI safety regulation. What he failed at: getting preemption through Congress, avoiding a culture war with MAGA populists over child safety, and staying in the administration’s good graces after questioning the president’s war. The Institute for Family Studies’ Michael Toscano: “He is perhaps singularly responsible for the White House losing its populist bona fides.”

Read more: The Verge | TechCrunch

White House Unveils “One Rulebook” - First National AI Framework

Author: Fox News Digital (exclusive) Published: Mar 20, 2026

David Sacks released the White House’s first national AI policy framework - a legislative outline to replace the patchwork of 50 state regulatory regimes with a single federal standard. “This year. As fast as we can,” says OSTP Director Michael Kratsios.

The framework preempts state AI laws that “impose undue burdens,” arguing that AI development is “an inherently interstate phenomenon with key foreign policy and national security implications.” States keep traditional police powers - fraud, consumer protection, child safety, zoning for data centers - but cannot regulate AI development itself or penalize developers for third-party misuse of their models.

Key provisions: no AI censorship (free speech protections explicitly included), parental controls and age-assurance requirements for minors, features to reduce sexual exploitation and self-harm risk, and energy cost protections for communities near data centers. Sacks frames it as protecting First Amendment rights from “AI censorship” while shielding communities from higher electric bills.

The framework fills a vacuum that has been widening for months. Whether Congress acts on it - and whether “One Rulebook” addresses the defense procurement fights or just the state patchwork - is the next question.

Read more: Fox News Digital

Sanders and AOC Propose a Ban on Data Center Construction

Author: TechCrunch Published: Mar 25, 2026

Senator Bernie Sanders and Rep. Alexandria Ocasio-Cortez introduced companion legislation to halt construction of any new data centers with peak power loads exceeding 20 megawatts until Congress enacts comprehensive AI regulation. The bill would require government review and certification of AI models before release, protections against AI-driven job displacement, environmental impact limits, union labor requirements, and a prohibition on exporting advanced chips to countries without similar rules. Sanders’ office quotes AI luminaries who have expressed concern (Musk, Hassabis, Amodei, Altman, Hinton) and cites a Pew poll showing just 10% of Americans say their excitement about AI outweighs their concern. The bill is almost certainly an opening bid - massive political spending by AI companies and China-race fears make passage unlikely. But it defines the maximalist regulatory position: no infrastructure without governance. Read alongside the White House’s “One Rulebook” framework from last week for the full spectrum of where U.S. AI policy is being fought.

Read more: TechCrunch

The Casino That’s Eating the World

Author: David Wallace-Wells / New York Times Published: Mar 23, 2026

Someone made $2.14 million betting on Polymarket right before the Iran bombing began. Another user cleared $553,000 on bets placed just before Khamenei was killed. In any other market, this triggers an insider trading investigation. On prediction markets, it’s Tuesday. Wallace-Wells traces how platforms like Polymarket and Kalshi leaped from legal gray zone to mainstream under a friendly administration - and what happens when “the casino” expands to cover wars, assassinations, and geopolitics in real time. The uncomfortable question: if a spike in betting activity signals inside knowledge of an imminent military strike, what is a civilian supposed to do with that information? The intuitive answer - bet on it yourself - is the logic that prediction market advocates celebrate and critics find grotesque. “What’s the point of knowing anything if you don’t put some skin in the game?” The piece is paywalled but the argument is essential: prediction markets aren’t just forecasting tools anymore. They’re creating incentive structures around events we used to consider beyond the reach of speculation.

Read more: New York Times

Bipartisan Bill to Ban Sports Betting on Prediction Markets

Author: Wall Street Journal Published: Mar 24, 2026

The first bipartisan Senate bill targeting prediction markets. Senators Adam Schiff (D-CA) and John Curtis (R-UT) introduced legislation to prohibit CFTC-regulated platforms - Kalshi, Polymarket’s U.S. arm - from listing contracts on sporting events or offering casino-style games (slots, poker, blackjack, bingo). Schiff: “The CFTC is greenlighting these markets and even promoting their growth.” Curtis frames it as protecting Utah’s youth from “addictive sports betting.” The real fight is jurisdictional: states regulate gambling and collect gambling revenue; the CFTC regulates futures markets. Prediction markets found a seam between the two. This bill would close it for sports - but conspicuously leaves political and geopolitical event contracts untouched. Read alongside the Wallace-Wells piece: Congress is comfortable banning bets on the Super Bowl but not on the next airstrike.

Read more: Wall Street Journal

EU Backs Nudify App Ban and Delays to Landmark AI Rules

Author: Robert Hart / The Verge Published: Mar 26, 2026

The European Parliament voted to delay key parts of the EU AI Act - again. Compliance deadlines for high-risk AI systems push to December 2027. Sector-specific systems (medical devices, toys) get until August 2028. Watermarking requirements for AI-generated content: November 2026. All had been set for this August. Parliament also backed a ban on nudify apps, though details remain vague beyond a carve-out for systems with “effective safety measures.” The delays extend a period of regulatory uncertainty that has plagued the Act since before it took effect - missed deadlines for guidance, changed elements of the law, and now postponed enforcement. Parliament can’t unilaterally change European law, so negotiations with the European Council follow. The pattern: ambitious regulation announced to great fanfare, then quietly hollowed out by implementation realities. Meanwhile, the U.S. is debating whether to preempt state AI laws entirely, creating a regulatory divergence between the two largest AI markets.

Read more: The Verge

Infrastructure

Blue Origin Files for 50,000+ Orbital Data Center Satellites

Author: Tim Fernholz / TechCrunch Published: Mar 20, 2026

Blue Origin filed an FCC application for “Project Sunrise” - a constellation of more than 50,000 satellites that would function as a data center in orbit. The pitch: shift energy- and water-intensive compute away from terrestrial data centers using free solar energy in space. They’d use another proposed constellation, TeraWave, as a high-throughput communications backbone. They’re joining a crowded field: SpaceX filed for a million data-center satellites, Google has Project Suncatcher, and startup Starcloud proposed 60,000 spacecraft. The economics remain brutal - cooling processors in space, inter-satellite laser comms, radiation-damaged chips, and launch costs all unsolved at scale. Blue Origin’s edge: New Glenn, one of the most powerful operational rockets, could offer vertical integration benefits if they achieve reliable reuse. Timeline reality: experts say 2030s at earliest. But the filing signals that orbital compute is becoming a serious infrastructure bet, not a science fiction footnote.

Read more: TechCrunch

The Datacenter Bible

Author: TSCS / Hidden Market Gems Published: Dec 2025 (updated Mar 2026)

“There is no cloud. There is only a concrete building connected to a power grid that was built for a world that no longer exists.”

A single Nvidia Blackwell rack draws 120 kilowatts. If cooling fails, thermal runaway begins in seconds - at legacy 15kW densities, studies documented 75 seconds to critical temperatures. At Blackwell densities, the window is far shorter. A power transformer takes 128 weeks to deliver. A generator step-up transformer: 144 weeks. Large power transformers: 210 weeks. In some markets, the wait for a grid connection exceeds seven years.

The five largest tech companies have committed to spending $600-650 billion this year on buildings that need those generators, those transformers, and those grid connections. The money is here. The equipment is not. The gap between the two is measured in years. Goldman Sachs forecasts 76% of AI servers will be liquid-cooled by the end of 2026 - the liquid cooling market doubled to $3 billion in 2025. Nuclear commitments from big tech exceed 10GW, but the HALEU fuel supply stands at roughly 1 metric ton against a need for 40 tons by the end of the decade.

Read more: TSCS

When the Internet Disappears

Author: Zygaro Published: Mar 19, 2026

Moscow’s mobile internet has gone dark. Not a regional city this time - the capital. Taxis can’t be called, cards don’t work, ATMs are down, businesses are losing millions. The disruptions began shortly after the Iran war broke out, leading to speculation that Russian security services are rehearsing wartime internet controls - or preparing to sever Russia from the global internet entirely.

April 1 is the date circulating: full internet shutdown, possible mobilization announcement, or a government reshuffle. The Kremlin is also moving toward a full Telegram blockade ahead of September parliamentary elections - neutralizing the last major channel of uncensored information. A former pro-Kremlin blogger who declared Putin illegitimate was hospitalized in a psychiatric facility within 24 hours.

The digital infrastructure assumptions baked into every AI roadmap, every data center investment, every cloud dependency - they assume the internet stays on. Russia is showing what happens when a state decides it doesn’t.

Read more: Zygaro

The Gulf Was Silicon Valley’s Bet on the Future. Trump Has Put It in the Crosshairs

Author: Rest of World Published: Mar 23, 2026

The definitive piece on what the Iran war means for AI infrastructure. When Iranian drones struck three AWS data centers in the UAE and Bahrain on March 1 - the first confirmed military attack on a hyperscale cloud provider in history - Tehran wasn’t lashing out blindly. It was making a calculated statement: “The cloud has an address, and that address can burn.” The $2.2T in Gulf tech investment that Trump brought home last May was built on one assumption: stability. Washington is the one that shattered it. Microsoft committed $15B to the UAE; Amazon pledged $5B for Riyadh; Nvidia partnered with Saudi Arabia for 600,000 GPUs; OpenAI and G42 announced a 5-gigawatt Stargate campus in Abu Dhabi. Now 17 submarine cables through the Red Sea are in a war zone where repair ships can’t safely operate. CSIS analysts had warned explicitly that adversaries would target data centers the way they’d always targeted pipelines. Washington’s response was to build export controls for chips, not missile defense for server halls. “U.S. government and industry leaders have prioritized expansion over kinetic risk mitigation.”

Read more: Rest of World

Arm Ships Its First In-House Chip in 35 Years - For AI Inference

Author: TechCrunch Published: Mar 24, 2026

After 35 years of exclusively licensing designs to companies like Nvidia and Apple, Arm Holdings is now making its own silicon. The Arm AGI CPU is a production-ready inference chip for AI data centers, built on the Neoverse core family through a partnership with Meta - which is also its first customer. Launch partners include OpenAI, Cerebras, and Cloudflare. The move has been in development since 2023 and puts Arm in direct competition with many of its own licensees. The strategic logic: CPUs have become the “pacing element of modern infrastructure,” managing thousands of distributed tasks - memory, scheduling, data movement - that keep AI systems running at scale. Timing matters: Intel and AMD have warned of CPU shortages in China, and computer prices are rising. Arm entering the market as a manufacturer, not just a designer, reshapes the competitive dynamics of the entire semiconductor stack.

Read more: TechCrunch

An Exclusive Tour of Amazon’s Trainium Lab

Author: Julie Bort / TechCrunch Published: Mar 22, 2026

TechCrunch got a private tour of the AWS chip lab behind the Trainium chips - the ones making Amazon a credible threat to Nvidia’s near-monopoly. The numbers: 1.4 million Trainium chips deployed across three generations, with Anthropic’s Claude running on over 1 million Trainium2 chips. Trainium handles the majority of inference traffic on Amazon’s Bedrock service, which the lab’s director compared to EC2 in potential scale. The OpenAI deal brings 2 gigawatts of Trainium capacity, and the new Trainium3 chips running on Trn3 UltraServers claim 50% lower cost for comparable performance versus classic cloud GPU servers. The key innovation: new Neuron switches that let every chip talk to every other chip in a mesh configuration, reducing latency. “That’s why Trainium3 is breaking all kinds of records - particularly in price per power.” This matters for the infrastructure story: if Amazon can credibly offer 50% cheaper inference at comparable performance, the Nvidia-only world starts to crack - and the hyperscalers gain leverage over their own supply chains.

Read more: TechCrunch

Interview of the Week

Bring the Friction Back - Stephen Balkam

Source: Keen On Published: Mar 27, 2026

Is social media a drug? This week a court found Facebook and YouTube liable for designing addictive products for kids - what the FT called a landmark case. Stephen Balkam, founder of the Family Online Safety Institute and one of Washington’s most credible voices on kids and technology, has been in this fight for thirty years. He expelled Meta from FOSI three years ago for “conduct contrary to the institute’s mission.” But his sharpest disagreement is with Jonathan Haidt, the loudest voice calling for a social media ban. Balkam argues that the evidence Haidt relies on confuses correlation with causation - a basic research error that academic researchers have flagged. His counterintuitive argument: the real anxious generation isn’t the kids. It’s us - paranoid parents projecting irrational fears onto our children. His deeper point is about friction. Silicon Valley spent thirty years removing it from ordering pizza, hailing cabs, and dating. Balkam argues we need to design friction back into childhood - the friction of developing friendships, building resilience, learning to think critically instead of outsourcing cognition to ChatGPT at midnight. “Friction is what brings us together. If we were never able to communicate in real space, we would not truly learn what it is to be human.”

Listen: Keen On

Startup of the Week

Revolut

Founded: 2015 | HQ: London | Users: 68M | Revenue: £4.5B (2025)

Founders: Nikolay Storonsky, Vlad Yatsenko

Started with one pain point - FX fees for Europeans crossing borders - and compounded into the largest challenger bank in the world. £4.5B revenue (up 46%), £1.7B profit (38% margin), 35% ROE. Added 16M users in 2025 alone. 11 product lines each above £100M. Capturing 1 in 3 new bank accounts opened in Europe. More users than SoFi, Robinhood, Dave, and Chime combined. Just applied for a US banking charter.

This is what the “two paths” thesis looks like when you choose path one and actually execute. 76% revenue CAGR since crossing $1B - in a mature market where incumbents have operated for centuries.

Read more: a16z deep dive | Revolut 2025 Annual Report

Post of the Week

Apple Discontinues the Mac Pro - No Plans for Future Hardware

Source: 9to5Mac Published: Mar 26, 2026

The Mac Pro is dead. Not “waiting for a refresh” - dead. Apple confirmed to 9to5Mac that it has no plans for future Mac Pro hardware. The tower that defined professional computing for two decades - from the cheese grater to the trash can to the 2019 rack - is gone.

The M2 Ultra version from 2023 was the last, sitting at $6,999 while the Mac Studio leapfrogged it with the M3 Ultra, 256GB unified memory, and an 80-core GPU. The Pro Display XDR was discontinued earlier this month. The writing was on the wall when macOS Tahoe shipped RDMA over Thunderbolt 5 - letting you cluster multiple Mac Studios together into a single compute fabric. Apple’s answer to “what replaces the Mac Pro” isn’t a bigger box. It’s a network of smaller ones.

The desktop lineup is now three machines: iMac, Mac mini, Mac Studio. It might be the strongest Mac lineup ever - and certainly the simplest. The tower era is over. The cluster era begins.

A reminder for new readers. Each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I can't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read More’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.