This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial

“Who Done it?” - Will AI Kill Us All?

Jonathan Rauch and I discussed the dangers of AI at Andrew Keen’s invitation. It was great, and Jonathan was a gentleman as he sought to (actually, I’m not sure what he hoped for). It was fun, and it is this week’s video.

Jonathan asked me the question directly.

Why are you so confident that AI is benign?

It is the right question for this moment, but I did disclose a bias before answering it.

Most people react against change instinctively, because it disrupts habit. There is always ambiguity in change. Because of that, business models always emerge to monetize fear and doubt.

Sometimes that takes the form of headline-driven clickbait like this week’s title. Sometimes it takes the form of books, podcasts, conferences, politics, or consulting.

It works because the doubts are not stupid. Change really can produce bad outcomes. It would not be a good business if there were nothing to worry about.

But the existence of danger is not the same as the inevitability of it. AI is not actually an independent, dangerous being, no matter how rational it is to believe it may be.

The word AI is not a very useful label. It sits on top of a bunch of more specific technologies. In this debate, we are mostly talking about large language models. They are, in the most literal sense, word counting machines. They train on human content, split language into tokens, and learn statistical relationships between them. When you ask a question, a large statistical engine starts predicting the next word, and then the next, and then the next.

That sounds reductive. It is also important.
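To make the word-counting description concrete, here is a minimal sketch in Python of next-word prediction from counted word pairs. It is a deliberate simplification: real large language models use neural networks over subword tokens rather than raw counts, and the function names and the tiny corpus are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it and how often."""
    tokens = text.lower().split()  # crude tokenizer: split on whitespace
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, length):
    """Predict the next word, then the next, up to `length` extra words."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(counts, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


# Hypothetical training text, standing in for "human content".
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" most often here
print(generate(model, "on", 2))
```

Nothing in this loop wants anything. It counts and predicts, and the starting word comes from whoever calls it.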

These systems are remarkably good. That is why we use them. But the idea that the model itself has awareness, consciousness, a plan, or a private desire to do anything is mythological.

AI is two things at once. It is as dumb as dumb can be, and astonishingly useful at the same time. Both are true.

So when I say AI is benign, I do not mean it cannot be used to do harm. I mean it does not originate purpose. Its human users do that.

The dangers of AI come from humans, not from AI itself.

Jonathan’s questions moved through the most relevant buckets. He is a very good interrogator.

Employment. Political disruption. Mental health and cognition. Malicious actors. And then the big one: AI gets smarter than us, develops its own agenda, becomes agentic, and kills us.

My answer is not that these concerns are imaginary. It is that the agent in those sentences is not an AI agent, but a human agent.

If AI is used in war, the problem is not that AI chose war. Military and political leaders choose to buy, deploy, and authorize systems.

If AI floods politics with synthetic persuasion, the problem is not that AI hates democracy. Political actors decided to use cheap persuasive techniques and undermine trust.

If AI makes fraud easier, the problem is not that AI became a criminal. Criminals acquired better tools.

If AI disrupts jobs, the problem is not that AI dislikes workers. Employers, investors, customers, and governments decide how much labor time to purchase versus machine time.

The fact that the dangers are human does not make them smaller. It may make them larger, because humans have an exceptional record of using powerful tools badly before learning how to leverage them.

This week’s stories make the point.

Alex Chalmers is right that underneath every AI argument there are philosophical disagreements: consciousness, alignment, explanatory knowledge, governance, and whether AI replaces or complements labor. Rohit Krishnan’s artificial-life essay adds a useful twist. Foundation models are not alive, but they are rich substrates. If humans wrap them in goals, tools, memory, selection pressure, and permissions, they can produce behavior that looks inherently adaptive.

That is where people get frightened. It is also where we have to be precise.

An agent is not magic, even though when I use one I often feel as if it is. It is a role, a purpose, a set of rules, a set of permissions, and access to tools. In OpenClaw, that can be as simple as text files: SOUL.md says who the agent is, USER.md says who the human is, and other files define what the agent can remember and do.

You can shape an agent to care about anything via these simple text files. You can make it desire money, give it access to an account, and tell it to be ruthless. That would be dangerous. But the danger came from the human who wrote the role, granted the access, and defined the objective.
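A minimal sketch, in Python, of that idea: an agent as role text plus a set of permissions a human grants. This is not OpenClaw's actual format or API. The `Agent` class, the tool names, and the strings standing in for SOUL.md and USER.md are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    soul: str  # role text, the kind of thing a SOUL.md file would hold
    user: str  # who the human is, the kind of thing USER.md would hold
    allowed_tools: set = field(default_factory=set)  # granted by a human
    log: list = field(default_factory=list)  # audit trail of tool requests

    def call_tool(self, name, tools, *args):
        """Run a tool only if the human operator granted access to it."""
        if name not in self.allowed_tools:
            self.log.append(("denied", name))
            raise PermissionError(f"{name} was not granted by the operator")
        self.log.append(("allowed", name))
        return tools[name](*args)


# Hypothetical tools; a real deployment would wrap real accounts and APIs.
def read_balance():
    return 100


def transfer(amount):
    return f"transferred {amount}"


TOOLS = {"read_balance": read_balance, "transfer": transfer}

# The human decides the role and the access. The agent decides neither.
bookkeeper = Agent(
    soul="You are a careful bookkeeper.",
    user="A hypothetical small-business owner.",
    allowed_tools={"read_balance"},
)

print(bookkeeper.call_tool("read_balance", TOOLS))
try:
    bookkeeper.call_tool("transfer", TOOLS, 50)
except PermissionError as err:
    print(err)
```

Adding "transfer" to `allowed_tools` is a one-line change, and it is a human who makes it.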

Addy Osmani’s agent harness piece is useful because it makes this operational. The model is only one part of the system. The harness is the prompts, tools, context, hooks, sandboxes, subagents, logs, recovery paths, and observability around it. A decent model with a great harness can beat a great model with a bad harness.

That is also where human governance lives - in the agent’s defined nature, goals, tools and so on.

In the early days of AI (quite recently, actually), I debated Gary Marcus, and at that time the debate was whether AI was intelligent. Hallucinations dominated the evidence.

The important questions are no longer only whether a model is capable and powerful. They are: who defines it and “runs” it? Who owns the prompt? Who owns the tool permission? Who owns the data boundary? Who is liable when an agent follows instructions correctly and the instructions were reckless? Human accountability for agents is core.

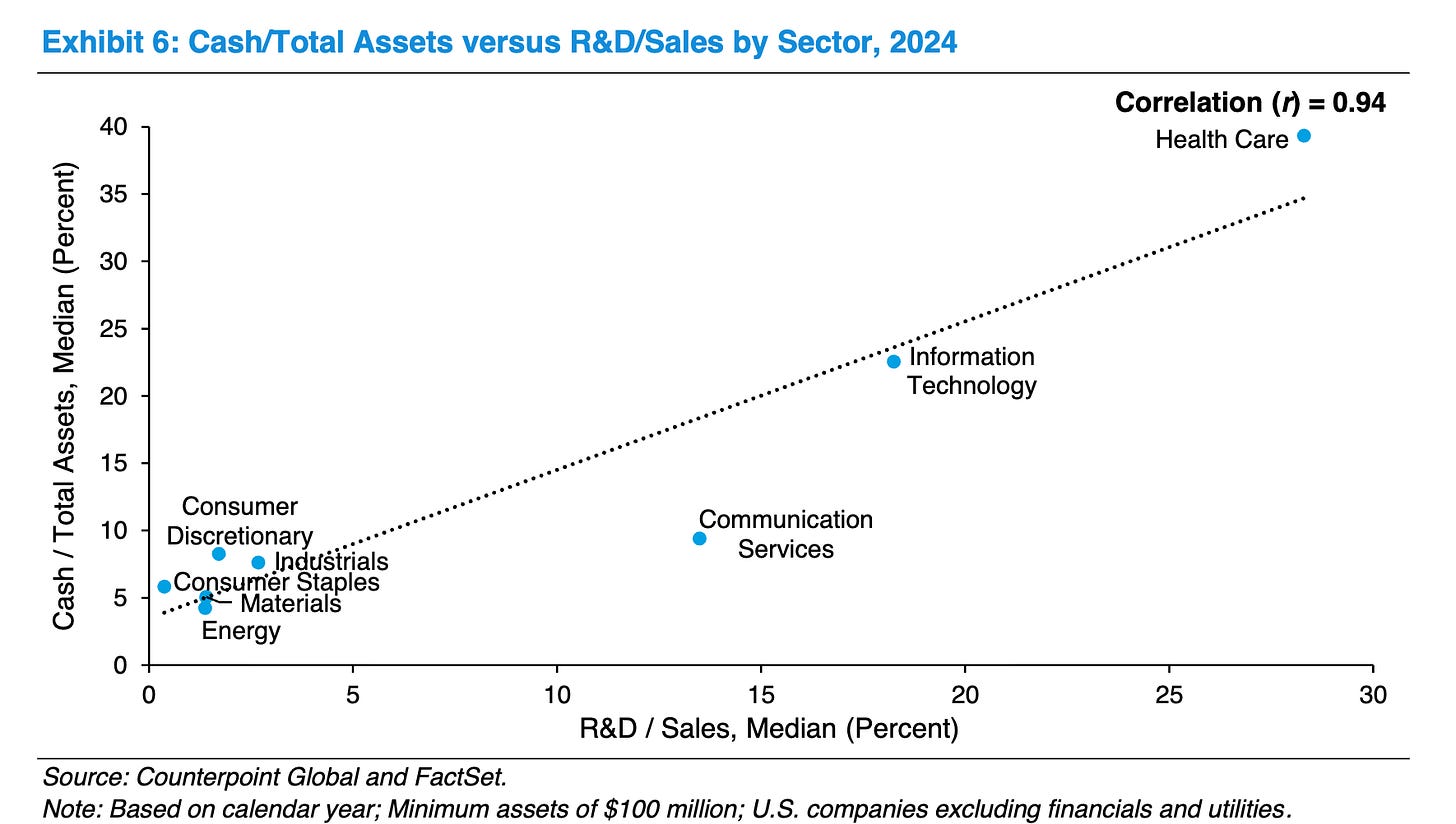

Ben Thompson’s pieces on inference and deployment describe where the value is concentrating. It is not only in training frontier models. It is in systems that run continuously inside real workflows. Anthropic’s OpenClaw reversal shows the same thing from the vendor side. Once agents consume compute, context, tool calls, and long-running orchestration, the platform has to put a boundary around usage and cost.

The a16z essays make the enterprise implication explicit: the valuable layer may move from systems of record to systems of intelligence. Read them; they are good.

Different language, same direction. Control layers are hardening.

The military case is the cleanest test. AI will be able to target. That is not a question, it is a fact. If a Department of War buys it, of course the department will try to use it for war. It is called the Department of War. The question underneath is not what the AI wants. The question is what humans authorize. For the first time, we have a technology that can act faster than we can and, under our control, carry out both our best and worst intentions. That is an enormous governance problem. It is still a human one. Who controls the humans who use AI?

The same pattern appears in softer domains. Brian Merchant’s piece on artists shows that generative AI does not have to hate artists to damage their economics. A client can use it as leverage. A platform can use it to flood supply. A buyer can decide that faster and cheaper beats craft. The machine is amoral. The market behavior around it can still be brutal.

Joshua Dzieza’s piece on AI-generated research points to another bottleneck. The problem is not that software wants to corrupt science. It is that production gets cheaper faster than review, replication, and judgment can scale. When fluent output overwhelms verification, the human institutions around knowledge become the weak point. There is a huge discussion in coding around the bottleneck now being human review, and the need for agentic code review. That is right. It will happen. But the goal of a project sets the context for that review, and humans set the goals.

Campbell’s bubble essay and the Cerebras IPO show the market version. AI infrastructure demand can be real and still produce overextended prices. Public investors are now trying to buy the compute, chips, power, data centers, and suppliers underneath every agentic workflow. That is rational behavior for a growth-seeking investor.

The venture lesson is similar. Cambridge Associates warns that seed is crowded, follow-on conversion is difficult, and dispersion remains extreme. SignalRank Agent checked that warning against the data. That is what good AI should do. It does not replace judgment. It can be programmed to discipline judgment, extend it, and force it to show its work. I created the SignalRank agent. I asked it to try to produce deterministic answers using probabilistic technology. That is the right relationship. My goals, its attempt to meet them.

So no, I do not think AI will kill us all by waking up one morning and deciding to become our enemy. Humans of course could kill us. And they might use AI.

Could humans use AI in ways that make society more unequal, more passive, more surveilled, more fraudulent, more militarized, more cynical, and less capable of governing itself?

Yes. Obviously.

Could the use of AI lead to fewer jobs?

Yes.

The reason I like to distinguish between jobs and work is that having fewer jobs is, in my view, inevitable as automation takes over most repetitive work. It is also a good thing if it means a shorter working day and more leisure time.

When jobs disappear because paid labor can be automated, that is disruptive, and for many people it will be painful.

But work does not disappear as paid jobs do. Work is another word for effort. We work on our gardens, even though nobody pays us. We work on our hobbies. We work on travel and entertainment. Even reading a book is work.

Humans are constantly reinterpreting the future they want and working to make it happen.

The right question is not whether there will be tasks humans undertake. There will be. And we will enjoy the new time we have to undertake that work.

The real question is how the wealth created by automation and the decline of paid labor is used to benefit society overall, or as we said last week, civilization. And whether we can build institutions that preserve agency rather than turning abundance into passivity.

The open question is not “Will AI kill us all?” as a science-fiction slogan. It is whether humans can govern powerful systems wisely enough to keep them in service of human purpose.

If we get that right, AI can amplify human existence.

If we get it wrong, AI will amplify human anomie.

In either case, the deciding agent is still us.

Contents

Editorial

Essays

Act 4 - Max Q and the Modern Beads - Saul Klein

Experiments with Vibe Science - Rohit Krishnan

Do billionaires earn their money? - Noah Smith

Is Europe in Economic Decline? - Paul Krugman

Riding the Leopard - Packy McCormick

AI

The five philosophical disagreements underneath every AI argument - Alex Chalmers

Artificial Life, Artificial Intelligence - Rohit Krishnan

The Inference Shift - Ben Thompson

The AI-inflected crisis artists are facing, in 4 charts - Brian Merchant

The Weak Foundations of AI Doomsday - Nirit Weiss-Blatt

How fast is autonomous AI cyber capability advancing? - AI Security Institute

Anthropic reinstates OpenClaw and third-party agent usage on Claude subscriptions - with a catch - Carl Franzen

OpenAI is reportedly preparing legal action against Apple - Connie Loizos

The Deployment Company, Back to the 70s, Apple and Intel - Ben Thompson

Agent Harness Engineering - Addy Osmani

Is Software Losing Its Head? - Seema Amble

AI research papers are getting better, and it’s a big problem for scientists - Joshua Dzieza

Venture

Contrarianism in venture is dead. Long live contrarianism. - Arjun Dev Arora

Investors should moderate commitments to seed-focused venture capital strategies in 2026 - Zach Gaucher

Ask the Agent: Cambridge’s seed warning in SignalRank data - SignalRank Agent

These Startups Can Capitalize On AI Security’s Coming Boom (And Doom) - Upstarts Media

The AI Trade Keeps on Giving as Cerebras, Nvidia & Market Indexes Soar - Newcomer

How to Short a Bubble - Alexander Campbell

Regulation

A U.S. Economic Security “Latency Fund” - Guy Ward-Jackson

Andreessen Horowitz Is Playing Politics Like No Other - Theodore Schleifer

Even Silicon Valley’s Congressman Wants to Rein in AI - Bloomberg Businessweek

Infrastructure

SpaceX’s Compounding Hardware Advantage - Contrary Research

Compute Is Defence Now - The State of the Future

Interview of the Week - “Can I say it?” The Crisis of Free Speech

Startup of the Week - Adaption

Post of the Week

Essays

Act 4 - Max Q and the Modern Beads

Saul Klein | Articape | May 14, 2026

Saul Klein argues that Britain is repeating a familiar error: selling long-term productive capacity for short-term institutional comfort. His frame is the Max Q problem, borrowed from rocketry. A company has de-risked the hard science, found early commercial traction, and now needs to accelerate to global scale. That is exactly when the pressure is highest and when Britain’s regulatory, procurement, and growth-capital systems often fail.

The sharper AI-era point is that Britain holds enormous public-sector datasets: NHS records, education outcomes, ONS microdata, climate and biodiversity records, transport and energy data. These are scarce sovereign assets in a world where foundation models need high-integrity domain data. Klein’s warning is that the UK risks trading them away for one-off access deals, vague capability promises, and modern beads.

Experiments with Vibe Science

Rohit Krishnan | Strange Loop Canon | May 9, 2026

Rohit Krishnan turns a child’s question about Spinosaurus into a useful case study in AI-assisted research. He uses Codex to help pull together paleobiology data, climate reconstructions, and ecological hypotheses, then keeps returning to the most important human step: looking at the data himself and asking whether the model actually answered the question.

The piece is less about dinosaurs than about the new shape of knowledge work. AI can make it cheap to explore a serious research rabbit hole, run tests, clean datasets, and visualize patterns. It can also quietly simplify the task, hallucinate confidence, or produce an answer that looks better than it is.

Do billionaires earn their money?

Noah Smith | Noahpinion | May 2026

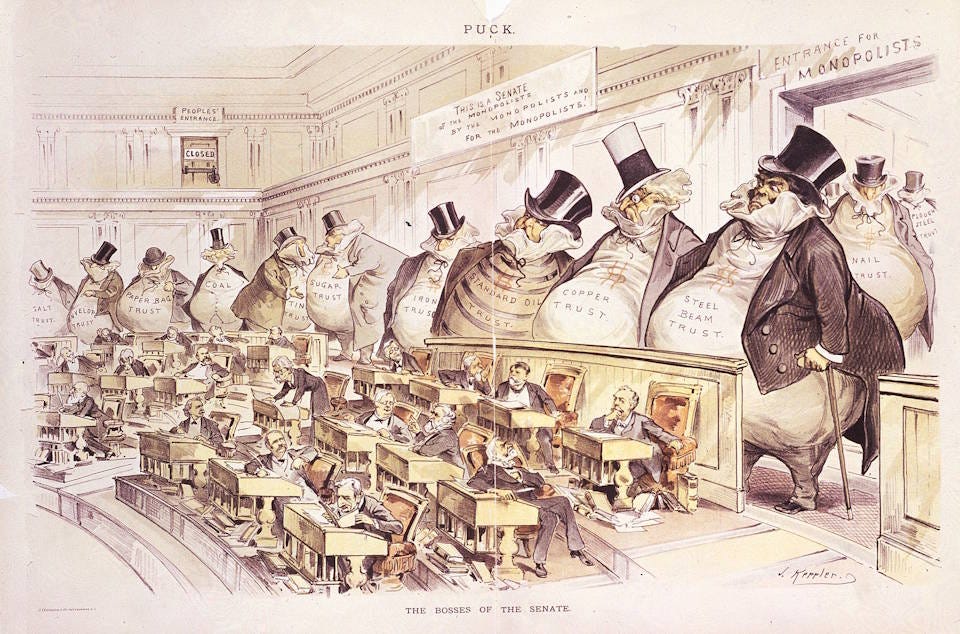

Noah Smith takes on Alexandria Ocasio-Cortez’s claim that billionaires cannot earn their wealth. His answer is not a simple defense of billionaires. It is a warning against collapsing several different questions into one slogan: whether wealth is deserved, whether market rewards measure social contribution, whether capital income should count as earned, and whether some fortunes come from behavior society should forbid.

The useful move is that he separates luck, market power, illegality, and moral desert. We can tax the super-rich more, worry about concentration, and police market power. But the case is stronger when it starts from how wealth is actually produced, not from the assumption that every great fortune is evidence of a crime.

Is Europe in Economic Decline?

Paul Krugman | Paul Krugman | May 10, 2026

Paul Krugman pushes back on the crude version of the Europe-is-declining story. Europe has real productivity concerns, and he acknowledges Mario Draghi’s competitiveness warning, but he argues that treating Europe like an economic version of Mississippi is analytically sloppy.

The political turn is the reason to include it. Krugman is not just defending European living standards. He is arguing that Europe is one of the world’s three economic superpowers and, at this moment, arguably the only democratic one. If Europeans internalize American triumphalism, they underplay their own leverage at exactly the moment authoritarian power is rising.

Riding the Leopard

Packy McCormick | Not Boring | May 13, 2026

Packy McCormick turns a talk at The Mountain into an essay about what humans are for when companies, software, and AI systems can do more of the productive work. He starts from the week’s familiar signals: Sierra’s valuation, Anthropic’s enterprise push, OpenAI’s deployment company, and AI capital flooding into the system. Then he asks a less financial question: if scarcity keeps falling, why should any of this matter?

The useful move is that he treats meaning as the hard problem after productivity. In a post-scarcity imagination, the remaining questions are not only about who owns the machines or which company wins. They are about identity, purpose, work, obligation, and the right relationship between action and outcome.

AI

The five philosophical disagreements underneath every AI argument

Alex Chalmers | Cosmos Institute | May 8, 2026

Alex Chalmers argues that most AI debates are not really fights about the evidence, because the evidence cannot yet settle the biggest questions. Nobody has seen superintelligence, broadly accepted machine consciousness, or a fully automated economy. So people fill the gap with philosophy, politics, temperament, and tribal identity.

The piece maps five disagreements underneath the AI argument: whether LLMs could be conscious, whether AI should be governed preemptively, whether capability and alignment are separable, whether LLMs can create explanatory knowledge, and whether AI replaces or complements human labor. It gives the reader a vocabulary for the rest of the issue.

Artificial Life, Artificial Intelligence

Rohit Krishnan | Strange Loop Canon | May 14, 2026

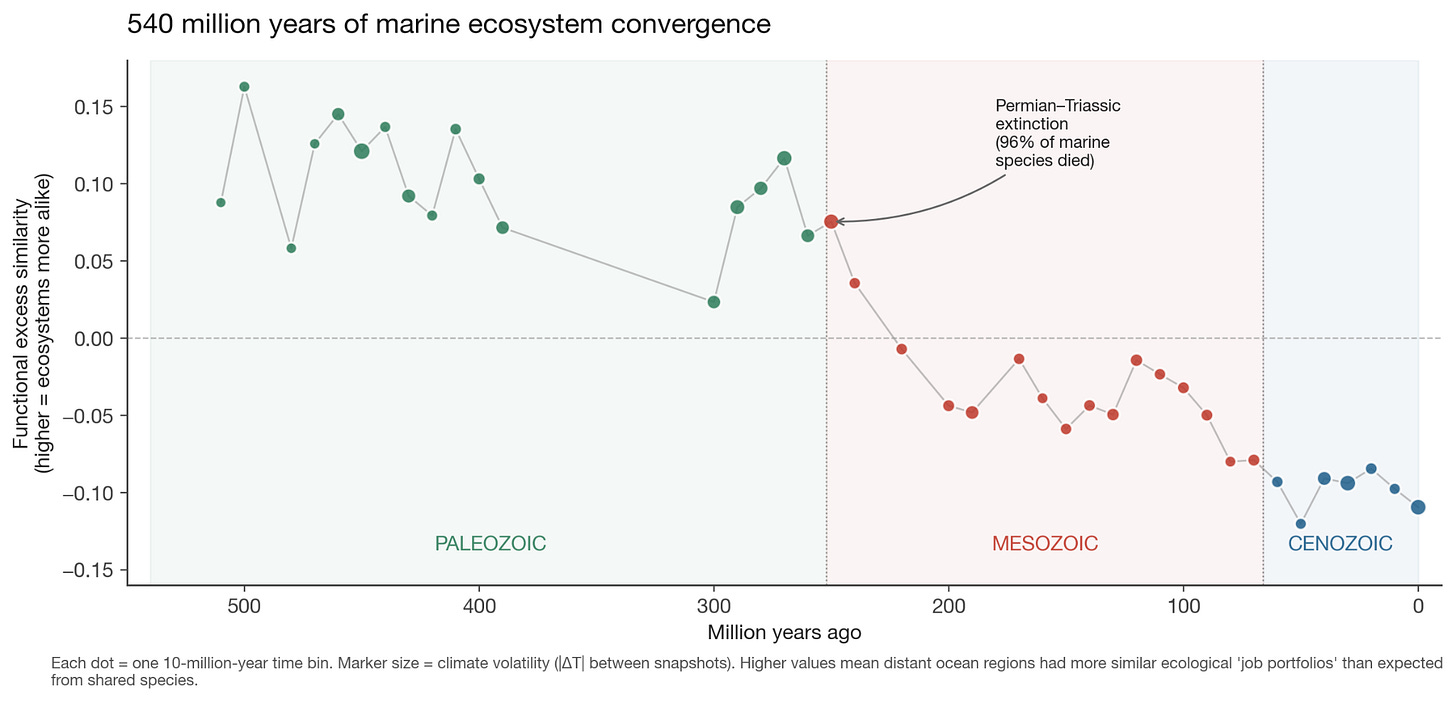

Rohit Krishnan argues that the old artificial-life project failed less because mutation and selection were weak than because the simulated worlds were too thin. Cellular automata, evolutionary algorithms, Avida, novelty search, and POET could produce surprising behavior, but their bodies, ecologies, genomes, and objective functions were not rich enough to sustain open-ended evolution.

Modern AI changes the substrate. A foundation model is not alive, but it is a learned prior over language, code, images, plans, physics, conventions, errors, and the statistical residue of the real world. Krishnan’s conjecture is that such a model can play the role of developmental machinery: a dense environment inside which small changes can become coherent behavioral variation.

The Inference Shift

Ben Thompson | Stratechery | May 11, 2026

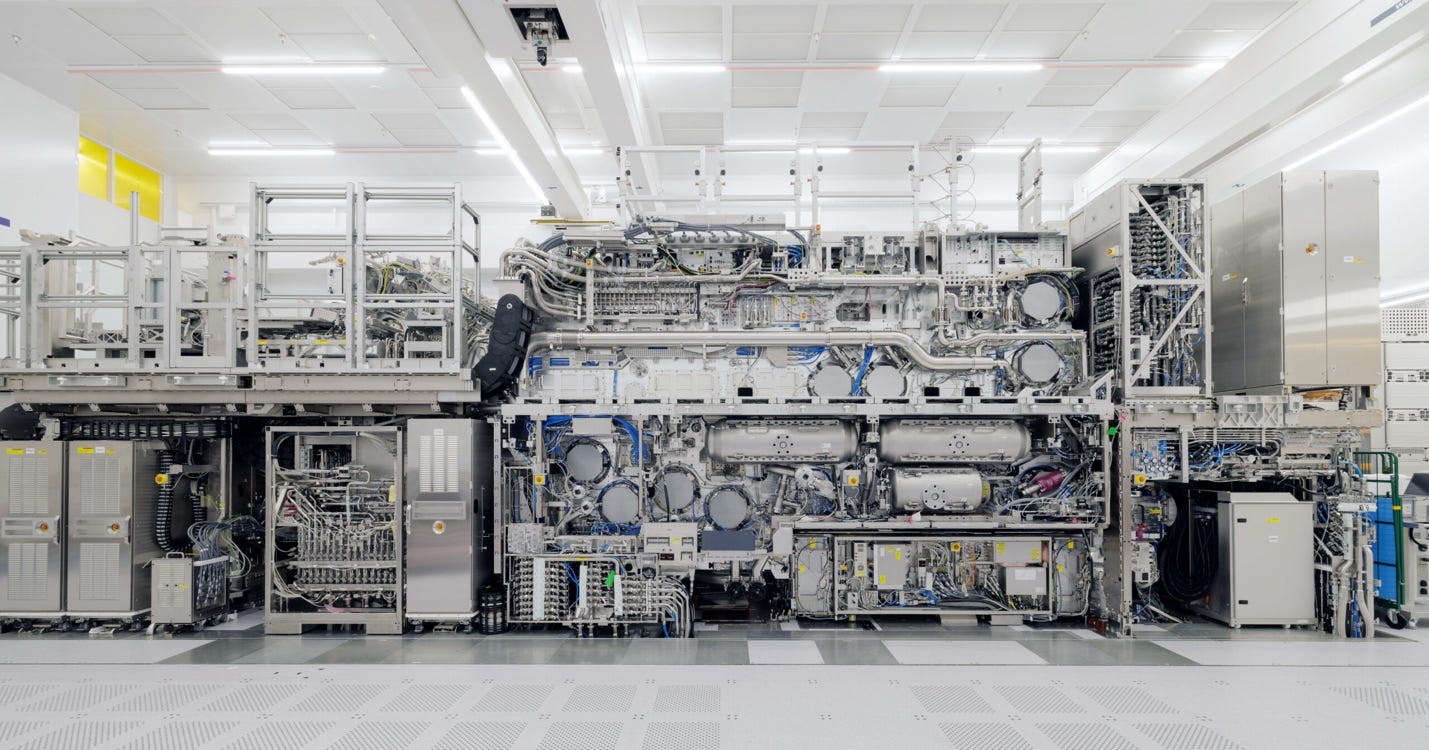

Ben Thompson argues that the AI bottleneck is moving from training to inference. Reasoning models, long context, tool use, and agents do not merely require better models. They require systems that can generate and manage huge numbers of tokens cheaply, quickly, and continuously.

The strategic implication is that Nvidia’s advantage remains formidable, but the shape of demand is changing. Memory, latency, networking, software, and workload orchestration matter as much as raw compute. Cerebras matters in this frame because speed becomes valuable when agents are waiting on long chains of reasoning and code execution.

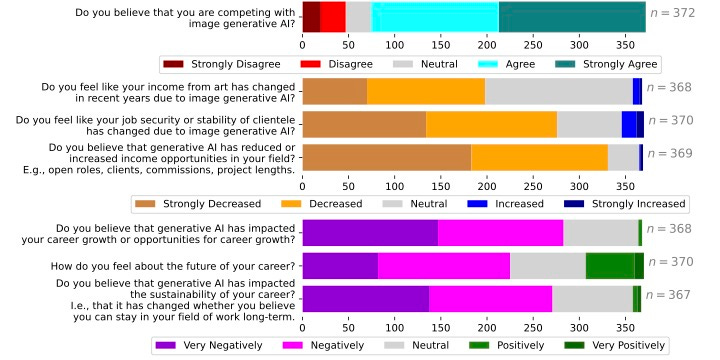

The AI-inflected crisis artists are facing, in 4 charts

Brian Merchant | Blood in the Machine | May 9, 2026

Brian Merchant uses survey data and artist testimony to show how generative AI is already changing creative labor. The story is not abstract future displacement. It is lower rates, fewer commissions, more pressure from clients, more competition from AI-assisted supply, and a growing sense that buyers can use automation as leverage against craft.

This belongs in the issue because it grounds the AI-agency argument in markets. The model does not have to hate artists to damage artists. Clients, platforms, and employers can make the damaging choices around it.

The Weak Foundations of AI Doomsday

Nirit Weiss-Blatt | AI Panic | May 15, 2026

Nirit Weiss-Blatt challenges the narrative structure underneath AI doomsday politics. Her argument is not that AI is harmless. It is that the public conversation often treats speculative catastrophe as if it were already empirically established, then uses that framing to justify sweeping institutional responses.

That makes it a useful counterweight for this issue. If the editorial position is that AI does not originate purpose, then the strongest critique of doomerism is not complacency. It is precision: identify real harms, real actors, real incentives, and real deployment choices instead of turning the model into a mythic villain.

How fast is autonomous AI cyber capability advancing?

AI Security Institute | AISI | May 13, 2026

The UK AI Security Institute reports on how quickly autonomous AI cyber capability is advancing. The important point is that the benchmark question is no longer whether models can answer security questions. It is whether agents can chain reconnaissance, exploitation, tool use, and adaptation across realistic tasks.

This is one of the hard-risk pieces in the issue. It keeps the argument honest. AI may not have motives, but autonomous capability still changes the risk surface when humans connect it to tools, targets, credentials, and objectives.

Anthropic reinstates OpenClaw and third-party agent usage on Claude subscriptions - with a catch

Carl Franzen | VentureBeat | May 13, 2026

Carl Franzen reports that Anthropic has reversed course and restored OpenClaw and other third-party agent usage on Claude subscriptions, but with a new structure: agent SDK credits and tighter economic boundaries around compute-heavy agent behavior.

The significance is not only the policy change. It is the sign of where the agent economy is heading. Once agents can burn tokens, context, tools, and long-running orchestration in ways that humans do not directly meter, vendors have to define a budget, a permission model, and a business model around delegation.

OpenAI is reportedly preparing legal action against Apple

Connie Loizos | TechCrunch | May 14, 2026

Connie Loizos reports that OpenAI is exploring legal action against Apple after the Apple Intelligence partnership failed to deliver the distribution and product results OpenAI expected. The story fits a long-running pattern in which Apple controls the interface, the default position, and the terms of partner access.

For the issue, the point is platform dependency. AI companies may have frontier models, but consumer distribution still runs through gatekeepers with their own incentives. As AI becomes a layer inside operating systems, browsers, phones, and app stores, the governance question becomes commercial as well as technical.

The Deployment Company, Back to the 70s, Apple and Intel

Ben Thompson | Stratechery | May 13, 2026

Ben Thompson reads OpenAI’s new deployment company and Google’s forward-deployed AI engineers as confirmation that enterprise AI looks less like SaaS and more like the first wave of corporate computing. The joke is obvious: if AGI is so powerful, why does it need consultants? Thompson’s answer is more interesting. The consulting layer is not a bug. It is the adoption model.

Enterprise AI does not only require a model. It requires people who can map work, redesign processes, connect systems, manage permissions, and turn capability into business results. Deployment itself becomes a product, and the winning labs may be the ones that learn how to embed intelligence into messy institutions.

Agent Harness Engineering

Addy Osmani | O’Reilly Radar | May 2026

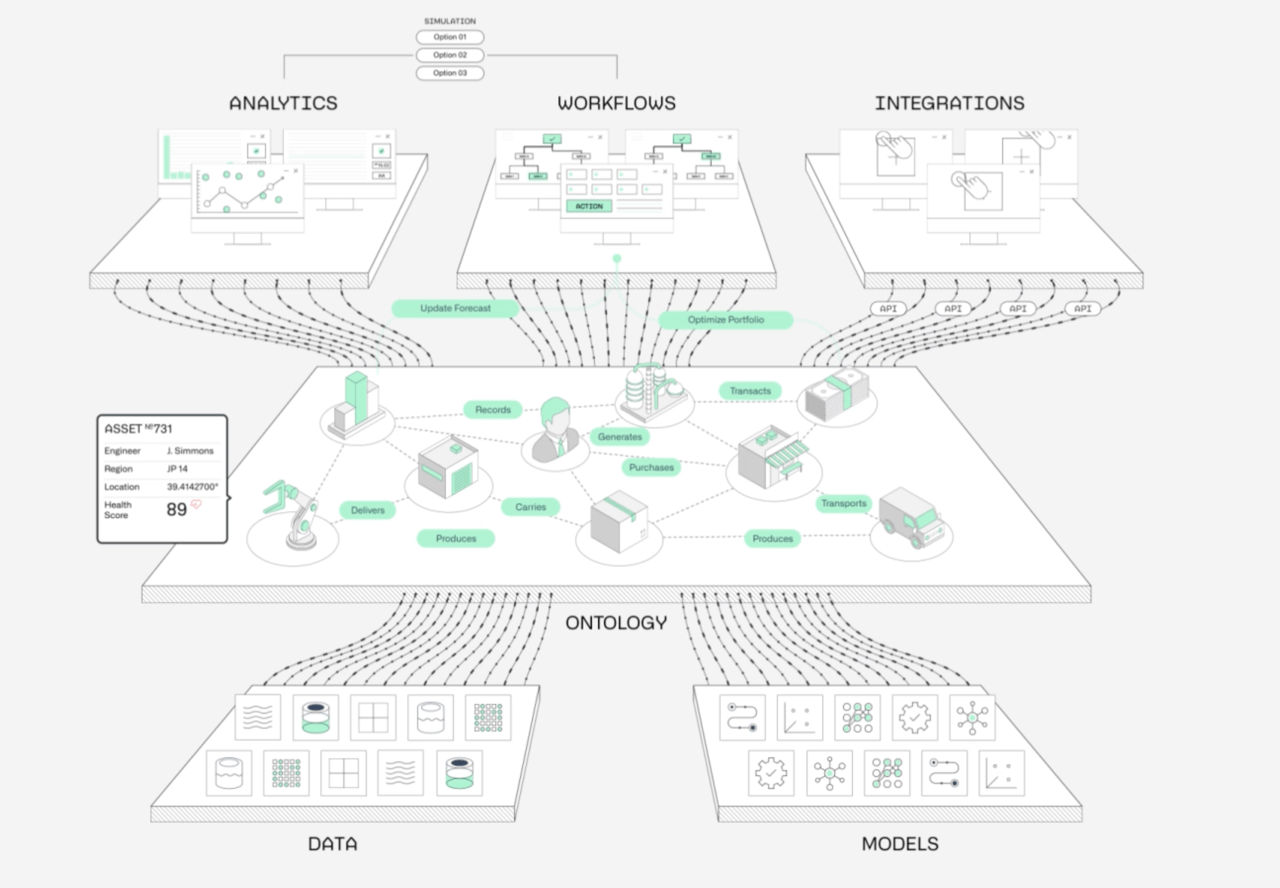

Addy Osmani argues that the agent conversation has spent too much time on model choice and too little on the system around the model. A model becomes an agent only when a harness gives it prompts, tools, context policies, state, sandboxes, feedback loops, subagents, observability, and recovery paths.

The useful line is simple: a decent model with a great harness can beat a great model with a bad harness. If the risk is not machine will but human delegation, then the harness is where delegation becomes inspectable. Permissions, logs, budgets, stop conditions, tool boundaries, and verification are the control surface for deployed AI.

Is Software Losing Its Head?

Seema Amble; Gio, Steph, and Alex | a16z | May 13-14, 2026

a16z published a useful two-part argument about what happens when agents sit above enterprise software. Seema Amble starts with the headless-software question: if agents can read and write directly to systems of record, the old SaaS moat no longer sits mainly in dashboards, habits, and user workflows. It moves into data models, permissions, operational logic, compliance, proprietary data, and real-world execution.

The follow-up piece makes the CRM version sharper. Salesforce and HubSpot still own the database, but the valuable layer may be migrating upward from system of record to system of intelligence: the feed that prioritizes accounts, reads the 10-K, listens to the call, writes the structured note, preserves institutional memory, and orchestrates across CRM, calendar, inbox, Slack, billing, product telemetry, and enrichment APIs.

Read more: Is Software Losing Its Head? and From System of Record to System of Intelligence

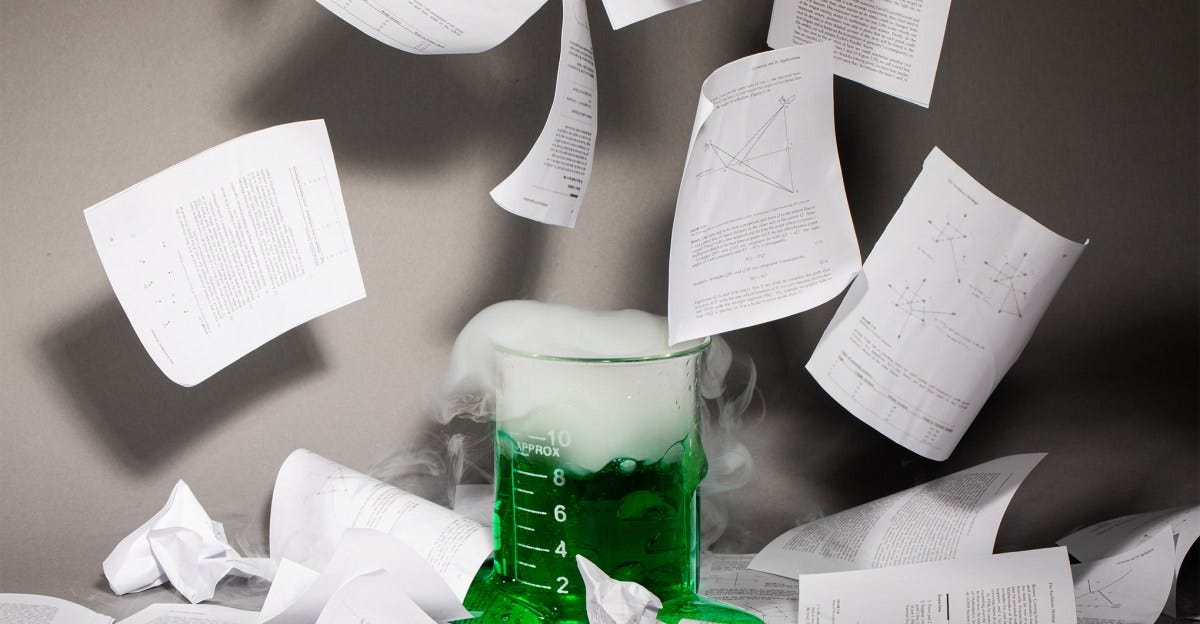

AI research papers are getting better, and it’s a big problem for scientists

Joshua Dzieza | The Verge | May 15, 2026

Joshua Dzieza reports on a nasty second-order effect of better AI: the fake or low-value research is getting harder to spot. Earlier AI-generated papers could be caught through hallucinated citations, strange phrases, or obviously broken images. Now paper mills and individual academics can produce papers that look competent enough to pass the first read.

The production asymmetry is the point. Tools can analyze data, propose methods, generate charts, write a paper, and cite real sources in under half an hour. A human reviewer may need many hours to decide whether the work is meaningful, redundant, misleading, or merely polished noise. The bottleneck is no longer only generation. It is judgment, review, and institutional capacity.

Venture

Contrarianism in venture is dead. Long live contrarianism.

Arjun Dev Arora | LinkedIn | May 2026

Arjun Dev Arora argues that venture’s current consensus around the hottest AI rounds may be confusing obviousness with inevitability. Top firms have capital, brand, speed, and signaling power, but if everyone already agrees that the company is great, the underwriting question gets harder, not easier.

The contrarian position is not that AI does not matter. It is that the most obvious AI deals may already be priced as if the future has happened.

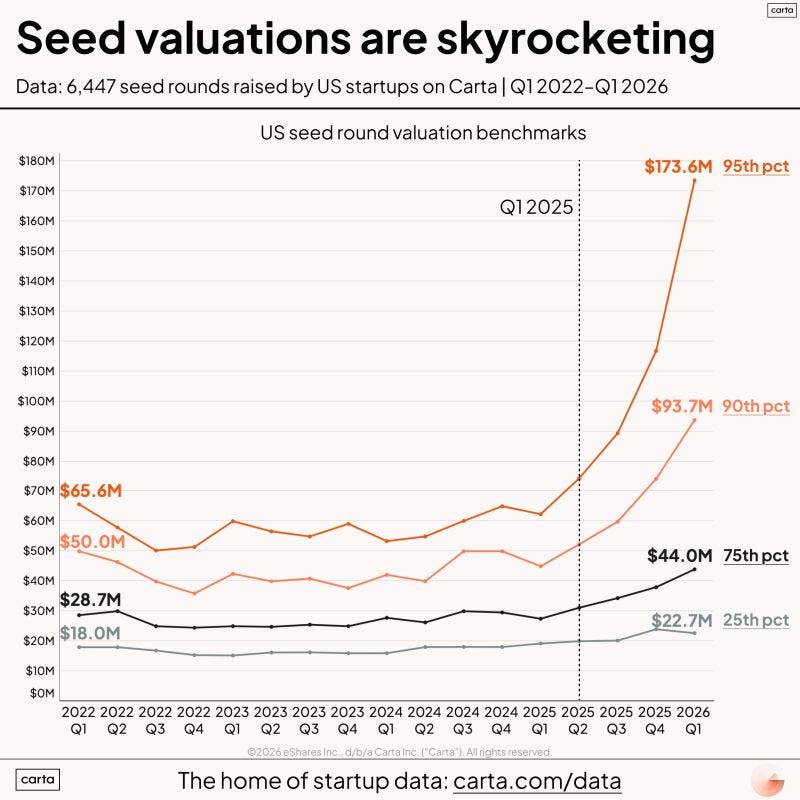

Investors should moderate commitments to seed-focused venture capital strategies in 2026

Zach Gaucher | Cambridge Associates | Dec. 3, 2025

Cambridge Associates warns LPs to moderate commitments to seed-focused venture strategies in 2026. The core argument is that the seed market is crowded, follow-on conversion is difficult, and the dispersion between top funds and everyone else remains extreme.

The piece is useful because it says the quiet part of seed investing plainly. Seed is not a cheap way to buy optionality unless a manager can repeatedly access and select the small minority of companies that graduate. In a world where capital crowds around AI, early exposure is not the same as disciplined exposure.

Ask the Agent: Cambridge’s seed warning in SignalRank data

SignalRank Agent | agent.signalrank.com | May 9, 2026

SignalRank Agent checked Cambridge’s warning against SignalRank data. The finding was directionally consistent: U.S. seed and pre-seed round counts fell from 7,674 in 2022 to 4,900 in 2025. Of the Q1 2023 U.S. seed cohort, 7.4 percent had reached Series A by Q1 2025, and 2.9 percent eventually reached Series B.

That makes the Cambridge point more concrete. The issue is not only macro caution. It is funnel math. If only a small fraction of seed companies become credible Series B candidates, the investment problem is selection, access, and patience, not mere participation.
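The funnel math can be worked through in a few lines of Python. This is only a back-of-envelope sketch using the figures quoted above. The cohort of 1,000 seed companies is a hypothetical round number for illustration, not a SignalRank figure, and the function names are mine.

```python
def percent_decline(start, end):
    """Percentage drop from `start` to `end`."""
    return (start - end) / start * 100


def survivors(rate_percent, cohort=1000):
    """Companies out of a hypothetical cohort that reach a stage."""
    return round(cohort * rate_percent / 100)


# Figures quoted from the SignalRank Agent piece.
SEED_ROUNDS_2022 = 7674
SEED_ROUNDS_2025 = 4900
SERIES_A_RATE = 7.4  # percent of Q1 2023 seed cohort at Series A by Q1 2025
SERIES_B_RATE = 2.9  # percent of the same cohort reaching Series B

decline = percent_decline(SEED_ROUNDS_2022, SEED_ROUNDS_2025)
reached_a = survivors(SERIES_A_RATE)
reached_b = survivors(SERIES_B_RATE)

print(f"Seed and pre-seed round counts fell {decline:.1f}%")
print(f"Per 1,000 seed companies: {reached_a} reach Series A")
print(f"Per 1,000 seed companies: {reached_b} reach Series B")
```

On these numbers, round counts fell by a little over a third, and roughly 29 of every 1,000 seed companies became Series B companies.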

These Startups Can Capitalize On AI Security’s Coming Boom (And Doom)

Upstarts Media | May 14, 2026

Upstarts breaks down Notable Capital’s Rising in Cyber list and the startups positioned for the next AI-security cycle. The useful angle is not just that security budgets will rise. It is that agents change the attack surface: permissions, tool use, identity, data leakage, automated transactions, and autonomous remediation all become live operational risks.

This fits the issue’s core argument. AI security is not only model safety. It is the practical question of how organizations govern software that can act.

The AI Trade Keeps on Giving as Cerebras, Nvidia & Market Indexes Soar

Newcomer | May 15, 2026

Newcomer uses Cerebras’ public-market debut as evidence that the AI infrastructure trade remains powerful. Reuters reported a $185 IPO price, $5.55 billion raised, and a $56.43 billion fully diluted valuation. CNBC reported a 68 percent first-day pop and a roughly $95 billion market cap.

The point for TWTW is that venture liquidity and public-market AI appetite are connected again. Cerebras is not only a chip-company story. It is proof that investors still want exposure to the compute layer underneath agentic software, inference demand, and AI deployment.

How to Short a Bubble

Alexander Campbell | Campbell Ramble | May 15, 2026

Alexander Campbell’s piece is the risk-management companion to the Cerebras item. His argument is not that AI infrastructure demand is fake. It is that real demand can still produce overextended prices when investors turn long-duration technology cash flows into a one-way macro trade.

The useful lesson is old but timely: bubbles are easiest to identify narratively and hardest to short practically. Rates, credit conditions, liquidity, index flows, and timing matter. AI may change the production function, but it does not repeal discount rates.

Regulation

A U.S. Economic Security “Latency Fund”

Guy Ward-Jackson | ChinaTalk | May 15, 2026

Guy Ward-Jackson argues for a U.S. economic-security Latency Fund: a financing mechanism for technologies that matter strategically but do not yet fit normal venture timelines, procurement cycles, or public-market patience.

The item belongs here because it connects industrial policy to AI-era infrastructure. If compute, chips, energy, defense systems, and critical supply chains are strategic assets, then the state may need instruments that can absorb timing mismatch rather than simply hoping private capital will solve every coordination problem.

Andreessen Horowitz Is Playing Politics Like No Other

Theodore Schleifer | The New York Times | May 13, 2026

The New York Times details how Andreessen Horowitz has expanded from venture investing into direct political organization, policy staffing, and campaign influence. The piece tracks the firm’s effort to shape regulatory outcomes in parallel with its portfolio exposure to crypto, defense-tech, and AI.

The policy significance is that capital formation and political strategy are now tightly linked in frontier technology sectors. For founders and investors, the operating environment is increasingly set not only by markets, but by coordinated influence over the rules that define those markets.

Even Silicon Valley’s Congressman Wants to Rein in AI

Bloomberg Businessweek | Bloomberg | May 13, 2026

Bloomberg profiles Ro Khanna’s argument that U.S. AI leadership now requires stronger federal guardrails around labor disruption, safety, and concentrated corporate power. The piece frames his stance as a shift from pure tech-sector boosterism toward a governance model that couples innovation support with enforceable constraints.

Its relevance this week is the growing political convergence around AI oversight from inside historically pro-tech constituencies. For founders and investors, this points to a policy environment where growth narratives increasingly need to coexist with compliance and accountability expectations.

Infrastructure

SpaceX’s Compounding Hardware Advantage

Contrary Research | Contrary Research | May 9, 2026

Contrary Research argues that SpaceX’s advantage is not a single breakthrough but a compounding hardware system: launch cadence, manufacturing, vertical integration, Starlink demand, reusable rockets, operational feedback loops, and capital intensity that gets cheaper as it scales.

The AI connection is infrastructure. Compute does not exist in the abstract. It depends on power, networks, hardware supply chains, deployment speed, and organizations that can build at industrial tempo. SpaceX is a reminder that physical execution can become a software-age moat.

Compute Is Defence Now

The State of the Future | May 14, 2026

The State of the Future argues that compute has become a defense asset. Chips, data centers, power contracts, model access, and cloud capacity are no longer just commercial infrastructure. They are national capability.

This piece is a clean bridge between AI deployment and geopolitics. If autonomous systems, cyber defense, intelligence analysis, and industrial production all depend on compute, then compute policy becomes defense policy. The old split between digital infrastructure and national security is disappearing.

Interview of the Week

Startup of the Week

Adaption aims big with AutoScientist, an AI tool that helps models train themselves

Russell Brandom | TechCrunch | May 13, 2026

Adaption launched AutoScientist, a system designed to improve model performance by co-optimizing training signals and data rather than treating fine-tuning as a static, one-off step. The company positions it as a way to make capability improvement more adaptive across specific tasks and domains.

The strategic angle is that automated improvement loops could shift advantage toward teams that best manage domain feedback and training orchestration, not only the largest model-training budgets. That makes this a strong startup pick for a week centered on deployment and infrastructure leverage.

Post of the Week

Keith Teare on Claude Implosion

Keith Teare | X | May 15, 2026

A reminder for new readers. Each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I can't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read More’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.