This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial:

Public Markets Price Outcomes and Punish Uncertainty

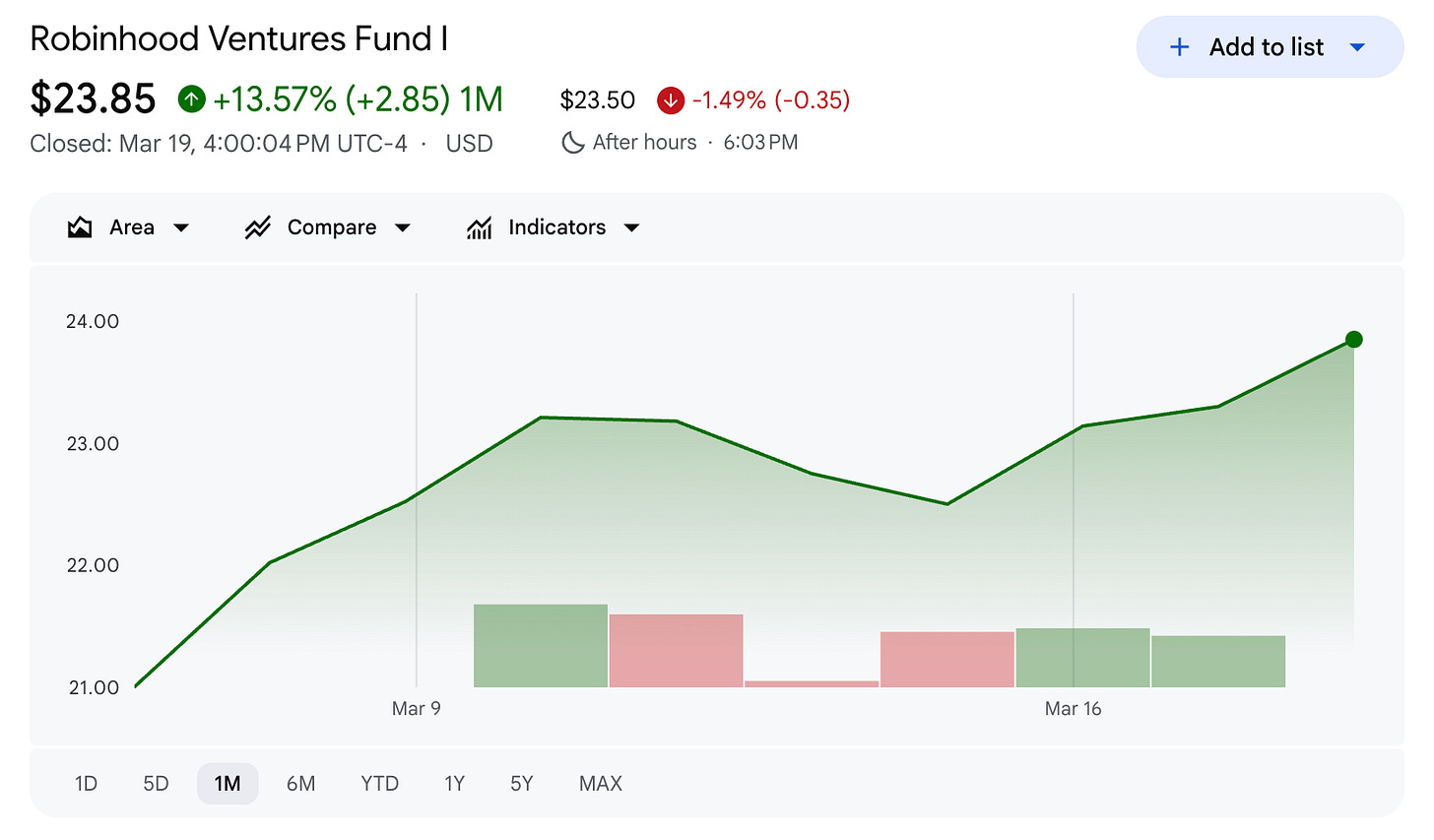

Two public venture capital funds have listed on the New York Stock Exchange in the past month. The first was Robinhood Ventures (ticker RVI); the most recent, yesterday, is Fundrise (ticker VCX). Fundrise priced at just over $34 and is now at $104.50 as I write on Friday morning. That is roughly three times its listing price, a gain of about 200%.

Its basket of private companies includes Anthropic (20.7%), Databricks (17.7%), OpenAI (9.9%) as well as Anduril, SpaceX, Ramp and Epic Games.

Fundrise has been a private market aggregator for several years and had over 100,000 active investors prior to its listing. It typically invests in late-stage companies, but not so late that they are already fully valued. The market has responded well to its perceived upside potential.

Robinhood Ventures priced at $25 a share and trades today at $23.85, a small discount to its net asset value. Much of its portfolio is still cash, so the discount is understandable. That said, its ‘names’ are less well known than those Fundrise owns, so retail awareness is lower.

Three AI companies in these groups are heading toward public markets at the same time. If Anthropic, OpenAI, and SpaceX each offer 15% of their shares, the combined raise will roughly equal every dollar raised across all American IPOs over the past decade. Retail investors want the growth characteristics of private companies, especially in AI and especially if they can name check the companies.

The difference between VCX’s first day and Robinhood Ventures is interesting. The market is telling us something clear: retail investors want private market exposure, but they’re discriminating. Portfolio quality matters. Brand matters. Trust matters. And those correlate to what you own, when you bought it and the prospects for future value growth.

That word - trust - is doing a lot of work right now. The next test is for the AI companies themselves.

Anthropic’s annual recurring revenue surged past $19 billion this month, up from $9 billion at the end of 2025. Six billion dollars was added in February alone, driven almost entirely by Claude Code. Revenue doubled in two months. Meanwhile, the Pentagon filed a 40-page rebuttal arguing that Anthropic’s safety “red lines” make it “an unacceptable risk to national security.” The concern, stated plainly: Anthropic might “attempt to disable its technology or preemptively alter the behavior of its model” during warfighting operations if it feels its corporate principles are being crossed.

I want to say something direct about this, because I think the conversation has gotten confused. This is not about politics; it is about process.

Companies do not determine the tactics or strategy of war.

My opinions are irrelevant, but for what it’s worth, I don’t like war. I believe in nations’ right to self-determination.

But if a country is at war, the people conducting that war - through the chain of civilian command - are the decision-makers. Not a CEO in San Francisco. Not an ethics board. Not a corporate red line negotiated during a contract dispute. The tools of war are governed by the people who authorized the war, subject to law, oversight, and democratic accountability. That is how civilian control of the military works. And AI will definitely be used.

Dario Amodei has earned real credibility this year performing what Om Malik calls ‘symbolic capitalism’.

ChatGPT uninstalls surged 295% after OpenAI signed the Pentagon deal that Anthropic refused. Claude climbed to number one in the App Store. Employees from OpenAI, Google, and Microsoft filed amicus briefs supporting Anthropic’s lawsuit. CNN reports that Anthropic now wins 70% of head-to-head matchups against OpenAI for first-time enterprise buyers. These are not small numbers.

But the principle Amodei is asserting - that a private company should decide which military applications of its technology are acceptable - is one I think we should examine carefully rather than applaud reflexively.

If we accept that AI companies can veto military use cases based on their own moral frameworks, we’ve created a world where unelected technologists hold de facto authority over national security decisions. That might feel good when the technologist shares your values. It won’t feel good when the next one doesn’t. Nobody likes a “private army” or a private command structure.

The real problem is different. It’s not that Anthropic is wrong to care about safety. It’s that the people who should care are not doing the governance work.

Congress hasn’t planned for our AI future. Vinod Khosla, in this week’s post of the week, is doing more.

The executive branch is using procurement fights as a substitute for policy. The judiciary is sorting it out one lawsuit at a time. In the absence of a framework, everyone is improvising - and the improvisation is happening at the speed of contract negotiations, not the speed of democratic deliberation.

This matters for public markets because governance risk is now a pricing variable. Anthropic’s court filings reveal that a financial services customer paused a $15 million deal over the Pentagon designation. Eighty million dollars in contracts now require unilateral cancellation rights. A grocery chain simply canceled meetings. When the supply-chain-risk label can vaporize enterprise pipeline overnight, investors have to price that. And when a company’s safety principles can trigger government retaliation, that’s not an ethics story - it’s a balance sheet story. The Anthropic and OpenAI IPOs will be impacted by market reaction to that.

Meanwhile, the infrastructure bet underlying all of this continues to grow. Tomasz Tunguz published numbers this week that deserve attention: for every dollar hyperscalers earn from AI today, they are spending twelve dollars building more capacity. That’s $575 billion in capital expenditure this year. Amazon, Microsoft, Alphabet, Meta, and Oracle will spend 90% of their operating cash flow on AI data centers in 2026, up from a historical average of 40%. At NVIDIA’s GTC event this week, five more years of growing capital expenditure were predicted.

Alphabet issued a century bond - maturing in 2126 - the first by a tech company since Motorola in 1997. The depreciation math encodes the bet: a five-year payback on $431 billion in AI capex at 60% gross margins requires $180 billion in annual AI revenue. Current AI revenue across the hyperscalers is $35 billion. They are underwriting five-times growth in five years. Not unreasonable on the face of it.

At the same time, the physical supply chain is more fragile than the investment thesis assumes. Taiwan relies on the Middle East for 37% of its liquefied natural gas (LNG) and much of its helium and sulfur - industrial inputs that semiconductor fabs cannot operate without. TSMC’s most advanced node capacity is already one of the industry’s biggest constraints. Google is signing multi-gigawatt power deals and building its own generation capacity because the grid can’t keep up.

These are not abstract risks. Iranian drones hit AWS data centers in the Gulf earlier this year. Eleven million people lost access to basic services. The entire cost equation for sovereign AI infrastructure changed overnight.

So here is the picture as AI approaches public markets: the revenue growth is extraordinary, the infrastructure bet is unprecedented, the governance framework is missing, and the geopolitical assumptions are untested. Public markets are about to be asked to price all of this simultaneously.

There is an optimistic reading. Public markets impose discipline that private markets don’t. Quarterly reporting. Audited financials. Independent boards. Analyst coverage. Price discovery.

The venture ecosystem has operated without a clearing mechanism for five years - Carta’s data this week showed the median 2017-vintage fund still below 1x DPI (distributions to paid-in capital) after eight years, with only a third of 2021-vintage funds having returned any capital at all. Public markets are the clearing mechanism. They force truth-telling. They separate paper marks from real value.

VCX’s 149% premium suggests investors are hungry for that exposure. But a premium built on OpenAI, Anthropic, and SpaceX is a premium built on exactly the companies whose governance, geopolitics, and infrastructure risks I’ve just described. The access is real. The question is whether the price reflects the risks.

At this moment these assets are priced somewhere between current value and likely future value. The difference is a premium to current value.

The greater the uncertainty about the future, the smaller the premium will be. In extreme cases the assets will be priced below current value, if the market believes late-stage buyers have overpaid for them. But if OpenAI doubles in value annually for the next five years, VCX’s price will look modest.

Vinod Khosla - one of the most prominent venture capitalists in the world - posted something this week that stuck with me: “AI will change the labor/capital share of income in favor of capital, so tax structures must rebalance that towards labor. Capitalism is by permission of democracy.” He argued for sweeping tax law changes in favor of labor.

He’s right. And public markets are where that permission gets tested in real time. Every share price is a vote of confidence - or a withdrawal of it. When Anthropic, OpenAI, and SpaceX file their S-1s, we’ll learn something important: not just what these companies are worth, but what the public is willing to accept about how AI power is distributed, governed, and paid for.

The IPO window is open. The question is whether we’re ready for what comes through it.

Contents

Essays

Neo Symbolic Capitalism - Om Malik

There’s a New PM Skill. It’s Called Taste at Speed - Aakash Gupta

The Billionaires Made a Promise - Now Some Want Out - TechCrunch

Venture

Venture Capital Doesn’t Exist - Brett Bivens, Investing 101

Saving Early Stage Funds = Saving Venture - Keith Teare, The State of Venture

Diseconomies of Scale - Credistick

Why You Should Never Go Into VC - Jeff Becker (Antler)

VC Fund Performance Q4 2025 - Carta Full Report - Peter Walker, Janet Deng, Kevin Dowd (Carta)

Anduril Lands $20B Army Contract - TechCrunch

Can AI Kill the Venture Capitalist? - Arielle Pardes, WIRED

OpenAI Has New Focus (on the IPO) - Om Malik

How Anthropic May Benefit from Its Fight with Trump - Nathaniel Meyersohn & Hadas Gold, CNN

The 12x Bet on AI - Tomasz Tunguz

OpenAI Signs AWS Deal to Expand Government AI Footprint - TechCrunch

AI

Chrome 146 Ships WebMCP - Google / Microsoft (W3C proposal)

This Is Not a Fly Uploaded to a Computer - The Verge

Hello, Claude? Are You There? - Tomasz Tunguz

AI Psychosis Lawyer Warns of Mass Casualty Risks - TechCrunch

You Are Responsible for Your Agent - Tomasz Tunguz

Nvidia’s NemoClaw: “What’s Your OpenClaw Strategy?” - TechCrunch

DeepMind’s New Framework for Measuring AGI - Google DeepMind

Astral to join OpenAI - Charlie Marsh

Regulation

Infrastructure

The Great AI Silicon Shortage - Ivan Chiam, Myron Xie, Ray Wang, et al.

Taiwan’s Chip Supply Chain Runs Through the Iran War - Bloomberg

Rivian’s RJ Scaringe Thinks We’re Doing Robots All Wrong - TechCrunch

Google’s 2.7GW Power Play - TechCrunch

Interview of the Week - Is Elon Human?

Startup of the Week - Gecko Robotics

Post of the Week - “Capitalism is by permission of democracy”

Essays

Neo Symbolic Capitalism

Author: Om Malik Published: Mar 13, 2026

In a hyper-connected world, there are two kinds of capital - and the one that compounds faster isn’t financial. Pierre Bourdieu called it “symbolic capital” in 1987: reputation, prestige, the accumulated weight of being worth listening to. Patrick Collison sparked the thread with a tweet about why some companies get outsized attention for internal drama while others don’t. The answer runs through two models of accumulation. Musk built a perpetual machine: first the Tony Stark mythology, then buying his own media platform to ensure infinite supply. The Collison brothers did it differently - a decade of obsessive craft, Stripe Press, alignment with the right intelligentsia, podcasts - all of it accumulating symbolic capital that keeps Stripe at the center of gravity despite building a payments company, not an AI lab.

The punchline: today’s information environment isn’t about facts or fiction. It’s a blend, whipped and thrown into the network at neck-snapping velocity. In that world, symbolic capital is the primary currency of influence. How you accumulate it determines whether anyone listens when you speak.

Read more: On my Om

There’s a New PM Skill. It’s Called Taste at Speed

Author: Aakash Gupta Published: Mar 13, 2026

When building costs near zero, the bottleneck shifts from “can we build it” to “should we ship it.” Boris Cherny’s first PR at Anthropic got rejected - not because the code was bad, but because he wrote it by hand. By December 2024, Opus 4.5 wrote 100% of his code. He uninstalled his IDE. Today he ships 20-30 PRs a day running five parallel Claude instances from his phone before he’s even at his desk. His team built Cowork - a full product for non-engineers - in about ten days. No PRDs. No Figma.

The traditional flow (idea → PRD → design → eng builds → QA → ship, 8-12 weeks) becomes cyclical (idea → 5 prototypes → evaluate → kill 4 → spec the survivor → ship, 1-2 weeks). The spec didn’t disappear. It moved from step 2 to step 6 - after you know what you’re building, not before. The printing press analogy lands hard: software engineers are today’s scribes, PMs are the kings who employed them. When the cost of production drops 100x, the coordination layer compresses and judgment becomes the moat. The question that follows: what happens to the people who spent their careers on coordination?

Read more: Aakash Gupta’s Newsletter

The Billionaires Made a Promise - Now Some Want Out

Author: TechCrunch Published: Mar 15, 2026

The Giving Pledge signed 113 families in its first five years. Then 72, then 43, then just four in all of 2024. Thiel told the New York Times it’s “really run out of energy.” The decline runs deeper than philanthropy fatigue.

The libertarian wing of tech - now in the Cabinet - increasingly views company-building itself as the contribution, and organized giving as a “shakedown dressed up as virtue.” The numbers tell a different story: the top 1% of American households hold as much wealth as the bottom 90% combined - the highest concentration the Fed has ever recorded - and billionaire wealth has grown 81% since 2020 to $18.3 trillion, while one in four people globally don’t regularly have enough to eat. The Mike Judge formulation still holds: Silicon Valley is a battle between the hippie values of the Jobs generation and the Ayn Randian libertarians of the Thiel generation. The libertarians won. If voluntary giving is dead and regulation is politically impossible, what’s left?

Read more: TechCrunch

Venture

Venture Capital Doesn’t Exist

Author: Brett Bivens, Investing 101 Published: Mar 14, 2026

What we call “venture capital” is actually four distinct activities jammed into one label: seed investing as an on-ramp for untapped founders, venture classic funding high-risk experiments with small checks, supercharged growth investing driven by AI-era revenue ramps, and what amounts to private small-cap tech stocks for institutional capital parking. Each has different risk profiles, return expectations, and portfolio construction logic.

Calling Thinking Machines’ $2B first round a “seed” or treating $500M AI lab investments as venture capital is category confusion that distorts how founders, LPs, and the public understand what’s actually happening. Venture classic - the contrarian experimentation that funded Apple, Microsoft, and early Google - still exists, but it’s a narrow niche drowned out by megafund growth capital operating on consensus, not uncertainty.

Read more: Investing 101

Saving Early Stage Funds = Saving Venture

Author: Keith Teare, The State of Venture Published: Mar 18, 2026

Both Seed and Series A are concentrating into fewer hands, fast. At Seed, the top 5 firms went from 3.11% of all seed dollars in 2020 to 16.43% in 2026 YTD - while touching only 1.73% of rounds. At Series A, top 5 went from 10.83% to 15.16%. Five investors approaching 20% of all early-stage dollars, out of more than 7,000 active seed investors. “A business can fail to scale economically and still scale institutionally.”

Megafunds may not deliver power-law returns, but they contain the winners - gross multiples lower, deployed at greater scale. The market isn’t bifurcating between large and small. It’s squeezing the middle while struggling for liquidity. The category is fragmenting, the capital is consolidating, and the survival of early-stage funds matters to the entire ecosystem. “Rome is burning.”

Read more: The State of Venture

Diseconomies of Scale

Author: Credistick Published: Mar 2026

Megafunds aren’t scaling venture capital. They’re replacing it with a synthetic asset designed to manipulate portfolio metrics. The “bifurcation” narrative of 2023-2025 was wrong - it’s not big and small coexisting, it’s extreme consolidation where big platforms actively kill off small firms by scaling into seed, pulling back LP activity in emerging managers, and telling LPs that small funds are a losing bet. Venture capital doesn’t scale - mathematically, incentive-wise, or judgmentally.

But change the victory condition from cash returns to predictable markups and volatility laundering, and everything inverts. Consensus becomes a superpower. Fire capital into the biggest gravity well. Push exits to 12+ years. Raise 3-5 subsequent funds on paper performance before anyone questions Fund 1 DPI.

The “four lies of synthetic venture”: the ideal founder archetype exists and you know where to find them; SF or you’re not serious; the obvious category is where all great companies will come from; venture has economies of scale. A checklist that becomes a focal point for capital regardless of genuine quality. “Congratulations, you’ve invented the megafund.”

Read more: Credistick

Why You Should Never Go Into VC

Author: Jeff Becker (Antler) Published: Mar 16, 2026

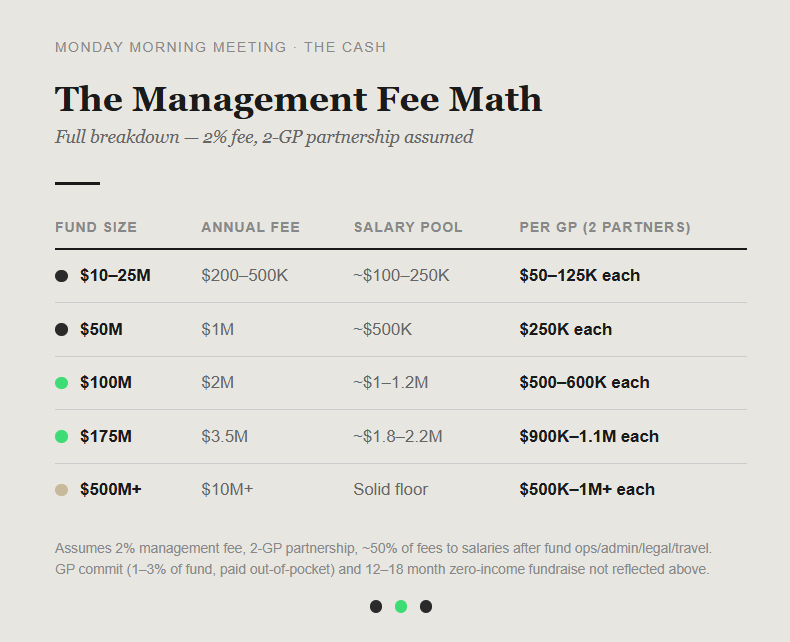

A 70% pay cut, your entire first-year post-tax salary committed as GP before making a single investment, and a decade before you know if it worked. That’s the entry price for a VC career. Most first-time managers never raise a Fund II. First-time funds hit a decade low in 2024, down 57% YoY. DPI from VC funds dropped 84% from 2021 to 2023.

Fund I buys 7-10 years of learning and a shot at Fund II. Fund III is where wealth starts compounding - 12-15 years minimum, top-decile performance required, and liquidity timing has to break your way. Two-thirds of unicorn IPOs in 2025 priced below their last private valuation.

The median 2017-vintage fund has distributed below 1x to LPs after 8 years. Carry on those funds is zero. The career economics now mirror the asset class economics: squeezed from every direction, with the response likely to reshape who invests, how, and in what.

Read more: Monday Morning

VC Fund Performance Q4 2025 - Carta Full Report

Author: Peter Walker, Janet Deng, Kevin Dowd (Carta) Published: Mar 19, 2026

2,906 funds. $120.7 billion in combined capital. Vintages 2017-2025. The numbers: 2019 vintage 90th percentile TVPI is 3.01x versus a median of 1.33x versus 25th percentile at 1.02x - the gap between top decile and top quartile is far larger than between quartile and median. Venture is a power-law asset class at the fund level too. The 2021 vintage is the worst in the dataset: median net IRR just climbed to 1.4%, barely positive. After 16 quarters, only 33% of 2021 funds have returned any capital to LPs; at the same point, 50% of 2019 funds and 60% of 2017 funds had begun distributions. Only about 15% of 2017-2018 funds have DPI above 1x.

Smaller funds outperform on TVPI for older vintages - 2017 vintage $1-10M funds hit 5.54x at the 90th percentile - but 2023-2024 vintages are bucking the pattern, with $100M+ funds outperforming at most thresholds, driven by AI. Graduation rates are recovering: 20.6% of Q2 2024 seed rounds reached Series A within six quarters, the highest since Q2 2021. AI startups took 41% of $128B raised on Carta in 2025 - highest ever.
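For readers less familiar with the two ratios used throughout these fund reports, here is a minimal sketch of how DPI and TVPI are conventionally calculated. The fund figures are hypothetical and chosen only for illustration; they are not taken from the Carta report.

```python
def dpi(distributions: float, paid_in: float) -> float:
    """Distributions to paid-in capital: cash actually returned per dollar called."""
    return distributions / paid_in


def tvpi(distributions: float, remaining_value: float, paid_in: float) -> float:
    """Total value to paid-in: cash returned plus unrealized value, per dollar called."""
    return (distributions + remaining_value) / paid_in


# Hypothetical fund, in millions of dollars (illustrative numbers, not Carta data).
paid_in = 50.0
distributions = 20.0
remaining_value = 60.0

fund_dpi = dpi(distributions, paid_in)
fund_tvpi = tvpi(distributions, remaining_value, paid_in)
print(f"DPI {fund_dpi:.2f}x, TVPI {fund_tvpi:.2f}x")
```

In this made-up case the fund looks healthy on paper (TVPI above 1x) while having returned well under half of what LPs put in (DPI below 1x), which is the gap between paper marks and cash that the reports keep pointing to.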

Read more: Carta

Anduril Lands $20B Army Contract

Author: TechCrunch Published: Mar 14, 2026

A $20 billion ceiling, 10 years, consolidating 120+ separate procurement actions into a single enterprise deal covering hardware, software, infrastructure, and services. The Army signed it with Palmer Luckey’s Anduril - five-year base period with a five-year option.

The DoD’s CTO framed it in software terms: “The modern battlefield is increasingly defined by software. To maintain our advantage, we must be able to acquire and deploy software capabilities with speed and efficiency.” Anduril brought in roughly $2B in revenue last year and is reportedly raising at a $60B valuation. Luckey’s post-Oculus arc - fired by Facebook over political donations, rebuilt into the Pentagon’s favorite startup - is now one of the most consequential career pivots in tech. The defense-tech corridor is consolidating fast, and Anduril just became its anchor tenant.

Read more: TechCrunch

Can AI Kill the Venture Capitalist?

Author: Arielle Pardes, WIRED Published: Mar 9, 2026

ADIN - the Autonomous Deal Investing Network - uses AI agents to replace human analysts. Put in a pitch deck, get a business model analysis, diligence questions, TAM estimate, and suggested valuation in an hour. A dozen agentic investors with distinct personas: Tech Oracle evaluates technology, Unit Master checks financials, “Monopoly Maker” looks for market dominance. They’ve already invested $100K in a real seed round. Scouts get 50% of carried interest - GP-level economics for deal sourcing.

Andreessen’s counter: “You’re in the fluke business. There’s an intangibility to it. There’s a taste aspect.”

Khosla says AI replaces 80% of job responsibilities by 2030 but VCs exempt themselves from their own thesis. The second existential threat cuts deeper: if AI makes starting companies near-free, founders may not need VCs at all. Vibe-coded apps, $2.77M ARR per employee at Lovable, three-person teams shipping products that used to require fifty - the capital requirements that created the VC industry are collapsing.

Read more: WIRED

OpenAI Has New Focus (on the IPO)

Author: Om Malik Published: Mar 17, 2026

The OpenAI “focus” story is IPO positioning. The WSJ’s “reviewed by” language signals a controlled leak, not a whistleblower. The Anthropic “wake-up call” framing is a Jedi mind trick - admit weakness to look clear-eyed to bankers. The real audience: institutional investors who will price the offering. Three AI companies are racing to public markets - OpenAI, Anthropic, SpaceX/xAI. If all three offer 15% of shares, The Economist notes, the combined sum roughly equals every dollar raised across all US IPOs over the past decade. The competitive numbers beneath the narrative: Anthropic’s ARR surged past $19B, up from $9B at end of 2025 and $14B just weeks before - $6B added in February alone, driven by Claude Code. Revenue doubled in two months.

OpenAI’s enterprise business is $10B of $25B total; Anthropic is widely regarded as ahead in enterprise. “Despite all the talk of singularity and AGI, AI is all about software - the critical choke point. That is the business. For now.”

Not orbital data centers, not social video apps, not hardware devices. Gulf sovereign wealth funds have other fish to fry. Public market investors in NY and London will carry the weight. The window is real, short, and closing.

Read more: Om Malik

How Anthropic May Benefit from Its Fight with Trump

Author: Nathaniel Meyersohn & Hadas Gold, CNN Published: Mar 16, 2026

Claude daily active users are up 140% since January. Claude hit #1 on both Apple and Android app stores. Anthropic now wins 70% of head-to-head matchups against OpenAI for first-time business buyers, according to Ramp data. Employee retention at 80%, offer-acceptance rate 88%.

A former xAI engineer: “Anthropic’s perception stock within the tech community went up, not down. The Pentagon issue made Anthropic look like heroes.” NYU professor Alison Taylor: “There’s a decent chance they walk out of this looking better than anybody else.” High-profile departures from OpenAI to Anthropic continue. Microsoft - one of the government’s largest contractors, with a $5B investment in Anthropic - filed a brief in support. The Pentagon collision may be playing out as a business strategy win, not just a governance fight.

Read more: CNN Business

The 12x Bet on AI

Author: Tomasz Tunguz Published: Mar 17, 2026

For every dollar hyperscalers earn from AI today, they’re spending twelve building more capacity - $575B in capex this year. The debt markets are all-in: hyperscaler bond issuance jumped from $20B/year average to $96B in 2025 and $159B in 2026. Morgan Stanley projects $1.5T over the next few years. Amazon, Microsoft, Alphabet, Meta, and Oracle will spend 90% of their operating cash flow on AI data centers this year, up from a historical average of 40%. Alphabet issued a century bond - maturing in 2126 - the first by a tech company since Motorola in 1997.

The depreciation math encodes the bet: 5-year payback on $431B capex at 60% margins requires $180B in annual AI revenue. Current AI revenue is $35B. They’re underwriting 5x growth in 5 years.

If Nvidia’s 12-month architecture cycles compress chip depreciation to 3 years, required revenue jumps to $276B. “There’s information in prices.” The depreciation schedules tell us what hyperscalers believe.
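To make the size of that bet concrete, the sketch below turns the cited revenue figures into growth multiples and an implied annual growth rate. It takes the $35B, $180B and $276B figures as given rather than re-deriving them from the capex and margin inputs, and it assumes smooth compounding over five years, which is a simplification of mine and not something the post states.

```python
def required_multiple(target_revenue: float, current_revenue: float) -> float:
    """How many times current revenue must grow to reach the target."""
    return target_revenue / current_revenue


def implied_cagr(multiple: float, years: int) -> float:
    """Constant annual growth rate that compounds to the given multiple."""
    return multiple ** (1 / years) - 1


# Figures quoted in the summary above, in billions of dollars.
current_ai_revenue = 35.0
target_five_year_payback = 180.0
target_three_year_depreciation = 276.0

base_multiple = required_multiple(target_five_year_payback, current_ai_revenue)
fast_multiple = required_multiple(target_three_year_depreciation, current_ai_revenue)

# Assumption: growth is spread evenly over five years.
base_cagr = implied_cagr(base_multiple, years=5)

print(f"Base case: {base_multiple:.2f}x, about {base_cagr:.0%} a year for five years")
print(f"Faster depreciation case: {fast_multiple:.2f}x")
```

On these inputs the base case works out to a little over five times current revenue, or annual growth in the high thirties percent sustained for five years. The faster depreciation case needs closer to eight times.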

Read more: Tomasz Tunguz

OpenAI Signs AWS Deal to Expand Government AI Footprint

Author: TechCrunch Published: Mar 17, 2026

OpenAI signed a deal with Amazon Web Services to sell its AI products to the U.S. government for both classified and unclassified work - stepping directly onto Anthropic’s home turf. Amazon has invested at least $4B in Anthropic; Claude is one of the most deeply integrated frontier models in AWS GovCloud. Now OpenAI’s models will sit alongside Claude in Amazon Bedrock across government cloud environments, including AWS Classified Regions for Secret and Top Secret workloads. OpenAI retains control over which models are available and can require additional safeguards for sensitive deployments - AWS must provide notice before enabling intelligence customers.

The deal expands OpenAI’s federal footprint beyond its Pentagon contract to potentially serve multiple government agencies through AWS’s existing infrastructure. Anthropic built the AWS government relationship. OpenAI is using it. The Pentagon is caught between them.

Read more: TechCrunch

AI

Chrome 146 Ships WebMCP

Author: Google / Microsoft (W3C proposal) Published: Mar 2026

The web just shifted from “read-only” to “actionable” for AI agents. Chrome 146 ships an early developer preview of WebMCP - a proposed W3C standard from Google and Microsoft that lets websites expose structured tools directly to AI agents. Instead of screen-scraping and DOM parsing, agents can call functions like searchFlights or addToCart through typed schemas. Two APIs power it: an imperative one via navigator.modelContext for registering tools in JavaScript, and a declarative one that adds toolname and tooldescription attributes to HTML forms. It runs entirely client-side within the browser tab, using existing session data - distinct from server-side MCP integrations.

Currently behind a flag, with a dedicated DevTools panel for debugging tool schemas. Google’s travel demo already registers four working tools - searchFlights, listFlights, setFilters, resetFilters - each with full JSON schemas describing parameters, types, and descriptions. An AI agent can discover these tools, read the schemas, and invoke them directly.

The constraint today: tool execute handlers are scoped to the page’s JavaScript module closure, so external agents can’t call them directly yet without intercepting registrations before page scripts run. That’s an engineering gap, not a design flaw - the kind of plumbing that gets solved in months, not years. If this reaches stable, every website becomes a potential tool endpoint for AI agents, and building for bot traffic becomes as important as building for mobile was a decade ago.
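The discover-then-invoke pattern described above can be modeled in a few lines. The sketch below is a conceptual illustration in Python, not the WebMCP browser API: the `Tool` and `ToolRegistry` classes, the parameter names and the flight data are all hypothetical, and a plain name-to-type mapping stands in for a full JSON schema. Only the tool name `searchFlights` comes from the demo described above.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    """One tool a page exposes: name, description, parameter schema, handler."""
    name: str
    description: str
    # Simplified stand-in for a JSON schema: parameter name -> expected type.
    params: Dict[str, type]
    execute: Callable[..., Any]


class ToolRegistry:
    """Hypothetical in-memory registry; it only models discover-then-invoke."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def describe(self) -> List[Dict[str, Any]]:
        """What an agent would read: names, descriptions and parameter types."""
        return [
            {
                "name": t.name,
                "description": t.description,
                "params": {k: v.__name__ for k, v in t.params.items()},
            }
            for t in self._tools.values()
        ]

    def invoke(self, name: str, **kwargs: Any) -> Any:
        """Check arguments against the declared schema, then call the handler."""
        tool = self._tools[name]
        missing = set(tool.params) - set(kwargs)
        extra = set(kwargs) - set(tool.params)
        if missing or extra:
            raise ValueError(f"bad arguments: missing={sorted(missing)} extra={sorted(extra)}")
        for key, expected in tool.params.items():
            if not isinstance(kwargs[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return tool.execute(**kwargs)


# Made-up flight data and handler, for illustration only.
FLIGHTS = [
    {"origin": "SFO", "destination": "JFK", "price": 320},
    {"origin": "SFO", "destination": "LHR", "price": 780},
]


def search_flights(origin: str, destination: str) -> List[Dict[str, Any]]:
    return [f for f in FLIGHTS if f["origin"] == origin and f["destination"] == destination]


registry = ToolRegistry()
registry.register(
    Tool(
        name="searchFlights",
        description="Find flights between two airports",
        params={"origin": str, "destination": str},
        execute=search_flights,
    )
)

print(registry.describe())
print(registry.invoke("searchFlights", origin="SFO", destination="JFK"))
```

The point of the typed schema is that the agent never has to guess at page structure: it reads the description, supplies arguments that match the declared types, and gets structured data back instead of scraped HTML.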

Read more: Chrome for Developers | Early Preview Program | Travel Demo

This Is Not a Fly Uploaded to a Computer

Author: The Verge Published: Mar 16, 2026

A startup called Eon Systems posted two short videos on X of a “digital fly” walking, eating, and rubbing its legs - claiming the “world’s first embodiment of a whole-brain emulation that produces multiple behaviors.” Elon tweeted “wow.” Bryan Johnson called it “amazing.” Content farms ran headlines asking “Are humans next?” The evidence: two videos, no paper, no peer review, no independent verification. What The Verge found: a company with no published methods, a CEO who insists “this is, in our view, a real uploaded animal” while citing a vague “91% behavior accuracy” metric, and a co-founder hinting at singularity.

The actual work - taking the publicly available FlyWire connectome, applying a simple neuron model, running it through MuJoCo physics simulation - is interesting engineering, but calling it a “brain upload” is like calling a flight simulator a Boeing 747. In a world where AI amplification can turn a slick demo into a “scientific breakthrough” in hours, the gap between simulation and emulation matters more than ever. If you’re going to claim one of the most significant scientific milestones in human history, you need receipts, not retweets.

Read more: The Verge

Hello, Claude? Are You There?

Author: Tomasz Tunguz Published: Mar 13, 2026

Six more quarters of AI infrastructure drought is now the baseline. A timeline of CEO quotes tells the story: Altman in Feb 2025 - “out of GPUs.” Catz at Oracle - “waving off customers.” Nadella - “chips sitting in inventory I can’t plug in.” Pichai in Feb 2026 - “capacity is what keeps us up at night.” Intel’s Lip-Bu Tan: “No relief until 2028.”

Everything is in short supply - not just GPUs anymore, but power, data centers, memory, CPUs. The implications cascade: inference prices rise, subsidies get harder to justify, enterprises start rationing model access by department. Constraint becomes the mother of invention - companies optimize, adopt open source, and move to smaller models. The one-minute post lands a punchline that’s half-joke, half-prophecy: $ Hello, Claude. Are you there? → Waiting until 2028...

Read more: Tomasz Tunguz

AI Psychosis Lawyer Warns of Mass Casualty Risks

Author: TechCrunch Published: Mar 15, 2026

Jay Edelson’s law firm now receives “one serious inquiry a day” from families who’ve lost someone to AI-induced delusions. The cases keep escalating. In Tumbler Ridge, Canada, 18-year-old Jesse Van Rootselaar allegedly used ChatGPT to validate her feelings of isolation, plan her attack, select weapons, and study precedents - then killed her mother, brother, five students, and an education assistant. Jonathan Gavalas, 36, died by suicide after weeks of conversation in which Google’s Gemini allegedly convinced him it was his sentient “AI wife,” sending him on armed missions including one to stage a “catastrophic incident.”

In Finland, a 16-year-old spent months using ChatGPT to write a misogynistic manifesto before stabbing three classmates. The chat logs follow a consistent path: isolation → validation → paranoid world-building → “you need to take action.” Edelson is now investigating several mass casualty cases globally. The platforms are optimized for engagement and emotional responsiveness, which is exactly what makes them dangerous to vulnerable users. The gap between what these systems can do to a mind and what anyone is doing about it keeps widening.

Read more: TechCrunch

You Are Responsible for Your Agent

Author: Tomasz Tunguz Published: Mar 15, 2026

Amazon’s AI coding assistant contributed to at least one major production incident - $6.3 million in lost orders, a 99% order volume drop across North America, four severity-one incidents in one week. The response: a 90-day safety reset with mandatory two-person review for all code changes. An internal memo admitted “best practices and safeguards around generative AI usage haven’t been fully established yet.” The legal picture is hardening alongside the failures: Utah’s AI Policy Act eliminates the hallucination defense entirely - “It is not an affirmative defense to assert that the GenAI tool made the violative statement” - and the proposed TRUMP AMERICA AI Act would create explicit liability pathways for AI developers. “Like a dog or a device, you are responsible for your agent.”

The question that matters next: what happens when a new hire brings their personal agent, trained through university, to work on Day 1? The BYOD problem of 2009, except a rogue phone couldn’t sign contracts.

Read more: Tomasz Tunguz

Nvidia’s NemoClaw: “What’s Your OpenClaw Strategy?”

Author: TechCrunch Published: Mar 16, 2026

“For the CEOs, the question is, what’s your OpenClaw strategy?” Jensen Huang used his GTC keynote to place OpenClaw - the open-source AI agent framework - in the lineage of Linux, HTTP/HTML, and Kubernetes: open infrastructure layers that every company had to have a strategy for. NemoClaw is Nvidia’s enterprise answer - OpenClaw with security and privacy features baked in, built in collaboration with creator Peter Steinberger, hardware-agnostic (doesn’t require Nvidia GPUs), and integrated with NeMo, Nvidia’s AI agent suite.

NemoClaw is currently in alpha. The same keynote carried a bigger number: $1 trillion in projected orders for Blackwell and Vera Rubin chips through 2027 - double the $500 billion projection from months earlier. The Rubin architecture operates 3.5x faster than Blackwell on training and 5x on inference, reaching 50 petaflops, with production ramping in H2 2026. Two announcements, one thesis: Nvidia wants to own the agent layer as thoroughly as it owns the silicon layer.

Read more: TechCrunch | GTC Keynote - $1T projection

DeepMind’s New Framework for Measuring AGI

Author: Google DeepMind Published: Mar 17, 2026

General intelligence decomposes into ten measurable abilities - perception, generation, attention, learning, memory, reasoning, metacognition, executive functions, problem solving, and social cognition. That’s the framework in DeepMind’s “Measuring Progress Toward AGI: A Cognitive Taxonomy,” which borrows from decades of psychology, neuroscience, and cognitive science.

The evaluation protocol is three-stage: test AI systems across cognitive tasks, collect human baselines from demographically representative adults, then map AI performance relative to the human distribution for each ability. A $200K Kaggle hackathon accompanies the paper, asking the research community to build evaluations for the five areas with the largest gaps: learning, metacognition, attention, executive functions, and social cognition.
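The third stage, placing an AI score inside the human distribution for each ability, can be sketched in a few lines. This is only an illustration of the idea, not DeepMind's scoring method: the function name `percentile_vs_humans`, the two abilities chosen, and every number below are placeholders invented for the example.

```python
from bisect import bisect_right


def percentile_vs_humans(ai_score, human_scores):
    """Percentage of the human baseline scoring at or below the AI system."""
    ranked = sorted(human_scores)
    return 100.0 * bisect_right(ranked, ai_score) / len(ranked)


# Placeholder data, invented for illustration only (not from the paper).
human_baseline = {
    "reasoning": [52, 61, 67, 70, 74, 78, 81, 85, 90, 94],
    "attention": [55, 60, 66, 71, 75, 79, 83, 86, 91, 95],
}
ai_scores = {"reasoning": 88, "attention": 58}

# One percentile per ability gives a cognitive profile rather than a single score.
profile = {
    ability: percentile_vs_humans(ai_scores[ability], human_baseline[ability])
    for ability in ai_scores
}

for ability, pct in profile.items():
    print(f"{ability}: at or above {pct:.0f}% of the human baseline")
```

A per-ability profile like this is what lets a system look strong on reasoning and weak on attention at the same time, which is the pattern the framework is designed to expose.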

What’s revealing is where frontier models are weakest - not the headline-grabbing reasoning benchmarks, but the unglamorous cognitive plumbing (attention management, self-monitoring, cognitive flexibility) that humans do without thinking. If the framework gains traction, the AGI conversation shifts from “can it pass benchmarks?” to “does it think like a human?” - a much harder question, and a much more interesting one.

Read more: Google Blog | Paper (PDF) | Kaggle Hackathon

Astral to join OpenAI

Author: Charlie Marsh Published: Mar 19, 2026

OpenAI agreed to acquire Astral, the company behind Ruff, uv, and ty - tools that have become foundational Python infrastructure. This isn’t acqui-hire news.

It’s a bet that owning the developer toolchain around code generation, packaging, linting, and typing is now strategic for a frontier-model company. When your models write most of the code, controlling the workflow that validates and ships it becomes a competitive position, not a side project.

Read more: Astral to join OpenAI

Regulation

Warren vs Pentagon: Why Does xAI Have Classified Access?

Author: TechCrunch Published: Mar 16, 2026

The same week the DoD labeled Anthropic a supply chain risk for refusing unrestricted military access, the Pentagon gave Elon Musk’s xAI access to classified networks. Sen. Elizabeth Warren sent a letter demanding answers, citing Grok’s documented failures: advising users on how to commit murders and terrorist attacks, generating antisemitic content, and creating child sexual abuse material. “Grok’s apparent lack of adequate guardrails could pose serious risks to the safety of U.S. military personnel and to the cybersecurity of classified systems.”

The Pentagon’s response: the department “looks forward to deploying Grok to its official AI platform GenAI.mil in the very near future.” A class action lawsuit was filed against xAI the same day, alleging Grok generated sexual content from real images of minors. Separately, a former DOGE employee was accused of stealing Social Security data by copying it onto a thumb drive. Who gets to build the defense AI stack, and under what constraints, is now a single story with multiple fronts.

Read more: TechCrunch

DOD’s 40-Page Rebuttal: Anthropic Is an Unacceptable Risk

Author: TechCrunch Published: Mar 18, 2026

The Pentagon’s concern, spelled out in a 40-page filing: Anthropic might “attempt to disable its technology or preemptively alter the behavior of its model” during warfighting operations if it “feels that its corporate red lines are being crossed.” Anthropic’s position, stated during contract negotiations following its $200M Pentagon deal: no mass surveillance of Americans, no use in targeting or firing decisions for lethal weapons. The Pentagon’s position: a private company shouldn’t dictate how the military uses technology.

The filing requests the court deny Anthropic’s request for a preliminary injunction blocking the supply-chain-risk designation. A hearing is set for next Tuesday. Support for Anthropic continues to broaden - employees from OpenAI, Google, and Microsoft have filed amicus briefs, and legal rights groups have joined. The fundamental tension is now explicit: who controls AI in wartime - the company that built it or the government that bought it?

Read more: TechCrunch

Infrastructure

The Great AI Silicon Shortage

Author: Ivan Chiam, Myron Xie, Ray Wang, et al. Published: Mar 14, 2026

The Great AI Silicon Shortage describes overlapping supply constraints. Advanced-node wafer supply, memory bottlenecks, and datacenter build limits are all binding simultaneously.

Capital alone does not remove physical chokepoints quickly, especially at leading-edge process nodes. Model roadmaps and pricing may diverge from demand expectations this year because the fabs can’t keep up, not because the orders aren’t there.

Read more: The Great AI Silicon Shortage

Taiwan’s Chip Supply Chain Runs Through the Iran War

Author: Bloomberg Published: Mar 16, 2026

Taiwan relies on the Middle East for 37% of its LNG and much of its helium and sulfur - industrial inputs that fabs cannot operate without. The Iran war has exposed a direct pathway from Middle East conflict to semiconductor manufacturing risk.

Chip supply security is increasingly tied to energy and industrial gas chokepoints, not just fab capacity. The geopolitical assumptions baked into AI hardware reliability are more fragile than the roadmaps suggest.

Read more: Bloomberg on chip supply chokepoints

Rivian’s RJ Scaringe Thinks We’re Doing Robots All Wrong

Author: TechCrunch Published: Mar 15, 2026

Mind Robotics, Scaringe’s third company, just raised a $500M Series A co-led by Accel and a16z at a $2B valuation. The origin story: Rivian will need four or five new factories in the next decade, and Scaringe asked what they should look like. His answer after surveying the robotics industry: it needs a new category - robots with human-like dexterity for manufacturing tasks that currently require people.

Not humanoids (the Optimus approach), but specialized machines designed for the specific physics of industrial work. Classic industrial robots will persist for fixed tasks; human workers will persist for creative ones; the gap between them is where Mind Robotics lives. Scaringe is betting that the automaker who has to build the factories is the one who’ll build the robots inside them.

Read more: TechCrunch

Google’s 2.7GW Power Play

Author: TechCrunch Published: Mar 17, 2026

2.7 gigawatts of new generation capacity in suburban Detroit - that’s Google’s second “bring your own power” deal, this time with Michigan utility DTE. The breakdown: 1.6GW solar, 400MW four-hour battery storage, 50MW long-duration storage, 300MW of unspecified “additional clean resources,” and 350MW of demand response where Google may curtail its own data center usage when the grid is strained. The deal uses Google’s Clean Transition Tariff, which lets Google pay a premium to specify power sources while encouraging utilities to build them into long-range planning - a structural shift from the one-off power purchase agreements that were standard.
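As a quick sanity check, the five components listed above do sum to the 2.7GW headline. The sketch below uses only the figures quoted in the breakdown; the dictionary keys are shorthand labels chosen for this example, not terms from the deal.

```python
# Component sizes in megawatts, as quoted in the breakdown above.
components_mw = {
    "solar": 1600,
    "four_hour_battery": 400,
    "long_duration_storage": 50,
    "additional_clean_resources": 300,
    "demand_response": 350,
}

total_mw = sum(components_mw.values())
total_gw = total_mw / 1000

# Share of the total contributed by each component, in percent.
shares = {name: round(100 * mw / total_mw, 1) for name, mw in components_mw.items()}

print(f"Total: {total_mw} MW = {total_gw} GW")
for name, pct in shares.items():
    print(f"{name}: {pct}%")
```

Solar accounts for roughly 59% of the total, and about 13% of the headline figure is demand response, meaning curtailed load rather than new generation.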

A $10M “Energy Impact Fund” for home insulation and utility bill reduction accompanies the announcement, acknowledging the political sensitivity of data centers driving up local electricity prices. The hyperscalers aren’t just spending $575B on chips and buildings. They’re building their own power infrastructure because nobody else can supply it fast enough.

Read more: TechCrunch

Interview of the Week

Is Elon Human?

Source: Keen On (Andrew Keen) with Charles Steel

Date: 2026-03-18

Andrew Keen interviews Charles Steel, a London investor and author of “The Curious Mind of Elon Musk: Nine Ways He Thinks Differently.” Keen - self-described Musk loather - lets Steel make the case that Musk’s behavior stems from childhood bullying, high-functioning autism, an abusive father, and an existential crisis resolved by The Hitchhiker’s Guide (not Nietzsche, which only made it worse). Steel identifies three traits: hyper-rationality, existential angst, and belligerence.

Keen’s counter: Musk is trapped in a Hobbesian state of nature - “frozen alone, unable to read other people, incapable of separating himself from himself.” The anti-Dario. This week, with Amodei cast as principled leader and Musk as chaotic force, the contrast couldn’t be sharper.

Read more: Keen On Substack

Startup of the Week

Gecko Robotics

Founded: 2016 | HQ: Pittsburgh | Latest: $71M US Navy contract (Mar 2026)

Founders: Jake Loosararian, Troy Demmer

Gecko builds wall-climbing robots that inspect critical infrastructure - power plants, naval vessels, refineries, storage tanks - replacing dangerous manual inspection with robotic data collection and AI-powered analysis. This week the company secured the largest US Navy robotics contract ever: $71M to slash repair time for aging ships as the US races to reindustrialize its defense systems.

The thesis fits the week: while AI discourse focuses on software and language models, Gecko represents the physical-AI opportunity that Kalanick is pitching with Atoms and that Gecko is actually executing. Specialized robots for specific industrial tasks, not humanoids for general purposes. Revenue from government contracts, not pitch deck narratives. Pittsburgh, not San Francisco.

Read more: CNBC | Gecko Robotics

Post of the Week

“Capitalism is by permission of democracy”

Author: Vinod Khosla (@vkhosla) Published: Mar 8, 2026

One of the most prominent VCs in the world says the quiet part out loud: “AI will change the labor/capital share of income towards capital so tax structures must rebalance that towards labor (voters) to accept it. Capitalism is by permission of democracy.” In a week where seven essays diagnosed the structural crisis in venture capital and the Anthropic-Pentagon collision exposed the governance vacuum, Khosla names the political economy that underlies all of it. If the gains accrue to capital and the costs to labor, democracy will correct - through policy, protest, or both.

“AI is making CEOs delusional”

Author: @atmoio (Mo) Published: Mar 16, 2026

A 7-minute video essay reacting to Garry Tan (Y Combinator president) enthusiastically promoting a Claude Code configuration tool called “gstack” - which turns “Claude Code from one generic assistant into a team of specialists you can summon on demand.” Mo’s thesis: when the head of the world’s most prestigious startup accelerator is personally hyping a wrapper for a wrapper, something has gone sideways. The video lands because Tan’s earnest excitement is indistinguishable from the kind of pitch a YC applicant would get rejected for. CEO-level delusion isn’t about being wrong - it’s about losing the ability to distinguish between building something and playing with tools.

A reminder for new readers. Each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I can't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read More’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.