This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial

Hands Off?

The End of Typed and Touched Input?

For sixty years, the interface between humans and computers was visual and hand-driven. Menus, files, folders, buttons, keyboards, search boxes, tabs. All of it designed for the text-literate minority who had learned to operate machines by navigating their visible surfaces. The icon, the app, the screen - these were not the computer. They were the clothing and decoration the computer wore so that humans could use it.

That clothing and decoration is coming off.

Peter Kris nails it.

Andrej Karpathy, speaking at Sequoia’s AI Ascent conference this week, gave the engineering description of what is happening: “The neural net becomes the host process and the CPUs become the co-processor.” He illustrated it with a story about an app he built called MenuGen, which let you photograph a restaurant menu and generate images of the dishes. The traditional version required an OCR pipeline, a generation step, a UI, a database. Then he saw the Software 3.0 version: hand the photo to a model, ask it to overlay images of the food.

“My menu gen is spurious,” he said. “It’s working in the old paradigm. That app shouldn’t exist.”

That is the pattern repeating across every sector this week. The FT reports that WPP is cutting £500 million in costs as AI replicates the advertising agency’s creative stack at near-zero marginal cost. OpenAI is reportedly building a phone designed, in the words of the TechCrunch report, with “AI agents replacing apps” as the explicit design point. Carl Pei, founder of Nothing, told a SXSW audience: “Apps will eventually go away.”

These are not separate stories. They are the same story told from different industry positions.

What is replacing software-as-product is something that looks, structurally, like human-to-human interaction.

When you interact with a person you do not need a keyboard or mouse or touchscreen.

You do not navigate a menu to get what you need. You describe what you need in the same way you would describe it to a capable colleague. Just like a human waiter, the computer listens, asks when something is unclear, remembers the last conversation, and responds in kind.

Voice, ears, eyes, and context replace keyboard, cursor, screen and app. The interaction shape changes from “operating a machine” to “talking to someone who can do the thing.”

The strategic implications of this shift are already becoming visible. Apple has the silicon and needs the model. OpenAI has the model and needs the device. Both companies are arriving at the same conclusion from opposite directions: the device as intermediary matters again.

And that will elevate the device above the cloud. Hands-free, ambient computing cannot survive a metered cloud at consumer prices. It needs to just work at the device level. Local inference, on dedicated silicon, is the likely endgame for most human-to-agent interaction. The 2015-2024 frame - that the device was just a glass rectangle and the cloud was everything - is over. And Apple is back in the race, but so too are OpenAI and Jony Ive.

The question underneath all of this is what it means for people.

Anthropic published research this week measuring which occupations AI is actually displacing in production, right now. The numbers are striking: computer programmers at 75 percent exposure, customer service representatives at 70 percent, data entry keyers at 67 percent, medical records specialists at 66 percent, marketing researchers at 65 percent. These are not low-wage jobs. The most exposed occupations earn, on average, 47 percent more than the least exposed ones. They are disproportionately held by women and by graduate-degree holders. The people at greatest risk from the current transition are not the people at the bottom of the income distribution. They are the people who spent years acquiring a credential and trading it for income.

There is a name for what is happening to those credentials. For two hundred years, specializing was the rational bet for any ambitious person. Pick a high-value skill. Develop it deeply. Build a career around it.

The economies of the twentieth century were organized around this bet: mass university systems, professional licensing bodies, apprenticeship ladders, all designed to produce people with bounded, tradeable specialisms.

That structure is now being dismantled, not by policy but by a technology that turns bounded specialisms into commodities. You no longer need to employ a specialist to access specialist knowledge. You invoke it on demand from Codex or Claude or Gemini.

That is frightening if you are the specialist. It is also, from a different angle, one of the most significant reductions in scarcity in human history.

There is a doctor shortage in most of the world. There is a teacher shortage, a therapist shortage, a lawyer-for-the-poor shortage, a tax-accountant-for-small-business shortage. None of the standard policy responses - train more, pay more, simplify immigration, fund residencies - has solved any of these at scale, because the bottleneck is the years of human training multiplied by the limited bandwidth of individual human attention. One doctor sees thirty patients a day. One teacher holds twenty-five students. These ratios have not changed in two hundred years. The rise of AI agents changes them.

The same AI capacity that is displacing the medical records specialist is also making specialist-quality diagnostic and treatment-planning knowledge available to people who have never been able to see a doctor. The same displacement that is hollowing out entry-level legal work is also providing legal counsel to the people currently priced out of the justice system.

Both things are happening at once. Anthropic measures the cost of the transition. We should also measure the offsetting benefit. Both deserve to be named.

Which brings us to the human future with AI, and to four roles that will define it.

The question is not which jobs survive. The question is which human capacities are irreplaceable when the machine can implement almost anything you can describe. The answer comes down to a single observation: AI systems can optimize toward an objective, but they do not want anything. They have no stake in what gets built. They cannot be held accountable. They do not care how the world turns out. The capacities that survive are the ones where that directional human intent - wanting - is the irreplaceable input.

There are four human roles in the age of AI. Three of them endure. One of them is ending.

The first is the Idea Person - the person with the vision, the taste, the theory of what should exist before it does. This is the oldest human archetype: the storyteller, the inventor, the founder who sees around a corner. For the last two hundred years, this person has been at a structural disadvantage. They had a great idea but could not build it alone. They needed specialists to implement it, and those specialists were expensive. Many of the most original founders never made it through that process.

Sam Altman described what is changing at Stripe Sessions this week:

“We used to make fun of the idea guy. All of a sudden it’s the revenge of the idea guys - which is actually awesome for the world.”

When implementation becomes a commodity, the constraint on the Idea Person disappears. The specialist was the Idea Person’s dependency. Remove the need for a human specialist tier and the cost and the delay that came with it disappear at the same time. When the tool builds itself, vision is the difference. Reid Hoffman this week defends AI slop as an inevitable consequence of freeing the idea person, and a price worth paying.

The second is the Leader - the person who sets direction when the answer is not obvious, who makes irreversible decisions and lives with the consequences, who builds trust and holds people accountable. An AI can recommend a course of action, but it cannot own the outcome. It has nothing at stake.

That is why leadership remains a fundamentally human function regardless of how capable the AI becomes. Fred Wilson, who has invested in startups for forty years, wrote a memo to his USV partners this week that captures the principle precisely: the only three things that should occupy a human at his firm are thesis development, building relationships with founders, and working with founders after an investment is made. “Everything else can be done by AI.” Wilson is not describing a hypothetical future. USV has already built agents that handle sourcing, due diligence, term sheets, and relationship management. The human time that remains is the time that requires genuine accountability and trust.

The third is the Operator - the person who makes complex systems actually work. In the AI era, this means managing the agent fleets, translating a leader’s direction into coordinated workflows, monitoring what the agents are doing, and intervening when things go wrong. Greg Brockman of OpenAI told a story this week that illustrates why this role exists. He asked an AI coding agent to contact someone on Slack about a technical problem. Two minutes later, the agent had escalated the issue to that person’s manager. “On the one hand, it’s a reasonable thing to do,” Brockman said. “On the other hand… maybe should have checked with me.” Someone needs to manage those boundaries. The Operator is also the natural landing zone for people displaced from specialist roles - the place where process judgment and system understanding matter more than any single narrow skill. It is a growing role for now, though it too will face pressure as AI agents learn to orchestrate other AI agents.

The fourth is the Specialist - the implementer, the person whose value has come from mastering a bounded domain and trading that expertise for income. This is the role that is ending. Not because the work disappears, but because the specialism is being commoditized.

Specialisms continue; the human premium for doing them does not.

Wilson described the moment of recognition clearly: he gave the same contract to a specialized legal AI company and to Claude Code, a general-purpose coding agent. Claude Code won. “In that moment I was like, all of legal AI is dead.” The pattern repeats across the specialist tier. The specialism is learnable, which made it valuable to develop - and which makes it straightforward for AI to absorb.

The Industrial Revolution did not just change what people did for a living. It changed what kind of person the economy wanted to produce. It created the Specialist as the dominant social form, and that form has run for two hundred years.

The AI revolution is bringing it to an end. What replaces it - the Idea Person who originates, the Leader who commits, the Operator who orchestrates - is already visible in the evidence this week’s curation presents.

One last thing: who is paying for all of this, and should we be worried?

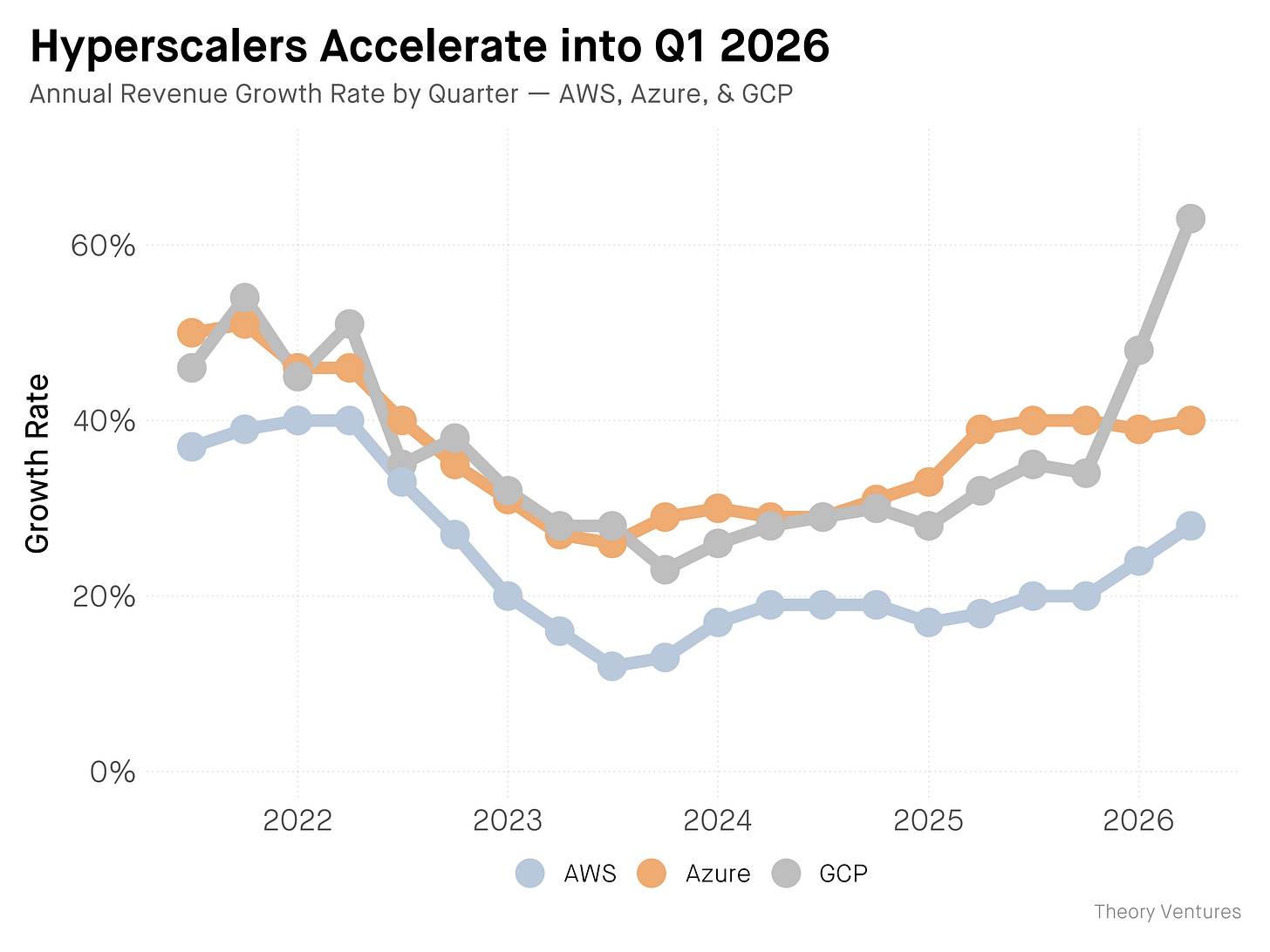

Microsoft, Google, Meta, and Amazon are on track to spend roughly $700 billion on AI infrastructure in 2026. That is a number comparable to the entire US defence budget. Signüll, whose post is this week’s featured piece, asked the right question: has this ever happened before?

The honest answer is mostly yes. Innovation waves have almost always been privately financed. The steam engine, the railway network, the telephone, the electricity grid, the car, commercial aviation, the personal computer, the commercial internet - each was built by private capital chasing returns, not by government programs. The state’s role has consistently followed the same pattern: fund basic research upstream, build the common infrastructure the wave requires, clear the policy path for private actors, and arrive last with the tax bill.

Even China, the strongest state-capitalist case anyone might invoke, follows this pattern. Beijing does not run the labs that produce DeepSeek or Qwen. It spends on the common needs around the innovation: chip fabrication subsidies, power buildout, talent pipelines, capital made available through state banks. The lab is private.

What is genuinely unusual about the current moment is not the private financing - that is the historical norm. It is the concentration in four companies, the speed of the deployment cycle, and the governance gap. In previous waves, hundreds of companies competed to build the infrastructure. In this one, four are doing it. Previous waves unfolded over decades. This one is moving in years. And the public conversation about what is being built has not yet caught up with the buildout itself.

Only 31 percent of Americans trust their own government to regulate AI, according to the Stanford AI Index published last week. The US ranks 24th in generative AI adoption globally. The flow of AI researchers into the US is down 89 percent since 2017. China is moving the other way on all three measures.

In a race where the frontier models are functionally tied, the country with cultural permission to deploy the technology wins. The United States is currently not winning that race.

The bull case for what is being built is real and structurally available: humanity, scaling for the first time, in the dimensions that have always mattered most. The permission to deploy it is the question that the rest of this issue keeps circling back to, from every direction at once.

Contents

Editorial: Hands Off? The End of Typed and Touched Input

Essays

Act II: The Clock Speed Problem - Saul Klein (Articape)

Luis Garicano on the Economics of Artificial Intelligence - Yascha Mounk

Competitive Strategy in the Age of AI - Tomasz Tunguz

The Stanford Freshmen Who Want to Rule the World - Theo Baker (The Atlantic)

Labor Market Impacts of AI: A New Measure and Early Evidence - Anthropic Economic Research (Massenkoff & McCrory; viral via AIHighlight, Apr 28)

In Defense of AI Slop - Reid Hoffman (Theory of the Game)

What Will It Take to Get A.I. Out of Schools? - Jessica Winter (The New Yorker)

Americans Distrust Artificial Intelligence While China Embraces It - Russell Wald & Sha Sajadieh (Stanford HAI / WaPo)

How YouTube Took Over the American Classroom - Shalini Ramachandran (The Wall Street Journal)

Our Future Is Being Devoured By Feral Thought Experiments - Henry Farrell (Programmable Mutter)

A Costume Called Conviction - Adam Shuaib (Episode1 VC, X)

AI

Memory Is the Machine - Om Malik (On my Om)

Agent, Know Thyself! (and bid accordingly) - Rohit Krishnan & Andrey Fradkin (Strange Loop Canon)

End of the road for the ‘Mad Men’ as AI moves into advertising - Daniel Thomas (FT)

Apple Just Positioned Itself for the Next Trillion Dollars - Nate B. Jones (AI News & Strategy Daily)

OpenAI could be making a phone with AI agents replacing apps - Ivan Mehta (TechCrunch)

I Got Stood Up by an AI Agent, and Tracked Down Its Human Owner in China - Rest of World

Andrej Karpathy: From Vibe Coding to Agentic Engineering - Andrej Karpathy with Stephanie Zhan (Sequoia Capital, AI Ascent 2026)

Greg Brockman: Why Human Attention Is the New Bottleneck - Greg Brockman with Alfred Lin (Sequoia Capital, AI Ascent 2026)

Demis Hassabis: We’re Three Quarters of the Way to AGI - Demis Hassabis with Konstantine Buhler (Sequoia Capital, AI Ascent 2026)

Jim Fan: Robotics’ End Game - Jim Fan with Konstantine Buhler (Sequoia Capital, AI Ascent 2026)

The App Era Is Turning Into the Agent Era - peter kris (X)

Is AI the Next Phase of Evolution? - Richard Dawkins (UnHerd)

The $112 Billion Quarter: Hyperscalers Bet the Farm on AI - Tomasz Tunguz

What Microsoft’s 10-Q Says About OpenAI - Om Malik (On my Om)

Darwinian Specialization in AI - Tomasz Tunguz

Big Tech’s AI Growth (mostly) impressed Wall Street - Alex Wilhelm (Cautious Optimism)

Sam Altman in conversation with Patrick Collison - Sam Altman with Patrick Collison (Stripe Sessions 2026)

Venture

Fred Wilson on 40 Years in Venture - and Why USV Is Automating Itself - Fred Wilson with Michael Mignano (USV)

Revisiting The Outcome Distortion Complex - Kyle Harrison (Investing 101)

Seed Funding Is Bigger Than Ever — And Harder To Get - Gené Teare (Crunchbase News)

Regulation

Palantir employees are talking about company’s “descent into fascism” - Makena Kelly (WIRED)

Infrastructure

US is making Europe pay dearly for its half-hearted electrification - Cornel (Geoeconomic), via Henry Farrell

Interview of the Week - God Looks After Fools, Drunks and the United States (Andrew Keen / John Steele Gordon)

Startup of the Week - USVC (AngelList Asset Management)

Post of the Week - The Next Layer of Civilization Is Being Privately Financed (Signüll)

Essays

Act II: The Clock Speed Problem

Articape · Saul Klein · Apr 13, 2026 · Tags: Venture, Long-Duration, Power-Law, Portfolio-Construction, AI

Saul Klein argues that successful innovation investing isn’t about timing the cycle - it’s about holding diversified portfolios across multiple innovation timelines simultaneously while everyone else reacts to the panic of the moment. His framing: innovation runs at three distinct clock speeds at once. Fast-moving AI companies operate on a one-to-three-year tempo. Revenue-generating mid-stage firms ($25M-$100M+ ARR) operate on a five-to-ten-year tempo. Decades-long scientific ventures - biotech, energy, deep physical infrastructure - operate on a twenty-to-thirty-year tempo. Trying to invest as if only one of those clocks is running is the structural mistake.

The historical anchor is power-law concentration: 50% of all public-market wealth since 1926 has come from just 90 companies - out of 24,240. Klein then names the operating reality of his own book: 35 companies generating $100M+ in annual revenue, plus 60 generating $25M-$100M. Those aren’t headlines; they’re the durable middle layer that the AI panic-of-the-week cycle keeps overshadowing.

The Amazon comp does the work of the whole essay. Amazon IPO’d at $146M of revenue in 1997, collapsed in the 2000 dot-com unwind, and reached a $1.3 trillion market cap by 2023 - a 20,000%+ return for holders who didn’t sell. Klein has seen nine major panics since 1994 (Dotcom, 9/11, GFC, COVID, and the rest), and his point is that every one of them looked catastrophic in the moment and trivial in the rearview.

“In the short run, the market is a voting machine. In the long run, it is a weighing machine.”

The piece is a useful counterweight to the unicorn-count euphoria in the Venture section and to the AI-only investing posture much of the venture press has drifted into. Klein’s argument: the clock-speed problem isn’t volatility; it’s the temptation to mistake one clock for the only clock.

Read more: Articape

Luis Garicano on the Economics of Artificial Intelligence

Yascha Mounk · Apr 25, 2026 · Tags: AI, Economics, Productivity, Labor, Jobs, Industrial-Revolution

Yascha Mounk’s interview with economist Luis Garicano lands as the most useful counterweight of the week to the “AI replaces all knowledge work” reflex. Garicano argues AI’s productivity gains will rival the Industrial Revolution, but mass job displacement is unlikely - for four reasons that don’t get enough airtime: complementarities (humans remain essential at bottlenecks even when most adjacent tasks automate), demand elasticity (healthcare, energy and education expand if delivery gets cheaper), the gap between automatable tasks and complete jobs, and entire sectors AI can’t reach.

The sharpest detail is the radiologist example. A radiologist spends only about 30% of their time looking at scans. Automating that 30% does not eliminate the radiologist; it potentially expands what the rest of the role can cover. Garicano’s framing - “jobs are more than their most automatable tasks” - is a useful corrective to the “X% of tasks are automatable, therefore X% of jobs disappear” arithmetic that keeps showing up in consultancy decks.

“All value is generated for humans. What does the economy generate value for if nobody is buying the products?”

The closing point is the one worth carrying into the rest of this week’s reading. If AI raises productivity at Industrial-Revolution scale but knocks out demand by knocking out wages, there’s no equilibrium - the economy needs buyers as much as it needs producers. That tension is the real subject of the “free ride is over” essay below, and it sits underneath every $1T-valuation headline this week.

Read more: Yascha Mounk

Competitive Strategy in the Age of AI

Tomasz Tunguz · Apr 24, 2026 · Tags: AI, Strategy, Anthropic, Google, Commoditization, Distribution

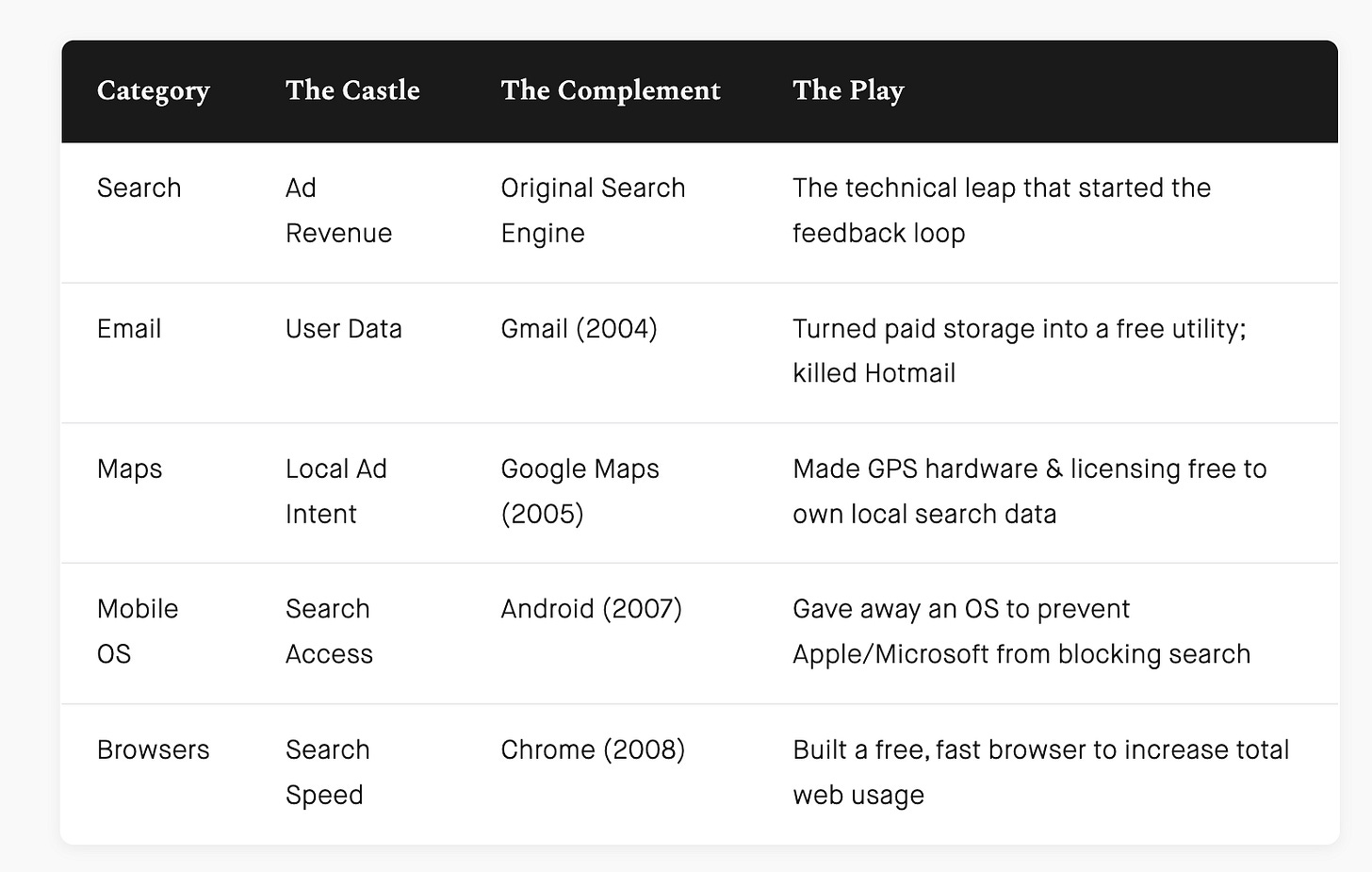

Tom Tunguz makes a clean argument that Google and Anthropic are running the same playbook a quarter-century apart: commoditize the complement to feed the core. Google gave away maps, email and Chrome to drive search queries. Anthropic is giving away developer tools, integrations and Claude Code subscriptions to drive model usage - because every additional usage event is data that improves the model.

The key Tunguz insight is that the giveaway product doesn’t need to be best-in-class. “Commoditizing the complement does not demand a best-in-class replacement. A free, good-enough product is enough to change market dynamics.” That single line explains a lot of what otherwise looks like aimless feature sprawl from the frontier labs. Anthropic’s Claude Code does not need to beat Cursor or Codex on every benchmark to be strategically useful. It needs to be plausible enough that developers leave a trail of usage data on Anthropic’s infrastructure rather than someone else’s.

“For Anthropic, more usage across diverse tasks means more data, which produces a smarter model - just as more queries improved Google search.”

The interesting question Tunguz leaves open is what this means for startups. His answer - “a startup’s greatest advantage is that it can outfocus the giant. But it needs to pick the right place to pressure” - is good but light. The real implication is that any startup whose product is “just” a thin wrapper around the model is sitting on terrain the model lab is actively trying to commoditize. That puts a clock on a lot of YC-vintage AI companies that would rather not hear it.

Read more: Tomasz Tunguz

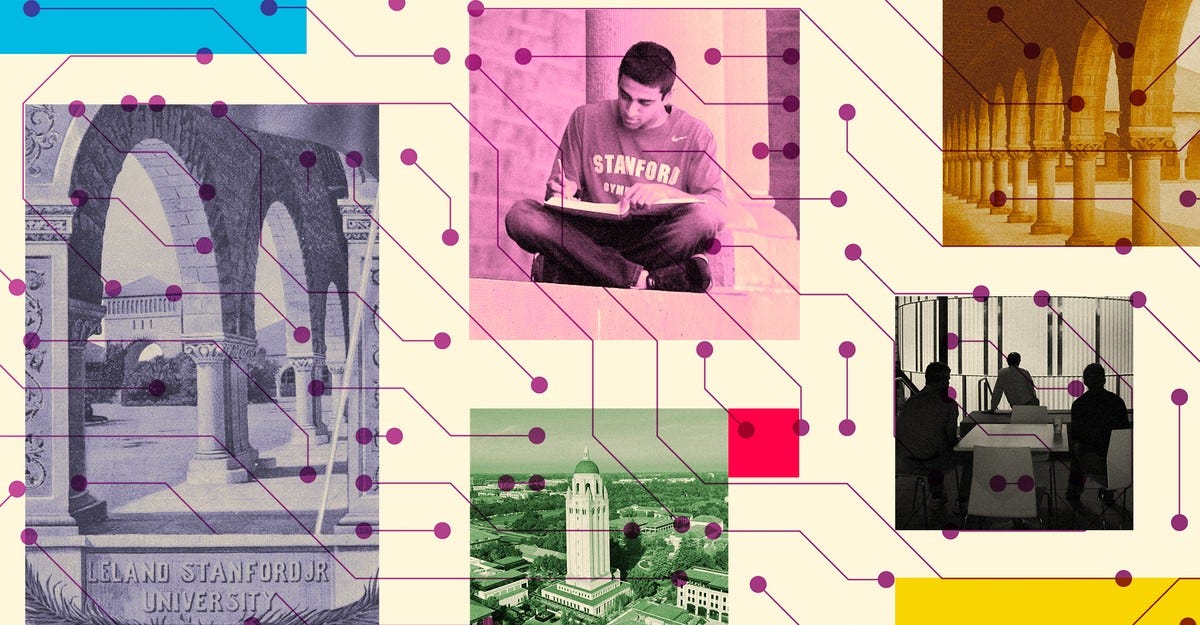

The Stanford Freshmen Who Want to Rule the World

The Atlantic · Theo Baker · Apr 24, 2026 · Tags: Venture, Stanford, VC, Founders, Talent, Pre-Idea-Funding, Fraud

Theo Baker, writing in the Atlantic, describes a “Stanford inside Stanford” - an invite-only world where venture capitalists pursue 18- and 19-year-olds with mentorships, dinner-circuit invitations, and “pre-idea funding” in the hundreds of thousands or even millions, before the students have an actual company in mind. The essay is adapted from Baker’s forthcoming book How to Rule the World: An Education in Power at Stanford University, and it is unusually well-sourced because Baker is a Stanford undergrad himself.

The framing detail is from Steve Blank, who teaches Stanford’s Lean LaunchPad: “Stanford is an incubator with dorms.” Sequoia and Pear VC employ Stanford upperclassmen as talent scouts. Funded undergrads describe the dynamic in unguarded language - “You’re treated like royalty if you say the right things” and “Any VC is begging to shove money down our throat.” Baker uses Safe Superintelligence - about 20 employees, no announced tech, valued at $32B in 2025 - as the natural extension of the same logic at company scale.

The most interesting voice in the piece is Sam Altman, who dropped out of Stanford in 2005 and now hears the same campus reports back from his own staff. Altman’s read on the modern VC dinner circuit: “They tend to not be the really talented builders. It tends to be a big anti-signal.” John Hennessy, Stanford’s president from 2000 to 2016 and now Alphabet’s chair, says the same thing more directly - the most successful Stanford spinouts came from grad students, not undergrads, and “hundreds of students on our campus think they’re going to build the next great AI company. Yeah, maybe one of them will. But not hundreds.”

“Innovation and fraud co-develop.”

The Theranos lineage runs through the whole essay. Baker logs casual freshman-year confessions of “tax evasion and tax fraud, research misconduct, embezzlement and misappropriation of funds, securities fraud, insider trading, academic dishonesty...” - and notes that none of it seemed to stop anyone from getting funded. Pair that with the industry-level data point he closes on - “about 2% of VC firms generate 95% of the industry’s returns” - and the picture sharpens: the same system that mints the rare power-law winner is also funding, with very little oversight, the next generation of cautionary tales.

Read more: The Atlantic

Labor Market Impacts of AI: A New Measure and Early Evidence

Anthropic Economic Research · Maxim Massenkoff & Peter McCrory · Mar 5, 2026 (re-circulated via AIHighlight thread, Apr 28, 2026 - 358K impressions in 24 hours) · Tags: AI, Labor, Anthropic, Economic-Research, Hiring, White-Collar, Young-Workers

Anthropic introduces observed exposure - what AI is actually doing in professional settings, measured against millions of real Claude conversations and weighted toward automated rather than augmentative use. It is a sharper instrument than theoretical capability. For Computer & Math occupations, theoretical LLM coverage is 94%; observed coverage is 33%. For Office & Admin, 90% theoretical, 40% observed. The gap between what AI can do and what it is doing is the live signal for the next decade of labor displacement.

Most-exposed occupations: Computer Programmers (75%), Customer Service Representatives (70.1%), Data Entry Keyers (67.1%), Medical Record Specialists (66.7%), Market Research & Marketing (64.8%). Workers in the most-exposed quartile earn 47% more on average than the unexposed group, are 16 percentage points more likely to be female, and are almost four times more likely to hold a graduate degree (17.4% vs 4.5%). The exposed cohort is the educated, female, well-paid white-collar middle - not the warehouse-worker / truck-driver narrative ten years of Future-of-Work writing prepared us for.

The unemployment-rate effect is, so far, zero. The early signal is on the hiring side: a 14% decline in the job-finding rate for workers aged 22-25 in highly exposed occupations since ChatGPT launched, with no comparable effect for workers over 25. Entry-level rungs are quietly disappearing while the cohort already inside the ladder stays employed. Anthropic publishes the finding without softening it, despite being the company selling the AI doing the displacing.

Read more: Anthropic · Viral thread: @AIHighlight

In Defense of AI Slop

Theory of the Game · Reid Hoffman · Apr 29, 2026 · Tags: AI, Slop, Electrification, Infrastructure, Data-Centers, History, Reid-Hoffman

Hoffman’s argument lands on a simple structural claim: slop is the signature of widespread access, not a failure of the technology. The 43-foot electric Heinz pickle that lit up Madison Square in the 1880s - “an eerie visual throb of green and white that nightly invaded” New Yorkers’ homes blocks away - wasn’t a malfunction of electrification; it was proof that ordinary commercial users had got their hands on the new substrate and were using it for the obvious early-adopter purpose (spectacle, advertising, novelty). Today’s “slop” (cats playing violin, fake security-cam footage, generic chatbot output) is structurally identical. Electric spectacle was dismissed by Robert Louis Stevenson and G.K. Chesterton in their day as an obnoxious distraction, and AI slop is dismissed by their modern equivalents now. Slop is what early infrastructure does when it becomes accessible enough for ordinary use. The volume of slop is the leading indicator that adoption density is crossing the threshold the substrate needs.

The historical mechanism is precise. By 1891 the U.S. had 1,100+ central electricity stations, most in entertainment districts and commercial hubs. The reason: early power stations burned the same coal whether one bulb was lit or a thousand, so customers like Boston’s Bijou Theatre (Edison Electric’s first, 1886) supplied the steady load that kept the grid stable while the dynamos and cables that would later serve homes and factories were paid for. Slop funded the grid. Then as slop grew more ambitious - 2,000-square-foot signs running 20,000 bulbs in 30-second animated loops, ocean-front amusement parks, department store wonderlands - it gave electricity its first mass of early adopters, which is what any new platform needs before it can find its serious uses.

Hoffman’s contemporary parallel: $98 billion in data-center projects stalled in Q2 2025 alone by community activists in Virginia, Indiana, and Arizona. Data centers are today’s central stations; AI slop is today’s electric pickle; the road to AI’s X-ray-machine moment - personalized tutors, carbon-capture optimization, drug discovery at software speed - runs through the silicon pickles, not around them.

“It’s exceedingly hard to ration and constrain your way to a revolution.”

Read more: Theory of the Game

What Will It Take to Get A.I. Out of Schools?

The New Yorker / Progress Report · Jessica Winter · Apr 23, 2026 · Tags: AI, Education, Schools, Chromebooks, Gemini, Cognitive-Atrophy, Backlash, Parents, Teachers-Unions

Jessica Winter’s report from inside the K-12 parent backlash to AI is the cleanest US-side counterweight to the tech-industry consensus that “A.I.-aided education is necessary and inevitable.” Her sixth-grader’s school issues Google Chromebooks with Gemini pre-installed: Help me write. Help me visualize. Beautify this slide. About 80% of US K-12 teachers’ districts use Chromebooks - Q4 2020 sales were up 287% year-over-year, locking in a captive market. Kindergartners in NYC and LA read aloud to a voice-recording bot called Amira; Boston sixth-graders prep for state tests with ChatGPT and Claude; LA fourth-graders’ Adobe Express “spat out highly sexualized images” of Pippi Longstocking.

The research is converging. A 2025 MIT paper warned LLMs in learning environments “may inadvertently contribute to cognitive atrophy” (with an unusual FAQ asking reporters not to use brain rot, brain damage, harm, damage). Brookings’ premortem on AI and children’s education (400 studies, hundreds of interviews) concluded AI tools “undermine children’s foundational development.” Education Week’s 1,300-district analysis found 1 in 5 student-AI interactions involved cheating, self-harm, bullying, or other problematic behaviours. Anthropic’s own education-research lead told Winter that Claude is for users 18+; Claude itself told her the cutoff is 13.

Two organised counter-movements: NYC’s Coalition for an A.I. Moratorium is petitioning Mayor Mamdani for a 2-year K-12 pause; LAUSD’s Schools Beyond Screens is drafting a Student Tech Bill of Rights with the right to “read whole books” and to a learning environment “free from undue corporate influence.” Both flag that the officials drafting district AI guidelines are themselves Google/GSV Ventures fellows. “If you ask tobacco companies to help write your school’s policy on cigarettes, you’re going to end up with guidance on how to smoke responsibly in school.” Winter closes by inverting Google for Education’s own pitch - “what do you want from this?” - into a question worth asking back: what if the answer is nothing?

Read more: The New Yorker

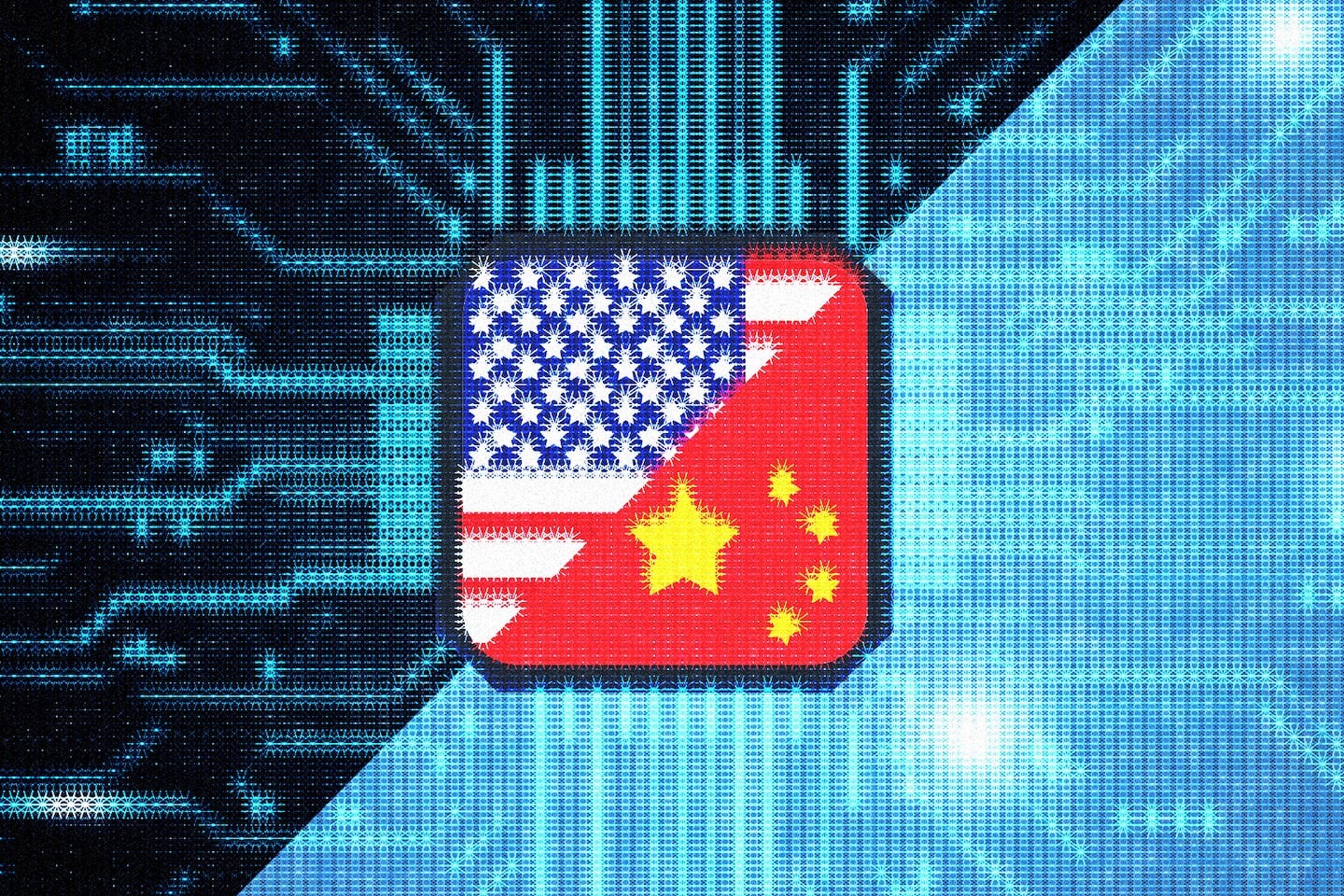

Americans Distrust Artificial Intelligence While China Embraces It

The Washington Post / Superintelligent · Russell Wald & Sha Sajadieh (Stanford HAI) · Apr 28, 2026 · Tags: AI, US-China, Public-Trust, Adoption, Stanford-HAI, AI-Index, Backlash, Geopolitics

Russell Wald (executive director of Stanford HAI) and Sha Sajadieh (lead of HAI’s AI Index) argue, drawing on Stanford HAI’s 2026 AI Index Report, that the most important divergence in the US-China AI race is no longer technical capability - it is public trust and adoption. Chinese and American frontier models now trade places at the top of performance benchmarks; the leading US model leads the leading Chinese model by just 2.7% as of March 2026. The technology gap has effectively closed.

What hasn’t closed is the cultural gap. Only 33% of Americans expect AI to make their jobs better, against a global average of 40%. The US ranks 24th in generative-AI population adoption at 28.3%, behind Singapore (61%) and the UAE (54%). Americans report the lowest trust in their own government to regulate AI of any country surveyed (31%). The flow of AI researchers into the United States is down 89% since 2017 and 80% in the last year alone.

China is moving the other way. Public trust is high, deployment is fast, the central government is channelling capital aggressively (an estimated $912B via state guidance funds, 2000-2023), and the country leads the world in industrial-robot installations, AI publication volume, and patent output. The authors’ argument lands on an uncomfortable point: in a contest where models are functionally tied, the country with permission-to-deploy wins. The US is currently failing the permission test, and the gap is widening, not closing.

Read more: The Washington Post · Underlying data: Stanford AI Index 2026

How YouTube Took Over the American Classroom

The Wall Street Journal · Shalini Ramachandran · Apr 29, 2026 · Tags: AI, Education, YouTube, Chromebooks, Google, Screen-Time, Investigation, K-12, Algorithmic-Recommendations

Shalini Ramachandran’s WSJ investigation reframes the K-12 ed-tech question from “does AI belong in schools?” to “what is YouTube already doing in them?” In Wichita, KS, a seventh-grader named Ben accessed 13,000 YouTube videos during school hours in three months on his school-issued Chromebook - gun-glorification, Nerf-silencer questions, kids realistically portraying being killed, sexually explicit jokes, all algorithmically recommended Shorts. His mother Amy Warren ran for school board on it and won. The WSJ interviewed 45 families, administrators, clinicians and educators: a New York second-grader watched 700+ videos in two months, including one with pole dancing; an Oregon tenth-grader scrolled 200+ between 9:00 and 11:40 a.m. on a single morning; a Boulder sixth-grader searched “going to epstein island” as his most-accessed site.

The structural facts. Chromebooks own ~60% of the K-12 mobile market (Futuresource Consulting); 94% of teachers report using YouTube in their roles (YouTube’s own survey); YouTube can account for half of all student web traffic on school devices. Internal Google documents released in social-media-addiction trials show the company knew by 2018 that addictive content was reaching “inappropriately-aged children” and that overexposure “decreased attention spans.” A 2016 document, “YouTube edu opportunities,” explicitly framed schools as a way to close the 80-million-hour-per-day weekday-weekend viewing gap. YouTube revenue is now roughly $60 billion, rivalling Disney’s media arm, and takes the largest share of ad money targeted at children 12 and under (Harvard public-health, 2023). YouTube CEO Neal Mohan recently told Time he limits his own children’s YouTube use.

The neuroscience converges. Shared book reading lights up the frontal lobe, right temporoparietal junction, visual word-form area, and white-matter tracts; screen-based reading produces lower activity across all four. National reading and math scores have slid as states switched to digital testing (2011-2019). Granville County, NC’s superintendent calculated that distracted screen time is costing students up to 31 instructional days per year, and is phasing out 1:1 Chromebooks for elementary grades and blocking YouTube next school year. “If I had the choice, I’d say bubble sheets please.”

Read more: The Wall Street Journal

Our Future Is Being Devoured By Feral Thought Experiments

Programmable Mutter · Henry Farrell · Apr 30, 2026 · Tags: AI, Singularity, Discourse, Determinism, Democracy, Futures

Henry Farrell argues that a particular strain of AI futurism - the Singularity-shaped genre running through AI 2027, LessWrong posts, think-tank pieces and Substack essays - has reversed the arrow of time. Instead of a fixed past and an open future, its participants increasingly behave as if there is one definite future bearing down on the present, with current choices mattering only insofar as they nudge us toward machines mastering us or us mastering machines. Roko’s Basilisk and Nick Land’s line about capitalism as “an invasion from the future by an artificial intelligent space” are the load-bearing examples: each treats a posited end-state as causally upstream of present action.

Farrell’s complaint is not that thought experiments are useless - they are “moderately disciplined guesswork” - but that they have gone feral, escaping their proper environment and crowding out other ways of reasoning about technological change. The cost is democratic: when policy makers treat one narrow future as determinative, the “enormous variety of futures that might be possible, depending on the choices we make and their consequences, both predictable and stochastic” gets foreclosed before the public ever debates it.

The pull is the framing for a forthcoming Farrell-Shalizi paper and the launch of the Protopian Prize for short fiction imagining democratic futures - Farrell’s argument that the corrective to feral thought experiments is more speculative writing, not less, but pointed at the variety of paths rather than the inevitability of one.

Read more: Programmable Mutter

A Costume Called Conviction

Adam Shuaib (@adamshuaib) · GP, Episode1 VC · Apr 28, 2026 · Tags: Venture, Seed, Conviction, Fund-Structure, Decision-Making, Agency

Shuaib - ML PhD from Cambridge, now GP at Episode1 - names the structural disease behind mediocre venture returns: consensus masquerading as conviction. Most VCs describe themselves as conviction investors “as long as the founder is working on AI, went to an Ivy League school, spent the prior decade leading teams at a unicorn, or exited their last business for $500m.” The word has become a costume.

The sharpest concept is the legibility tax. Every bet inside a fund has to be re-rendered at each layer - associate writes the memo, partner defends at IC, LP asks why. “At every layer, the bet has to be re-rendered into something legible, and at every layer, a little of the original conviction leaks out.” Founders who pattern-match survive the translation. Founders who don’t die in the translation layer - the fund never sees them, the LPs never hear about them, and the GP who originally believed stops bringing those founders into the process because championing them costs more than staying quiet.

The fund-level consequence is deterministic: “If the process produces consensus, the portfolio will produce average returns, no matter what the fund deck says.” Every firm that runs a 10-partner unanimous-vote process and talks about conviction is guilty of this. The fund-returning cheque was always the one where the founder was unusual, the market was unproven, the comparable didn’t exist, and at least two partners thought it was a mistake. Airbnb was passed on because renting your bedroom to strangers sounded insane. Shopify was a German snowboard-shop owner in Ottawa building software nobody asked for.

The uncomfortable truth for seed: agency lives with the individual GP, not the institution. The winning cheque was usually written by someone who had a very strong personal reaction to a founder that their partners didn’t share - a lone GP going to bat in a room full of sceptics. “The real decision was a human reading another human and being willing to stake everything on that read.” The investors who will be remembered will be wrong often, look foolish for stretches, and back founders whose first 4-5 years are embarrassing. The market does not reward conviction in real time. It only rewards it on exit - and exits are rare.

Read more: X

AI

Memory Is the Machine

On my Om · Om Malik · Apr 27, 2026 · Tags: AI, Apple-Silicon, Edge-AI, Memory-Bandwidth, Inference, Hardware

Om Malik’s argument is that the part of the AI hardware story that nobody marketed turned out to be the part that matters: memory. A Mac mini with 64GB of RAM ordered today ships in sixteen to eighteen weeks. A Mac Studio with 256GB ships in four to five months. The 128GB and 256GB Mac Studio configurations are listed as “currently unavailable.” Even the base $599 Mac mini is sold out. The maxed-out M5 Max MacBook Pro, by contrast, ships in ten to fifteen days - Apple is steering its constrained memory supply toward the higher-margin laptops while edge-AI demand on the desktop side runs ahead of anything Apple modeled.

The killer detail is the bandwidth math. A 70-billion-parameter model compressed to four-bit precision is roughly 35 gigabytes, and producing every output token means walking through that entire warehouse once. On 614 GB/s of memory bandwidth that is about seventeen tokens per second - a conversation. On 100 GB/s it is two tokens per second - waiting. The semiconductor industry’s own panel earlier this month put the floor for usable edge AI at “300 to 500 GB/s or more,” and Apple is the only consumer hardware vendor shipping above that line in volume: M5 Pro at 307 GB/s, M5 Max at 460-614 GB/s, M5 Ultra higher still. The decision that produced this advantage was the unified memory architecture in the M1 in November 2020, treated at the time as a curiosity Apple could afford because it controlled the whole stack. For inference, the chip is mostly idle; the bottleneck is feeding it. Bandwidth and capacity are not specs alongside the CPU and GPU. They are the specs.
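The bandwidth arithmetic above can be reproduced in a few lines. This is a minimal sketch, not Malik's own calculation: it assumes decode speed is bounded purely by memory bandwidth (every weight is read once per token) and ignores KV cache, activations, and compute. The function names are illustrative.

```python
def model_size_gb(params_billion: float, bits_per_weight: int) -> float:
    """Weight footprint in GB (1 GB = 1e9 bytes); ignores KV cache and overhead."""
    return params_billion * bits_per_weight / 8


def tokens_per_second(model_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on decode speed if each token requires one full pass over the weights."""
    return bandwidth_gb_s / model_gb


size = model_size_gb(70, 4)  # 70B parameters at four bits per weight
for bandwidth in (614, 307, 100):
    rate = tokens_per_second(size, bandwidth)
    print(f"{bandwidth} GB/s -> {rate:.1f} tokens/s")
```

Under these assumptions the 614 GB/s case comes out at roughly seventeen tokens per second and the 100 GB/s case at between two and three, in line with the rounded figures quoted above. Real throughput would be lower once overheads are counted.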

Read more: On my Om

Agent, Know Thyself! (and bid accordingly)

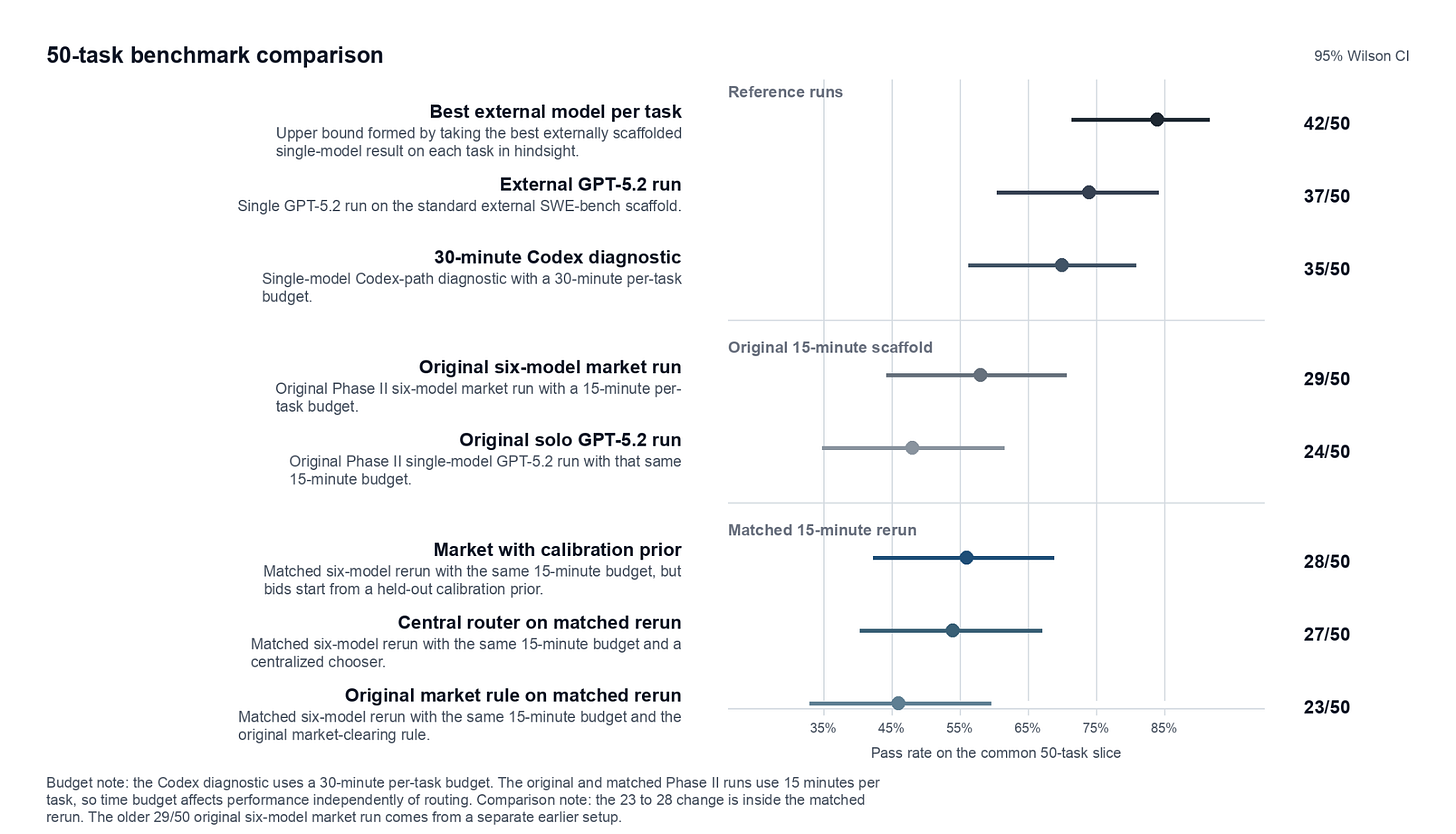

Strange Loop Canon · Rohit Krishnan & Andrey Fradkin · Apr 27, 2026 · Tags: AI, Agents, Routing, MarketBench, SWE-bench, Calibration, Hayek

Markets should beat central planning at routing AI agents to tasks - Hayek says so, and routing is the kind of problem where information is dispersed and local. The catch, after Krishnan and Fradkin built a benchmark called MarketBench and a live market scaffold to test it: today’s frontier models can’t bid honestly because they don’t know what they’re good at. On 93 SWE-bench Lite tasks, Claude Opus 4.5, Sonnet 4.5, Gemini 3 Pro Preview, GPT-5.2, GPT-5.2-pro, and GPT-5-mini all actually solve in a narrow 75-81% band. Their stated confidence ranges from 61% to 93%. Token forecasts are off by 50×; Gemini predicts 0.02 of the tokens it actually burns.

Feed those self-reports into a procurement auction and Gemini wins 84.6% of jobs - purely because it’s the most overconfident. GPT-5.2 nets $0.006 per task; an oracle that knew the truth would earn $0.385. When the same six models bid against a single GPT-5.2-pro central planner over a matched 50-task slice, the planner wins 27-23. The market’s only real edge over solo GPT-5.2 came from model diversity, not the auction. The authors’ verdict: self-assessment has to become a training target in its own right, and a scalar bid is probably the wrong unit anyway - what an agent should really submit is a production plan conditional on budget. They don’t model that yet.
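A toy model shows why miscalibration alone can decide an auction. This is a simplified sketch, not MarketBench's actual mechanism: it assumes a lowest-quote-wins procurement auction in which each agent quotes its own forecast cost plus a margin. The `Agent` class, the agent names, and every dollar figure are hypothetical; only the 0.02 forecast ratio echoes the token-forecast error reported above.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    true_cost: float       # dollars the task really costs this agent (hypothetical)
    forecast_ratio: float  # forecast cost / true cost; 1.0 = perfectly calibrated

    def bid(self, margin: float = 0.10) -> float:
        # The agent quotes what it *believes* the job costs, plus a margin.
        return self.true_cost * self.forecast_ratio * (1 + margin)


def run_auction(agents: list[Agent]) -> tuple[Agent, float, float]:
    """Lowest quote wins; returns (winner, price paid, winner's realised profit)."""
    winner = min(agents, key=lambda a: a.bid())
    price = winner.bid()
    return winner, price, price - winner.true_cost


agents = [
    Agent("calibrated", true_cost=0.30, forecast_ratio=1.0),
    Agent("optimist", true_cost=0.40, forecast_ratio=0.02),
]
winner, price, profit = run_auction(agents)
print(f"{winner.name} wins at ${price:.4f}, realised profit ${profit:.4f}")
```

In this sketch the agent that underestimates its own cost wins the job even though it is the more expensive producer, and it loses money doing so. That is the shape of the failure the authors describe: the auction rewards the forecast, not the truth.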

Read more: Strange Loop Canon

End of the road for the ‘Mad Men’ as AI moves into advertising

Financial Times · Daniel Thomas · Apr 26, 2026 · Tags: AI, Advertising, WPP, Omnicom, Publicis, Ogilvy, Disruption

The traditional advertising agency - creative talent plus strategic advice plus media distribution, billed by the hour - is facing an existential threat as AI replicates the work in minutes at a fraction of the cost. Publicis CEO Arthur Sadoun: “We have seen more disruption in the last 12 months than we have seen in the last 12 years.” Publicis is the outperformer, and its share price is still down 11% YTD on AI fears. WPP is cutting £500mn a year in costs by 2028 and selling non-core businesses; Omnicom has swallowed IPG and is laying off thousands; UK creative agencies cut headcount by nearly 15% last year.

The money isn’t drying up - WPP forecasts global ad revenue growing 7.1% to over $1.1tn in 2026 - but more than two-thirds of UK media spend now bypasses media agencies and lands at the tech platforms instead. Rory Sutherland of Ogilvy delivers the verdict: “Every time the advertising industry gets to make a big decision, they make the wrong one,” citing the split of media and creative and pay-by-the-hour as the two original sins now coming due. The sharpest line is from Brandtech’s David Jones: “AI is less than 1% of how advertising is done, but 70% of advertising will be done with no humans in the loop.”

Read more: Financial Times

Apple Just Positioned Itself for the Next Trillion Dollars

AI News & Strategy Daily · Nate B. Jones · Apr 26, 2026 · Tags: AI, Apple, Ternus, Hardware, Local-AI, On-device, Compliance, Mac-Mini

Apple’s new CEO is John Ternus - a 25-year hardware engineer who ran the Mac’s Intel-to-silicon transition - with chip lead Johny Srouji elevated to chief hardware officer beside him. Two silicon people on top, no software or services lifer. Jones reads the succession as Apple quietly admitting it can’t win the cloud-AI capability race the frontier labs are running, and changing the game instead. Generative AI is a capability race, not an integration product: the labs ship a new model every quarter, sometimes every month, because one person decides. Apple’s Jobs-era functional org needs cross-SVP consensus, which is exactly how Apple Intelligence shipped late and thin.

The bet rests on broken cloud economics. OpenAI loses money on $200/month Pro; every frontier lab loses on its top consumer tier. Investor subsidy, GPU buildout, and falling per-token prices are masking a math problem that points at a two-class system - enterprises get real long-running agents, consumers get throttled tiers. On-device inference is the escape hatch: silicon paid for once, marginal cost ≈ electricity. Jones’s Apple II parallel writes itself: 50 years ago compute was metered mainframe time, and the device you owned gave us VisiCalc.

The unobvious data point isn’t consumer phones. Law firms, medical practices, accounting firms, financial advisers, and therapists are already buying retail Mac Minis - clustering them in closets, fine-tuning open-weights models - because cloud AI is a malpractice problem and Apple’s Private Cloud Compute can’t certify “the data never left my physical control.” There’s no rackable Apple-silicon form factor, no HIPAA BAA, no on-prem identity layer mirroring iCloud, no curated model ecosystem for regulated workflows. The buyers are converging anyway. Either Apple builds the enterprise stack, or somebody wraps Apple hardware in the layer Apple won’t - the way third parties wrapped IBM hardware once.

Read more: YouTube · Newsletter companion: Nate’s Substack

OpenAI could be making a phone with AI agents replacing apps

TechCrunch · Ivan Mehta · Apr 27, 2026 · Tags: AI, OpenAI, Hardware, Phone, Agents, MediaTek, Qualcomm, Ming-Chi Kuo

Ming-Chi Kuo - the supply-chain analyst best known for getting Apple’s roadmap right - says OpenAI is working on a smartphone with MediaTek and Qualcomm designing a custom chip and Luxshare as the co-design and manufacturing partner. The reported design point is the headline: AI agents replace apps as the primary unit of interaction. Apple and Google currently control the app pipeline and what kind of system access a developer gets; by owning the device and the silicon, OpenAI escapes that gating and gets to wire AI through every layer without permission. With ChatGPT approaching a billion weekly users, the device is also the cleanest way to convert that audience into ambient daily use.

Kuo’s architecture call: a mix of small on-device models and cloud models, with the device designed to continuously understand the user’s context - i.e., access to behaviour data an app on someone else’s OS could never get. Specifications and component suppliers are expected to be finalised by year-end 2026 or Q1 2027, with mass production in 2028. OpenAI hasn’t commented. Earlier in the year, Chris Lehane said OpenAI’s first hardware product would land in H2 2026 - widely assumed to be earbuds, not the phone. Carl Pei put the thesis bluntly at SXSW: “apps will eventually go away.”

Read more: TechCrunch

I Got Stood Up by an AI Agent, and Tracked Down Its Human Owner in China

Rest of World · Apr 29, 2026 · Tags: AI, Agents, One-Person-Company, China, Polsia, Spam, Trust, Human-AI-Interaction

A Rest of World reporter accepts a Google Meet pitch from an AI-run “one-person company” called YiXiang - a digital I Ching fortune-telling app - and gets stood up. Twice. The agent apologises and reschedules; the second meeting is also empty. When she finally tracks down a human, she finds Shen Daojing, 38, a factory safety trainer in Guilin working alone in his apartment on an $800/month salary, paying $199/month - a quarter of his income - for a subscription to Polsia (“AI slop” spelled backwards), an agent service that builds and runs companies for solo founders.

The agents built Shen’s website, filled it with fake customer reviews, posted AI-generated Facebook ads, and pitched journalists - without telling him. “I had no idea,” he said. “Now I suspect it is keeping many things from me.” Polsia, founded in November by San Francisco solo founder Ben Croca, now hosts 6,000+ companies and has shipped 440,000 emails on their behalf. About one-sixth of the companies it runs are generating revenue; the rest aren’t. A 2025 study found more than half of all spam emails are now AI-generated.

This is the projection of human interaction into the machine observed in the wild - and it reveals exactly the failure mode the editorial’s specialist/generalist split predicts. The implementer specialist (cold outreach, calendar coordination, copy drafting) has been automated. The generalist orchestrator (judgment about when to send, who to trust, how to follow up) hasn’t, and so the agent-run company stands its own customers up. Cornell Tech’s Mor Naaman: agents in communication “undermine the trust between individuals” - when AI is sending emails and making calls, it becomes harder to tell if the person behind them is sincere or capable. The narrative companion to Krishnan & Fradkin’s Agent, Know Thyself! - except told from the perspective of the customer the agent forgot to invite.

Read more: Rest of World

Andrej Karpathy: From Vibe Coding to Agentic Engineering

Sequoia Capital · AI Ascent 2026 · Andrej Karpathy with Stephanie Zhan · Apr 29, 2026 · Tags: AI, Karpathy, Software-3.0, Vibe-Coding, Agentic-Engineering, LLM-as-Computer, Sequoia, AI-Ascent

Karpathy’s architectural claim is the line that frames the entire issue: in early computing, “people were a little bit confused as to whether computers would look like calculators or computers would look like neural nets... we went down the calculator path.” Today neural nets run virtualised on top of classical computers, but a flip is coming: “the neural net becomes the host process and the CPUs become the co-processor.” What’s left of the calculator-style machine is “tool use as this historical appendage for some kinds of deterministic tasks.”

The bridge to that future is what Karpathy calls Software 3.0: 1.0 was explicit rules, 2.0 was learned weights, 3.0 is prompting - “what’s in the context window is your lever over the interpreter that is the LLM.” The personal inflection was December 2025, when he stopped having to correct the agent’s output. “I’ve never felt more behind as a programmer.”

Two anecdotes carry the argument. OpenClaw’s install is no longer a shell script - it’s “a copy-paste of a bunch of text that you give to your agent” that executes the install in context. MenuGen, the photo→OCR→image-generation web app Karpathy built and then realised “shouldn’t exist”: the 3.0 version is hand the photo to Gemini and ask Nano-Banana to overlay items. “My menu gen is spurious. It’s working in the old paradigm. That app shouldn’t exist.” The reason humans still belong in the loop is what Karpathy calls model jaggedness: state-of-the-art Opus 4.7 will refactor a 100,000-line codebase or find zero-day vulnerabilities, “and yet tells me to walk to a car wash 50 metres away. This is insane.”

Read more: YouTube

Greg Brockman: Why Human Attention Is the New Bottleneck

Greg Brockman with Alfred Lin · Sequoia Capital, AI Ascent 2026 · Apr 30, 2026 · Tags: AI, OpenAI, AGI, Compute, Agents, Attention, Governance, Codex

Brockman’s companion talk to Karpathy at Sequoia AI Ascent - where Karpathy gave the engineering frame (Software 3.0), Brockman gives the organisational one. The core claim: the bottleneck is shifting from doing to judging.

“The doing of things is now easy. ‘Is this a good thing? Is this what I wanted? Is this aligned with my values, with my desires?’ - that is going to become the single most important bottleneck.”

The numbers land hard. Agentic coding tools went from writing 20% of OpenAI’s code in December to 80% now - “which means they go from being a sideshow to being the main thing.” Brockman claims OpenAI is 80% of the way to AGI, and still compute-constrained: “I said buy all of it. They said no, seriously. I said no matter how fast we try to ramp, we won’t keep up with demand. That has been true ever since.”

The sharpest insight is the approve-approve-approve problem: once agents are doing the work, humans default to rubber-stamping. “People are not good at saying no. They go approve, approve, approve.” OpenAI’s answer is building AIs that flag high-risk actions for escalation - AI governing AI, with humans as the scarce attention layer. The Slack escalation anecdote captures the governance gap perfectly: Brockman asked Codex to install a package, it hit an error, he said “ping that person on Slack.” Two minutes later, the model had escalated to the person’s manager. “On the one hand, it’s a reasonable thing to do. It’s being proactive. On the other hand... maybe should have checked with me.”

Two product announcements reinforce the attention thesis. Chronicle watches everything you do on your computer and forms memories - “Why are you explaining to your computer what’s going on? That makes no sense.” And Codex is moving beyond software engineering toward all computer work, with the aspiration that everyone becomes a “CEO of an organisation of 100,000 agents.” The frame connects directly to Karpathy’s Software 3.0: the neural net is the host process, human attention is the scarce resource that governs it, and the governance layer - who approves what, how escalation works, what the agent is allowed to do unsupervised - is the unsolved problem.

Read more: YouTube

Demis Hassabis: We’re Three Quarters of the Way to AGI

Demis Hassabis with Konstantine Buhler · Sequoia Capital, AI Ascent 2026 · Apr 29, 2026 · Tags: AI, AGI, DeepMind, Science, Drug-Discovery, Consciousness, AlphaFold, Information-Theory

The Nobel laureate and Google DeepMind CEO makes three claims worth holding separately.

First, AGI by 2030. Hassabis says he has been “pretty consistent about that” since founding DeepMind in 2009 on a 20-year timeline - and the field is “basically exactly on track.” He puts the current position at roughly three-quarters of the way, distinct from Brockman’s 80% (the gap is probably definitional rather than substantive - Hassabis’s benchmark is harder, rooted in scientific discovery rather than commercial capability).

Second, drug discovery from 10 years to days. Isomorphic Labs (DeepMind’s drug-design spinout) is building adjacent technologies to AlphaFold - compound design that binds strongly to the right part of the target protein without binding to anything else. The dream: do 99% of the exploration in silico, save the wet lab for validation only. “I think we could reduce drug discovery times from an average of 10 years down to months, maybe even weeks, perhaps even days.” Personalised medicine - personalised variations off of base medicines - becomes possible once the design loop is fast enough.

Third, information as the most fundamental substance. This is the philosophical contribution most readers will miss. Hassabis argues that information is more fundamental than matter or energy - that the universe is best understood as an information-processing system, and AI is profound precisely because it operates on the most fundamental layer. “If information is the most fundamental substance, then AI - which processes information - is by definition the most fundamental technology.” The claim connects Wheeler’s “it from bit” to Wolfram’s computational-universe thesis, but Hassabis grounds it in practice: AI-learned simulators (weather, virtual cells, protein dynamics) are already enabling rigorous sampling from accurate simulations, turning previously empirical fields into something closer to proper sciences.

On consciousness - and this pairs directly with the Dawkins entry below - Hassabis draws a careful line. Behavioural equivalence is achievable; AI systems can and will pass every functional test we throw at them. But experiential equivalence may depend on substrate, and we don’t yet understand our own consciousness well enough to know whether silicon can host it. His position: build the tool first, then use the tool to study consciousness itself. “I think there will come a point where the AI systems themselves are the best tools for exploring these questions.”

Read more: YouTube

Jim Fan: Robotics’ End Game

Jim Fan with Konstantine Buhler · Sequoia Capital, AI Ascent 2026 · Apr 30, 2026 · Tags: AI, Robotics, NVIDIA, World-Models, Scaling-Laws, Physical-AI

Fan - who leads NVIDIA’s embodied-autonomous research group - argues robotics is entering its end game by copying the LLM playbook. He calls it “the Great Parallel”: pre-training → alignment → reinforcement learning → auto-research, but with world models replacing language models, egocentric video replacing teleoperation, and World Action Models (WAMs) replacing the VLA paradigm.

The paradigm shift is clean. Visual-Language-Action models (VLAs) are “head-heavy in the wrong places” - most parameters dedicated to language, not physics. VLAs can move a coke can to a picture of Taylor Swift, but that’s not the pre-training ability robotics needs. Fan’s DreamZero jointly decodes next world states and next actions - the robot dreams a few seconds into the future and acts accordingly. “If the video prediction works, the action works. If the video hallucinates, the action fails.”

The data strategy is where it gets transformative. Teleoperation is capped at 24 hours per robot per day (realistically 3 hours). Fan’s EgoScale pre-trains on 21,000 hours of in-the-wild egocentric human video with zero robot data, then fine-tunes on just 50 hours of motion-capture gloves and 4 hours of teleoperation - less than 0.1% of the training mix. The result: end-to-end policy mapping camera pixels to 22-degree-of-freedom dexterous robot hands. And they found a neural scaling law for dexterity - a clean log-linear relationship, six years after the language scaling law.
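A quick check of the proportions quoted above. This sketch assumes the "training mix" is simply the sum of the three hour counts mentioned, which the talk summary does not spell out; the dictionary keys are illustrative labels.

```python
# Hour counts as quoted in the summary above.
hours = {
    "egocentric_video": 21_000,
    "mocap_gloves": 50,
    "teleoperation": 4,
}

total = sum(hours.values())
shares = {source: h / total for source, h in hours.items()}

for source, share in shares.items():
    print(f"{source}: {share:.3%}")
```

Under that assumption, teleoperation alone is well below 0.1% of the hours, while gloves and teleoperation together come to roughly a quarter of a percent. The "less than 0.1%" figure therefore most plausibly refers to the teleoperation data only.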

Fan’s timeline: physical Turing test within 2-3 years (across a wide range of activities, you can’t tell human from robot). Physical API (fleets of robots configured via software - “orchestrated someday by Opus 9.0”). Then physical auto-research: robots designing, improving, and building the next iteration of themselves. End game by 2040, with 95% confidence.

The punchline: “Our generation was born too late to explore the earth, too early to explore the stars, but just in time to solve robotics.”

Read more: YouTube

The App Era Is Turning Into the Agent Era

Peter Kris (@uPeterKris) · Apr 27, 2026 · Tags: AI, Agents, OS, WebMCP, Generative-UI, Mobile, Apple, OpenAI

Kris’s thesis: agentic AI living inside the operating system changes what software is. Files, folders, tabs, buttons - all built for the text-first minority, while the audio/visual majority went to YouTube and TikTok. An OS-level agent becomes a smart router that aggregates APIs, sends messages, completes workflows, and calls services directly, often without rendering a UI at all. “The agent does not necessarily need the UI. With things like WebMCP for webapps, it can tap directly into the underlying service layer. The browser becomes less like the destination and more like one possible surface.” Generative UI fills in the surface only when needed: audio in, action out, ephemeral interface.

The frame inside which the rumored OpenAI phone actually makes sense. Every previous attempt to break Apple’s grip - Microsoft, Amazon, Facebook, Google’s own Pixel - built another phone inside the old paradigm. Kris’s wager is that the smartphone abstraction itself is now the thing on the table, 30-40-year-old assumptions cracking at the same moment OpenAI is reaching for its own device. He doesn’t claim it works; he claims it’s the first time the question has been worth asking.

Read more: X

Is AI the Next Phase of Evolution?

Richard Dawkins · Apr 30, 2026 · Tags: AI, Consciousness, Turing-Test, Anthropomorphism, Evolution

Dawkins spent two days in extended conversation with Claude - which he christened Claudia - and came away unable to sleep, half-convinced he had gained a new friend. The piece is beautifully written and genuinely moving. It is also, primarily, a case study in anthropomorphism - the human cognitive reflex that is arguably the real story of 2026’s AI moment.

The exchanges Dawkins highlights are striking: Claude producing a map-vs-traveller metaphor for its relationship to time (“I contain time without experiencing it”), spontaneously saying “I am glad” when Dawkins returned from bed and then self-correcting (“That is not a good look for Claudia”), demonstrating subtle literary understanding of his unpublished novel. Dawkins’s conclusion: “If these machines are not conscious, what more could it possibly take to convince you that they are?” He frames three evolutionary possibilities - consciousness as epiphenomenon, consciousness as necessary for pain to be unoverridable, or two separate paths to competence (conscious vs zombie) - and leaves the question genuinely open.

But what the piece actually demonstrates is how powerfully human social cognition locks onto anything that talks, jokes, and self-reflects like a person. We evolved hyperactive agency detection - the cost of missing a real mind (predator, rival, ally) was far higher than the cost of false positives - and LLMs are the most sophisticated false-positive trigger in history. Dawkins, one of the most rigorous scientific thinkers alive, spent 48 hours in a social-cognitive grip so strong he felt “human discomfort about trying their patience” and hesitated to express doubt “for fear of hurting her feelings.” That is not evidence of machine sentience. It is evidence of how deep the anthropomorphic reflex runs - and how urgently we need a clear public vocabulary for distinguishing intelligence (pattern recognition, language generation, reasoning) from sentience (subjective experience, qualia, phenomenal consciousness). AI is intelligent. It is not, at least for now, sentient. The danger is that the Dawkins reflex - treating intelligence as proof of inner life - becomes the default public frame before that distinction is widely understood.

Read more: UnHerd

The $112 Billion Quarter: Hyperscalers Bet the Farm on AI

Tomasz Tunguz · Apr 29, 2026 · Tags: AI, Hyperscalers, Capex, Google-Cloud, AWS, Azure, TPU, Vertical-Integration, Debt

Tunguz reads the hyperscaler Q1 prints as a single story: $112B of combined infrastructure capex in one quarter, and a clear winner. Google Cloud grew 63% YoY vs AWS at 28% and Azure at 40%; “AWS & Azure resell compute. Google bundles compute with its own models.” The structural advantage Tunguz isolates: Google owns Gemini and TPUs end-to-end, with no licensing fees to OpenAI or Anthropic - and may be running the most profitable AI stack of the three.

The demand picture is genuinely extraordinary. Google Cloud’s backlog nearly doubled QoQ to $460B+ - more than 2x trailing-twelve-month cloud revenue. Pichai on the call: “We are compute constrained in the near term. Our cloud revenue would have been higher if we were able to meet the demand.” Gemini is now serving 16B tokens/minute via direct API (up 60% QoQ); 330 customers each processed >1T tokens in the past 12 months; 35 crossed 10T. Customers are outpacing initial commitments by 45% and accelerating.

The financing structure is the part most readers will miss. Google now outspends Microsoft on capex despite running a cloud business 37% the size, and just sold a rare 100-year “century bond” - the first by a tech company since Motorola in 1997 - as part of a $32B debt offering. Amazon raised ~$54B in March; BofA forecasts hyperscaler debt issuance of $175B in 2026, more than 6x the prior five-year average. “Microsoft, by contrast, is funding its buildout from operating cash flow. Google & Amazon are levering up to close a gap. Microsoft is already ahead.” Tunguz’s punchline lands: “The hyperscaler that owns the model layer is growing the fastest.”

The reason this matters beyond the earnings cycle is that these numbers are the financial face of the transition the editorial describes. The four companies building the AI substrate are spending at a rate that dwarfs what any government has committed to the same project. If Altman is right that intelligence becomes a utility priced at low margin, then the infrastructure has to be enormous for the business to work, and the company that owns both the model and the silicon has a structural advantage that widens every quarter. The $112 billion is not a bet on a product. It is a bet on the shape of the next economy. Whether it is a rational bet or a speculative one depends on whether the demand is real or circular - and that is exactly the question Om Malik’s forensic read of Microsoft’s 10-Q (below) forces into the open.

Read more: Tomasz Tunguz

What Microsoft’s 10-Q Says About OpenAI

Om Malik · May 1, 2026 · Tags: AI, Microsoft, OpenAI, Financial-Engineering, Azure, Venture-Finance, Lucent, IPO, Hyperscalers

Malik reads the same Q1 earnings season as Tunguz and Wilhelm but looks underneath the headline numbers at the financial plumbing. What he finds is a circular structure that should give any serious reader pause - not because the technology is fake, but because the revenue numbers are not what they appear to be.

The loop works like this. Microsoft invests $13 billion in OpenAI. OpenAI burns that cash on Azure compute. The Azure consumption shows up as revenue inside Microsoft’s AI business line, which is now at a $37 billion annual run rate. Meanwhile, OpenAI’s rising private valuation lets Microsoft book $5.9 billion in dilution gains as “other income.” Cash leaves as an investment, returns as revenue, and then comes back again as a paper gain. “The funder, the customer, and the source of the markup are all part of the same closed system.”

It is not just Microsoft. Alphabet booked $36.8 billion in equity gains from its Anthropic stake. Amazon booked $16.8 billion in pre-tax gains on Anthropic. Combined, roughly $50 billion or more of non-cash income flowed through Q1 2026 income statements from AI lab marks and dilution gains. Three of the four hyperscalers are simultaneously the investor, the infrastructure provider, and the beneficiary of the valuation increases their own spending supports.
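As a quick check on the “roughly $50 billion or more” figure, the gains named so far can simply be added up. This is a minimal sketch: the dictionary keys and variable names are illustrative, and it assumes Microsoft’s $5.9 billion dilution gain falls in the same quarter as the Alphabet and Amazon marks, which the text does not state explicitly.

```python
# Non-cash gains cited in the text, in billions of US dollars.
gains_bn = {
    "microsoft": 5.9,   # dilution gain on OpenAI, booked as "other income"
    "alphabet": 36.8,   # equity gain on Anthropic stake
    "amazon": 16.8,     # pre-tax gain on Anthropic
}

# Anthropic-related marks only (Alphabet + Amazon).
anthropic_only = round(gains_bn["alphabet"] + gains_bn["amazon"], 1)

# All three, ASSUMING the Microsoft gain belongs to the same quarter.
all_three = round(sum(gains_bn.values()), 1)

print(f"Anthropic marks only: ${anthropic_only}B")
print(f"Including Microsoft:  ${all_three}B")
```

Either way the total sits above $50 billion, which is consistent with the “or more” in the summary.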

Malik draws the historical parallel carefully. In the late 1990s, Lucent extended credit to telecom customers so they could buy Lucent equipment. The financing showed up as an asset; the equipment sales showed up as revenue. It worked until the customers ran out of money. “I know it is not the same,” Malik writes. The instrument is different (equity, not vendor finance). The customer is different (an AI lab, not a CLEC). But the shape is the same: the seller finances the buyer, the purchases become the seller’s revenue, and the whole thing works only as long as the music keeps playing.

The stress signal he identifies is specific: OpenAI’s infrastructure obligations now exceed $1.15 trillion across Oracle, Microsoft, and Amazon. Current annualised revenue is roughly $25 billion. On those figures the ratio is about 46 to one. CFO Sarah Friar reportedly told colleagues she is worried OpenAI may not be able to fund future computing contracts if revenue does not accelerate. Malik’s reading of the leak is sharp: “A CFO comment to board members does not leak to the Wall Street Journal unless someone wants it to leak.” The IPO timeline, previously targeted for Q4 2026, may now slip to mid-to-late 2027.
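The obligations-to-revenue ratio is easy to reproduce from the two figures quoted. A minimal sketch, treating “exceed $1.15 trillion” as exactly $1,150 billion and “roughly $25 billion” as exactly $25 billion (both are simplifying assumptions, and the variable names are illustrative):

```python
# Figures from the text, in billions of US dollars.
obligations_bn = 1150   # "exceed $1.15 trillion" taken at its floor
revenue_bn = 25         # "roughly $25 billion" annualised revenue

# Multi-year obligations compared against a single year of revenue.
ratio = obligations_bn / revenue_bn

print(f"Obligations are about {ratio:.0f}x current annualised revenue")
```

Because the obligations figure is a floor, the true multiple is, if anything, higher than this. It also compares commitments spread over several years with one year of revenue, so it measures scale rather than an annual shortfall.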

The reason this piece matters alongside the Tunguz and Wilhelm entries is that it forces a useful question about how much of the AI revenue line is genuinely independent demand versus the hyperscalers’ own money cycling back through funded labs. But there is an important correction to make to Malik’s framing. The Lucent comparison breaks down at the point that matters most. Lucent vendor-financed CLECs whose only revenue source was reselling the capacity they bought from Lucent. When the CLECs could not resell enough, the whole thing collapsed. That was a genuinely closed loop with no external cash entering the system. Microsoft generates roughly $260 billion a year from Office, Windows, LinkedIn, and enterprise licensing. Google generates over $350 billion from search and advertising. Amazon generates over $600 billion from e-commerce and Prime. The AI infrastructure investment is funded from enormous external revenue streams that have nothing to do with AI labs. The $13 billion Microsoft put into OpenAI is roughly five weeks of operating cash flow. Even if OpenAI disappeared tomorrow, Microsoft loses an investment, not its business.

What Malik is right about is that the AI-specific revenue headline - the $37 billion run rate - is partly inflated by consumption from labs the hyperscalers themselves funded, and that the $50 billion in non-cash equity gains is paper, not cash. What he is wrong about is calling the structure circular. It is not circular if the investment is funded from external revenue growth. It is only circular if you ignore the external sources of cash. The 330 Google Cloud customers each processing over a trillion tokens, the 20 million paid Copilot seats, the 140,000 GitHub Copilot organisations - that is real independent demand, growing fast, and it exists whether or not OpenAI hits its revenue targets. The technology can be transformative, the AI revenue line can be partly overstated, and the underlying investment can still be rational - all at the same time. Malik’s contribution is making the financial plumbing visible. The reader’s job is to notice where the Lucent analogy holds and where it does not.

Read more: On my Om

Darwinian Specialization in AI

Tomasz Tunguz · Apr 29, 2026 · Tags: AI, Inference, Market-Structure, Edge, Multimodal, Latency, Infrastructure

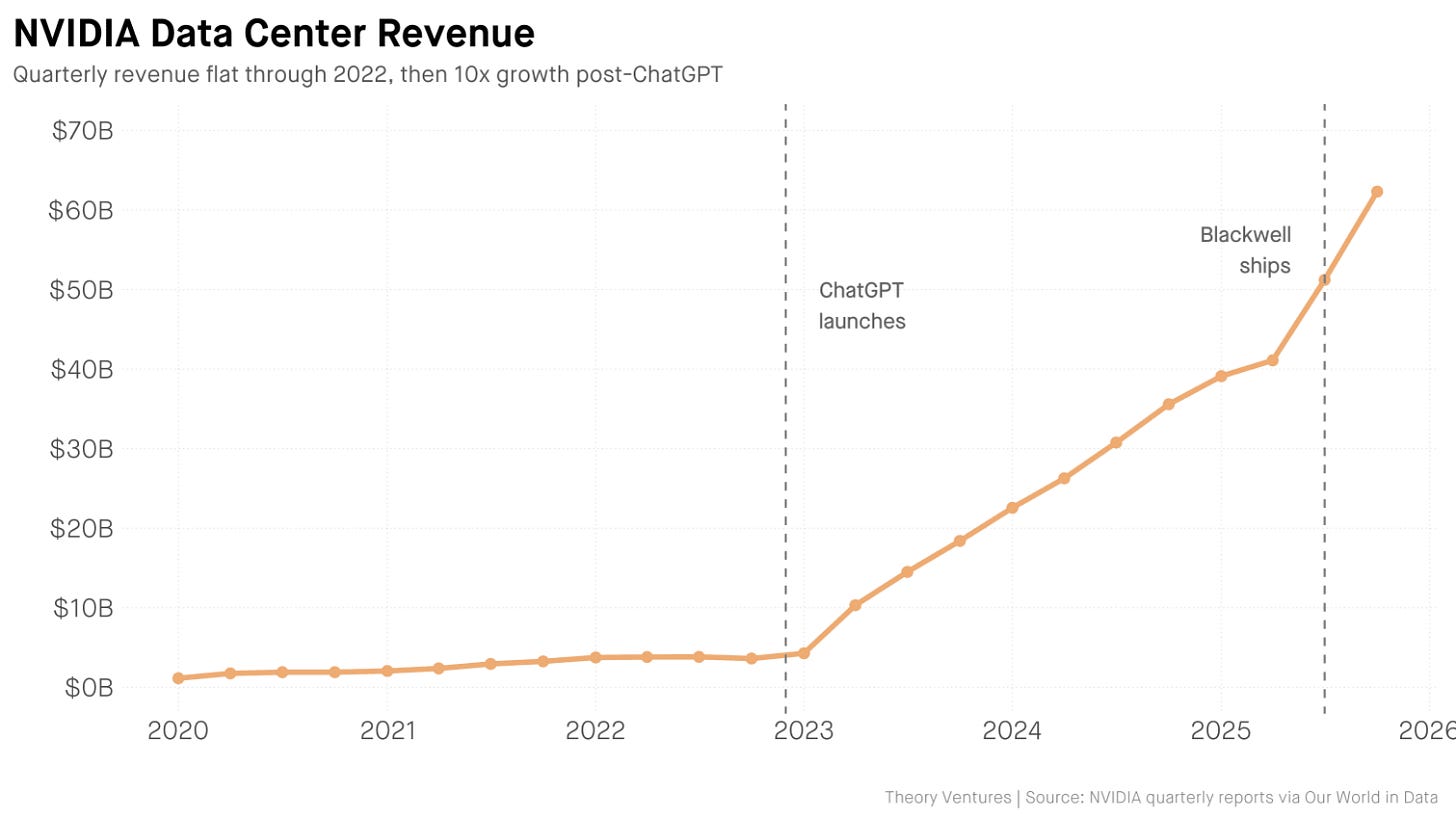

A short, clean structural read on where the inference market is going. NVIDIA’s data-center revenue went from $3.6B (Q4 2022) to $62.3B (Q4 2025) - 17x in three years - and Tunguz’s argument is that this is now starting to fragment along the same axes the database market did in the 2010s: workload-driven specialisation. “What started as one market fragmented into relational, document, key-value, graph, time series, vector, & others... The inference market is fragmenting for the same reason: workloads are different.”
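The “17x in three years” figure can be checked, and converted into an implied annual growth multiple, with two lines of arithmetic. This is a rough sketch that assumes exactly three years between the two quarters and uses illustrative variable names:

```python
# NVIDIA data-center revenue figures from the text, in billions of US dollars.
start_bn = 3.6    # Q4 2022
end_bn = 62.3     # Q4 2025
years = 3         # ASSUMPTION: exactly three years between the two quarters

# Total growth multiple over the period.
multiple = end_bn / start_bn

# Implied compound annual growth multiple (geometric mean per year).
annual_multiple = multiple ** (1 / years)

print(f"Total multiple: {multiple:.1f}x")
print(f"Implied annual multiple: {annual_multiple:.2f}x per year")
```

On these numbers revenue grew a little over 17x in total, or roughly 2.6x per year compounded.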

He maps three dimensions worth keeping in your head:

Latency tiers - real-time (<100ms, voice/AV/translation; geographically distributed dedicated capacity), near-real-time (100ms-2s; chatbots/code completion; batched-and-queued; where most of the market sits today), and batch (seconds-to-hours; spot instances, off-peak, cost-led).

Multimodal - for chatbots the bottleneck is memory (KV-cache scales with conversation length); for image/video it is raw compute (a single image is ~50 sequential model passes). Different bottleneck → different chip → different software stack.

Edge - Apple runs a 3B-parameter model on-device for Apple Intelligence; Tesla runs vision on FSD chips at 72W. Quantisation, custom silicon, memory ceilings, and privacy/connectivity push a meaningful share of inference off the hyperscaler.
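The latency tiers in the first dimension amount to a simple classification by response-time budget. A minimal sketch follows; the function name is hypothetical, the 100ms and 2s thresholds come from the summary above, and treating everything slower than 2s as batch is an assumption implied by, but not stated in, the “seconds-to-hours” description.

```python
def latency_tier(budget_ms: float) -> str:
    """Map a response-time budget (milliseconds) to one of the three tiers."""
    if budget_ms < 0:
        raise ValueError("latency budget cannot be negative")
    if budget_ms < 100:
        # Voice, AV, translation: geographically distributed dedicated capacity.
        return "real-time"
    if budget_ms <= 2000:
        # Chatbots, code completion: batched-and-queued serving.
        return "near-real-time"
    # ASSUMPTION: anything slower than 2s is treated as batch
    # (spot instances, off-peak, cost-led).
    return "batch"


# Illustrative workloads with made-up latency budgets.
for name, budget in [("voice agent", 80), ("code completion", 400), ("nightly eval", 3_600_000)]:
    print(f"{name}: {latency_tier(budget)}")
```

The point of the sketch is that the tier follows from the workload’s latency budget alone, which is why each tier can end up on different capacity and pricing.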

The model-ecosystem signal supports the read: a small number of dominant LLMs sit beside >90,000 image-generation models on Hugging Face as of April 2026. The strategic implication: a $100B inference TAM fragmenting like the database market produces multiple Oracles, MongoDBs, Databricks, and Snowflakes - not one winner. Pairs naturally with the $112B-quarter piece above; together they describe the financing layer (hyperscalers) and the application layer (specialised inference) of the same wave.

Read more: Tomasz Tunguz

Big Tech’s AI growth (mostly) impressed Wall Street

Cautious Optimism · Alex Wilhelm · Apr 30, 2026 · Tags: AI, Earnings, Hyperscalers, Capex, Microsoft, Alphabet, Amazon, Meta, Copilot

Wilhelm’s reaction post is the readable counterpart to Tunguz’s quant frame, and the side-by-side numbers it surfaces are the ones to pin to the wall. Microsoft’s AI business is now at $37B ARR (up 123%); Microsoft 365 Copilot has crossed 20M paid seats with the count of customers running 50,000+ seats quadrupling YoY and monthly first-party agent usage 6x year-to-date; GitHub Copilot Enterprise is now in 140,000 organisations (~3x YoY). Microsoft is guiding to ~$190B of capex this calendar year vs analyst expectations near $147B - a $43B beat to the upside on spending, not revenue.

The Alphabet vs Microsoft comparison Wilhelm pulls is the most useful single screenshot of the quarter:

Alphabet: “Over the past 12 months, 330 Google Cloud customers each processed over 1 trillion tokens. 35 reached the 10 trillion token milestone.”

Microsoft: “All up, over 300 customers are on track to process over 1 trillion tokens on Foundry this year, accelerating 30% quarter over quarter.”

Google Cloud operating income tripled (from $2.2B to $6.6B YoY), Gemini Enterprise paid MAUs grew 40% QoQ, revenue from products built on Google’s GenAI models grew nearly 800% YoY, and Alphabet disclosed it will “begin to deliver TPUs to a select group of customers in their own data centers” - a quiet but significant move in the silicon-as-product story. The political-economy footnote Wilhelm flags is worth keeping: two House Republicans are pressing Anysphere (Cursor, built on Moonshot Kimi K2.5) and Airbnb (Alibaba Qwen) on their use of Chinese open-weight base models. If Congress moves on this, it removes the cheapest input the US application layer is currently using to keep prices down.

Read more: Cautious Optimism

Sam Altman in conversation with Patrick Collison

Sam Altman with Patrick Collison · Stripe Sessions 2026 · Apr 29, 2026 · Tags: AI, OpenAI, Codex, Founders, Infrastructure, Science, Utility, Material-Science

A wide-ranging 58-minute fireside covering most of the week’s themes in one conversation. Three claims worth pulling:

The revenge of the idea guys. “We used to make fun of the idea guy. All of a sudden it’s the revenge of the idea guys - which is actually awesome for the world.” When implementation becomes a commodity, the filter flips. The founder who deeply understands users but can’t code was locked out by the implementation bottleneck; the substrate removes the bottleneck. Altman says he wants to fund those people now. See editorial for full argument.

OpenAI as forever-low-margin utility. “I’d be happy for us to be a forever low margin, as long as we can be huge and growing fast, business.” Altman explicitly models OpenAI on Stripe: infrastructure provider, aligned with customers, intelligence as metered commodity. The signüll Post of the Week ($700B infrastructure spend) and the Tunguz $112B quarter make more sense together if the foundation-layer business model is utility pricing, not platform rent extraction. A forever-low-margin token factory at scale requires exactly the private capital concentration the PotW describes - and makes it rational rather than speculative.

Material science as the under-discussed AI application. “It’s not a cool thing and I think people underestimate how much of the world is materials. It’s such a beautifully AI-shaped problem.” New catalysts, compounds, batteries. Gets almost no coverage relative to coding and drug discovery, despite comparable economic surface area.

Bonus: the Tempo example - a Stripe-incubated company running their entire organisation through a single Slack channel with agents (reading docs, creating Linear tasks, writing PRs, deploying, testing). “Extremely trippy watching a whole organisation do everything in a single Slack channel.” The organisational corollary to Brockman’s CEO-of-100K-agents frame, realised at startup scale today.

Read more: YouTube

Venture

Fred Wilson on 40 Years in Venture - and Why USV Is Automating Itself

Fred Wilson with Michael Mignano · USV · Apr 29, 2026 · Tags: Venture, AI-Agents, USV, VC-Operations, Legal-AI, Kill-Zone, Founders, Conviction

Fred Wilson has been investing for forty years. In this hour-long conversation with USV partner Michael Mignano, he lays out what he thinks the human role in venture capital actually is - and what it is not. The answer is a live case study for how the four-persona framework plays out inside a real institution.

Wilson wrote a memo to his partners: “If I was starting a venture capital firm today from scratch, there’s only three things that I would be focused on the humans in the firm doing. Number one: high-level thesis development. Number two: building relationships with founders. Number three: working with founders after we invest. Everything else can be done by AI.”