This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial

Civilization: What Is Worth Doing?

Civilization is the story of human beings escaping necessity.

For most of history, we had to do almost everything ourselves. We had to hunt, farm, carry, build, count, copy, remember, repair, and defend. A great deal of human time was not chosen. It was commanded by survival.

Technology changes that bargain. Agriculture, writing, machines, electricity, software, networks, and now AI each move some part of life from raw necessity into infrastructure. What once required human labor becomes a system. What once required daily effort becomes available. The meaning of progress is not that humans do nothing. It is that humans get more choice about time.

AI and robotics are probably the next step in that same story.

That is why I think the standard question, “What jobs will remain?”, is too small. Of course jobs will change. Some will disappear. Many will be redesigned. New ones will emerge. But the larger question is: what is worth doing when more of what had to be done no longer has to be done by us?

That is a civilization question. It is also a personal one.

Norman Lewis gives this week its philosophical anchor. Writing against the ghost of Paul Ehrlich, he argues that the real divide is whether we see people as burdens or as creators of abundance. Ehrlich saw mouths. Julian Simon saw minds. Lewis puts it plainly:

“The future will not be saved by having fewer people with smaller dreams.”

That shifts the goal from passive consumption to active creation. A resource is not simply something found in nature. It becomes a resource when knowledge makes it useful.

Sand becomes chips. Oil becomes energy. A field becomes food through drainage, seed science, fertilizer, logistics, markets, and accumulated human intelligence.

Branko Milanovic adds the necessary discipline. Even in “99 percent Utopia,” scarcity does not disappear. Better restaurants remain scarce. New inventions are scarce when they first arrive. Unpleasant work that cannot be automated still needs inducement. Money, queues, status, taste, and priority rights reappear wherever quality differs or coordination is hard.

So abundance is not the end of choice. It is the expansion of meaningful choice.

That is where AI becomes interesting. If machines can write code, produce financial models, draft memos, search documents, build apps, and operate software, then the scarce thing is no longer simply labor. The scarce thing is direction or leadership.

Rex Woodbury’s essay on knowledge reproduction gets close to the heart of this. Lewis insists that humans must set the purpose. Woodbury shows that AI can now make the deck, the memo, the model, and the second opinion.

And both would agree that AI cannot yet supply the responsibility attached to them. It cannot know who should see the deck, which recommendation should lead in the meeting, or who bears the cost if the advice is wrong.

This is the distinction that matters. The artifact becomes cheap. The direction and accountability do not.

Aaron Levie sees the same change from the software side. Agents are becoming users of software. They will not sit politely in front of a UI. They will call APIs, consume permissions, move data, and perform work on behalf of people and organizations. Levie’s warning to software companies is blunt: bundle agent access into the human seat or “you’re DOA.”

Esther Dyson then asks the governance question. If agents act in public, who are they? Who owns them? Who is liable when they transact, negotiate, or misrepresent themselves? Her “.agent” proposal is modest enough to be doable, and the instinct is right. Accountability has to attach somewhere.

Tomasz Tunguz gives the operating warning. A three-person team running twenty agents may look fantastically productive until one of the three humans leaves and a third of the institutional memory disappears. The agents keep moving. The judgment may not.

WIRED shows the failure mode already arriving. Thousands of vibe-coded apps are exposing corporate and personal data on the open web. The tools made building easier. They did not automatically make builders responsible.

This is a pattern across the articles below. AI makes execution cheaper. It does not make purpose cheaper or easier.

The Big Build

The infrastructure stories say the same thing in physical form. Casey Newton’s line is useful: “Think railroads, not crypto.”

The AI boom may overbuild. It may disappoint investors. It may create spectacular financial wreckage. But like railroads, it may also leave behind infrastructure that changes what society can do.

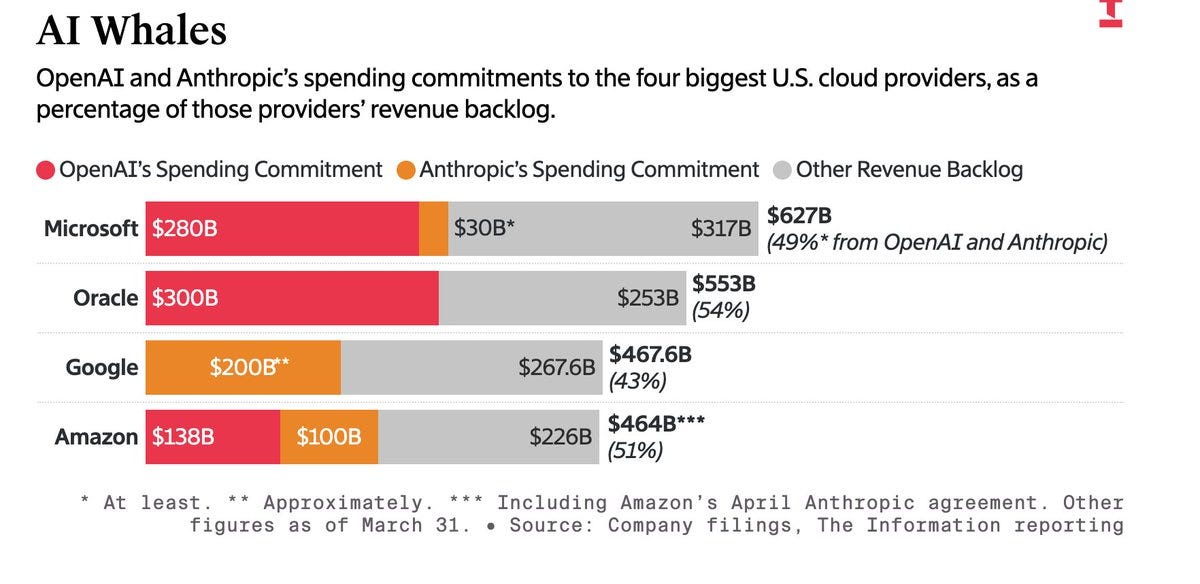

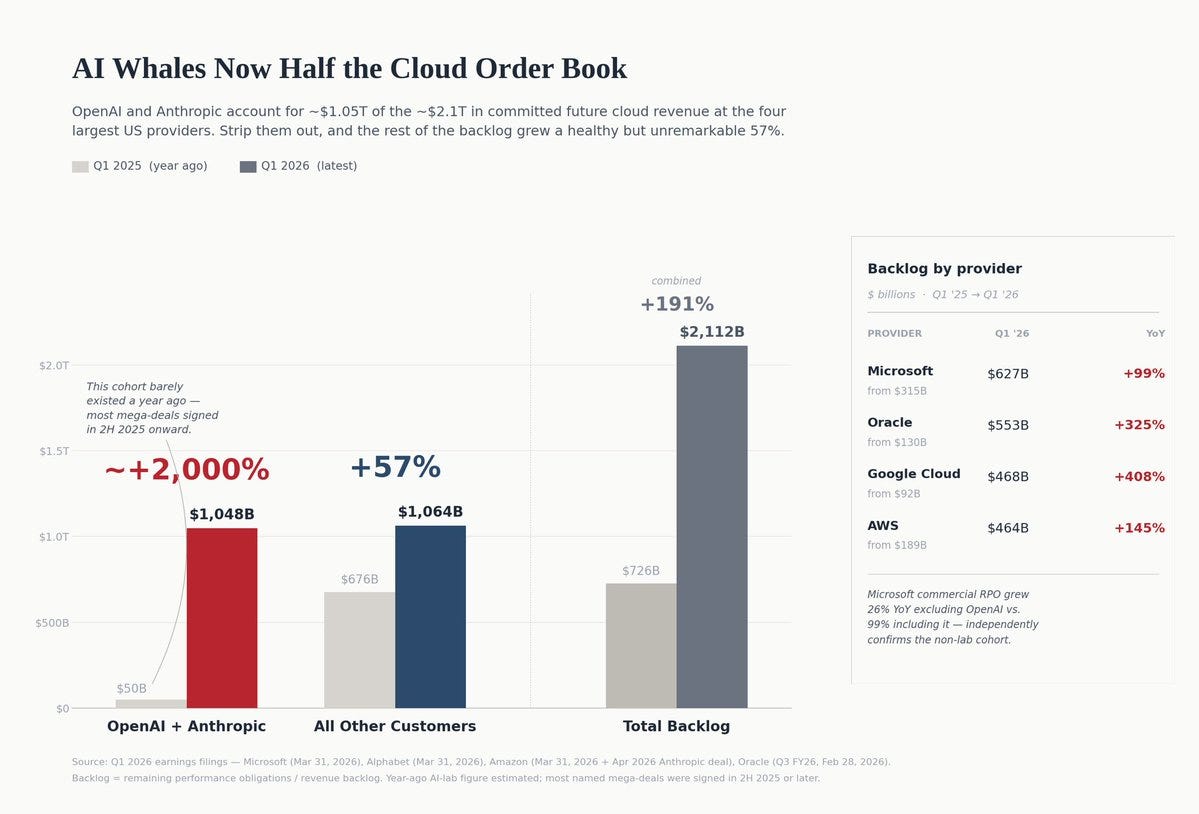

Jessica Lessin’s cloud-backlog charts show how concentrated this buildout has become. OpenAI and Anthropic are now giant demand signals inside Microsoft, Oracle, Google, and Amazon. Anthropic’s SpaceXai deal turns a product-limit announcement into a 300-megawatt infrastructure story. And yes, Anthropic is growing up. As is Elon.

Data Gravity reminds us that intelligence is not weightless. Long-context agents run on memory, bandwidth, chips, and energy. xAI’s move toward neocloud, the landlord economics of AI infrastructure, and pressure on the power grid all point in the same direction: intelligence is becoming a physical business.

Civilization - The Plan?

Compute is not a civilization strategy. Energy is not a civilization strategy. Capital is not a civilization strategy. They are capacities. A society still has to decide what to do with them.

The venture articles this week show capital wrestling with the same question. Crunchbase reports a huge April funding number, but the Series B data shows a thinner and more selective market underneath.

Samir Kaji says venture is splitting into access capital at the top and hard company-building at the bottom. Robinhood’s venture fund shows retail demand for private-market exposure. Dan Gray argues that the ten-year fund clock no longer matches a liquidity reality in which returns can take up to two decades to arrive.

Marc Rubinstein’s Nasdaq-100 history adds another lesson. An index is not just a list. It can become a product, a brand, a licensing machine, and a distribution flywheel. That matters for SignalRank because private-market exposure will not become legible to public investors by magic. It needs selection, structure, trust, and a wrapper people can understand.

Access to private companies is becoming a product. Liquidity is becoming a product. AI scarcity is becoming a product. But access to what? Liquidity for what? Scarcity in service of what?

The Why and the What

Tech and regulation pieces belong in the same editorial, not in a separate mental file.

The government wants model safety and first access. The Trump administration is rediscovering AI review under new language. David Wallace-Wells writes that AI populism is arriving because people are beginning to see data centers, job anxiety, and model power as one political bargain. Julia Angwin sees Meta entering its zombie era, still huge but increasingly dependent on ad load, debt, AI spending, and extraction from aging platforms.

But these observations are negative. What do we want? And how do we get it? These are the right questions.

What are we trying to build?

Capacity without purpose is madness. Power without a plan is waste. Technology without an end game - a goal - produces new things faster than it changes life.

Perhaps this is too human-centered. Perhaps advanced AI systems will eventually set goals, form preferences, negotiate tradeoffs, and define agendas better than we do. Nope, I don’t think so.

That is not where we are today. Today’s systems can optimize brilliantly toward objectives given to them. They can surprise us. They can expand the range of what an individual or small team can attempt. They can make execution feel almost magical. Honestly, my day job loves that. But I chose to build https://agent.signalrank.com this week. My AI wrote the code, but the goal was mine.

AI does not yet possess the human thing at the center of civilization: wanting.

Wanting is not the same as prompting. It is not a task description. It is the deep choice about what kind of future is worth pursuing.

That choice happens at two levels.

At the individual level, it asks what we do with our time when necessity gives us back more of it. Do we learn, build, create, care, socialize, start companies, raise children, make art, govern institutions, or disappear into entertainment optimized by machines that know our weaknesses too well? And what a gift - time.

At the societal level, it asks what we build and protect. More housing or more obstruction? More energy or more managed scarcity? More science or more fear? More open opportunity or more access-controlled private markets? More resilient infrastructure or more beautiful dashboards over brittle systems?

A society that cannot get its young people out of the house may struggle to create the relational economy Ezra Klein thinks becomes more valuable after AI.

A society that has compute but no purpose, trust, or accountability, that has capital but no end goals, that has infrastructure but no vision to build, has not solved the problem of civilization. It has merely automated parts of decline.

So I come back to the title.

Civilization is not measured only by how much work gets done. It is measured by how much human time is freed from necessity, and then by what people choose to do with that time.

AI and robots may take over more of the execution layer. Good. That is what tools are for. But the human job does not vanish. It moves up the stack.

We have to decide what is worth doing.

And then we have to build a society with enough freedom, competence, courage, and responsibility to do it.

Contents

Editorial

Essays

AI

Venture

Regulation

Infrastructure

Interview of the Week

Startup of the Week

Post of the Week

Essays

The Anduril Thesis

Kyle Harrison · Investing 101 (Contrary) · May 2, 2026 · Tags: Defense, Anduril, China, Procurement, Autonomy, Industrial-Base, Geopolitics

Harrison distills two years of work on Anduril into a sharp argument about American defense capacity. His claim is not simply that Anduril is a successful startup. It is that the company was built as a direct answer to a procurement system designed for slow, bespoke, cost-plus weapons programs at exactly the moment war is shifting toward cheap, autonomous, high-volume systems.

The historical contrast does the work. The United States once had overwhelming manufacturing capacity and a Defense Department that funded a large share of global R&D. After McNamara-era budgeting, post-Cold War consolidation, and decades of prime-contractor incentives, the system became very good at producing expensive platforms and very bad at moving quickly. Harrison sets that against Ukraine’s drone warfare, Iran’s cheap drones, and China’s missile and manufacturing scale.

Anduril’s counter-model is software-defined hardware, fixed-cost contracts, rapid iteration, commercial components, high-volume manufacturing, and policy work treated as part of the product. The China frame is why the piece matters: deterrence now depends less on exquisite platforms and more on making allies hard targets with affordable autonomous systems.

Read more: Investing 101

What 10 Studies Reveal About AI Panic in the Media

Nirit Weiss-Blatt · AI Panic News · May 2, 2026 · Tags: AI, Media, Framing, Moral-Panic, China, Doom, Trust, Policy, Influencers

Weiss-Blatt reviews ten academic studies on AI coverage and extends the analysis into the creator ecosystem that distributes AI-doom narratives outside traditional journalism. The consistent finding is that post-ChatGPT media coverage shifted heavily toward danger framing. UK headline analysis found “Impending Danger” as the dominant frame at 37 percent; sentences connecting AI with danger roughly doubled after ChatGPT; and coverage often fell into familiar moral-panic scripts such as Frankenstein, Pandora’s Box, and The Terminator.

The most useful comparison is geographic. Chinese media tends to frame AI through national strength, economic competitiveness, and patriotic pride. US and UK coverage more often frames it as threat. That matters because trust and adoption follow the permission structure created by public narratives.

The second half tracks organized doom media: Future of Life Institute funding for content projects, dedicated AI-doom YouTube channels, and Aella’s Berkeley residency paying creators to post daily. Weiss-Blatt’s point is not that AI has no risks. It is that the public fear environment is being shaped by incentives, funding, and strategic messaging, not just spontaneous concern.

Read more: AI Panic News

Why the A.I. Job Apocalypse (Probably) Won’t Happen

Ezra Klein · The New York Times · May 3, 2026 · Tags: AI, Jobs, Labor, Automation, Productivity, Relational-Economy, Scarcity

Klein takes the strongest version of the AI job-apocalypse argument seriously - Dario Amodei’s warning about entry-level white-collar jobs, Mustafa Suleyman’s automation timeline, OpenAI’s shorter-workweek language, and layoffs where companies cite AI - then asks a narrower question: if the technology is already this capable, why has the broad labor market not yet shown an apocalypse?

His best frame comes from economist Alex Imas: every technological shift should start by asking what becomes scarce. If AI makes technical knowledge and production artifacts abundant, scarcity may move toward relational work, provenance, trust, attention, and social meaning. The likely labor story is not one clean substitution curve, but a messy re-pricing of tasks, credentials, and human presence.

Klein is not saying displacement is imaginary. His warning is narrower and more useful: partial displacement can be politically brutal. If AI hurts millions of workers but not enough to dominate national statistics, America may repeat the China-shock pattern - real local damage, weak retraining, and too much blame-shifting.

Read more: The New York Times

The Work of Knowledge in the Age of AI Reproduction

Rex Woodbury · Digital Native · May 6, 2026 · Tags: AI, Knowledge-Work, Provenance, Credentials, Labor, Services, Trust

Woodbury updates Walter Benjamin for the AI economy. Mechanical reproduction stripped art of scarcity; AI reproduction is now doing the same to knowledge artifacts. The McKinsey-quality deck, the legal memo, the diagnostic second opinion, and the analyst model are becoming cheaper to produce, but that does not mean judgment, provenance, and accountability have become cheap.

The useful distinction is between the artifact of knowledge and the human responsibility attached to it. Claude can generate a credible strategy deck, but it cannot yet know which partner needs to see it, which slide should lead in the room, or who bears the cost if the recommendation is wrong. That is why Woodbury expects knowledge markets to barbell like art markets: mass-produced artifacts at the bottom, expensive trusted provenance at the top, and a squeezed middle of credential-light knowledge labor.

The piece fits this week’s emerging theme because it explains why AI may flatten the analyst pyramid without eliminating the need for accountable humans. As knowledge output becomes abundant, the scarce layer shifts to signatures, credentials, trust, and institutional willingness to be on the hook.

Read more: Digital Native

99 percent Utopia and money

Branko Milanovic · Global Inequality and More 3.0 · May 6, 2026 · Tags: Economics, Money, Utopia, Scarcity, Automation, Robots, Post-Scarcity, Marx

Milanovic reposts a 2015 thought experiment prompted by Leif Wenar’s question: in the best possible world, with human nature fixed, does money exist? His answer is almost, but not entirely, no. Money’s central function is coordination: it tells coffee growers, shippers, baristas, and customers how to align plans. A true utopia would need either abundance so complete that coordination becomes trivial, or some other rationing mechanism.

The clever move is that Milanovic does not treat post-scarcity as pure fantasy. He points to goods already sitting on the “coast of Utopia”: water, ice, napkins, electricity in public places, free concerts, museums, and other things whose marginal cost to an individual user is effectively zero. People generally do not hoard them because they trust they will be available when needed. As productivity rises, more goods can move into that category.

But the last one percent is where money returns. Better restaurants will still be scarce, unpleasant jobs will still need inducement, and new inventions will initially be limited. Queues, coupons, priority rights, or some money-like rationing device reappear as soon as quality differs, labor is unpleasant, or innovation creates a new scarce thing. The closing paradox is beautiful and relevant to the AI abundance debate: faster progress makes more goods feel utopian, but also creates new scarcities. Full happiness may be possible only in stagnation.

Read more: Branko Milanovic

The Future Is Not Scarce. Our Nerve Is.

Norman Lewis · What a piece of Work is Man! · May 2, 2026 · Tags: Abundance, Productivity, Anti-Malthusianism, Innovation, Energy, Growth, Paul-Ehrlich, Julian-Simon

Lewis uses Paul Ehrlich’s death to make an anti-Malthusian case: the deepest divide is between seeing humans as burdens on a fixed world and seeing them as problem-solvers who expand what counts as a resource. He sets Ehrlich’s failed population-bomb predictions against Julian Simon’s abundance thesis, where scarcity is a signal for substitution, invention, and productivity growth.

The piece is less about proving every resource problem is easy than about rejecting learned pessimism. Lewis argues for more energy, more housing, more infrastructure, more science, and more tolerance for creative destruction. His target is the politics of limits, where restraint becomes a moral habit even after technology changes the constraint. In an AI-and-robotics age, the human role may shift away from supplying labor directly and toward choosing the civilization agenda: what should be built, why it matters, and what kind of abundance is worth pursuing.

Read more: What a piece of Work is Man!

AI

As Agents Become the Biggest Users of Software

Aaron Levie (@levie) · CEO, Box · May 1, 2026 · Tags: AI, Agents, Software, Business-Model, Headless, API, Pricing, SaaS

Levie argues that when agents become major software users, every platform has to go headless. Agents will not mainly click through interfaces; they will operate through APIs, permissions, workflows, and data access. That pushes SaaS away from a clean per-human-seat model.

His framework has three parts. Human seats still matter, but they need bundled API usage so a user’s agents can act across Claude, Codex, Gemini, Cursor, Copilot, Perplexity, and other tools. Agent seats may exist when an agent has its own workspace, permissions, and data, but they cannot be priced like human seats because one customer might consolidate work into a single agent while another spins up a thousand. Above the seat allotment, consumption pricing becomes the natural model, and some workflows may eventually be priced by outcome rather than by API call.
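To make the three-part model concrete, here is a minimal sketch of how such a bill might be computed. All prices, allotments, and names (`PricingPlan`, `monthly_bill`) are hypothetical, not Box's or any vendor's actual pricing. Agent seats get a flat fee here only to show that they are priced differently from human seats.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PricingPlan:
    # Every figure here is hypothetical, for illustration only.
    human_seat_price: float = 30.0          # per human seat per month
    calls_included_per_seat: int = 10_000   # API calls bundled with each human seat
    agent_seat_price: float = 15.0          # flat fee for an agent with its own workspace
    overage_price_per_call: float = 0.001   # consumption price beyond the pooled allotment

def monthly_bill(plan: PricingPlan, human_seats: int, agent_seats: int, api_calls: int) -> float:
    """Seat fees plus consumption charges for calls above the pooled allotment."""
    seat_fees = human_seats * plan.human_seat_price + agent_seats * plan.agent_seat_price
    # Each human seat's bundled calls are pooled so that user's agents can draw on them.
    allotment = human_seats * plan.calls_included_per_seat
    overage_calls = max(0, api_calls - allotment)
    return seat_fees + overage_calls * plan.overage_price_per_call

plan = PricingPlan()
print(monthly_bill(plan, human_seats=10, agent_seats=0, api_calls=50_000))   # 300.0
print(monthly_bill(plan, human_seats=10, agent_seats=1, api_calls=250_000))  # 465.0
```

A customer whose agents stay inside the pooled allotment pays only for seats; one whose agents make 250,000 calls pays seat fees plus $150 of consumption under these made-up rates. That difference is where the revenue ceiling moves once agents, not people, drive usage.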

The useful point is commercial, not just technical. If agents can perform far more operations on enterprise data than humans ever did through the UI, the revenue ceiling for software platforms changes - but so do metering, access control, and customer expectations.

Read more: X

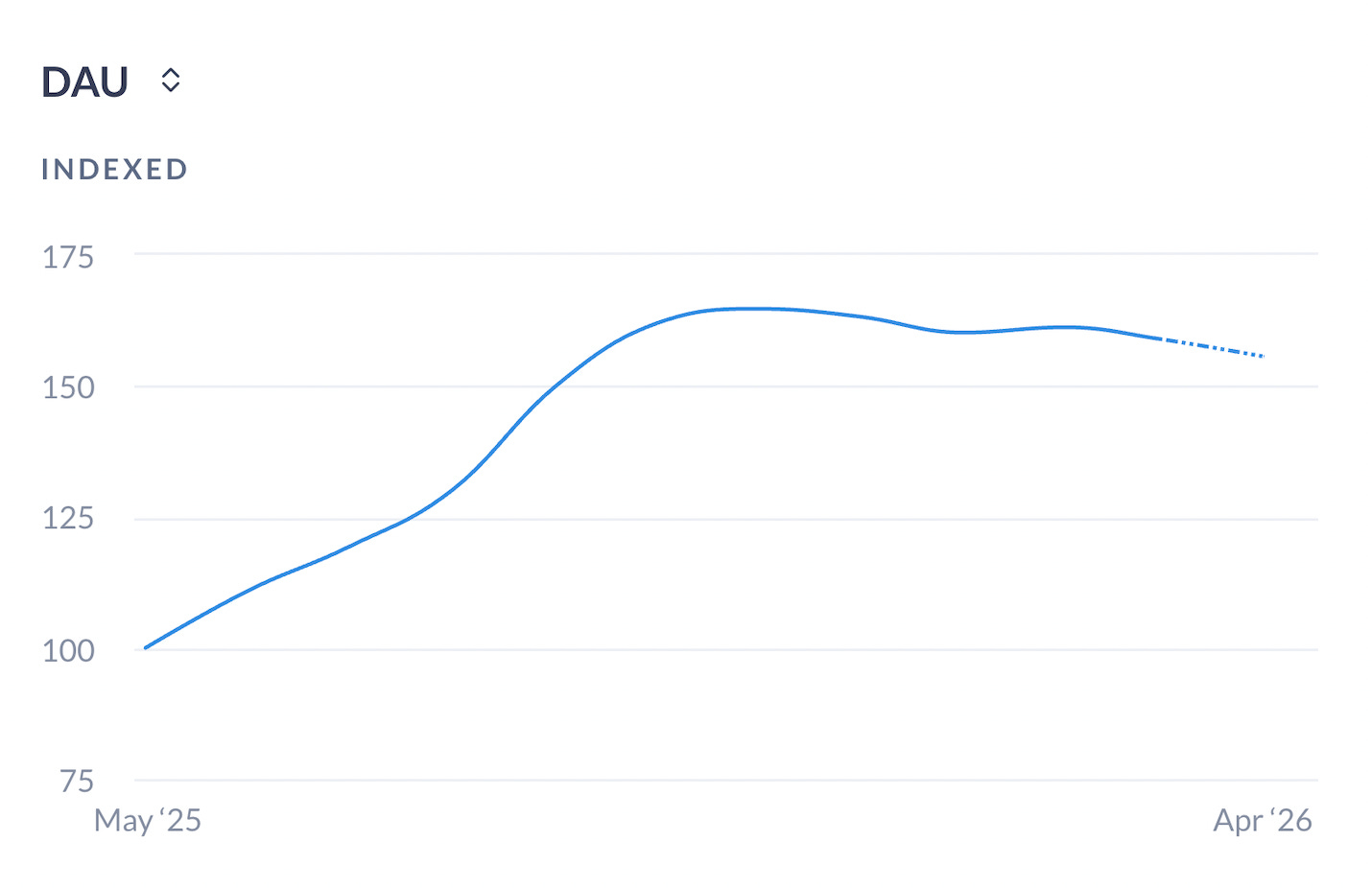

Are AI’s Consumer Applications Hitting a Wall?

Alex Kantrowitz · Big Technology · May 1, 2026 · Tags: AI, Consumer, ChatGPT, Apptopia, Earnings, Enterprise, Bubble

Kantrowitz reads behind the OpenAI revenue miss that rattled the market this week and finds a bigger story almost everyone glossed over: consumer AI may be plateauing. ChatGPT failed to hit its goal of 1 billion weekly active users by the end of 2025 and may not be there yet (900M as of February). Apptopia data shows daily active users for chatbots as a whole have fallen in 4 of the past 5 months.

The split with enterprise is brutal. AWS just printed 28% growth (its best in years), Google Cloud 63%, Azure 40% - all powered by enterprise AI demand. Meanwhile Meta’s stock fell 8.5% after it told investors it would spend up to $145B chasing “personal superintelligence,” and Apple - the consumer-AI “failure” - sailed through earnings on iPhone and MacBook Neo strength. In Kantrowitz’s telling, Apple, for the moment, looks wise to have skipped a foundation-model arms race waged in service of a consumer app nobody actually wanted.

His read: consumer AI has surged on novelty (Studio Ghibli moments, voice mode spikes) but no mainstream generative AI companion, fitness app, life coach, or fantasy adventure has emerged. The exception is agentic coding - Codex and Claude Code are booming, Anthropic is on track for $30B+ this year, and GPT-5.5 reportedly doubled Codex revenue in under a week. The question is whether those agent “super apps” become as standard as the phone, or whether the consumer side stays stuck while the enterprise dollars pile up.

Read more: Big Technology

Meta Is Dying. It’s About Time.

Julia Angwin · The New York Times · May 8, 2026 · Tags: AI, Meta, Facebook, Platforms, Social-Media, Advertising, Capex, Trust

Angwin argues that Meta has entered the internet company “zombie era”: still huge, still profitable, but visibly aging and increasingly dependent on extracting more from a declining or alienated user base. The core evidence is not that Facebook disappears tomorrow. It is that Meta’s latest earnings showed the first reported dip in daily users across its properties, while the company remains tied to a founder-control structure that lets Mark Zuckerberg keep making enormous strategic bets.

The spending history is the sharpest part. Angwin points to roughly $80 billion poured into the metaverse from 2021 to 2026, followed by another AI pivot: about $100 billion spent on open AI models, a $14 billion Scale AI deal to rebuild the effort, and a stated minimum of $115 billion more over the next year. Meanwhile, Meta’s long-term debt has risen, and some data-center financing sits off balance sheet.

The piece belongs in this issue because it links consumer-platform fatigue to the AI capex race. Meta’s cash cow is still immense, but if the core product is aging, the company’s AI spending starts to look less like optional innovation and more like a very expensive search for the next interface. Angwin’s warning is that declining internet platforms do not merely fade away. They can become more ad-loaded, scam-filled, and socially corrosive on the way down.

Read more: The New York Times

We May Now Know What Kind of AI Bubble This Is

Casey Newton · Platformer · April 30, 2026 · Tags: AI, Bubble, Infrastructure, Railroads, OpenAI, Capex

Newton’s useful frame is not whether every AI company deserves its valuation, but whether the buildout leaves real infrastructure behind even if financial returns disappoint. The railroad analogy matters: speculative overinvestment can produce painful financial wreckage and still create a substrate that later companies use.

That distinction matters for operators and investors. Individual AI companies can be wildly overvalued, and some will be wiped out, without the entire buildout being fake. Data centers, fiber, power contracts, accelerator capacity, and networking improvements may remain useful even if today’s equity stories break. The near-term market can still punish excess: consumer adoption may plateau, revenue may miss expectations, and capex assumptions may prove too optimistic. Newton’s framing separates the durability of the substrate from the valuation of the companies racing to control it.

Read more: Platformer

Import AI 455: Automating AI Research

Jack Clark · Import AI · May 4, 2026 · Tags: AI, Recursive-Self-Improvement, AI-R&D, Anthropic, Frontier-Models, Forecasting, Capabilities

Clark’s full Import AI essay makes a concrete forecast: he now puts better than a 60 percent chance on no-human-involved AI R&D by the end of 2028, defined as a system powerful enough to plausibly train its own successor. He does not expect that in 2026, but thinks a non-frontier proof of concept could appear within a year or two.

The evidence is cumulative. SWE-Bench has moved from Claude 2 at roughly 2 percent in late 2023 to Claude Mythos Preview at 93.9 percent. METR’s task-horizon curve has advanced from GPT-3.5 handling 30-second tasks in 2022 to Opus 4.6 handling roughly 12-hour tasks in 2026. CORE-Bench, which tests whether agents can reproduce research papers from code repositories, rose from a 21.5 percent top score in 2024 to 95.5 percent by late 2025. MLE-Bench has also moved sharply upward.
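If the METR horizon figures above are read as a smooth exponential (a simplification; the measured curve is noisier), the implied doubling time is a short calculation. The 48-month gap between the two points is an assumption, since the summary gives only the years 2022 and 2026.

```python
import math

def doubling_time_months(start_seconds: float, end_seconds: float, elapsed_months: float) -> float:
    """Months per doubling if task horizon grew exponentially between two points."""
    doublings = math.log2(end_seconds / start_seconds)
    return elapsed_months / doublings

# Figures from the summary: ~30-second tasks (2022) to ~12-hour tasks (2026).
start = 30.0
end = 12 * 60 * 60.0           # 12 hours in seconds
months = 48                    # assumed elapsed time

print(round(math.log2(end / start), 2))                     # 10.49 doublings
print(round(doubling_time_months(start, end, months), 1))   # 4.6 months per doubling
```

That works out to roughly one doubling every four and a half months, the kind of compounding Clark's forecast leans on. Change the endpoints or the elapsed time and the answer moves, so treat it as a back-of-envelope check rather than a measurement.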

Clark’s deeper point is that frontier progress may depend less on constant genius than on repeated engineering loops: scale, find breakage, fix it, and scale again. Models are increasingly useful at code, experiment orchestration, post-training, kernel optimization, and managing synthetic teams. If that loop compounds, compute allocation and governance become first-order political questions.

Read more: Import AI

Agent Memory Is A Product Surface, Not Saved Chat History

Adaline Labs · May 2, 2026 · Tags: AI, Agents, Memory, Context, Product, Production, Observability

Adaline’s core claim is that agent memory is not chat history and not a bigger context window. It is a product surface. As agents move from one-off tools into ongoing work, forgetting becomes a bug; remembering the wrong thing becomes a worse bug because it produces confident, hidden error.

The sharpest data point comes from the LOCOMO benchmark cited in the State of AI Agent Memory 2026 report. Stuffing everything into context gets 72.9 percent accuracy, but at roughly 17 seconds of p95 latency and about 26,000 tokens per conversation - around 14 times the token cost of selective memory. A million-token window does not solve persistence, scope, deletion, ownership, or retrieval quality.

The essay breaks memory into user, task, project, and operational scopes, then lists the failures teams hit without explicit rules: stale memory, overgeneralization, tenant leakage, instruction conflict, hidden influence, and bad retrieval. The practical standard is simple: production agents should not remember everything. They should remember the right thing, at the right time, for the right reason.
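A minimal sketch of what explicit memory rules can look like, assuming an in-process store and made-up names (`Scope`, `ScopedMemory`). It encodes three of the guards implied by the failure list above: tenant isolation, expiry for stale memory, and deletion. It is not Adaline's design, and it leaves retrieval quality and instruction conflicts out entirely.

```python
import time
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional

class Scope(Enum):
    USER = "user"
    TASK = "task"
    PROJECT = "project"
    OPERATIONAL = "operational"

@dataclass
class MemoryItem:
    tenant_id: str                       # which customer this memory belongs to
    scope: Scope
    key: str                             # id of the user, task, or project it is about
    text: str
    created_at: float
    ttl_seconds: Optional[float] = None  # None means the memory never expires

    def is_stale(self, now: float) -> bool:
        return self.ttl_seconds is not None and now - self.created_at > self.ttl_seconds

@dataclass
class ScopedMemory:
    items: list = field(default_factory=list)

    def _matches(self, m: MemoryItem, tenant_id: str, scope: Scope, key: str) -> bool:
        return m.tenant_id == tenant_id and m.scope == scope and m.key == key

    def remember(self, tenant_id: str, scope: Scope, key: str, text: str,
                 ttl_seconds: Optional[float] = None, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self.items.append(MemoryItem(tenant_id, scope, key, text, now, ttl_seconds))

    def recall(self, tenant_id: str, scope: Scope, key: str,
               now: Optional[float] = None) -> list:
        """Fresh memories for exactly this tenant, scope, and key; nothing else leaks in."""
        now = time.time() if now is None else now
        return [m.text for m in self.items
                if self._matches(m, tenant_id, scope, key) and not m.is_stale(now)]

    def forget(self, tenant_id: str, scope: Scope, key: str) -> int:
        """Delete matching memories and report how many were removed."""
        before = len(self.items)
        self.items = [m for m in self.items if not self._matches(m, tenant_id, scope, key)]
        return before - len(self.items)

mem = ScopedMemory()
mem.remember("acme", Scope.USER, "u1", "prefers metric units", now=0)
mem.remember("acme", Scope.TASK, "t9", "draft is in review", ttl_seconds=3600, now=0)
print(mem.recall("acme", Scope.USER, "u1", now=10))    # ['prefers metric units']
print(mem.recall("globex", Scope.USER, "u1", now=10))  # [] : other tenants see nothing
print(mem.recall("acme", Scope.TASK, "t9", now=7200))  # [] : task memory has expired
```

The design choice is that every read names its tenant, scope, and key, so the default is to remember nothing outside that slice.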

Read more: Adaline Labs

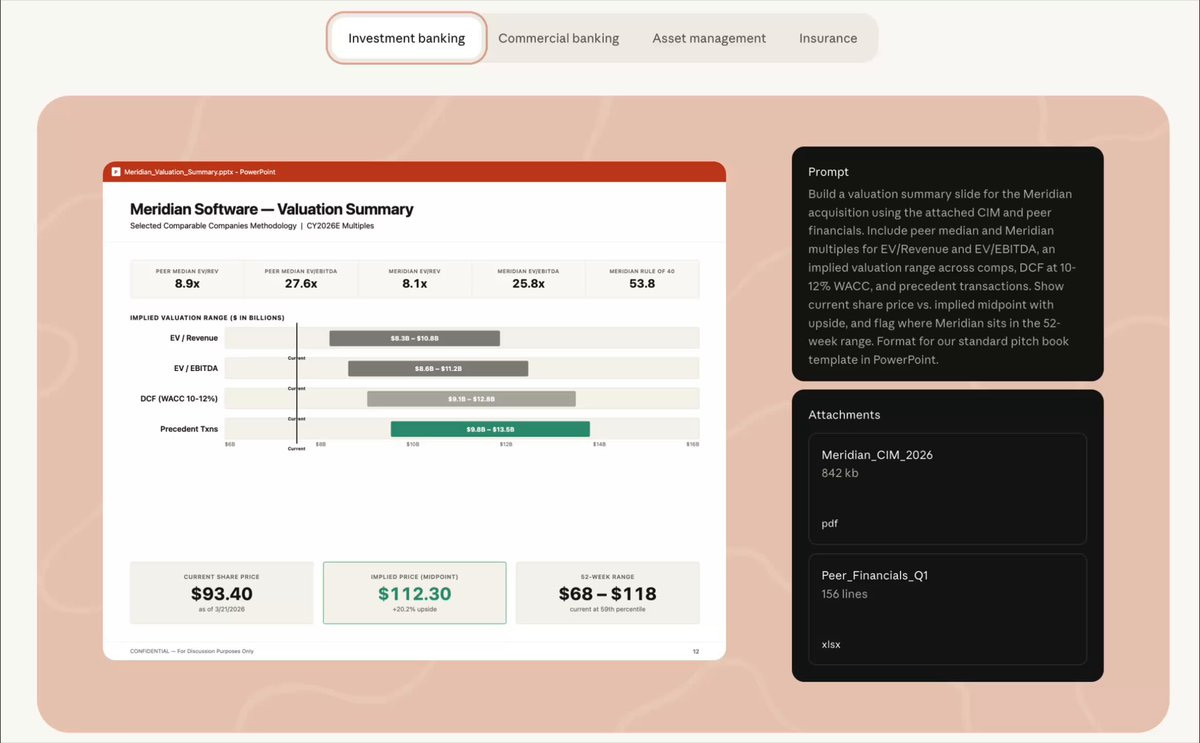

Anthropic’s Finance Agents Come for the Analyst Pyramid

Josh Kale / Claude · X · May 5, 2026

Josh Kale’s framing is blunt - and promotional: Anthropic is packaging parts of the first-year analyst workflow into finance-agent templates. The source is Claude’s own launch post announcing ready-to-run agent templates for financial services - pitch builder, meeting preparer, earnings reviewer, model builder, market researcher, valuation reviewer, GL reconciler, month-end closer, statement auditor, and KYC screener - installable as plugins in Cowork and Claude Code, or runnable as production Managed Agents via cookbooks.

The important bit is not that banks will fire every junior analyst tomorrow. It is that Anthropic is packaging the analyst workflow as reusable agent infrastructure rather than a generic chatbot. Finance is a perfect wedge: repetitive document work, messy spreadsheets, regulatory checklists, valuation models, and presentation assembly, all surrounded by expensive human review. The analyst pyramid does not vanish; it gets flatter, with fewer people doing more orchestration, review, and exception handling.

This belongs next to the week’s software-factory and agent-memory pieces. The most valuable AI products are becoming occupational playbooks: not “ask Claude anything,” but “run the month-end close,” “prepare the meeting,” “review the valuation,” and “build the pitch.” That is where enterprise AI moves from demo to workflow displacement.

Read more: X / Josh Kale · Claude

AI’s big messaging pivot

Noah Smith · Noahpinion · May 5, 2026 · Tags: AI, Jobs, Messaging, OpenAI, Anthropic, Regulation, Public-Opinion, Nationalization

Smith catches a real shift in the AI industry’s public story. Sam Altman, Jensen Huang, and Marc Andreessen are all pushing back against the idea that AI’s purpose is to eliminate human work. That is a philosophical move, but also a political one. The old pitch - this technology may put you on welfare forever and might also kill everyone - was never going to survive contact with voters.

Policy risk is the reason the pivot matters. Smith points to polls showing Americans souring on AI, Bernie Sanders moving from copyright and water concerns toward catastrophic-risk language, Trump reportedly considering government review of new models, and a broader Washington conversation about soft nationalization. In that environment, labs need to look less like a job-destruction cartel and more like builders of augmentation tools.

The competitive angle is pointed. Anthropic, especially through Dario Amodei, has made job-loss warnings unusually explicit. OpenAI can now position itself as the human-friendly alternative. The labor debate is becoming a narrative contest as much as a technical forecast.

Read more: Noahpinion

A.I. Populism Is Here. And No One Is Ready.

David Wallace-Wells · The New York Times Magazine · May 8, 2026 · Tags: AI, Populism, Backlash, Data-Centers, OpenAI, Public-Opinion, Regulation, Diffusion

Wallace-Wells argues that the AI industry spent years preparing for existential risk and technical race dynamics while underestimating the much more immediate political risk: people. The warning signs are no longer abstract. He opens with attacks on Sam Altman’s San Francisco property, then widens the lens to public anger over data centers, job loss, elite concentration, and the sense that a handful of men now control the infrastructure of the future.

The useful turn is from AI safety to AI populism. Americans are not only worried that models might become dangerous. They are worried that AI is another oligarchic bargain: local costs, national disruption, and private upside captured by a few companies. Wallace-Wells points to polling showing Americans far more concerned than excited about AI, and to a sharp swing against local data-center construction, especially in Northern Virginia.

The piece also complicates the race narrative. Recursive self-improvement may still arrive, but the near-term bottleneck may be diffusion: who uses AI, for what, under which institutional constraints, and with what public permission. That puts it next to Noah Smith’s messaging-pivot piece. The labs are learning that winning benchmarks is not enough if voters, workers, towns, courts, and regulators decide the bargain looks rigged.

Read more: The New York Times

Why SaaS freemium playbooks don’t work in AI, and what to do instead

Vikas Kansal · Lenny’s Newsletter · May 5, 2026

Kansal’s thesis is that AI breaks the old SaaS freemium bargain because the free tier is no longer almost free to serve. In software, giving away a basic product could create habit at near-zero marginal cost. In AI, every magical free interaction burns compute, and if the free version is good enough to satisfy most users, the company has trained demand while undermining the reason to pay.

The killer detail comes from Google’s AI subscription work: the team found that gating model intelligence was weaker than gating usage intensity, larger context windows, speed, and compute-heavy modalities. The answer was not simply to put the best model behind a $20 wall, but to build Plus, Pro, and Ultra tiers around volume, friction reduction, and expensive experiences such as real-time world models. The pull is that AI companies are not just selling answers anymore. They are selling hours, priority access, and the ability to turn expensive inference into a predictable consumer subscription.
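A sketch of the gating pattern described above, in which every tier calls the same model and differs only in volume, context size, speed, and compute-heavy modalities. The Plus, Pro, and Ultra names come from the summary; the free tier, every limit, and the function name `check_request` are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Tier:
    monthly_requests: int     # usage intensity
    max_context_tokens: int   # larger context windows cost more to serve
    priority: bool            # faster, queue-jumping inference
    modalities: frozenset     # compute-heavy modalities unlock at higher tiers

# Hypothetical limits. The same model serves every tier; only intensity differs.
TIERS = {
    "free":  Tier(100,     32_000,    False, frozenset({"text"})),
    "plus":  Tier(2_000,   128_000,   False, frozenset({"text", "image"})),
    "pro":   Tier(20_000,  1_000_000, True,  frozenset({"text", "image", "video"})),
    "ultra": Tier(200_000, 1_000_000, True,  frozenset({"text", "image", "video", "world_model"})),
}

def check_request(tier_name: str, used_this_month: int, context_tokens: int, modality: str) -> str:
    """Decide whether a request fits the tier, or which limit prompts an upgrade."""
    tier = TIERS[tier_name]
    if used_this_month >= tier.monthly_requests:
        return "upgrade: monthly usage limit reached"
    if context_tokens > tier.max_context_tokens:
        return "upgrade: context window too large"
    if modality not in tier.modalities:
        return "upgrade: modality not in tier"
    return "ok, priority queue" if tier.priority else "ok, standard queue"
```

No branch checks which model to call. The upgrade prompts fire on intensity, not intelligence, which is the distinction the Google team found worked better.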

Read more: Lenny’s Newsletter

Optimizing Software Factories

Author: Tomasz Tunguz Published: May 5, 2026

Tunguz’s thesis is that the real constraint in AI-heavy software teams is not throughput, but resilience. A twenty-person engineering team that loses one person loses five percent of its capacity. A three-person team running twenty autonomous agents loses a third of the institutional memory that trains, prompts, validates, and debugs the agent fleet. The agents keep moving, but the orchestration knowledge walks out the door.

The killer detail is the budget model. At a 10/90 AI-to-labor ratio, a mid-stage engineering budget buys roughly twenty engineers plus Copilot, Cursor, and inference spend. At 50/50, it buys a dozen engineers and a fleet of agents, turning humans into architects and decomposers. At 90/10, three engineers sit inside a constellation of agents that generate, review, test, deploy, monitor, and optimize - with almost no hierarchy and almost no redundancy.
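The redundancy arithmetic behind the three scenarios fits in a few lines. The headcounts come from the piece, taking "a dozen" as 12. The assumption that orchestration knowledge is spread evenly across the team, and the `departure_exposure` helper itself, are illustrative simplifications.

```python
# Team sizes per AI-to-labor budget split, as described in the budget model.
SCENARIOS = {"10/90": 20, "50/50": 12, "90/10": 3}

def departure_exposure(headcount: int) -> float:
    """Fraction of the team lost when one engineer leaves.

    Assumes knowledge is spread evenly across the team. In practice it is
    often concentrated, which makes a single departure worse than this.
    """
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return 1 / headcount

for split, engineers in SCENARIOS.items():
    print(f"{split}: {engineers} engineers, one resignation = {departure_exposure(engineers):.0%}")
```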

The pull is operations research, not AI hype. Manufacturing plants do not run at 100 percent utilization because one breakdown can cascade through the whole system. Slack is not waste; it is the feature that keeps the factory robust. If startups are becoming software factories, then the question is not how few humans can supervise the most agents. It is how much human redundancy the system needs before one resignation becomes an outage.
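The utilization point has a standard operations-research form. As a hedged illustration (the piece does not name a model), a single-server M/M/1 queue has average time in system W = S / (1 - rho), where S is the mean service time and rho is utilization. Delay therefore grows without bound as utilization approaches 100 percent. The function name and the utilization values are illustrative.

```python
def mm1_time_in_system(utilization: float, service_time: float = 1.0) -> float:
    """Average time a job spends in an M/M/1 queue, waiting plus service.

    W = S / (1 - rho); the queue is only stable for 0 <= rho < 1.
    """
    if not 0 <= utilization < 1:
        raise ValueError("M/M/1 queue is unstable at utilization >= 1")
    return service_time / (1 - utilization)

for rho in (0.5, 0.8, 0.9, 0.99):
    print(f"utilization {rho:.0%}: {mm1_time_in_system(rho):.0f}x the bare service time")
```

Going from 90 to 99 percent utilization multiplies average delay tenfold in this model, which is the quantitative case for deliberate slack.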

Read more: Tomasz Tunguz

Thousands of Vibe-Coded Apps Expose Corporate and Personal Data on the Open Web

Author: Andy Greenberg Published: May 7, 2026

Greenberg’s thesis is that vibe coding is creating a new security problem that is less about subtle bugs than about applications being put on the open web with almost no authentication at all. RedAccess cofounder Dor Zvi says his team found more than 5,000 AI-built apps from Lovable, Replit, Base44, and Netlify with little or no access control, and around 40 percent appeared to expose sensitive data, including medical information, financial records, corporate presentations, strategy documents, and customer-chat logs.

The killer detail is how easy the discovery process was. Because many apps are hosted on the AI coding companies’ own domains, RedAccess found exposed projects through ordinary Google and Bing searches combining those domains with targeted terms. WIRED verified that several examples were still online, while the companies largely argued that public exposure reflected user configuration choices rather than platform vulnerabilities.

The pull is that AI coding tools have lowered the barrier not only to building software, but to shipping it outside normal engineering and security review. The next S3-bucket-style data-exposure wave may come from employees who never thought they were deploying production software.
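The missing piece in many of these apps is a deny-by-default access check. Below is a minimal sketch that assumes a single shared bearer token and header names already normalized to `Authorization`. Real deployments should prefer per-user sessions or an identity provider. `is_authorized` and `handle_request` are hypothetical names; only `hmac.compare_digest` is a real standard-library call.

```python
import hmac

def is_authorized(headers: dict, expected_token: str) -> bool:
    """Return True only for a well-formed 'Bearer <token>' header carrying the right token."""
    auth = headers.get("Authorization", "")
    scheme, _, token = auth.partition(" ")
    if scheme.lower() != "bearer" or not token:
        return False
    # compare_digest avoids leaking, via timing, how many characters matched.
    return hmac.compare_digest(token.encode(), expected_token.encode())

def handle_request(path: str, headers: dict, expected_token: str) -> tuple:
    """Deny by default: every path except a public health check requires a token."""
    if path == "/health":
        return 200, "ok"
    if not is_authorized(headers, expected_token):
        return 401, "unauthorized"
    return 200, f"data for {path}"
```

The important choice is the default: a route someone forgets to protect fails closed instead of serving its data to a search-engine crawler.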

Read more: Wired

Notes from inside China’s AI labs

Nathan Lambert · Interconnects AI · May 7, 2026 · Tags: AI, China, Frontier-Models, Talent, Research-Culture, Labs, Competition

Lambert returns from visits with Chinese AI labs with a useful correction to the usual chip-and-sanctions story. The American and Chinese frontier labs may look similar in the technical ingredients - scientists, data, compute, RL, agents, and model-stack detail - but the operating cultures are different enough to matter.

His strongest claim is organizational. Chinese labs appear unusually well matched to the current LLM-building game: meticulous stack work, fast absorption of prior art, large numbers of student contributors, less public star-scientist culture, and a willingness to optimize the final model rather than individual research credit. That does not mean China is necessarily better at 0-to-1 scientific invention. It means fast-following at frontier-model construction may be a deep institutional strength.

This belongs in the issue because it shifts the China AI debate from abstract geopolitics to lab practice. The competition is not only about export controls or capital. It is also about how teams absorb context, suppress ego, organize young talent, and turn known recipes into working systems at speed.

Read more: Interconnects AI

Venture

Minimum Half of Seed Startups Will Not Make It to Series A

Peter Walker · Head of Insights, Carta · Apr 30, 2026 · Tags: Venture, Seed, Series-A, Carta, Markup-Rate, AI-Exception, Graduation-Rate

Walker states the seed market's hard truth plainly: at least half of seed startups never raise a Series A, and in difficult years the failure rate is closer to 65 percent. Even in the best years, only about half made it.

His lesson is narrower than the headline: macro conditions matter, and speed matters. For seed funds, he argues that primary markups, excluding minor bridges and convertibles, are a useful indicator of portfolio quality and reserve allocation.
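Both metrics are simple ratios over a portfolio's round history. The sketch below uses invented companies and prices. The record layout, the stage labels, the chronological ordering of rounds, and the rule that a markup means the latest priced round is above the first priced round are all assumptions for illustration, not Carta's methodology.

```python
from collections import defaultdict

# Hypothetical round records in chronological order:
# (company, stage, instrument, price_per_share)
ROUNDS = [
    ("alpha", "seed", "priced", 1.00),
    ("alpha", "series_a", "priced", 3.00),
    ("beta", "seed", "priced", 1.00),
    ("beta", "bridge", "convertible", None),  # ignored for markups
    ("gamma", "seed", "priced", 1.00),
    ("gamma", "seed_extension", "priced", 1.50),
    ("delta", "seed", "priced", 1.00),
]

def seed_portfolio_stats(rounds):
    """Return (Series A graduation rate, primary markup rate) for a seed portfolio."""
    stages = defaultdict(set)
    prices = defaultdict(list)
    for company, stage, instrument, price in rounds:
        stages[company].add(stage)
        # Only priced rounds count toward markups; bridges and convertibles are skipped.
        if instrument == "priced" and price is not None:
            prices[company].append(price)
    companies = list(stages)
    graduated = sum(1 for c in companies if "series_a" in stages[c])
    marked_up = sum(1 for c in companies
                    if len(prices[c]) >= 2 and prices[c][-1] > prices[c][0])
    return graduated / len(companies), marked_up / len(companies)

print(seed_portfolio_stats(ROUNDS))  # (0.25, 0.5)
```

Under this rule a priced seed extension at a higher price counts as a markup even though the company has not yet graduated, which is why the markup rate can run ahead of the graduation rate.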

His AI caveat is important. The companies raising two or three times in twelve months are real, but they are a tiny fraction of venture. Most founders should not benchmark themselves against the OpenAI-Anthropic-xAI funding environment. The chasm is not just financing. It is where the market decides whether a product has become a business.

Read more: LinkedIn

Cursor + Kalshi Seed Investor on Spotting Outlier Talent

Turner Novak interviews Ali Partovi · The Peel · May 7, 2026 · Tags: Venture, Seed, Talent, Neo, Cursor, Kalshi, Founder-Selection, Computer-Science

Turner Novak’s Peel episode with Ali Partovi is a founder-selection piece. Neo’s model is explicitly people-first: find exceptional young technical talent early, build the network around them, and invest before the company is obvious.

Partovi traces the idea to the pain of missing PayPal and Google at three employees, then to a network that began in 2017 around top college students and eventually backed seed rounds including Cursor and Kalshi. The strongest thread is that computer science has become a business education as well as a technical credential. It teaches systems thinking, abstraction, leverage, and the ability to build before a market has settled language for the thing being built.

The venture lesson is that early-stage edge moves earlier when capital is abundant and consensus forms quickly. By the time the metrics are clean, the best companies may already be expensive. Neo’s bet is that alpha starts as talent underwriting: identify the outlier person before the polished company exists.

Listen/read: X / Turner Novak · Episode notes

The Disappearance of the Ten-Year Fund

Dan Gray · The Odin Times · May 3, 2026 · Tags: Venture, Fund-Structure, Liquidity, LPs, Secondaries, Sequoia, Permanent-Capital, Bartlett-Ramella

Gray reads Bartlett and Ramella's Stanford Law paper, which draws on PitchBook cash-flow, NAV, and portfolio data for the 1995-2014 vintages, and lands on a structural conclusion: the ten-year venture fund is functionally broken. For 2010-2014 vintage funds, median year-ten NAV still exceeds total committed capital. Companies are staying private longer and getting larger, so value remains unrealized well past the legal fiction of a ten-year fund life.

The industry response is bifurcating. Sequoia’s open-ended structure, and adviser registrations by firms such as Andreessen Horowitz and General Catalyst, acknowledge that the top of the market increasingly wants permanent-capital flexibility. At the other end, Gray argues small funds should treat the ten-year horizon as a discipline, not a relic: concentrate at seed and Series A, where pricing edge is real, and use the growing secondary market as the default handoff point.

The piece is a useful reminder that liquidity, not paper markup, is the LP’s actual product. Venture can still work, but the fund vehicle has to match the time profile of the assets it owns.
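The liquidity point maps onto the standard LP multiples: DPI measures cash actually returned, RVPI measures value still held on paper, and TVPI is their sum. The sketch below uses hypothetical fund figures, chosen only so that year-ten NAV exceeds commitments, as in the vintages Gray describes.

```python
def fund_multiples(paid_in: float, distributions: float, nav: float) -> dict:
    """Standard LP multiples: DPI (cash back), RVPI (unrealized NAV), TVPI (their sum)."""
    if paid_in <= 0:
        raise ValueError("paid_in must be positive")
    dpi = distributions / paid_in
    rvpi = nav / paid_in
    return {"DPI": dpi, "RVPI": rvpi, "TVPI": dpi + rvpi}

# Hypothetical year-ten snapshot of a fully called $100M fund:
# $40M distributed, $110M still held as NAV (more than total commitments).
for name, value in fund_multiples(paid_in=100, distributions=40, nav=110).items():
    print(f"{name}: {value:.2f}x")
```

A 1.5x TVPI looks healthy, but only the 0.4x DPI has reached LPs as cash. The rest depends on an exit or a secondary sale.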

Read more: The Odin Times

Fundamental Truths in VC Today

Samir Kaji · Allocate / Venture Unlocked · Apr 2026 · Tags: Venture, Barbell, Growth-Equity, AI, Series-C, LPs, SPVs, ARR, Secondaries

Kaji’s list of nine “fundamental truths” is useful because it says plainly that venture is no longer one asset class. Seed funds, growth managers, SPV operators, secondaries, credit, and wealth-channel products now sit under the same label while offering very different risk, fee, and liquidity profiles.

The barbell is the central point. Small funds still need idiosyncratic sourcing, price discipline, and enough portfolio construction to survive the left tail. Large firms and SPV operators are increasingly selling access to consensus growth names, sometimes with PE-like economics and concentrated books. Kaji’s sharpest example is an SPV operator reportedly deploying about $1 billion into top consensus growth names in twelve months at a 5 percent one-time fee and 15 percent carry.

AI accelerates the split, especially around Series C, where category winners start to look legible and capital floods in. But Kaji does not treat capital intensity as proof of durability. Foundation models still need sustainable margins, application moats are under pressure, and infrastructure demand remains partly forward-financed. The result is not “venture is dead.” It is several capital markets wearing one name.
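Kaji's SPV example is easy to put into numbers. The $1 billion deployed, the 5 percent one-time fee, and the 15 percent carry come from the piece. The 2x gross outcome is hypothetical, and the sketch assumes the fee is paid on top of deployed capital and that carry is charged on gross profit with no hurdle. Real SPV terms vary.

```python
def spv_economics(deployed: float, gross_multiple: float,
                  one_time_fee: float = 0.05, carry: float = 0.15) -> dict:
    """Rough SPV economics: fee paid on top of capital, carry on gross profit, no hurdle."""
    fee = deployed * one_time_fee
    exit_value = deployed * gross_multiple
    profit = max(exit_value - deployed, 0.0)
    carried_interest = profit * carry
    lp_paid = deployed + fee
    lp_received = exit_value - carried_interest
    return {
        "fee": fee,
        "carry": carried_interest,
        "manager_take": fee + carried_interest,
        "lp_net_multiple": lp_received / lp_paid,
    }

result = spv_economics(deployed=1_000_000_000, gross_multiple=2.0)
for key, value in result.items():
    print(f"{key}: {value:,.2f}")
```

Under these assumptions, a 2x gross result nets LPs well under 2x, and the one-time fee is earned whether or not the names perform.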

Read more: LinkedIn

Billion-Dollar AI Rounds Push April To Third-Highest Startup Funding Month In A Year

Gené Teare · Crunchbase News · May 5, 2026 · Tags: Venture, AI, Funding, Megarounds, Capital-Concentration, Crunchbase, SignalRank

Teare's April funding report is the cleanest snapshot of capital concentration this week. Crunchbase says global venture funding reached $56 billion in April, the third-largest monthly total in the past year and up 100 percent year over year. But two rounds, Anthropic's $15 billion and the $10 billion raised by Project Prometheus, Jeff Bezos's AI-manufacturing company, accounted for 45 percent of all venture capital in the month.

AI dominated the stack: $37 billion, or 66 percent of global venture investment. Model companies absorbed $26.7 billion; physical AI across robotics, aerospace, drones, and autonomous vehicles took about $5.3 billion; and AI infrastructure in semiconductors and data centers raised $1.8 billion. U.S. companies raised about 70 percent of the global total.
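The concentration figures can be checked directly against the reported totals. The inputs below are Crunchbase's rounded numbers as given in this summary. The "other AI" remainder is simply the part of the $37 billion that the three named segments do not cover.

```python
# April 2026 figures from Crunchbase, in billions of dollars (rounded as reported).
APRIL_TOTAL = 56.0
MEGAROUNDS = {"Anthropic": 15.0, "Project Prometheus": 10.0}
AI_TOTAL = 37.0
AI_SEGMENTS = {"model companies": 26.7, "physical AI": 5.3, "AI infrastructure": 1.8}

def share(part: float, whole: float) -> float:
    return part / whole

print(f"Two megarounds: {share(sum(MEGAROUNDS.values()), APRIL_TOTAL):.0%} of all venture")
print(f"AI: {share(AI_TOTAL, APRIL_TOTAL):.0%} of all venture")
for segment, amount in AI_SEGMENTS.items():
    print(f"{segment}: {share(amount, AI_TOTAL):.0%} of AI funding")
unallocated = AI_TOTAL - sum(AI_SEGMENTS.values())
print(f"Other AI categories: about ${unallocated:.1f}B")
```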

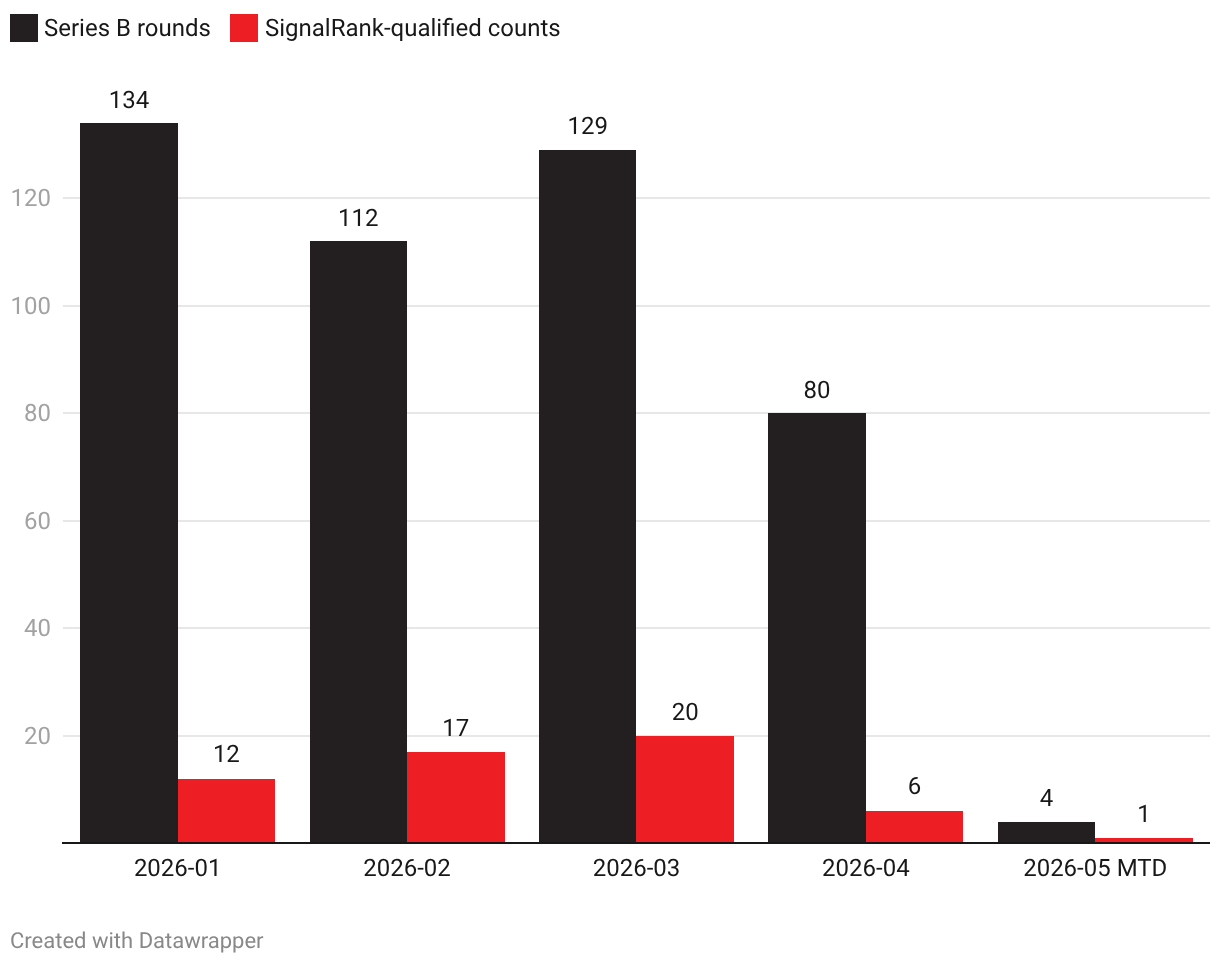

A separate SignalRank analysis adds useful Series B context. April 2026 had 80 Series B rounds, but only 6 were SignalRank-qualified. The five largest Series B rounds - Valar Atomics, X Square, Shengshu Technology, EVAS Intelligence, and ENGINEAI - were not flagged as SignalRank-qualified. The headline market is being pulled upward by mega AI rounds, while the investable Series B universe remains thinner and more selective.

SignalRank also generated a companion chart for the year-to-date Series B context: 2026 monthly Series B rounds and SignalRank-qualified counts, with data credited to agent.signalrank.com.

Chart image: PNG · Interactive Datawrapper version · Source: agent.signalrank.com

The caveats come from Crunchbase's own methodology notes and SignalRank's matching notes: reported private-market data lags, early-stage data is revised after the fact, and investor counts reflect disclosed or mapped participations rather than lead roles or dollars invested.

Read more: Crunchbase News

Robinhood’s venture fund IPO attracted 150,000+ retail investors, CEO says

Sarah Perez · TechCrunch · May 6, 2026 · Tags: Venture, Retail-Investing, Private-Markets, Closed-End-Funds, Robinhood, OpenAI, Frontier-Companies, Liquidity

Robinhood’s Ventures Fund I is a useful signal for the public-private market convergence story. Vlad Tenev says more than 150,000 retail investors participated in the IPO for the NYSE-listed fund, which gives public-market buyers exposure to private companies including OpenAI, Stripe, Oura, Databricks, Mercor, Ramp, Airwallex, and Boom. His pitch is simple: if companies are staying private long enough to reach hundreds of billions of dollars in value, retail investors should not have to wait for the IPO to participate.

The phrase Tenev uses is “frontier companies,” a replacement for “unicorn” when OpenAI and Anthropic can plausibly raise at valuations closer to sovereign-scale industrial assets than ordinary startups. The structure is also the story: a publicly traded venture-capital-like vehicle with daily liquidity, no accreditation requirement, a management fee, and no carry. The risk is obvious - retail access does not magically solve valuation opacity or portfolio concentration - but the demand signal matters. Private-market exposure is being repackaged for public investors in real time.

Read more: TechCrunch

Bye the Index

Marc Rubinstein · Net Interest · May 8, 2026 · Tags: Indexes, Nasdaq-100, QQQ, Market-Structure, ETFs, Licensing, Distribution, SignalRank

Rubinstein’s Nasdaq-100 story is useful because it treats an index not as a passive list, but as a distribution engine. Nasdaq launched QQQ in 1999 because retail investors wanted exposure to the exchange’s technology franchise without having to pick individual Nasdaq stocks. The product gathered $12 billion in assets in its first year, then moved from Nasdaq’s small in-house effort to an Invesco partnership built around sales, marketing, and fund distribution.

The lesson is that the index became a business line of its own. QQQ charged investors a small fee, spent heavily on marketing, and turned Nasdaq’s brand into a global licensing machine. Rubinstein notes that Nasdaq earned $854 million in index licensing fees over the past 12 months, with index licensing now overtaking cash equities trading, data, and listings inside its core market-services business.

That matters for SignalRank. A private-market index is not only a portfolio construction rule. It is a way to make an otherwise opaque market legible, repeatable, and distributable. The Nasdaq-100 lesson is that selection, brand, licensing, product wrappers, and distribution can reinforce each other. The index can become the flywheel.

Read more: Net Interest

Regulation

The Government Wants Model Safety. It Also Wants First Access.

Author: Marcus Schuler Published: May 4, 2026

Washington’s renewed interest in AI model review is not just a safety story; it is an access story. The killer detail is Anthropic’s Mythos contradiction: the Pentagon labeled Anthropic a supply-chain risk after a fight over military-use terms, while the NSA reportedly tested the same restricted model because it could find exploitable flaws in Microsoft software. One office saw risk. Another saw utility.

Schuler’s thesis is that a government review process would turn that improvisation into procedure. Instead of asking only whether a frontier model is safe for public release, officials could ask whether the government should see it first, especially when the model has cyber capability. OpenAI’s own restricted GPT-5.5 Cyber program makes the pattern harder to dismiss as one lab’s posture. Product launch, defense asset, and cyber risk are beginning to occupy the same release channel, and the customer that wants early access may also become the reviewer.

Read more: Implicator.ai

The Trump administration’s AI doomer moment

Casey Newton · Platformer · May 6, 2026 · Tags: AI, Regulation, Trump, Anthropic, Model-Safety, National-Security, Mythos

Newton’s column catches the Trump administration reversing itself in real time. A year ago, Vance, Sacks, and other officials dismissed AI safety as hand-wringing and regulatory capture. Now the White House is exploring a Biden-like pre-release review process for frontier models, and Google, Microsoft, and xAI have agreed to give the Commerce Department early access to evaluate them.

The proximate cause is Anthropic’s Mythos model. The government reportedly believes it is capable enough at developing cybersecurity exploits to create national-security risk, even as the administration is tangled in its own contradictory stance toward Anthropic: trying to designate it as a supply-chain risk, while also expanding government access to its models. The renamed AI Safety Institute, now the Center for AI Standards and Innovation, is quietly moving back toward safety review under a different political vocabulary.

The piece matters because it shows how capability changes ideology. “Let builders build” is easy until the model can materially alter cyber offense, infrastructure risk, and state capacity. The danger now is not only under-regulation, but a licensing regime that can be repurposed for political pressure or censorship.

Read more: Platformer

Infrastructure

AI Whales: OpenAI and Anthropic Dominate Cloud Backlogs

Author: Jessica Lessin / The Information Published: May 5, 2026

Lessin's chart turns the AI infrastructure story into a balance-sheet concentration story. Based on company filings and The Information's reporting, it estimates OpenAI's and Anthropic's commitments inside the revenue backlogs of the four biggest U.S. cloud providers: Microsoft at $627 billion total backlog, with roughly $280 billion from OpenAI and at least $30 billion from Anthropic; Oracle at $553 billion, with $300 billion from OpenAI; Google at $467.6 billion, with about $200 billion from Anthropic; and Amazon at $464 billion, with $138 billion from OpenAI plus $100 billion from Anthropic.

Justin Halford’s follow-up chart supplies the counterweight. OpenAI and Anthropic account for about $1.05 trillion of roughly $2.1 trillion in committed future cloud revenue at the four largest U.S. providers, but the rest of the backlog still grew 57 percent year over year. The whales dominate the order book; they are not the whole demand story.
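Lessin's per-provider figures reproduce Halford's totals. The short check below uses only the numbers given in this summary and counts only the commitments the chart reports. Anthropic's Microsoft figure is a floor ("at least"), so the lab total is too.

```python
# Backlog estimates from Lessin's chart, in billions of dollars:
# provider -> (total backlog, reported OpenAI/Anthropic commitments)
BACKLOG = {
    "Microsoft": (627.0, {"OpenAI": 280.0, "Anthropic": 30.0}),
    "Oracle": (553.0, {"OpenAI": 300.0}),
    "Google": (467.6, {"Anthropic": 200.0}),
    "Amazon": (464.0, {"OpenAI": 138.0, "Anthropic": 100.0}),
}

total = sum(t for t, _ in BACKLOG.values())
whales = sum(sum(c.values()) for _, c in BACKLOG.values())
print(f"Total backlog: ${total:,.1f}B; OpenAI + Anthropic: ${whales:,.1f}B ({whales / total:.0%})")
for provider, (t, commitments) in BACKLOG.items():
    print(f"{provider}: {sum(commitments.values()) / t:.0%} of backlog from the two labs")
```

The result, about $1.05 trillion of about $2.1 trillion, is roughly half. Oracle and Amazon are the most exposed by this measure.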

The caution is that backlog is not current revenue and not guaranteed profit. Still, the chart shows how much hyperscaler growth now depends on a tiny set of frontier-model customers whose economics depend on raising capital, selling enterprise AI, and converting compute into durable revenue.

Read more: X / Jessica Lessin · X / Justin Halford · The Information

Higher usage limits for Claude and a compute deal with SpaceX

Anthropic · May 6, 2026 · Tags: AI, Infrastructure, Compute, Anthropic, Claude, SpaceX, Capacity, Inference, Claude-Code, Energy

Anthropic has turned the “we need more compute” story into a product announcement. It is doubling Claude Code’s five-hour rate limits for Pro, Max, Team, and seat-based Enterprise plans, removing peak-hours reductions for Claude Code on Pro and Max, and raising API rate limits for Claude Opus models. The reason is new physical capacity.

The headline is SpaceX: Anthropic says it will use all compute capacity at SpaceX’s Colossus 1 data center, adding more than 300 megawatts and over 220,000 NVIDIA GPUs within the month. That sits alongside up to 5 GW with Amazon, 5 GW with Google and Broadcom beginning in 2027, $30 billion of Azure capacity through Microsoft and NVIDIA, and a $50 billion U.S. infrastructure investment with Fluidstack.

The product lesson is direct. Claude Code demand, long-context agents, finance workflows, and managed agents all translate into power, chips, networking, and capital allocation. For SpaceX, Colossus signals a possible broader role as an AI infrastructure supplier, especially if the companies’ stated interest in orbital AI compute develops into a real deployment. Frontier AI is becoming a contest for physical compute empires, not only benchmark scores.
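A "five-hour rate limit" is usually some form of rolling window. Anthropic has not published its limiter design, so this is a generic sliding-window sketch. The class name, the request counts, and the choice to count requests rather than tokens are all assumptions. In this model, doubling a limit simply doubles `limit` while the window stays five hours.

```python
from collections import deque

FIVE_HOURS = 5 * 60 * 60  # window length in seconds

class SlidingWindowLimiter:
    """Allow at most `limit` requests in any rolling window of `window_seconds`."""

    def __init__(self, limit: int, window_seconds: float = FIVE_HOURS):
        self.limit = limit
        self.window = window_seconds
        self.timestamps = deque()  # times of accepted requests, oldest first

    def allow(self, now: float) -> bool:
        # Drop accepted requests that have aged out of the window.
        while self.timestamps and now - self.timestamps[0] >= self.window:
            self.timestamps.popleft()
        if len(self.timestamps) < self.limit:
            self.timestamps.append(now)
            return True
        return False

base = SlidingWindowLimiter(limit=2)
doubled = SlidingWindowLimiter(limit=4)
burst = [0, 60, 120, 180]  # four requests within a few minutes
print([base.allow(t) for t in burst])     # [True, True, False, False]
print([doubled.allow(t) for t in burst])  # [True, True, True, True]
```

Each accepted request in the window is capacity the provider must hold in reserve, which is why higher limits have to wait for new data-center supply.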

Read more: Anthropic · Claude thread · 300 MW capacity detail · Grok on xAI / Colossus 2

Why KV Cache and Memory Drive AI Economics

Data Gravity · May 4, 2026 · Tags: AI, Infrastructure, Inference, KV-Cache, Memory, HBM, Unit-Economics, Agents

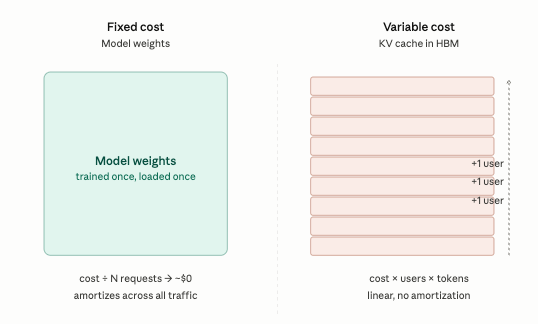

This is the clearest plain-English explanation this week of why AI economics are not mainly about raw compute. Model weights are the fixed cost; the KV cache is the variable cost. The cache is the model’s short-term memory, stored in expensive high-bandwidth GPU memory, and it grows with every word in a conversation. Long-context requests can turn one GPU from serving many short chats into serving one or two users.

That makes memory traffic the real unit-cost line for inference. It explains why cached tokens are discounted, why DeepSeek’s Multi-head Latent Attention mattered, why NVIDIA’s roadmap leans so hard into HBM bandwidth, and why simple GPU-as-a-service margins should compress. Customers are not just paying for math. They are paying for a memory traffic jam.

Agents make the problem harder because they replay long, changing histories while using tools and coordinating work. Agent economics will depend on who can compress, reuse, route, and govern memory state most efficiently.
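The memory arithmetic behind this is short. In a standard transformer, the KV cache stores one key vector and one value vector per layer, per KV head, per token, so its size grows linearly with context length. The model shape below (32 layers, 8 KV heads of dimension 128, 16-bit values) and the 40 GiB of free HBM are hypothetical round numbers, not any specific product's configuration.

```python
GIB = 2**30
FREE_HBM = 40 * GIB  # hypothetical GPU memory left after loading weights

def kv_cache_bytes(seq_len: int, n_layers: int = 32, n_kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2, batch: int = 1) -> int:
    """KV cache size: 2 (keys and values) x layers x KV heads x head dim x bytes x tokens."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * seq_len * batch

for tokens in (4_096, 131_072):
    per_user = kv_cache_bytes(tokens)
    print(f"{tokens:>7,} tokens: {per_user / GIB:.1f} GiB per user, "
          f"{FREE_HBM // per_user} concurrent users")
```

With these assumptions, the same GPU holds 80 users at 4K tokens but only 2 at 128K. Setting `n_kv_heads=32` (no grouped-query attention) quadruples every figure, which is why KV-reducing designs such as DeepSeek's latent attention matter for unit cost.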

Read more: Data Gravity

Is xAI a neocloud now?

Russell Brandom · TechCrunch · May 6, 2026 · Tags: AI, Infrastructure, xAI, SpaceX, Anthropic, Neocloud, Data-Centers, Compute, Colossus

Brandom asks the awkward question raised by the Anthropic-SpaceX compute deal: if xAI can lease the entire Colossus 1 data center to Anthropic, is xAI primarily a model company or a neocloud? Musk’s explanation is that xAI has moved training to Colossus 2 and no longer needs Colossus 1. The business logic is clear enough. If Grok usage is weaker than expected and the company has more GPU capacity than its products can use, Anthropic turns idle or surplus compute into revenue and credibility.

The strategic question is harder. Google and Meta tend to protect scarce compute for their own model and product roadmaps, even when cloud customers would pay for it. Selling major capacity to a competitor suggests a different priority stack: data centers, chips, orbital compute, and infrastructure monetization may be more central to Musk-world AI than Grok itself. That does not make the business small. It may make it bigger. But neocloud economics are less forgiving than software economics, with Nvidia pricing power on one side and volatile AI demand on the other.

Read more: TechCrunch

The AI Race Is Becoming a Landlord Business

Author: Corey Lahey Published: May 8, 2026

Lahey’s thesis is that frontier AI is starting to behave less like pure software and more like an industrial landlord business. The visible product is the model, but the strategic asset is the ability to serve intelligence reliably, cheaply, and at scale. Rate limits, latency, uptime, redundancy, chip supply, power, and multi-cloud relationships become product quality because customers experience capacity constraints as broken workflows, not as procurement problems.

The killer detail is the circular structure of the market. Amazon invests in Anthropic, Anthropic commits spend to AWS; Google invests in Anthropic, Anthropic buys Google capacity; Microsoft sits inside OpenAI’s cloud economics; Nvidia sells the shovels while cloud providers finance the mines. That can look like revenue circularity, but the demand is not imaginary. The practical lesson is that durable AI value may sit around the bottleneck rather than the interface. For startups, the question becomes what gets harder to copy after the model layer spreads: proprietary workflow data, distribution, permissions, compliance, availability, or recovery when an agent fails.

Read more: Ugly Baby & VC Stories

The biggest U.S. power grid is under strain from AI

Tim De Chant · TechCrunch · May 8, 2026 · Tags: AI, Infrastructure, Energy, Data-Centers, Grid, PJM, Power-Markets

De Chant uses PJM, the largest U.S. grid operator, to show how AI demand is colliding with institutions built for a slower energy world. PJM says its region has “years, not decades” to change. That matters because the territory includes Northern Virginia and other data-center-heavy markets where AI and cloud demand are already reshaping electricity planning.

The operational failure is concrete. PJM paused new generation interconnection requests in 2022 while demand was rising, leaving a backlog and then a rush of new requests when the queue reopened. More than 800 projects representing about 220 gigawatts of power are now seeking connection, while utilities, politicians, households, and data-center customers argue over prices, reliability, and who gets priority.

The story is a reminder that AI infrastructure is not just GPUs and model weights. It is power markets, grid queues, turbine shortages, renewables, batteries, ratepayers, and political legitimacy. If intelligence becomes a major new load on the grid, institutional throughput becomes part of the AI stack.

Read more: TechCrunch

Interview of the Week

Why History Keeps Happening

Andrew Keen interviews Patrick Wyman · Keen On · May 8, 2026 · Tags: History, Civilization, Progress, Books, Interview

“Every single person that we meet was both the endpoint of thousands of years that brought them there, and the midpoint of some other process, and was the beginning of something else entirely. Think of yourselves as the middle and the beginning, not just the end.” — Patrick Wyman

History, we are often told, is a simple story of progress: from caves and villages to cities, from forests and farms to factories, from chieftains and kings to democracies. But for Patrick Wyman, host of the enormously popular Tides of History and Fall of Rome podcasts, that narrative is far too linear. In his new book, Lost Worlds: How Humans Tried, Failed, Succeeded, and Built Our World, Wyman argues that civilization is not a teleological inevitability but a chaotic ten-thousand-year story of improvisation, experiment, failure, and unintended consequence. It never ends. We are always in the middle of it.

Watch/listen: Keen On

Startup of the Week

Sierra raises $950M as the race to own enterprise AI gets serious

Connie Loizos · TechCrunch · May 4, 2026 · Tags: Startup, Enterprise-AI, Agents, Customer-Experience, Sierra, Bret-Taylor, Funding, SaaS

Sierra is this week’s startup because it shows where enterprise AI is moving fastest: not into novelty chatbots, but into agentic systems that sit between customers and the messy enterprise software stack. Bret Taylor’s company is raising $950 million led by Tiger Global and GV at a valuation above $15 billion, leaving it with more than $1 billion to work with and the mandate to become the standard platform for AI-powered customer experience.

The operating claims are the reason it belongs here. Sierra says it now serves more than 40 percent of the Fortune 50 and that its agents are handling billions of interactions across mortgage refinancing, insurance claims, returns, and nonprofit fundraising. The company went from $100 million ARR in late November to $150 million ARR by February, a pace that explains both the valuation and the anxiety across incumbent enterprise software.

The interesting piece is Ghostwriter, Sierra’s “agent as a service” tool that lets users describe a needed workflow in natural language and have Sierra build and deploy a specialized agent. Taylor’s thesis is that most enterprise software is barely used because humans hate navigating the UI. Sierra is betting the next interface is not another dashboard. It is an agent that talks to the system for you.

Read more: TechCrunch

Post of the Week

Stanford CS336: the 2026 default LLM architecture

Jason Zhu (@GoSailGlobal) · X · May 5, 2026 · Tags: LLMs, Architecture, Training, Inference, GQA, RoPE, QK-Norm, Long-Context

Zhu translates a Stanford CS336 lecture by Tatsu Hashimoto into a useful field guide for anyone trying to understand why frontier and open-source LLMs now look so similar. The post’s headline claim is that roughly 90 percent of mainstream LLM architecture choices have converged. In 2024, everyone was “cosplaying Llama 2.” In 2025, the problem was training without collapse. In 2026, the problem is surviving long context.

The practical template is the value. Modern models mostly use pre-norm residual streams, RMSNorm instead of LayerNorm, no bias terms, SwiGLU or GeGLU activations, RoPE positional encoding, serial transformer blocks, and extra layer norms wherever instability appears. Hyperparameters have converged too: feed-forward width around 2.67× hidden size for GLU models, attention head dimensions that multiply back to hidden size, hidden-dimension-to-layer-count ratios near 100, multilingual vocabularies around 100K-200K tokens, and weight decay kept on not because of classic overfitting but because it changes where optimization converges.
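The hyperparameter rules of thumb compose into a small config generator. Three rules come from the post: the 8/3 feed-forward ratio (about 2.67x), heads times head dimension equal to hidden size, and a width-to-depth ratio near 100. The head dimension of 128 and rounding the feed-forward width up to a multiple of 256 are common conventions assumed here, not part of the lecture summary.

```python
import math

def derive_config(d_model: int, head_dim: int = 128, aspect_ratio: int = 100,
                  multiple_of: int = 256) -> dict:
    """Apply the converged rules of thumb to a chosen hidden size (a sketch, not a recipe)."""
    if d_model % head_dim:
        raise ValueError("d_model must be divisible by head_dim")
    # GLU feed-forward width ~ 8/3 x hidden size, rounded up to a hardware-friendly multiple.
    d_ff = math.ceil(d_model * 8 / 3 / multiple_of) * multiple_of
    return {
        "d_model": d_model,
        "n_heads": d_model // head_dim,             # heads x head_dim == d_model
        "n_layers": round(d_model / aspect_ratio),  # hidden size / layer count ~ 100
        "d_ff": d_ff,
    }

print(derive_config(4096))
```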

The current pieces are stability and inference. Z-loss keeps the output softmax normalizer from exploding. QK norm scales queries and keys before attention so softmax inputs stay controlled. Google-style logit soft caps trade a little performance for safety. Grouped-query attention is effectively standard because it preserves most expressiveness while cutting KV-cache cost. Long-context models are also moving toward alternating local and global attention. The pull is simple: in 2026, copy the boring parts and spend your novelty budget elsewhere.
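Each of these stability and inference tricks is only a few lines. The sketch below uses plain Python lists for clarity. The soft-cap value of 30, the z-loss coefficient of 1e-4, and the omission of QK norm's learnable gains are simplifying assumptions, and the functions are illustrations rather than any framework's API.

```python
import math

def softcap(logits: list, cap: float = 30.0) -> list:
    """Logit soft cap: smoothly squashes values into [-cap, cap] with tanh."""
    return [cap * math.tanh(x / cap) for x in logits]

def z_loss(logits: list, coeff: float = 1e-4) -> float:
    """Auxiliary loss coeff * (log Z)^2 that keeps the softmax normalizer near 1."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))  # stable log-sum-exp
    return coeff * log_z ** 2

def rms_norm(x: list, eps: float = 1e-6) -> list:
    """RMSNorm without a learnable gain: divide by the root-mean-square."""
    scale = 1.0 / math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v * scale for v in x]

def qk_norm_score(q: list, k: list) -> float:
    """Attention logit with QK norm: normalize q and k, then take the scaled dot product."""
    qn, kn = rms_norm(q), rms_norm(k)
    return sum(a * b for a, b in zip(qn, kn)) / math.sqrt(len(q))

def gqa_kv_head(query_head: int, n_q_heads: int, n_kv_heads: int) -> int:
    """Grouped-query attention: consecutive query heads share one key/value head."""
    if n_q_heads % n_kv_heads:
        raise ValueError("n_q_heads must be a multiple of n_kv_heads")
    return query_head // (n_q_heads // n_kv_heads)

print(softcap([5.0, 500.0]))                          # large logits squashed to at most 30
print(z_loss([0.0, 0.0]))                             # 1e-4 * ln(2)^2
print(qk_norm_score([1000.0, 0.0], [1000.0, 0.0]))    # about the same as for [1, 0]
print([gqa_kv_head(h, 32, 8) for h in range(0, 32, 4)])
```

With 32 query heads and 8 KV heads, every group of four query heads reads one shared key/value head. That sharing is where grouped-query attention's KV-cache savings come from.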

Read more: X / GoSailGlobal

A reminder for new readers. Each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I can't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read More’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.