This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software - Transcript and Summary

Editorial: Missing in Action - Real Leadership

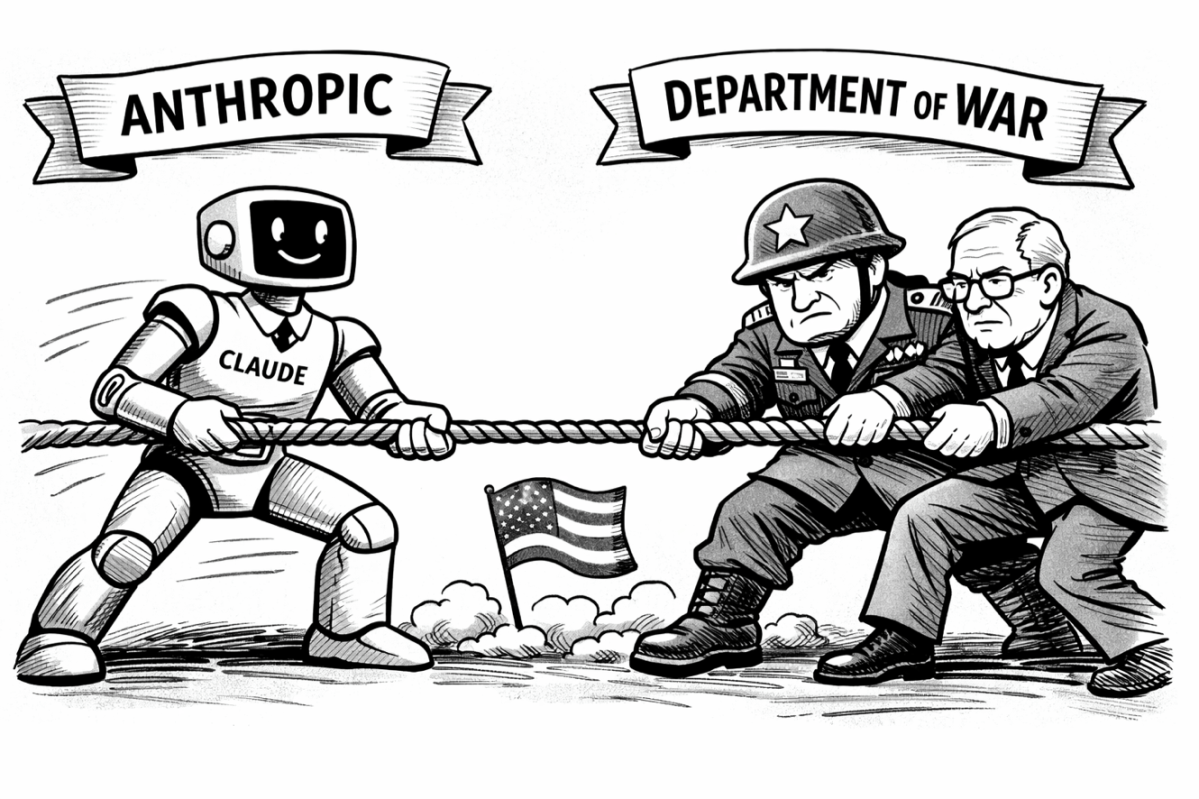

This week, the most important question in technology — who sets AI policy? — got a definitive answer: nobody. Not because the question wasn’t asked. Because every participant who could have provided leadership failed to.

Start with Anthropic. Dario Amodei’s internal memo is a remarkable document. He accuses OpenAI of gaslighting, calls Palantir’s safety offering “almost entirely safety theater,” and claims the Pentagon specifically wanted Claude to analyze bulk commercial data on Americans. Some of these claims may be true. But read the memo carefully and something more troubling emerges: Amodei treats the absence of law as an invitation to fill in the blanks himself.

Sam [Altman]’s description and the DoW description give the strong impression (although we would have to see the actual contract to be certain) that how their contract works is that the model is made available without any legal restrictions (“all lawful use”) but that there is a “safety layer”, which I think amounts to model refusals, that prevents the model from completing certain tasks or engaging in certain applications. (The Information)

Conflating “all lawful use” with “without any legal restrictions” is the nub of it. All lawful use is, by definition, restricted to what is lawful, so it is clearly restricted. He then goes on to set out what he considers the additional legal restrictions should be, even showing concern that Hegseth has any authority outside of Anthropic’s control:

On autonomous weapons, the DoW claims that “human in the loop is the law”, but they are incorrect. It is currently Pentagon policy (set during the Biden administration) that a human has to be in the loop of firing a weapon. But that policy can be changed unilaterally by Pete Hegseth, which is exactly what we are worried about. So it is not, for all intents and purposes, a real constraint. (The Information)

He is “worried about” Hegseth having the authority to change operational rules, even though, like him or not, Hegseth runs the Department of War. He would prefer that Anthropic have an override on that.

There is no statute governing military AI surveillance. His response is not to advocate for one — it’s to impose his own restrictions through a vendor contract and present that as principle. As Ben Thompson argues, if you build an independent power structure that rivals the state’s, the state will destroy it. As Dean Ball writes — and Ball is a former Trump AI policy advisor criticizing his own side — operational restrictions in defense contracts are routine, but Anthropic crossed from technical constraints into policy-making. That’s not democracy. It’s a CEO deciding what the military can and cannot do, based on his personal beliefs about risk, and calling it ethics. The fact that his beliefs may be correct doesn’t make the method democratic.

After the memo leaked, Amodei apologized for its tone, specifically for calling OpenAI employees 'gullible' and its supporters 'Twitter morons.' But he called it an 'out-of-date assessment,' not a retraction, and Anthropic simultaneously announced it would sue the Pentagon over the supply chain designation. It was an apology for the language, not the intent. And the intent was to write an ideological set of beliefs into a vendor contract.

Ironically, the US did actually use Claude in the Iran operation despite the conflict with Anthropic.

Sam Altman played it differently. He publicly supported Amodei — while, per the NYT timeline, already negotiating the deal that would replace Anthropic the moment they walked away. He announced red lines written into a binding contract: no mass surveillance, human responsibility for lethal force. He invited the Pentagon to offer the same terms to all AI companies. On the surface, pragmatic and constructive.

Those red lines amount to “any lawful use” — compliance with the same legal frameworks that Amodei refused. So Altman’s bad behavior was not the same as Amodei’s.

Altman was not undemocratic. He was something arguably worse: performatively democratic while being strategically opportunistic. He won the contract. He did not win hearts and minds. Short-term gain, long-term credibility cost, because the next time OpenAI announces a principled position, everyone will remember this week. The narrative from OpenAI was about the contract, not about policy. In that sense it was the same as Amodei's: it abandoned a moment of leadership in which government could have been held accountable for future policy. Amodei cared about that but seriously misplayed his hand. Altman seemingly did not care. Both abstained from true leadership.

Amodei seems to be ideologically driven... Altman seems to be mainly commercially driven... they're both naughty boys in the playground, leveraging the absence of clarity to their own advantage. Neither one of them is an authoritative leader of opinion with the interests of everyone at heart.

Then there’s Pete Hegseth. The constitutional argument made by Palmer Luckey (and by me last week), that elected officials, not corporate executives, should control military AI, has genuine force. Hegseth has the stronger institutional claim. But what is he actually doing with it? He wants to use AI for surveillance and potentially ill-judged military applications. He may have the law on his side, but he has no vision for what AI policy should be.

Hegseth is probably the least culpable of the three, because he is just doing his job (whether you agree with it or not).

The supply-chain risk designation — tweeted, likely beyond his legal authority, exceeding what even Trump intended according to Zvi Mowshowitz’s sources — was an act of political retaliation, not governance. Ball’s verdict is devastating: the rational response was to cancel the contract and issue new procurement guidance. Instead, Hegseth went for what Ball calls “corporate murder.” The broader administration stance is hands-off — mostly defensible in the early days of a technology — but there is no endgame. No framework. No long-term strategy. Just power exercised through tweets and PAC donations.

Om Malik’s essay this week (post of the week) draws the contrast that makes the absence of American leadership most visible. China has an AI policy. Beijing’s 15th Five-Year Plan commits to AI dominance across the entire economy — chips, quantum, humanoid robots, open-source AI as a deliberate competitive weapon.

America’s response: a pinky promise from seven CEOs not to raise your electricity bill. The question Om poses isn’t about authoritarianism versus democracy. It’s simpler: where is America’s long-term AI strategy? Who trains the talent? Where does compute go? How does AI get woven into manufacturing, healthcare, logistics? Right now the answer is: let the companies figure it out and hope voters don’t notice. The government is a buyer with no policy for leading the future.

Apple this week announced a new MacBook Pro with 128GB of memory. That is more than enough to run a very powerful AI model on your laptop. So you can run your own agent on a local AI model, without any reliance on either major companies or government. That is the core of what China has recognized, and it is driven by 128GB unified-memory chipsets. Intel and AMD now have equivalents to Apple’s as well.
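
As a rough illustration of why 128GB matters for local models, the sketch below estimates the memory needed to hold a model's weights. It is a back-of-envelope calculation, not a benchmark: the 20% overhead allowance, the function names, and the 70-billion-parameter example are all assumptions chosen for illustration, and real usage also depends on context length and the runtime.

```python
def model_memory_gb(params_billions: float, bits_per_weight: int,
                    overhead: float = 0.2) -> float:
    """Approximate GB needed for model weights plus a flat overhead allowance.

    overhead=0.2 is an assumed allowance for caches and runtime buffers,
    not a measured figure.
    """
    bytes_per_weight = bits_per_weight / 8
    # 1e9 parameters * bytes per weight / 1e9 bytes per GB
    weights_gb = params_billions * bytes_per_weight
    return weights_gb * (1 + overhead)


def fits_in_memory(params_billions: float, bits_per_weight: int,
                   ram_gb: float = 128.0) -> bool:
    """True if the estimated footprint fits in the given unified memory."""
    return model_memory_gb(params_billions, bits_per_weight) <= ram_gb


if __name__ == "__main__":
    # Hypothetical 70-billion-parameter model at two precisions.
    for bits in (16, 4):
        need = model_memory_gb(70, bits)
        print(f"70B at {bits}-bit: ~{need:.0f} GB, fits in 128 GB: "
              f"{fits_in_memory(70, bits)}")
```

Under these assumptions, the same hypothetical model that overflows 128GB at 16-bit precision fits comfortably once quantized to 4 bits, which is the practical reason large unified memory changes what can run on a laptop.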

And then Benn Stancil reminds us what everyone is actually fighting over. Surveillance is not ‘Minority Report’ — it is a SQL query. The data is already collected. Every click, every movement, every transaction sits in a table somewhere, protected not by encryption but by the tedium of writing 595-line queries. AI doesn’t create surveillance capability; it removes the annoyance barrier that was the only thing standing between a database and a surveillance state. The capability exists today regardless of who wins the contract.

Max Tegmark delivers the broadest indictment: every major AI company lobbied against binding regulation while promising to self-regulate. Self-regulation is the right starting point for any technology. But self-regulation is not the same as no leadership.

Regulation = stopping things, Policy = enabling things

David Sacks has the opportunity with AI to do what he did with stablecoins and crypto — set a forward-looking set of goals and execute a roadmap.

There's a difference between regulating something to stop or slow it and setting policy to enable something. Regulating is generally how to stop bad things. Policy is about how to allow good things to happen. Sacks probably has the power to begin to do that. But he hasn't. David Sacks is asleep at the wheel on AI compared to China. Time to wake up?

Leadership means planning, enabling, and building, not just buying and reacting. America has less leadership on AI systems than on sandwiches — which are highly regulated. Congress is entirely absent from this story — the one institution with the democratic legitimacy to set AI policy chose not to act while the technology outran every existing set of rules.

No good guys. Anthropic was ideological where it needed to be democratic. Altman was pragmatic where he needed to be honest. Hegseth was powerful where he needed to be strategic. Congress was silent where it needed to be present. No one led. And while they fought over who controls the technology, Iranian drones hit the data centers where it all runs, and three companies consumed 88% of all venture capital on earth.

The question of who sets AI policy deserves a serious answer. Real-time democratic mechanisms are the right long-term direction, but society is not close to using technology to achieve them. This week proved that nobody currently in the room is capable of providing that answer.

Contents

Essays (5)

Venture (8)

AI (8)

Regulation (6)

Interview of the Week - Was Henry Kissinger Evil? What His Secret Phone Calls Reveal

Startup of the Week - How Jobright Hit $5M ARR With 9 People and a System of In-House Agents

Post of the Week - The Great (ai) Game vs AI Theater

Essays

Danielle Allen on Why Technocratic Liberalism Failed

Author: Yascha Mounk

Date: 2026-02-28

Publication: Yascha Mounk

Danielle Allen argues that the old technocratic consensus is exhausted and that legitimacy now depends on rebuilding institutions around shared agency, not expert insulation. The interview frames liberal democracy as a participation problem as much as a policy problem.

The thesis here is that this debate is fundamentally about institutional design, not just ideology or personality. The argument leans on how authority is allocated when technical systems move faster than political consensus.

For TWTW, this matters because AI governance fights are increasingly a proxy for who holds power when systems scale faster than trust. It is a useful political counterpart to this week’s economic debates about verification and institutional capacity.

The evidence points to a practical conclusion: legitimacy and performance now depend on building durable mechanisms for accountability and participation. The piece therefore reads as a governance brief for an AI-era policy environment, not simply a one-week opinion cycle.

Read more: Danielle Allen on Why Technocratic Liberalism Failed

If A.I. Is a Weapon, Who Should Control It?

Author: Ross Douthat

Date: 2026-02-28

Publication: NYT Opinion

The column puts the current U.S. AI confrontation in constitutional terms: if frontier models become strategic infrastructure, delegating authority entirely to either firms or the state is unstable. That tension is no longer theoretical after this week’s Pentagon-Anthropic escalation.

The piece is strongest as a framing essay rather than a policy blueprint. It sharpens the core question the sector now has to answer: what governance model preserves both security and civil liberty when model capability keeps compounding?

Read more: If A.I. Is a Weapon, Who Should Control It?

The Economics of Technological Change

Author: Paul Krugman

Date: 2026-03-01

Publication: Substack

Krugman separates the AI labor debate into three questions that often get conflated: total jobs, wage distribution, and market concentration. The point is that history repeatedly avoids permanent mass unemployment while still producing painful transition periods where workers lose bargaining power.

That framing is useful this week because it links AI execution gains to political economy, not just product velocity. Even if output rises, the timing and distribution of gains remain the core policy and market risk.

Read more: The Economics of Technological Change

Anthropic and Alignment

Author: Ben Thompson

Date: 2026-03-02

Publication: Stratechery

Thompson frames the Anthropic-DoW break as a structural alignment problem: private model providers can assert principles, but national-security procurement still defaults to state authority when constraints are not codified in law. The analysis connects governance posture to institutional reality instead of treating this as a one-company ethics dispute.

For TWTW, this deepens the week’s central argument from policy signaling to power allocation. It helps explain why negotiated constraints, enforceable oversight, and procurement design now matter as much as model capability.

Read more: Anthropic and Alignment

How to Bet Against the Bitter Lesson

Author: Tim O’Reilly

Date: 2026-03-02

Publication: O’Reilly Radar

O’Reilly argues that scaling laws and cheaper inference make pure model capability a weak moat, so the durable edge shifts to institutions that encode judgment, trust, and domain accountability. The piece pushes against deterministic narratives that “bigger models win everything.”

For TWTW, this is a useful bridge between this week’s labor and governance debates. If execution is commoditizing, then verification systems, workflow design, and social trust become the assets that compound.

Read more: How to Bet Against the Bitter Lesson

Venture

Dear SaaStr: Should I Take On Venture Debt?

Author: Jason Lemkin

Date: 2026-02-28

Publication: SaaStr

At $4M ARR, venture debt can look like clean, non-dilutive runway. Lemkin’s argument is that founders routinely underestimate covenant risk and the operational drag that comes with debt once growth decelerates.

The central argument is operational: capital structure, financing terms, and market plumbing now matter as much as product narrative. Instead of treating this as abstract finance, the piece frames decisions as path-dependent choices that shape founder and investor outcomes over multiple rounds.

The practical takeaway is to treat debt as a timing tool, not free capital. In the current environment, that distinction is central for companies trying to buy time for AI-driven productivity gains without losing strategic flexibility.

What matters most is the implementation detail behind the headline numbers. The conclusion is that venture returns in this cycle will be driven by discipline in structure, timing, and liquidity strategy rather than valuation optics alone.

Read more: Dear SaaStr: Should I Take On Venture Debt?

15 Angel Investments and All Failures

Author: David Cummings

Date: 2026-02-28

Publication: David Cummings on Startups

A blunt field report on early angel investing: a portfolio can be active and still produce no realized wins if check sizing, access, and follow-on discipline are weak. Cummings emphasizes that pattern recognition develops slowly and that early losses are usually structural, not bad luck.

For venture readers, this is a useful counterweight to performative “angel alpha” narratives. It captures the mechanics of failure at small scale, where decision quality and portfolio construction matter more than story flow.

Read more: 15 Angel Investments and All Failures

RVI IPO vs VCX Direct Listing: The First PVC Head-to-Head

Author: Keith Teare

Date: 2026-02-28

Publication: YouTube

Two public venture products are reaching the market with opposite structures: an underwritten IPO with upfront load versus a direct listing with no new shares and no underwriting spread. The structure difference creates very different starting conditions for retail investors.

From the transcript-level discussion, the comparison hinges on listing mechanics rather than branding: underwriting fees, load structure, share creation, and opening-price discovery each change investor starting conditions. The video argues that these mechanics will influence volatility, effective entry price, and who captures early upside.

This is a timely venture-market signal because pricing mechanics, not brand narratives, will determine near-term investor outcomes. The first 90-day trading behavior will likely set the tone for future permanent-vehicle launches.

As evidence, the episode pairs the RVI-versus-VCX setup with parallel valuation context from Stripe and Cerebras to show how structure and pricing interact across private and public markets. The conclusion is that early performance should be interpreted through vehicle design and liquidity behavior, not just headline demand.

Read more: RVI IPO vs VCX Direct Listing: The First PVC Head-to-Head

The Great Myth of the Liquidation Preference: Yes, It Matters. But Not In Many Scenarios.

Author: Jason Lemkin

Date: 2026-03-01

Publication: SaaStr

Lemkin’s core point is practical: liquidation preferences are real and can change outcomes, but founder economics are usually dominated by ownership dilution, execution quality, and exit size. The mechanics only become fatal in specific downside cases, not as a universal rule.

This is useful for this week’s venture lens because it replaces social-media term-sheet fear with scenario-based underwriting. It is a better operating framework for founders deciding whether to optimize for valuation optics or resilient cap-table structure.
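
To make the scenario-based view concrete, here is a minimal sketch of how a liquidation preference plays out at different exit sizes. It assumes a single investor holding non-participating preferred stock, who takes the greater of the preference or their pro-rata share on conversion. The function name and all numbers ($10M invested for 20%) are hypothetical and are not taken from Lemkin's piece.

```python
def exit_payouts(exit_value: float, invested: float,
                 investor_ownership: float, pref_multiple: float = 1.0):
    """Split exit proceeds between one preferred investor and everyone else.

    Assumes non-participating preferred: the investor takes either the
    preference (capped at the exit value) or the as-converted share,
    whichever is larger.
    """
    preference = min(exit_value, invested * pref_multiple)
    as_converted = exit_value * investor_ownership
    investor = max(preference, as_converted)
    return investor, exit_value - investor


if __name__ == "__main__":
    invested, ownership = 10_000_000, 0.20  # hypothetical round
    for exit_value in (5_000_000, 20_000_000, 50_000_000, 100_000_000):
        investor, common = exit_payouts(exit_value, invested, ownership)
        print(f"exit ${exit_value / 1e6:>5.0f}M: investor ${investor / 1e6:.0f}M, "
              f"founders and others ${common / 1e6:.0f}M")
```

In this hypothetical, the preference determines the split only when the exit is below $50M, the point at which 20% of the proceeds equals the $10M invested. Above that, ownership percentage alone drives the outcome, which matches the argument that preferences bite in specific downside cases and not universally.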

Read more: The Great Myth of the Liquidation Preference

Conviction, Consolidation, and Smart Capital

Author: EUVC

Date: 2026-03-02

Publication: EUVC | The European VC

The piece argues that capital structure is now the differentiator in Europe: LP pressure is driving consolidation among managers, and capital that actively helps distribution, hiring, and governance is outperforming passive checks. In this framing, “smart capital” is not branding but service depth under tighter liquidity cycles.

For TWTW, the relevance is strategic. As venture returns compress, manager selection shifts from narrative quality to how well funds shape company outcomes between rounds, which is the same execution-over-story pattern showing up across AI markets.

Read more: Conviction, Consolidation, and Smart Capital

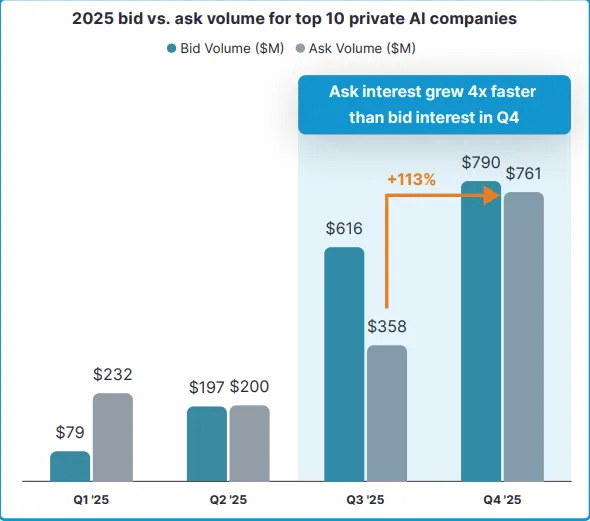

Massive AI Deals Drive $189B Startup Funding Record In February While Public Software Stocks Reel

Author: Gené Teare

Date: 2026-03-03

Publication: Crunchbase News

Crunchbase reports a record $189B in global venture funding in February, with the majority concentrated in three AI leaders. The core signal is not broad market recovery but extreme capital concentration around perceived frontier winners.

This matters for weekly curation because it sharpens the divergence between private AI mega-round momentum and public software repricing. The capital cycle now rewards scale narratives while punishing incumbents exposed to AI margin compression.

Read more: Massive AI Deals Drive $189B Startup Funding Record In February While Public Software Stocks Reel

Anduril Aims at $60 Billion Valuation in New Funding Round

Author: TechCrunch / WSJ

Date: 2026-03-03

Publication: TechCrunch

Anduril is reportedly targeting a $60B valuation in a new financing led by Thrive and a16z, roughly doubling from its prior valuation cycle. The round is a signal that defense-tech is absorbing a larger share of late-stage growth capital as procurement urgency and geopolitical risk rise in parallel.

For TWTW, this is a useful market read-through on where institutional conviction is moving. In the current week’s policy environment, companies aligned to defense deployment are seeing both demand pull and valuation support.

Read more: Anduril Aims at $60 Billion Valuation in New Funding Round

Secondaries, Deeper, Stronger, Liquider

Author: Moses Sternstein

Date: 2026-03-04

Publication: The Random Walk

Sternstein argues the private secondary market has become a core liquidity channel rather than a side market, with tender activity and transaction quality both improving in 2025. The key shift is that demand is concentrating in top private names, so secondaries are increasingly used for position management and employee liquidity, not distress exits.

This matters for venture-market structure because it extends private-company duration without forcing premature IPOs. As secondaries deepen, capital formation and liquidity can stay inside the private ecosystem longer.

Read more: Secondaries, Deeper, Stronger, Liquider

AI

SaaS In, SaaS Out: Here’s What’s Driving the SaaSpocalypse

Author: TechCrunch

Date: 2026-03-01

Publication: TechCrunch

The article argues that coding agents are changing software economics fast enough to pressure legacy per-seat SaaS models. Buyers now have a more credible build option, and that threat is already showing up in renewal negotiations and sector repricing.

The key claim is that AI competition is shifting from model novelty toward distribution, workflow integration, and defensible economics. The argument treats pricing power and real adoption behavior as stronger signals than benchmark theater.

For TWTW, the relevance is strategic: this is less about one company winning and more about pricing architecture changing under incumbent software. Outcome-based and usage-based models now look like survival mechanics, not optional experimentation.

The supporting examples suggest that winners will be defined by execution in production environments, not just technical demos. The conclusion is that business-model design and operational reliability are now first-order strategic variables.

Read more: SaaS In, SaaS Out: Here’s What’s Driving the SaaSpocalypse

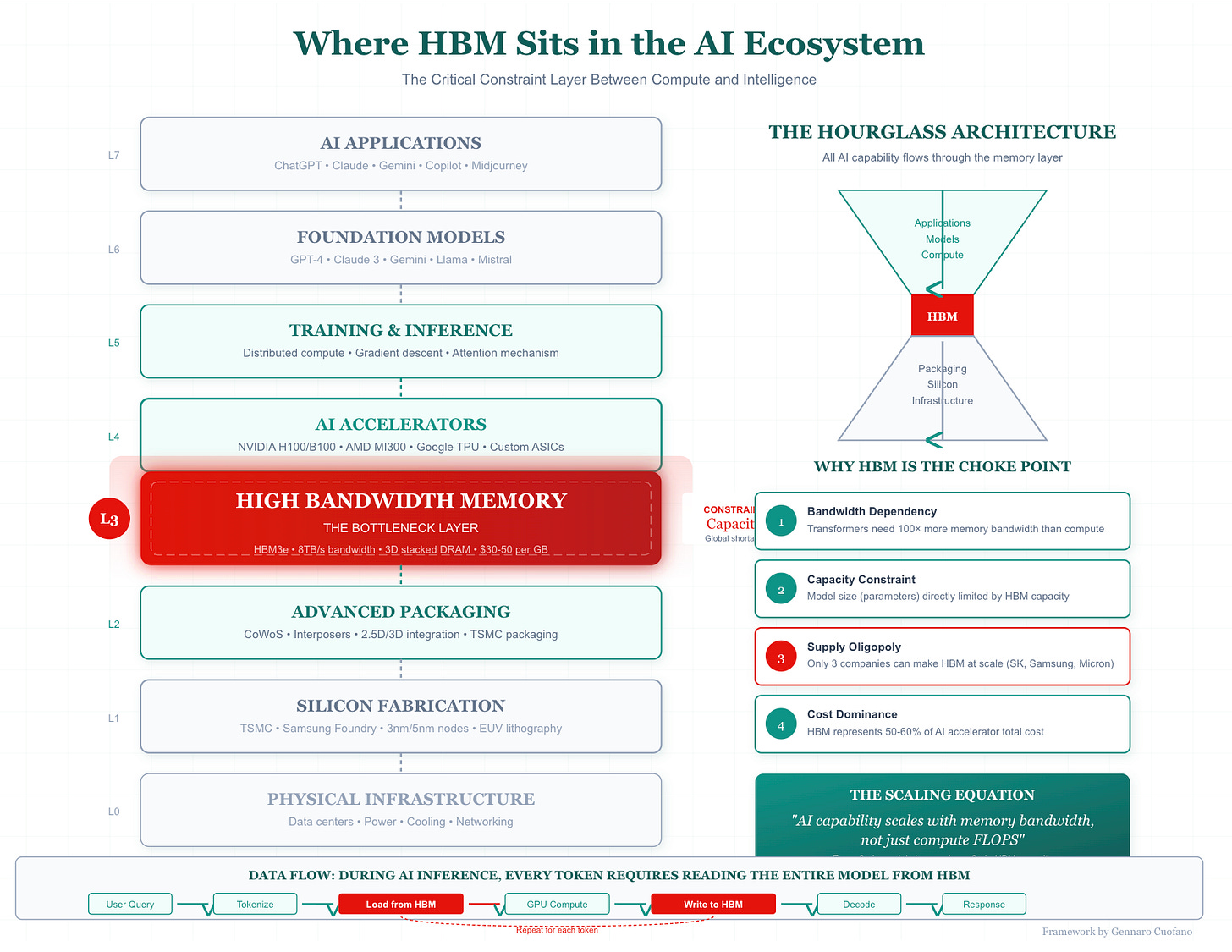

The AI Memory Chokepoint

Author: Gennaro Cuofano

Date: 2026-02-28

Publication: FourWeekMBA

This analysis shifts focus from GPUs to memory architecture, arguing that high-bandwidth memory and data-movement limits are now the practical bottleneck for scaling frontier systems. If true, near-term AI competition depends less on model design narratives and more on who can secure and integrate scarce memory supply.

That matters for TWTW because it links geopolitical chip policy, capex strategy, and product velocity in one constraint stack. It is a helpful complement to this week’s governance coverage by showing the physical infrastructure limits behind deployment claims.

Read more: The AI Memory Chokepoint

Cursor Surpasses $2B in Annualized Revenue

Author: TechCrunch / Bloomberg

Date: 2026-03-02

Publication: TechCrunch

Cursor reportedly doubled annualized revenue to $2B in roughly three months, with enterprise demand accounting for most of the acceleration. The trajectory suggests AI coding adoption is consolidating around tools that can clear procurement and workflow integration at scale.

For TWTW readers, this is a concrete datapoint for the “agentic software” thesis. Revenue velocity is now outpacing historical SaaS patterns and forcing incumbents to re-price both product positioning and go-to-market assumptions.

Read more: Cursor Surpasses $2B in Annualized Revenue

Would You Buy Generic AI?

Author: Tomasz Tunguz

Date: 2026-03-01

Publication: Tomasz Tunguz

Tunguz frames an emerging “generic AI” phase where comparable model performance is available at dramatically lower token prices. His thesis is that price compression is arriving faster than vendor moats can harden.

The strategic implication is margin pressure across the model layer and rising value capture in distribution, integration, and proprietary data. That perspective complements this week’s focus on commoditization risk and business-model reset in software.

Read more: Would You Buy Generic AI?

Not Prompts, Blueprints

Author: Tomasz Tunguz

Date: 2026-03-04

Publication: Tomasz Tunguz

Tunguz’s thesis is that effective AI execution now depends more on pre-structured workflows than iterative prompting. Teams that sketch decision branches and handoffs first can let agents run longer without constant operator intervention, which changes productivity from bursty assistance to repeatable throughput.

For this week’s theme, it reinforces the shift from raw model capability to operating design. The scarce skill is increasingly workflow architecture and verification, not one-off prompt craft.

Read more: Not Prompts, Blueprints

The AI industry’s civil war

Author: Eric Levitz

Date: 2026-03-04

Publication: Vox

Levitz maps the current U.S. AI fight as a conflict between accelerationist and institutionalist camps, with national-security policy acting as the forcing function. Instead of a generic “AI ethics” debate, the piece frames a concrete power struggle over who governs deployment in practice: firms, agencies, or courts.

This is useful editorial context because it helps connect this week’s company-level events to a broader political realignment. The dispute is less about one contract and more about the governing model for frontier systems.

Read more: The AI industry’s civil war

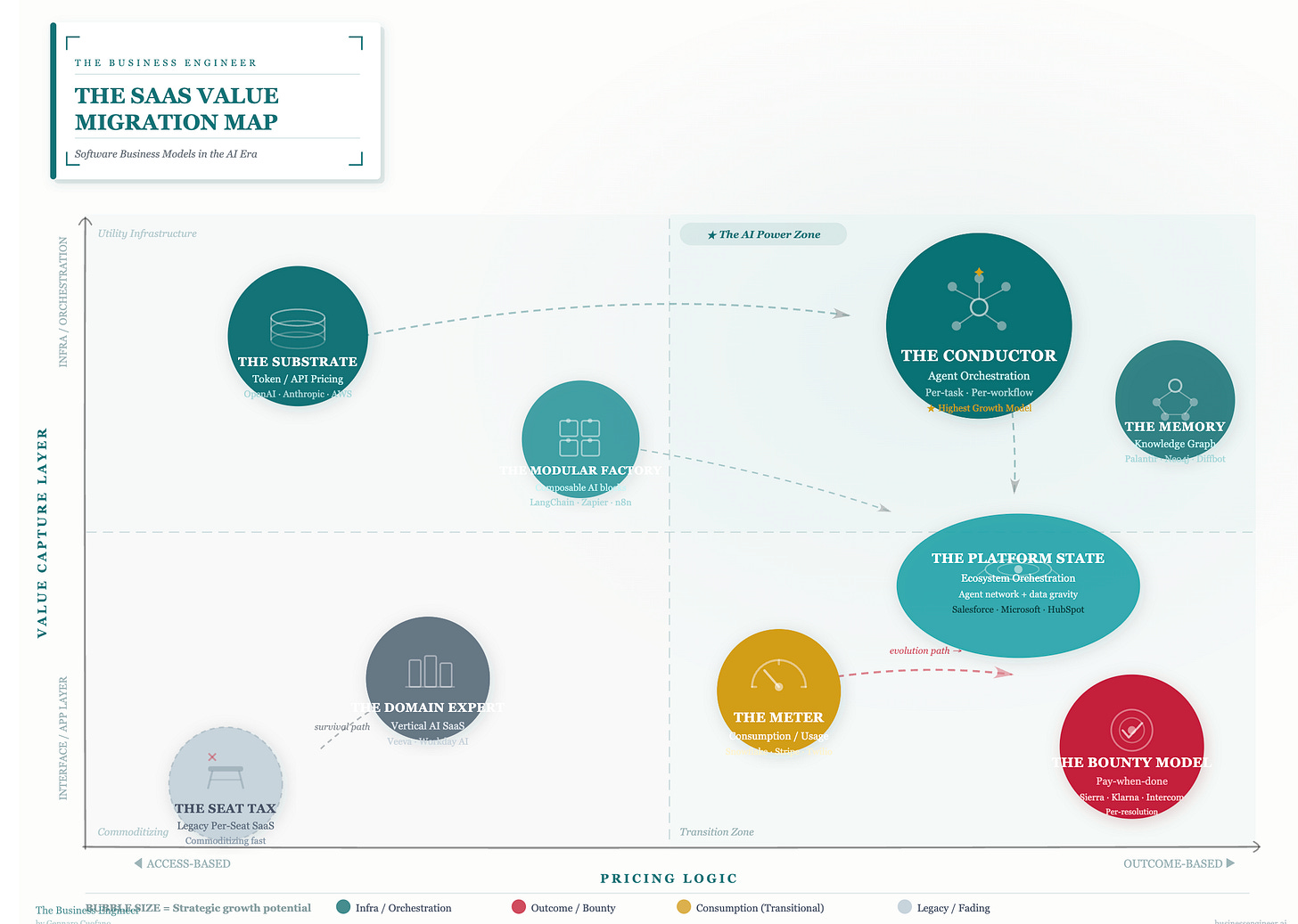

The Nine Agentic Business Models Replacing Traditional SaaS

Author: Gennaro Cuofano

Date: 2026-03-05

Publication: FourWeekMBA

Cuofano maps nine recurring monetization patterns in AI-native software, from tokenized API layers to task-level outcome pricing and embedded agent orchestration. The key argument is that value is migrating from seat licenses to execution throughput and verifiable business outcomes.

For TWTW, the relevance is strategic rather than tactical. If this framing holds, the next leg of software repricing is not just cheaper model access but a structural rebundling of where margins live.

Read more: The Nine Agentic Business Models Replacing Traditional SaaS

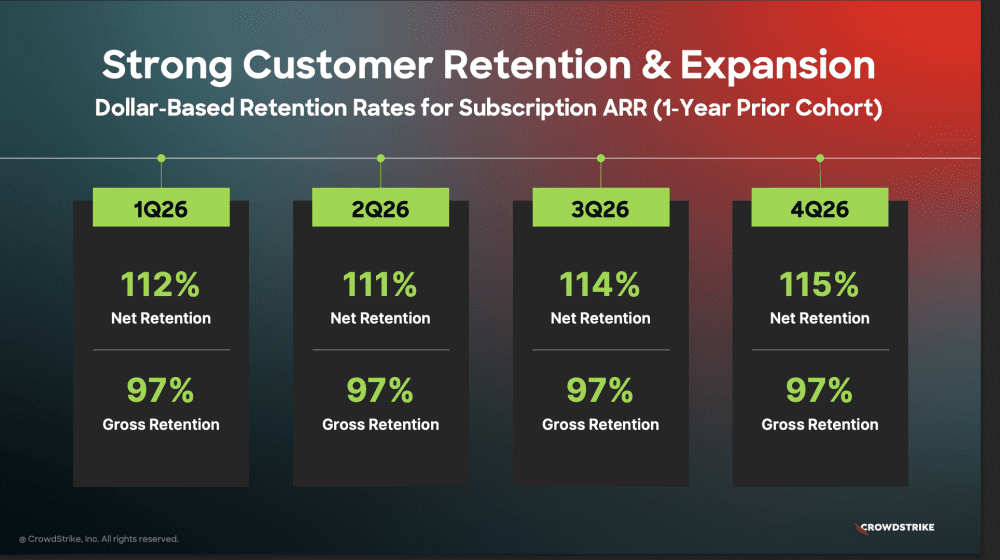

CrowdStrike: Best Quarter Ever at $5.25B ARR — So Much for AI Disrupting Cybersecurity

Author: Jason Lemkin

Date: 2026-03-05

Publication: SaaStr

Lemkin highlights CrowdStrike’s record quarter as a useful counterexample to broad “SaaS extinction” narratives. Revenue quality, free cash flow, and expansion metrics remained strong even as AI disruption fears drove sector-wide repricing.

The deeper signal is expectations, not performance. Incumbents can execute exceptionally and still be punished if they do not show clear AI-linked acceleration, which resets the standard for every public software company.

Read more: CrowdStrike: Best Quarter Ever at $5.25B ARR — So Much for AI Disrupting Cybersecurity

Regulation

OpenAI Reaches Agreement with the Department of War

Author: OpenAI

Date: 2026-02-28

Publication: OpenAI

OpenAI says its defense agreement preserves two explicit boundaries: no domestic mass surveillance and human responsibility for use-of-force decisions. The company also states it opposed Anthropic’s supply-chain-risk designation.

The governance implication is material. This frames safety constraints as contractual and legal commitments inside government procurement, rather than unilateral vendor terms that can trigger immediate exclusion.

Read more: OpenAI Reaches Agreement with the Department of War

The Trap Anthropic Built for Itself

Author: TechCrunch (interview with Max Tegmark)

Date: 2026-02-28

Publication: TechCrunch

Tegmark’s argument is that companies cannot rely on voluntary commitments while also resisting binding regulation. Without legal guardrails, the government can still demand high-risk deployment terms when national-security pressure rises.

The piece argues that the governance fight has moved from abstract safety principles to concrete state-market power arrangements. It focuses on who sets constraints, who enforces them, and what legal basis can survive institutional stress.

This is a useful counterweight to company-vs-company framing. It pushes the debate toward institution design: who sets enforceable limits, and where those limits live when incentives diverge.

The evidence supports a broader conclusion that policy architecture is now part of product risk, procurement risk, and reputational risk at once. In practice, firms and governments are converging on negotiated rules that will likely define the next phase of deployment.

Read more: The Trap Anthropic Built for Itself

How OpenAI Caved to the Pentagon on AI Surveillance

Author: The Verge

Date: 2026-03-02

Publication: The Verge

This report presents the strongest counter-reading of OpenAI’s public framing, arguing that contractual language may rely on broad “lawful use” standards rather than narrow operational constraints. It highlights how legal frameworks can appear restrictive while still permitting expansive surveillance practice in implementation.

For this week’s curation, it is important editorial context because it pressure-tests the negotiated-guardrails narrative. The distinction between public principles and contract enforceability is now central to judging whether AI safety commitments are real or rhetorical.

Read more: How OpenAI Caved to the Pentagon on AI Surveillance

The Pentagon’s Anthropic Designation Won’t Survive First Contact With the Legal System

Author: Lawfare

Date: 2026-03-03

Publication: Lawfare

Lawfare argues the Pentagon’s “supply chain risk” designation for Anthropic likely exceeds statutory authority and is vulnerable to judicial reversal. The article shifts the debate from political narrative to administrative-law constraints and litigation risk.

This is an important addition for editorial context because it tests whether executive pressure can bypass process when AI policy disputes escalate. The legal durability of these actions now directly affects procurement strategy and model-provider leverage.

Read more: The Pentagon’s Anthropic Designation Won’t Survive First Contact With the Legal System

The Pentagon-Anthropic feud is quietly obscuring the real fight over military AI

Author: Caroline Orr Bueno

Date: 2026-03-05

Publication: Fast Company

This essay argues the headline conflict between Anthropic and the Pentagon is masking a broader governance contest over procurement authority, contractor leverage, and acceptable constraints on military AI use. It reframes the story from one company dispute to an institutional control problem.

For this week’s curation, it is valuable because it connects legal, commercial, and national-security incentives in one frame. That makes it a stronger editorial input than incremental contract-coverage clips.

Read more: The Pentagon-Anthropic feud is quietly obscuring the real fight over military AI

China vows to accelerate technological self-reliance with new AI+ action plan

Author: Reuters

Date: 2026-03-05

Publication: Reuters

China’s latest five-year policy blueprint places AI at the center of industrial strategy, pairing broad “AI+” deployment language with commitments across quantum, 6G, and embodied systems. The policy signal is coordinated state support for frontier capability, not isolated sector stimulus.

This matters for TWTW because it widens the frame beyond U.S. procurement disputes. Strategic competition now hinges on how fast states can align industrial policy, capital, and deployment infrastructure around AI.

Read more: China vows to accelerate technological self-reliance with new AI+ action plan

Interview of the Week

Was Henry Kissinger Evil? What His Secret Phone Calls Reveal

Author: Andrew Keen

Date: 2026-02-28

Publication: Keen On

Andrew Keen interviews historian Tom Wells about The Kissinger Tapes, built from Kissinger’s secretly recorded calls. Wells came to the material as a critic and found political skill and stamina, but also a deeper-than-expected indifference to human cost, especially around Vietnam and Cambodia.

The interview’s deeper argument is that strategic choices are inseparable from the historical and moral frames leaders bring to moments of crisis. Rather than offering simple verdicts, it traces how private reasoning and institutional context interact over time.

It is a useful interview pick for this week because the moral question is not historical trivia. In a week dominated by debates over military AI, surveillance, and responsibility at scale, the conversation sharpens what it means to own decisions made through bureaucratic systems.

Taken together, the discussion suggests that evaluating leadership requires both documentary evidence and careful interpretation of constraints. The conclusion is less about hero or villain labels and more about how power is exercised under uncertainty.

Read more: Was Henry Kissinger Evil? What His Secret Phone Calls Reveal

Startup of the Week

How Jobright Hit $5M ARR With 9 People and a System of In-House Agents

Author: Henry Shi

Date: 2026-02-28

Publication: Henry’s Best Hits

Jobright is an AI-native recruiting product that reportedly reached roughly $5M ARR with a team of nine. The operating detail that stands out is not just headcount efficiency but discipline: channels are measured by cohort quality, experiments are killed quickly, and agents are used as part of the company design rather than as bolt-on labor replacement.

The core argument is that small teams can now reach scale by redesigning internal workflows around agents instead of adding headcount linearly. It emphasizes process architecture, role clarity, and system-level iteration as the real growth engine.

That makes it a strong startup pick for this issue. It is a concrete example of what AI-native operating leverage looks like when paired with customer discovery and execution rigor, not just a layoff narrative.

The evidence indicates that sustainable efficiency comes from compounding operational leverage rather than one-off automation wins. The conclusion is that execution systems, not org size, are becoming the primary determinant of startup velocity.

Read more: How Jobright Hit $5M ARR With 9 People and a System of In-House Agents

Post of the Week

The Great (ai) Game vs AI Theater

Author: Om Malik

Date: 2026-03-05

Publication: Om.co

“The game is so large that one sees but a little at a time.”

To understand AI, its stakes and its long-term impact, you have to step away from the cacophony of headlines. And instead take the time to think of it as the Great Game.

The Great Game was the 19th century strategic rivalry between the British Empire and Russia over Central Asia. Subsequent versions of this have played out over control of oil, for example. Then there was the Cold War, arguably the greatest game, with global nuclear annihilation at stake.

The game changes. The playbook doesn’t.

Neither side wanted direct war. Both wanted dominance. So they competed through proxies, influence, positioning, and long-horizon maneuvering. It was about who controlled the board, not just who won the battle.

Great Game is how we describe any era-defining geopolitical competition where the stakes are civilizational, the timeline is generational, and the weapons are economic and technological as much as military.

AI is the new Great Game.

Why I wrote this piece:

Trump meets tech giants on energy pledge ahead of midterms: Seven tech giants signed a White House pledge to cover data center energy costs and not pass them to consumers. Voluntary, unenforceable, and timed for the midterms. (Reuters)

China vows to accelerate technological self-reliance in AI push: Beijing’s 15th Five-Year Plan commits China to AI dominance across its economy, with targeted investment in chips, quantum computing, humanoid robots, and open-source AI as a deliberate national strategy. Oh, they got the game. (Reuters)

The Pro-Human AI Declaration: A broad coalition including those with no clue about AI calling for human control, accountability, and limits on AI concentration of power. AI theater at its best. (Human AI)

A reminder for new readers: each week, That Was The Week includes a collection of selected essays on critical issues in tech, startups, and venture capital.

I choose the articles based on their interest to me. The selections often include viewpoints I don't entirely agree with. I include them if they make me think or add to my knowledge. Click on the headline, the contents section link, or the ‘Read more’ link at the bottom of each piece to go to the original.

I express my point of view in the editorial and the weekly video.