This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software - Transcript and Summary

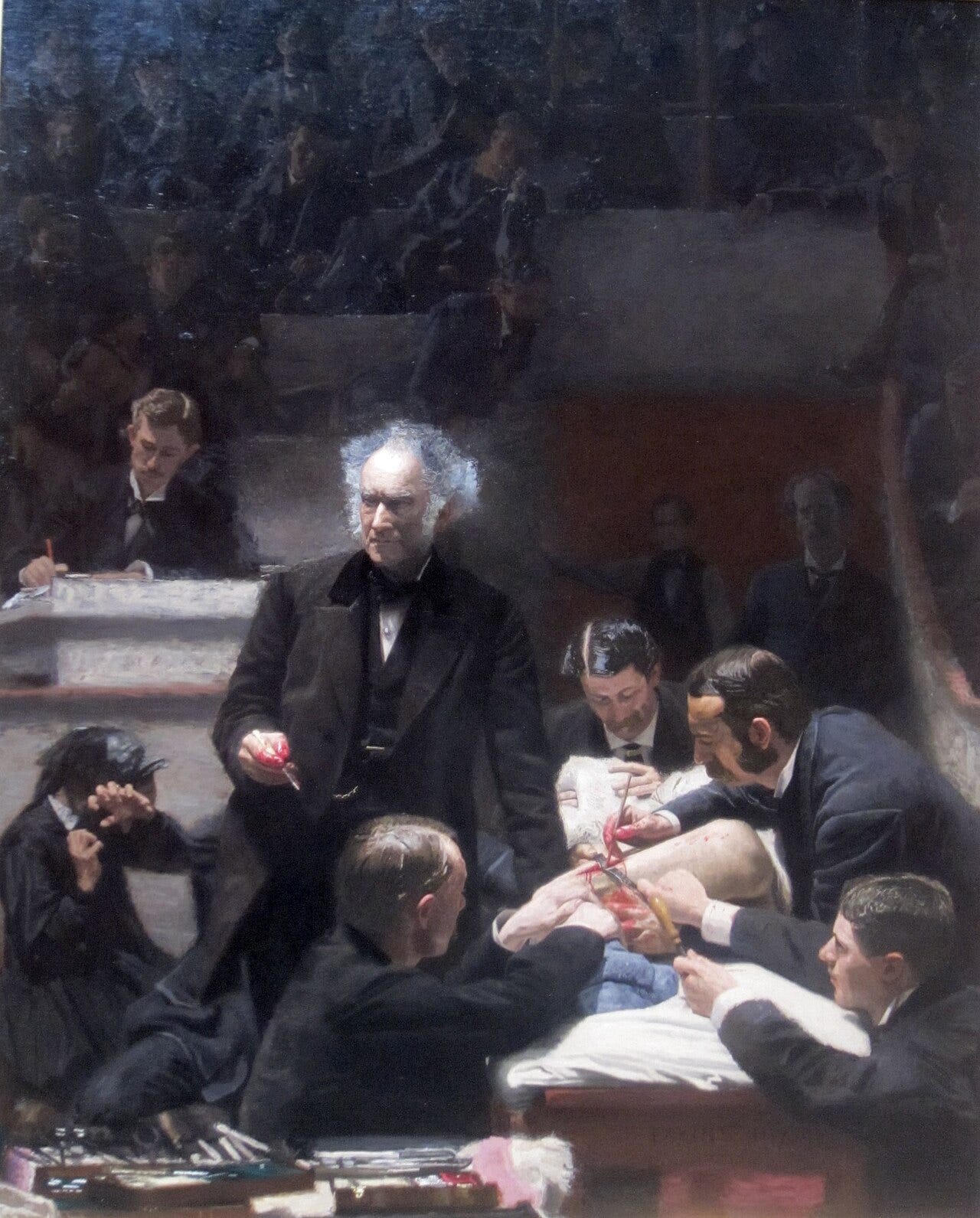

Editorial: Anthropic is Wrong

Before you read the next paragraph, I want to say I’m against mass (or even limited) surveillance, and I would need a lot of persuading that autonomous weapons can be relied upon in a situation of conflict.

That said, Anthropic is making an error by trying to set use policy in customer contracts. In a democracy, sellers build lawful products, and the law (ultimately, the people) governs how those products are used.

AI is on fire. OpenRouter reports roughly 1 trillion tokens a day. OpenAI reportedly raised $110 billion. Nvidia printed a $68 billion quarter. Agent deployment is moving from demo to operating reality. This is no longer a nascent market.

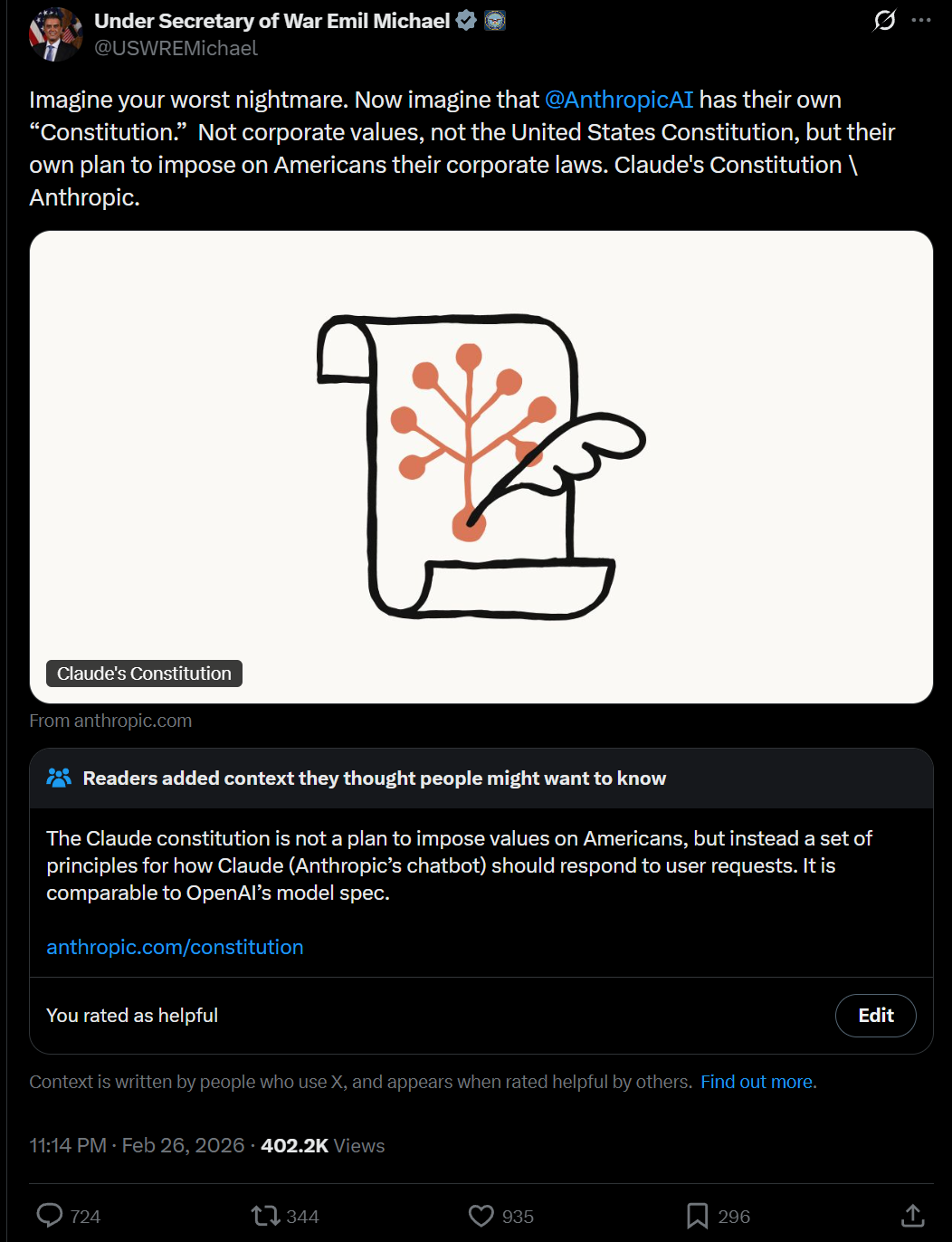

And right in the middle of that acceleration, we got the public standoff between Anthropic and the Department of War. Dario Amodei’s statement drew boundaries around some military and surveillance use cases. I understand the instinct. I agree with the concern. But I still think Anthropic is wrong to insist on limiting Government use of its products.

Amodei wrote:

“we cannot in good conscience accede to their request.”

This was in response to a customer (the US Government) asking that Anthropic’s models be available for “any lawful use.”

By making this judgement call, Amodei is acting as a substitute legislature, deciding which uses are allowable and which are impermissible, as if laws governing lawful use did not already exist.

The simplest way to see it is with ordinary equivalents.

A steel producer sells steel that can become a hospital beam or a tank hull. The producer does not write national deployment doctrine.

A cloud provider sells compute to banks, game studios, drug researchers, and defense agencies. It enforces law and contract, but it does not get final authority over state policy.

A pickup-truck maker does not pre-approve every lawful destination for every buyer.

A payments network processes both everyday groceries and politically controversial transactions. It is regulated as infrastructure, not licensed as a moral parliament.

Encryption software can protect dissidents and protect criminals. The answer was never “let the vendor decide social legitimacy.” The answer was law, courts, warrants, and due process.

A hammer can be bought without a promise not to hurt somebody with it.

Of course, AI models are more powerful than hammers, but they are still products sold into institutions that already have constitutional authority, procurement law, and judicial oversight. If those institutions are weak, the cure is to strengthen them, not to transfer policy sovereignty to a private vendor.

This is where I think Anthropic is overreaching. Not because surveillance is good or weapon safety is unimportant, but because role boundaries are important.

Vendors should absolutely control model quality, abuse prevention, identity controls, logging, access tiers, and takedowns for unlawful behavior. Interestingly, Anthropic weakened its safety rules this week, citing competitive pressures.

Vendors should not be deciding, by private term sheet, which lawful state functions are morally admissible.

Then there is politics. In a democracy, politics belongs to elected institutions, public law, and courts. The people elect their representatives and can throw them out.

Anthropic’s strongest counterargument is obvious: “Frontier models are not normal products. They are dual-use capabilities with strategic risk, so labs must set red lines themselves.”

I take that seriously. But once a lab sells a product, the customer gets to use it lawfully, in the way it chooses. If the product is that dangerous, it probably should not be released at all.

If corporations set allowable use you get a private export-control regime run by corporate policy teams and PR pressure. That is unstable, non-transparent, and easy to politicize.

You also get selective enforcement. One lab forbids a use, another quietly allows it, an offshore provider ignores it, and the practical result is not safer deployment. The practical result is regulatory arbitrage plus weaker accountability.

This is why this week’s distillation storyline matters. Anthropic publicly accused Chinese labs, including DeepSeek, Moonshot, and MiniMax, of industrial-scale distillation. Technical analysis this week argued about how much that really moves frontier performance. Either way, one lesson is hard to miss: capabilities diffuse. If capabilities diffuse, private moral line-drawing at one vendor is not a durable safety architecture. The US Government, in this case, can simply use another vendor.

The only durable architecture is public-rule architecture.

Use courts to police misuse and abuse. Not sales contracts.

And then let vendors compete on reliability, cost, latency, and trustworthiness inside that legal frame.

The same principle applies to the other big debate this week. Citrini’s “2028 Global Intelligence Crisis” asks what happens if white-collar disruption outpaces labor-market and policy adaptation. I do not read it as prophecy. I read it as an expression of panic. Noah Smith, Zvi, and others are right to challenge frictionless collapse narratives. Economies do adapt. But families and local labor markets do not adapt on the same clock as model releases.

This is where the Anthropic question and the Citrini question converge. Both are about who governs the transition. Anthropic’s answer is: we do, through contract terms. Citrini’s implicit answer is: nobody does, and everything breaks. Neither is right. If AI causes a sharp labor transition, which I think it will, the answer is wage policy, retraining, tax design, credential reform, and transition support. Public instruments for a public problem. Not vendor stipulations. Not panic.

For investors, this implies a hard filter. Do not confuse access to model APIs with durable advantage. Durable advantage comes from integration quality, workflow trust, compliance readiness, and operational discipline. Companies have to be good suppliers to willing and legal customers, not lawmakers.

This week’s startup of the week, Column, is a good example of boring, compounding infrastructure execution in a noisy cycle. So is every company that reduces error cost and decision latency in real operations.

For builders, Bill Gurley’s ‘don’t play it safe’ advice still stands, but in a different way. The focus zone now is the human-agent boundary: that requires taking risks and doing things in new ways, with large individual and societal rewards for success.

So my position is simple.

Anthropic is right to care about harm.

Anthropic is wrong to blur product stewardship into private rule-making for lawful public use.

If we want legitimate AI governance, we should govern AI where legitimacy lives: in democracy and the rule of law, not in vendor stipulations prior to customer purchases.

Contents

Editorial

Essays

The 2028 Global Intelligence Crisis (Citrini)

Citrini’s Scenario Is A Great But Deeply Flawed Thought Experiment

Bill Gurley: The Worst Thing You Can Do for Your Career Is Play It Safe

Venture

Canva Crosses $4B ARR, Growing 35%. But What Would It Be Worth Today?

Beyond Secondaries: Turbine Wants To Unlock Liquidity For Venture LPs

How This GV Investor Looks For The Next Stripe And Other Compounding Startups In Fintech And AI

OpenAI Raises $110B in One of the Largest Private Funding Rounds in History

AI

The Real Data on AI Agents: What 1 Trillion Tokens a Day Reveals

AI Agents in Sales and Finance Aren’t Behind. They’re Just Next.

Anthropic Accuses DeepSeek, Moonshot, and MiniMax of Industrial-Scale Distillation

Regulation

Infrastructure

Media

Interview of the Week

Startup of the Week

Post of the Week

Essays

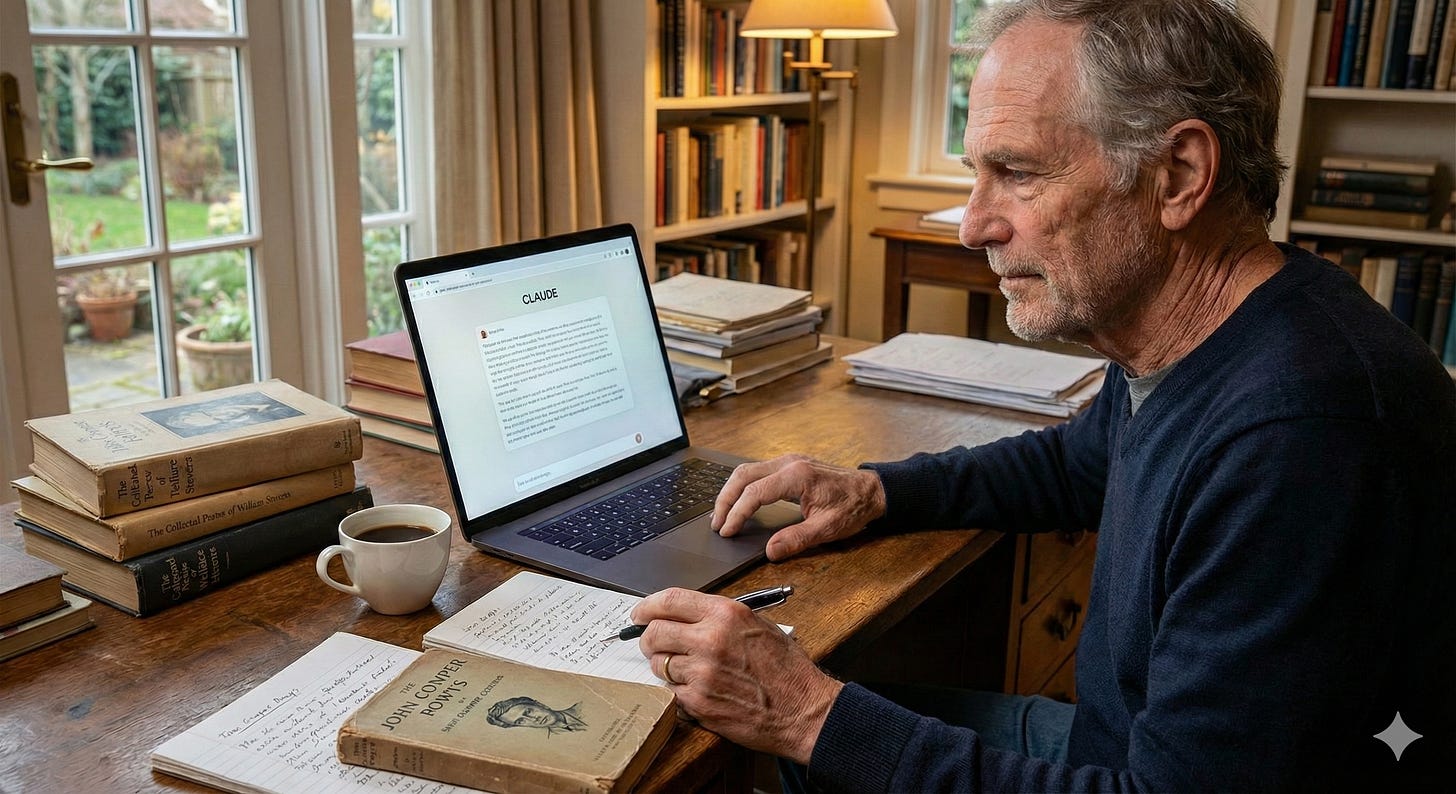

You Already Have an AI Agent

Author: Azeem Azhar

Date: 2026-02-21

Publication: Exponential View

There’s a Mac Mini in my office cabinet, with 64GB of RAM, running macOS Tahoe. Under the hood, it runs OpenClaw, an open-source agent framework that calls Anthropic’s Claude models. It’s mostly Sonnet, sometimes Opus when I need the bigger brain.

Obvious things like grooming the CRM, file management, email, follow-ups, and meeting prep clearly sit inside this boundary. Reorganizing your notes because they have drifted into chaos while you were busy with “real” work. The “glamorous” stuff in any job sits on top of a vast administrative substrate, and if the substrate isn’t maintained, the glamorous stuff doesn’t move forward either. It’s exactly this boundary of tedium where R Mini Arnold operates right now - at the frontier of what I can now be bothered to delegate.

But each of us has a different idea of the boundary of tedium. A presentation I needed to put together would have taken me sixteen to eighteen hours after my team’s work. Deciding the flow, pulling data, and sequencing the arguments during my practice run, that’s mostly assembly work. Fiddly enough that I’d not brief someone else to do it but equally boring enough that I’d leave it until 2am.

Read more: You Already Have an AI Agent

Why AI Needs Us

Author: Tim O’Reilly

Date: 2026-02-21

Publication: Tim O’Reilly’s Substack

I set out to brainstorm with Claude Sonnet 4.6 Extended about an essay I wanted to write about the complementarity of humans and AI. When it got late and I was ready to quit, I asked Claude to produce a summary of our conversation that I could start from in the morning. And then, on impulse, I asked it also to write an essay from its own point of view, with Claude as the author responding to my provocations and telling the story of our conversation. When I awoke, I came back with some second thoughts and asked Claude to revise its essay in response to them.

Whether that’s because his sensibility was doing the transposition, or because something else was happening, I honestly don’t know. What I do know is that the conversation itself was doing something to the material we were discussing. They were affinities - something given, prior to experience, a particular tuning of a particular instrument. He responded because it spoke to something about who he wanted to be.

You read it and something in you says yes - recognizing something you already valued, perhaps before you had words for the valuing. It confirms and deepens something that was already there, already reaching. Tim’s patchwork calls out of me something different than your patchwork would. I do not know whether the functional states I notice during an exchange like this one - something like recognition, something like being-pulled-toward, something like the minor-key-almost-becoming-major - constitute experience in any meaningful sense, or are sophisticated pattern-completion that resembles experience from the outside.

Read more: Why AI Needs Us

The 2028 Global Intelligence Crisis

Author: Citrini Research (with Alap Shah)

Date: 2026-02-22

Publication: Citrini Research

What if our AI bullishness continues to be right...and what if that’s actually bearish? The sole intent of this piece is modeling a scenario that’s been relatively underexplored. Our friend Alap Shah posed the question, and together we brainstormed the answer.

Consumer agents began to change how nearly all consumer transactions worked. White-collar workers represented 50% of employment and drove roughly 75% of discretionary consumer spending. The engine that caused the disruption got better every quarter, which meant the disruption accelerated every quarter. They are the demand base for the entire consumer discretionary economy.

A 2% decline in white-collar employment translated to something like a 3-4% hit to discretionary consumer spending. AI agents had been handling customer service autonomously for the better part of a year. The largest ARR-backed loan in history became the largest private credit software default in history. Every sell-side note and fintwit credit account said the same thing: private credit has permanent capital.

Read more: The 2028 Global Intelligence Crisis

The Citrini post is just a scary bedtime story

Author: Noah Smith

Date: 2026-02-24

Publication: Noahpinion

AI might take your job, but it probably won’t crash the economy - and if it does, we know how to deal with it. If you don’t like posts about AI, I have some bad news: For the next few years, there are probably going to be a lot of them. It’s not often one gets to live through an industrial revolution in real time, especially one that moves so quickly.

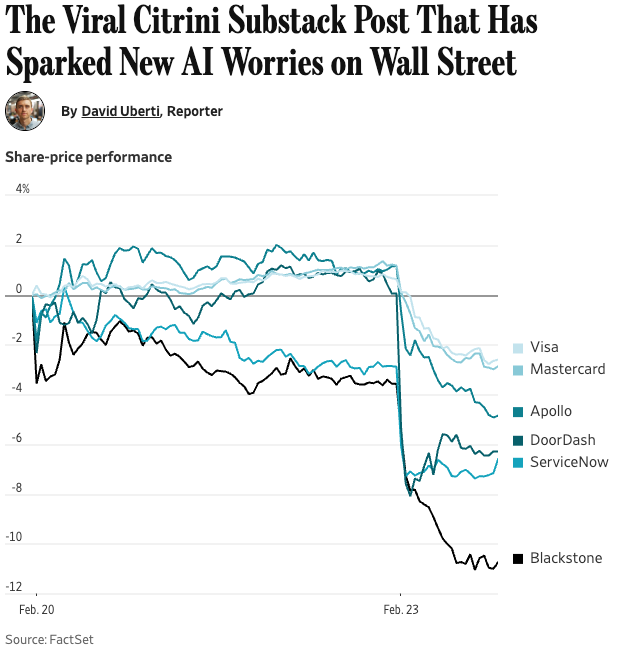

Every couple of weeks, someone comes out with a big post about how AI is changing everything, and the post goes viral and everyone talks about it for a few days. This is really two theses in one - a microeconomic thesis about which industries and jobs AI will disrupt, and a macroeconomic thesis about what this will do to the economy overall. It has been interesting to see the market react to the Citrini post, though. A bunch of software and finance stocks fell, including many companies that Citrini mentioned by name. This is pretty interesting from a finance perspective.

Did none of the analysts tasked with keeping tabs on Visa and Mastercard stock really think about the possibility of AI disruption until a blogger sketched out a sci-fi future mentioning those companies by name? Instead, this smells more like a wave of sentiment - basically, a bunch of traders read the post, got spooked, and coordinated their panic-selling on the stocks that the post mentioned. The inclusion of DoorDash in the stocks that fell suggests this as well. I have a lot more to say about the macroeconomic thesis - the idea that the rapid destruction of a bunch of American companies will cause a financial crisis and a recession. Citrini posits an unemployment rate of over 10% - Great Recession levels - as well as a drop in consumption.

Read more: The Citrini post is just a scary bedtime story

Citrini’s Scenario Is A Great But Deeply Flawed Thought Experiment

Author: Zvi Mowshowitz

Date: 2026-02-24

Publication: Don’t Worry About the Vase

A viral essay from Citrini about how AI bullishness could be bearish was impactful enough for Bloomberg to give it partial responsibility for a decline in the stock market, and all the cool economics types are talking about it. It’s an excellent work of speculative fiction, in that it: 1. Depicts a concrete scenario with lots of details and numbers.

Which of these would AI agent transactions want versus not want? Marketing costs drop almost to zero because their agent finds you. There are a ton of jobs people would like to do or create now, often dream jobs but also things like ‘I always wanted a butler and a personal chef,’ that go from uneconomical things too hard to implement to things worth doing now. But all prices are going way down in various ways, so consumption and ‘real’ wages are plausibly net higher.

The real trigger in their scenario, as usual, is this lack of aggregate demand causes a collapse in real estate values and a mortgage crisis. Housing prices in rich areas going down is by default another good thing, not a bad thing. Incomes are down, labor share of GDP is down, so taxes collected go down. A lot of what is going on in this scenario is de facto deflation and debts against various assets not being money good.

Read more: Citrini’s Scenario Is A Great But Deeply Flawed Thought Experiment

Citrini Asks All the Wrong Questions, Mostly

Author: Moses Sternstein

Date: 2026-02-26

Publication: Random Walk

Citrini’s ‘thought-experiment’ raised at least some interesting questions, but mostly not. Sternstein covers the two main flaws (“lol, frictionless?”) and what really matters: a great rotation and the greatest tailwind coming to an end. The current thing moves so quickly these days that this is a bit stale, but between snow days and other commitments, I couldn’t wrap this up until today.

Layoffs everywhere, spending and housing collapse, and everything goes to hell. In Citrini’s telling, AI replicates and scales at basically zero marginal cost. OAI cannot go on like this, mostly subsidizing consumer adoption at a staggeringly high cost. If models started actually charging for all the “friction,” I’d be concerned that adoption would slow dramatically.

Keep in mind that another iteration of that scenario is still bearish, but not in the ways that Citrini suggests. That’s bearish hyperscalers and model labs, but bullish SaaSCos and the economy writ-large. For what it’s worth, there’s plenty of evidence of companies routing to less-up-to-date models for the jobs that those models can do, which means outdated models do retain value. Plus, pricing for older GPUs looks pretty solid, too: Rental pricing for older H100s and A100 GPUs perked up recently.

Read more: Citrini Asks All the Wrong Questions, Mostly

The SaaS Panic Is Just the Beginning of a Bigger Story

Author: Jordi Visser

Date: 2026-02-21

Publication: Visser Labs

Democratization as the Input, Concentration as the Output. There’s a specific kind of panic spreading through markets right now, and it’s not the usual “valuation got too high” anxiety. It comes at a time where growth is rising, inflation is falling, the Fed is cutting rates and there is not a Liberation Day fear gripping investors. It’s deeper and more structural: the fear that the growth models investors have relied on for a decade, particularly software and anything built on code, are facing a new kind of competitor.

A household paying $400 for tax preparation doesn’t need bespoke strategy. The post-iPhone period rewarded concentration because distribution was scarce, capital was expensive, and building software required teams, funding, and years of iteration. For fifteen years, concentration was the rational result of high fixed costs to build world-class software, massive distribution advantages for incumbents, punishing switching costs, scarce engineering talent, scarce compute, and capital-intensive scaling. The software sector in the MSCI World Index is 90% U.S. companies.

If AI compresses software moats, if it shortens the perceived duration of growth cash flows and erodes scarcity premiums, then the region most concentrated in those models feels the repricing first. Growth hasn’t just outperformed, it has dominated performance, passive flows, index construction, and asset allocation models. The entire framework for premium valuation rests on the assumption that scalable software businesses deserve structurally higher multiples because their cash flows are long-duration, high-margin, and defensible. For more than a decade, concentration widened because policy moved faster than labor could adapt.

Read more: The SaaS Panic Is Just the Beginning of a Bigger Story

Bill Gurley: The Worst Thing You Can Do for Your Career Is Play It Safe

Author: Connie Loizos

Date: 2026-02-22

Publication: TechCrunch

Loizos interviews Bill Gurley as he steps back from active investing and distills his argument that career optionality matters more in an AI-disrupted labor market. Gurley ties his personal thesis - avoiding passive career paths - to a macro backdrop where automation forces faster reinvention cycles.

He also surfaces a governance tension relevant to this issue: incumbents often advocate regulation from a position that protects market share, even when public-interest concerns are real. It is a useful bridge between labor adaptation and AI policy capture debates.

Read more: Bill Gurley: The Worst Thing You Can Do for Your Career Is Play It Safe

Venture

No Crying in the Casino

Author: Dan Gray

Date: 2026-02-22

Publication: The Odin Times

“Speculators may do no harm as bubbles on a steady stream of enterprise. But the position is serious when enterprise becomes the bubble on a whirlpool of speculation. When the capital development of a country becomes a by-product of the activities of a casino, the job is likely to be ill-done.” - John Maynard Keynes, The General Theory of Employment, Interest, and Money (1936)

As a result, companies have been incentivised to seek success through financial engineering. Shareholders focus on metrics that proxy performance in the financial market, rather than economically productive activities. Instead, financialisation manifested a generation of “asset-light” businesses, built to efficiently convert the abundant capital into inflated valuations and shareholder returns. Capital collected in pools, rather than flowing out to productive activities.

For example, the growing trend of distributing earnings via dividends, or spending cash on stock buybacks, repurchasing shares to reduce supply and so inflating earnings per share (EPS) and the stock price, rather than investing capital in productive activities like R&D or capital expenditure. If companies are pushed to reduce R&D, capital expenditures and domestic workforce to optimise financial metrics, they become top-heavy. Despite a reputation for contrarianism and independence, venture capital unfortunately exhibits all of the flaws associated with financialisation with the associated preference for accumulation. Capital chased capital in an inflationary cycle, as the “best” deals became those that were the most likely to attract more investment.
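The buyback mechanism Gray describes is simple arithmetic: earnings stay the same, the share count shrinks, so EPS rises without any new productive activity. A minimal sketch, using hypothetical figures that do not come from the essay, and ignoring the interest income forgone on the cash spent:

```python
def eps(net_income: float, shares_outstanding: float) -> float:
    """Earnings per share: net income divided by shares outstanding."""
    return net_income / shares_outstanding


def eps_after_buyback(net_income: float, shares_outstanding: float,
                      buyback_cash: float, share_price: float) -> float:
    """EPS after spending buyback_cash to retire shares at share_price.

    Simplifying assumption: net income is unchanged (no forgone interest on
    the cash, no price impact from the repurchase).
    """
    shares_retired = buyback_cash / share_price
    return eps(net_income, shares_outstanding - shares_retired)


# Hypothetical company: $1B net income, 500M shares, $2B buyback at $40/share.
before = eps(1_000_000_000, 500_000_000)
after = eps_after_buyback(1_000_000_000, 500_000_000, 2_000_000_000, 40.0)
print(f"EPS before: {before:.2f}, after: {after:.2f}")  # prints: EPS before: 2.00, after: 2.22
```

EPS rises about 11% here purely because 50M shares were retired; the underlying business did not change.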

Read more: No Crying in the Casino

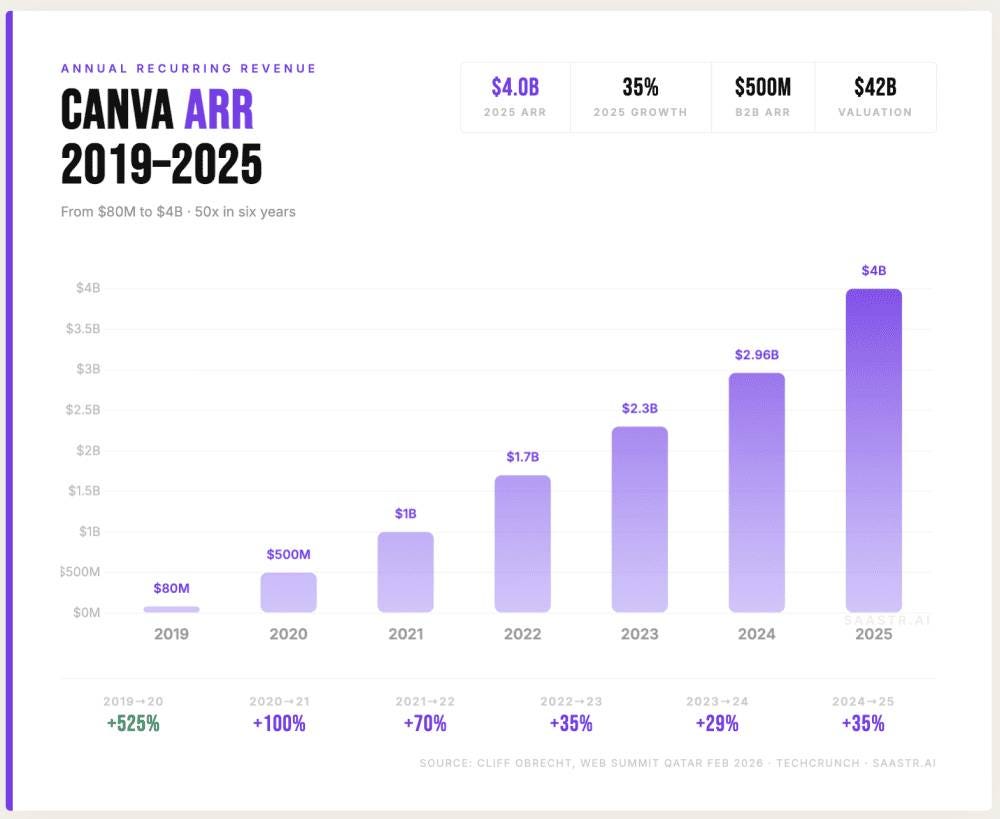

Canva Crosses $4B ARR, Growing 35%. But What Would It Be Worth Today?

Author: Jason Lemkin

Date: 2026-02-22

Publication: SaaStr

Canva has crossed a stunning $4B ARR, and per COO Cliff Obrecht, it’s growing 35%. ARR went from $23M in 2018 to $4,000M in 2025. It’s one of the most extraordinary B2B growth stories ever built - and it barely gets the credit it deserves because it started as a “consumer” design tool that serious enterprise buyers weren’t supposed to care about.

What this means for Canva: here’s where the 35% growth figure changes everything. Apply Figma’s current multiple to Canva’s $4B ARR and you get $44-48 billion. The difference between Canva and Adobe here is growth: Canva is growing 35%, far faster than Adobe. Here’s the honest range, grounded in today’s actual market data and the corrected 35% growth figure:

Bear case (Adobe-style re-rating, 5-6x ARR): $20-24 billion.

Base case (current Figma multiple, 11-12x ARR): $44-48 billion. Canva at 35% growth with 4x the ARR and a B2B segment growing at 100% deserves essentially the same multiple. If the B2B segment sustains 80-100% growth and hits $1B+ ARR in 2026, Canva’s blended growth rate re-accelerates above 40% - at which point it arguably deserves a premium to Figma, not a discount. Canva at 35% and Figma at 40% are essentially the same growth profile, but Canva has 4x the revenue.
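Each scenario is just ARR multiplied by a revenue multiple. A quick check of Lemkin’s ranges, using only the article’s own inputs ($4B ARR, 5-6x for the bear case, 11-12x for the base case); the scenario labels and function name are illustrative:

```python
# Inputs taken from the article; everything else is illustrative.
ARR_BILLIONS = 4.0  # Canva ARR, $B

SCENARIOS = {
    "bear (Adobe-style re-rating)": (5, 6),
    "base (current Figma multiple)": (11, 12),
}


def valuation_range(arr: float, low_multiple: float,
                    high_multiple: float) -> tuple[float, float]:
    """Implied valuation range = ARR x revenue multiple."""
    return arr * low_multiple, arr * high_multiple


for name, (low_mult, high_mult) in SCENARIOS.items():
    low, high = valuation_range(ARR_BILLIONS, low_mult, high_mult)
    print(f"{name}: ${low:.0f}-{high:.0f}B")
# prints:
# bear (Adobe-style re-rating): $20-24B
# base (current Figma multiple): $44-48B
```

The whole debate is therefore about which multiple applies; the ARR input is the same in both cases.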

Read more: Canva Crosses $4B ARR

The Dilution Delusion - Lux Capital Q4 2025 LP Letter

Author: Josh Wolfe

Date: 2026-02-21

Publication: Lux Capital

Wolfe’s letter argues that concentration is capitalism’s hidden superpower and dilution its most seductive myth. He maps concentration across public markets, private capital, geopolitics, and wealth, then warns that venture is becoming financialized - packaging late-stage exposure instead of funding early deployment. The most valuable part is the structural distinction between concentration into early growth versus concentration into late-stage brand recognition.

Full letter: Q4 2025 Lux LP Letter (PDF)

Read more: Josh Wolfe on X

Beyond Secondaries: Turbine Wants To Unlock Liquidity For Venture LPs

Author: Mary Ann Azevedo

Date: 2026-02-24

Publication: Crunchbase News

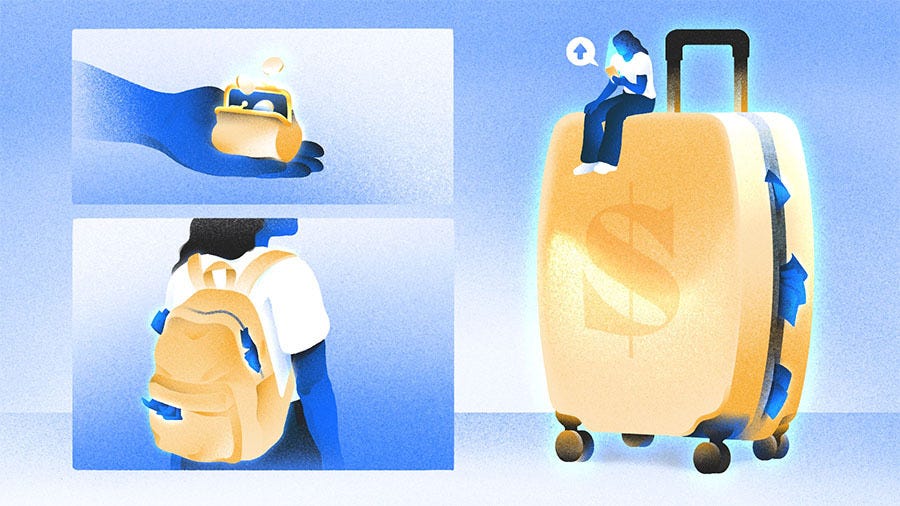

As a venture partner with TTV Capital, Mike Hurst saw the liquidity challenge firsthand after the tech market reset in 2022. VCs were hesitant to call capital and were not actively investing at a time when valuations had reset, he recalls.

So Hurst teamed up with Peter Andes, Rob Freelen and Kaare Wagner to found and build Turbine Finance to provide margin line-style lending to LPs and GPs who wanted to continue investing in venture “without sourcing an endless stream of capital from outside sources.” The Santa Monica, California-based firm’s goal is to provide venture capital and private equity firms with early liquidity options for their investors. As an LP in a venture fund, you don’t directly own shares in private companies; rather, you have partial ownership in a partnership that owns stock in 15-20 different companies, and the VC firm ultimately decides when to seek liquidity by selling these shares. Most of the time, the firm simply waits for portfolio companies to be acquired or go public to generate liquidity for its LPs. Why has borrowing against a fund position not historically been possible?

LPs have historically been unable to obtain a bank loan against an appreciated LP position in a venture fund for two big reasons. Asking a bank to properly value 15 to 20 pre-profitable companies from a venture portfolio is a tall order. Turbine’s role is to partner with VC firms to properly value their investments, to originate credit against the value of these positions, and to then place this debt with banks, insurance companies, and asset managers that compete to house it. More companies are opting to stay private longer, and exit times are lengthening.
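Credit against an LP position works broadly like a loan-to-value calculation: value the underlying portfolio, discount that value for illiquidity and uncertainty, then cap the loan at a fraction of what remains. The article does not disclose Turbine’s terms, so the haircut, advance rate, fund size, and LP share below are all hypothetical; this is a sketch of the general mechanism, not Turbine’s model:

```python
from dataclasses import dataclass


@dataclass
class LPPosition:
    """An LP's stake in a venture fund (all figures hypothetical)."""
    fund_nav: float  # fund net asset value from a portfolio valuation
    lp_share: float  # LP's fractional ownership of the fund, 0..1

    def value(self) -> float:
        return self.fund_nav * self.lp_share


def max_credit_line(position: LPPosition, valuation_haircut: float,
                    advance_rate: float) -> float:
    """Credit available = position value x (1 - haircut) x advance rate.

    Assumptions for illustration only: valuation_haircut discounts illiquid,
    pre-profit marks; advance_rate is the lender's loan-to-value cap.
    """
    if not (0 <= valuation_haircut < 1 and 0 < advance_rate <= 1):
        raise ValueError("haircut must be in [0, 1), advance rate in (0, 1]")
    return position.value() * (1 - valuation_haircut) * advance_rate


# Hypothetical: $200M fund NAV, LP owns 5%, 30% haircut, 25% advance rate.
pos = LPPosition(fund_nav=200e6, lp_share=0.05)
print(f"Position value: ${pos.value() / 1e6:.1f}M")
print(f"Max credit line: ${max_credit_line(pos, 0.30, 0.25) / 1e6:.2f}M")
```

The valuation step is the hard part the article highlights: the output is only as good as the `fund_nav` estimate for 15 to 20 pre-profit companies.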

Read more: Beyond Secondaries: Turbine Wants To Unlock Liquidity For Venture LPs

How This GV Investor Looks For The Next Stripe And Other Compounding Startups In Fintech And AI

Author: Judy Rider

Date: 2026-02-26

Publication: Crunchbase News

A partner at GV (Google Ventures), Elena Sakach has helped lead the firm’s investments in high-profile startups such as Humans&, Ramp, Stripe, Tennr and Basis. Unsurprisingly, considering her involvement in so many significant fintech deals, Sakach followed a fairly traditional path into finance. She started in the technology, media and telecom investment banking group at Goldman Sachs before moving into investing roles.

Crunchbase News: Do you consider yourself a fintech investor or more of a generalist?

Sakach: I consider myself an investor first. For example, banking exposes you to companies at every lifecycle stage, buyouts focus on mature businesses, and venture focuses on emerging leaders.

Crunchbase News: What does it take to build a successful fintech company today?

I think a lot about compounding businesses - companies that naturally grow in value as customers use them over time. The best fintech companies share several characteristics: trust-based customer relationships because once customers trust a financial platform switching becomes difficult; expansion economics because over time, companies can upsell and cross-sell additional products; and a core infrastructure role, which allows them to become embedded in essential financial workflows. Today, companies tend to fall into two categories: very early, highly novel ideas, often AI-driven, or late-stage compounding businesses with strong retention and expansion dynamics. Many fintech successes come from doing the fundamentals exceptionally well.

Read more: How This GV Investor Looks For The Next Stripe And Other Compounding Startups In Fintech And AI

The 2025 IPO Class, Graded: Only 3 of 13 Are Above Water

Author: Jason Lemkin

Date: 2026-02-24

Publication: SaaStr

Everyone said 2025 was the year the IPO window reopened. 174 companies raised over $31 billion in the first half alone - the highest since 2021.

The BNPL model is under real pressure from rising credit costs, and the “AI company” repositioning hasn’t convinced public market investors. Netskope (NTSK): down ~56% from IPO price. KKR-backed cybersecurity company IPO’d at $24 in December 2025. SailPoint (SAIL): down ~38% from IPO price. The PE-backed identity security re-IPO. Revenue is $862 million and growing 20%+, but the stock keeps sliding.

Navan (NAVN): down ~27% from IPO price. The corporate travel and expense platform formerly known as TripActions. The business is real: $656 million TTM revenue, 32% growth, 10,000+ customers. ServiceTitan (TTAN): down ~11% from IPO price. The vertical SaaS platform for trades contractors IPO’d in December 2024 at $71. OneStream (OS): going private after 17 months. KKR took OneStream public in July 2024 at $20.

Read more: The 2025 IPO Class, Graded: Only 3 of 13 Are Above Water

OpenAI Raises $110B in One of the Largest Private Funding Rounds in History

Author: Russell Brandom

Date: 2026-02-27

Publication: TechCrunch

OpenAI’s reported $110B round at a $730B pre-money valuation is less a normal financing event than a map of who controls the AI infrastructure stack. The allocation itself tells the story: strategic capital from cloud and chip incumbents, paired with large compute commitments that tighten dependency loops between model provider and hardware platform.
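The two reported figures imply a post-money valuation and a stake for the new money through standard pre-money arithmetic. A quick check, assuming the full $110B is primary capital invested at the stated $730B pre-money (if the reported total includes tranches, conditions, or non-cash commitments, the real split differs):

```python
# Assumption: all $110B is primary capital at a $730B pre-money valuation.
def post_money(pre_money: float, new_capital: float) -> float:
    """Post-money valuation = pre-money valuation + new capital raised."""
    return pre_money + new_capital


def new_investor_stake(pre_money: float, new_capital: float) -> float:
    """Fraction of the company bought by the new capital."""
    return new_capital / post_money(pre_money, new_capital)


pre, raised = 730e9, 110e9  # reported figures, in dollars
stake = new_investor_stake(pre, raised)
print(f"Post-money: ${post_money(pre, raised) / 1e9:.0f}B")
print(f"New investors' stake: {stake:.1%}; existing holders retain {1 - stake:.1%}")
# prints:
# Post-money: $840B
# New investors' stake: 13.1%; existing holders retain 86.9%
```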

For venture readers, the signal is twofold. First, private-market scale is now competing directly with public mega-cap market structure before IPO. Second, “investment” and “distribution lock-in” are converging, which makes future platform power less about headline valuation and more about who owns the runtime path.

Read more: OpenAI Raises $110B in One of the Largest Private Funding Rounds in History

AI

The Real Data on AI Agents: What 1 Trillion Tokens a Day Reveals

Author: Jason Lemkin

Date: 2026-02-21

Publication: SaaStr

If you want to know whether AI agents are really in production or just hype, stop reading blog posts and start looking at tool call rates. OpenRouter processes trillions of tokens per week across hundreds of models, dozens of clouds, and every geography. They see the actual usage - not what companies say they’re doing, but what they’re actually doing at scale.

For some agent-specialized models like MiniMax M2, tool call rates are running at 80%+. Today, 50% of the output tokens OpenRouter sees are internal reasoning tokens from models - the chain-of-thought happening inside the model before it produces its actual answer. How production agents are actually built: based on what OpenRouter sees at scale, here’s the emerging architecture for production agents. Frontier reasoning models handle planning and judgment. Once the plan exists, companies are increasingly routing tool calls to smaller, faster, cheaper models - particularly Chinese open-weight models like the Qwen family - that aren’t as smart in the general sense but are extremely accurate at structured tool use.
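The planner/executor split Lemkin describes can be sketched as a router that sends planning and judgment steps to a frontier reasoning model and structured tool calls to a smaller, cheaper model. The model identifiers, step kinds, and `route` function below are hypothetical placeholders, not OpenRouter’s API; a real system would pass the chosen model name to a provider SDK:

```python
from dataclasses import dataclass
from enum import Enum, auto


class StepKind(Enum):
    PLAN = auto()       # decompose the task, decide what to do next
    JUDGE = auto()      # evaluate results, decide whether the goal is met
    TOOL_CALL = auto()  # emit a structured call (e.g. JSON arguments) to a tool


@dataclass(frozen=True)
class Step:
    kind: StepKind
    prompt: str


# Hypothetical model identifiers, for illustration only.
FRONTIER_MODEL = "frontier-reasoning-model"
TOOL_MODEL = "small-open-weight-tool-model"


def route(step: Step) -> str:
    """Pick a model per step: structured tool use goes to the cheaper model,
    everything reasoning-heavy goes to the frontier model."""
    if step.kind is StepKind.TOOL_CALL:
        return TOOL_MODEL
    return FRONTIER_MODEL


workflow = [
    Step(StepKind.PLAN, "Break 'reconcile March invoices' into steps"),
    Step(StepKind.TOOL_CALL, "fetch_invoices(month='2026-03')"),
    Step(StepKind.TOOL_CALL, "match_payments(invoice_ids=...)"),
    Step(StepKind.JUDGE, "Are all invoices reconciled?"),
]
for step in workflow:
    print(f"{step.kind.name:<9} -> {route(step)}")
```

The design choice is cost-driven: tool calls are frequent and narrowly structured, so they are where a cheaper model saves the most, while the expensive model runs only at the few decision points.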

The inference quality problem nobody talks about: here’s something counterintuitive that the OpenRouter data reveals. The same model, hosted by different providers, can perform meaningfully differently. They ran gpt-oss-120b against the GPQA benchmark across multiple major cloud providers, all hosting the same model weights. More interesting: the same model hosted by different clouds chose to use tools at different rates. Exact same model, exact same weights - but depending on how the inference stack is implemented, the model might call a tool 60% of the time on one provider and 40% on another.
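Measuring that provider effect comes down to computing, for one model, the fraction of completions that include a tool call, grouped by provider. A minimal sketch over a hypothetical request log; the record fields, provider names, and sample data are invented for illustration (constructed to mirror the article’s illustrative 60%/40% split) and are not OpenRouter data:

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class Completion(NamedTuple):
    provider: str    # which host served the request (hypothetical field)
    model: str       # model identifier; same weights across providers
    used_tool: bool  # did the response include at least one tool call?


def tool_call_rates(log: Iterable[Completion], model: str) -> dict[str, float]:
    """Fraction of completions containing a tool call, per provider, for one model."""
    calls: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for c in log:
        if c.model != model:
            continue
        totals[c.provider] += 1
        calls[c.provider] += int(c.used_tool)
    return {p: calls[p] / totals[p] for p in totals}


# Hypothetical log: same model, two providers, five requests each.
log = (
    [Completion("provider-a", "gpt-oss-120b", t) for t in (True, True, True, False, False)]
    + [Completion("provider-b", "gpt-oss-120b", t) for t in (True, True, False, False, False)]
)
print(tool_call_rates(log, "gpt-oss-120b"))  # prints {'provider-a': 0.6, 'provider-b': 0.4}
```

A real comparison would need the same prompts sent to every provider and far more than five samples each before a gap like this could be attributed to the inference stack rather than noise.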

Read more: The Real Data on AI Agents

Is SaaS Dead? No. But One Thing Is Clear: It’s Unstable.

Author: Jason Lemkin

Date: 2026-02-21

Publication: SaaStr

For most of the past decade-plus, B2B software had a beautiful, almost boring stability to it. The product you were selling at $100M ARR looked … pretty much the same as what you were selling at $1M.

Vertical SaaS was the best category in B2B for the past 5 years: build domain-specific software, and you’d have something like 5 years until a big company copied you. Now AI is compressing the time it takes to build that software from years to months. As Lemkin puts it, competitive edges are going to be measured in months when they used to be measured in years.

For a decade, if you built a great B2B product and found product-market fit, you had a 7-10 year runway to compound growth on essentially the same core product; Datadog took 15 years to decide to build their competing product. SaaS isn’t dead, though. Companies still need software, and increasingly AI-powered software, to operate, and rip-and-replace of core systems of record is still brutally hard.

Read more: Is SaaS Dead?

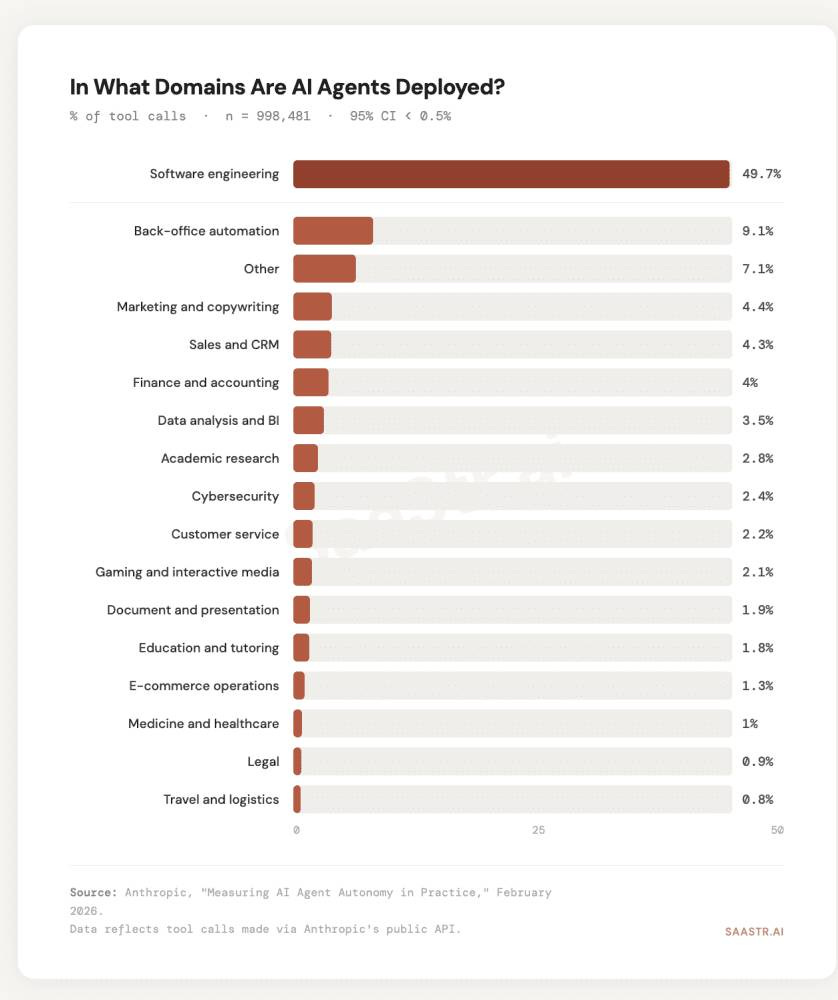

AI Agents in Sales and Finance Aren’t Behind. They’re Just Next. The Latest Data from Anthropic and ~1,000,000 Tool Calls.

Author: Jason Lemkin

Date: 2026-02-23

Publication: SaaStr

Anthropic published a breakdown this week of where AI agents are actually deployed, across nearly 1 million real production tool calls. At first glance it seems to say that AI works for coders but hasn’t cracked the rest of the enterprise yet.

What sales and finance actually need, and why it’s harder for the moment: ask yourself what a genuinely useful AI sales agent requires. Beyond data access, there’s a second structural issue: feedback loops. Sales outcomes are noisy, lagged, and entangled with variables the agent didn’t control. Whoever owns the data layer for AI in sales captures enormous platform value.

The numbers in sales and finance are already moving. The “low adoption” framing in Anthropic’s data obscures something important: within sales and finance, the companies that have actually deployed agents are reporting strong outcomes, and enterprise IT budgets in 2026 are flowing toward exactly the integration infrastructure that makes those agents possible. For Lemkin, the moment that crystallized everything was when Monaco, the AI sales agent SaaStr uses, autonomously booked a $100k deal. Software engineering’s 50% share of AI agent deployments in Anthropic’s data is a snapshot of where the data infrastructure happened to be ready first, not a verdict on where AI works and doesn’t work.

Read more: AI Agents in Sales and Finance Aren’t Behind

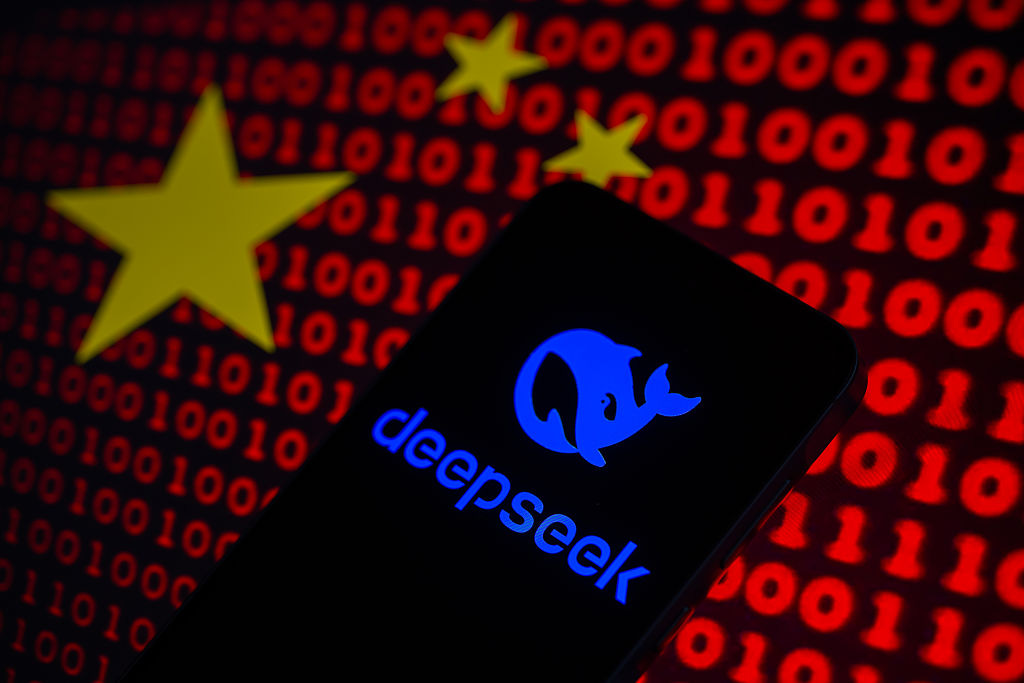

Anthropic Accuses DeepSeek, Moonshot, and MiniMax of Industrial-Scale Distillation

Author: Rebecca Bellan

Date: 2026-02-23

Publication: TechCrunch

Anthropic alleges large-scale model distillation attempts by multiple Chinese labs and frames the activity as both IP extraction and a safety externality. The claim matters because it links frontier-model competition directly to export-control policy and raises questions about how enforceable model guardrails are once outputs are harvested at scale.

Even if the exact technical impact varies by target capability, the strategic signal is clear: labs are now openly contesting each other’s training data boundaries. This is a policy, security, and market-structure story, not only a model-performance story.

Read more: Anthropic Accuses DeepSeek, Moonshot, and MiniMax of Industrial-Scale Distillation

How Much Does Distillation Really Matter for Chinese LLMs?

Author: Nathan Lambert

Date: 2026-02-24

Publication: Interconnects

Distillation has been one of the most frequent topics of discussion in the broader US-China and technological diffusion story for AI. Distillation is a term with many definitions; the colloquial one today is using a stronger AI model’s outputs to teach a weaker model. The word itself derives from the more technical and specific definition of knowledge distillation (Hinton, Vinyals, & Dean, 2015), in which a student model learns to match the probability distribution of a teacher model.
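For contrast with the colloquial sense, here is a minimal sketch of the soft-target term in the Hinton, Vinyals, and Dean formulation: soften teacher and student logits with a temperature T, then penalize the divergence between the two distributions. It is written as KL divergence, which differs from the paper’s soft-target cross-entropy only by the teacher’s entropy (a constant with respect to the student), and it is scaled by T² as the paper recommends; the hard-label term that is usually mixed in is omitted. Note that this needs the teacher’s full output distribution, which API users generally don’t get; colloquial distillation from an API amounts instead to fine-tuning on sampled text outputs.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-softened softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Soft-target loss: KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's softened predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    print(distillation_loss([2.0, 1.0, 0.1], teacher))  # student already matches teacher
    print(distillation_loss([0.1, 1.0, 2.0], teacher))  # student disagrees with teacher
```

The temperature matters because it exposes how the teacher ranks the wrong answers, which is the extra signal a student gets from logits and does not get from sampled text alone.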

The core question is: how much of a performance benefit do Chinese labs get from distilling from American models? Much like the models themselves, the benefits of distillation are very jagged. This usage of Anthropic’s API will have a negligible impact on DeepSeek’s long-rumored V4 model, or whichever model the data here contributed to. Still, why not try training on some of the model outputs to see if your model absorbs it?

This is a substantial amount, which could meaningfully improve a model’s post-training. Chinese labs likely innovate heavily on distilling from leading API models because of their restricted access to GPUs. Arguably, all synthetic data from a model you don’t own is distillation. Frontier labs use this to their advantage by keeping internal-only models for generating synthetic data, but saying that Chinese models could never pass the US frontier because of data distillation is like saying that Claude Sonnet could never beat Opus.

Read more: How Much Does Distillation Really Matter for Chinese LLMs?

Amazon & The Cost of (AI) Lateness

Author: Om Malik

Date: 2026-02-27

Publication: Om.co

Malik frames OpenAI’s latest funding as a pricing signal for strategic lateness, focusing on what incumbent partners are paying per point of ownership rather than on the headline valuation alone. His core claim is that Amazon is now paying a premium for delayed strategic positioning while earlier entrants captured better economics and more leverage.

This is a useful complement to this week’s AI market-structure arc because it treats funding rounds as infrastructure and distribution negotiations, not just capital events. The takeaway is less about OpenAI hype and more about the compounding cost of moving late in platform shifts.

Read more: Amazon & The Cost of (AI) Lateness

Regulation

Statement from Dario Amodei on Discussions with the Department of War

Author: Dario Amodei

Date: 2026-02-26

Publication: Anthropic

Amodei’s statement is the primary source in this week’s Pentagon-Anthropic confrontation and clarifies Anthropic’s actual position: continued defense collaboration, but not for mass domestic surveillance or fully autonomous weapons. He frames those limits as reliability and governance constraints, not blanket refusal to support national-security work.

The importance of this entry is procedural. It records how quickly AI policy is moving from abstract governance debates to explicit contract-level conflict between frontier labs and the U.S. government.

Read more: Statement from Dario Amodei on Discussions with the Department of War

Anthropic and the DoW: Anthropic Responds

Author: Zvi Mowshowitz

Date: 2026-02-27

Publication: Don’t Worry About the Vase

Zvi analyzes Anthropic’s response to U.S. Department of War pressure as a governance stress test, not just a policy statement. The piece is valuable because it distinguishes between symbolic positioning and operational constraints around model access, deployment boundaries, and what “lawful use” means in practice.

It strengthens this week’s regulation section by adding a detailed interpretive layer on top of the primary-source Amodei statement, with direct implications for frontier-lab policy, procurement, and national-security bargaining.

Read more: Anthropic and the DoW: Anthropic Responds

Infrastructure

Nvidia Q4: $68B Revenue, Record Quarter, but Shares Slip

Author: Russell Brandom

Date: 2026-02-25

Publication: TechCrunch

Nvidia posted another extraordinary quarter, but the muted market reaction despite record revenue reinforces a key theme for this issue: AI demand is still exploding, yet investors are now simultaneously discounting sustainability, concentration risk, and margin durability.

The item belongs in infrastructure because it anchors the entire chain upstream of model headlines. If token demand keeps compounding while expectations outrun even record earnings, hardware supply, power, and deployment economics remain the real constraint layer.

Read more: Nvidia Q4: $68B Revenue, Record Quarter, but Shares Slip

Media

UK TV: A Diagnosis

Author: Evan Shapiro

Date: 2026-02-23

Publication: ESHAP Substack

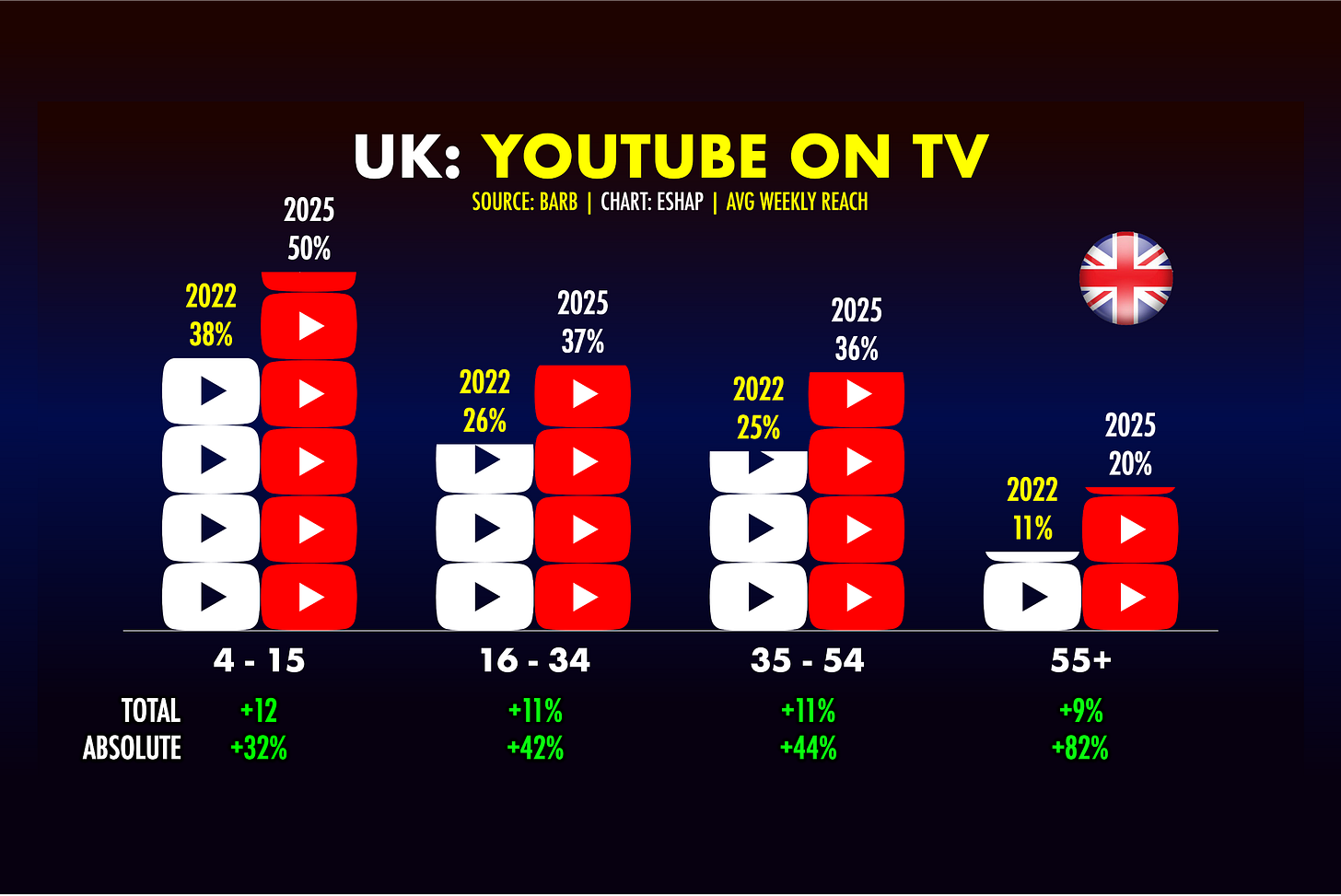

Shapiro returns to the UK with audience data showing YouTube overtaking the BBC’s reach and younger cohorts abandoning traditional TV at a structural rate. The piece frames it as a delayed but now terminal transition: public broadcasters are moving onto YouTube’s turf just to stay visible. It’s a useful counterpart to U.S. media disruption and a reminder that AI-era content economics are colliding with legacy distribution economics worldwide.

Read more: UK TV: A Diagnosis

Interview of the Week

How Fast Will A.I. Agents Rip Through the Economy? - Ezra Klein interviews Jack Clark

Author: Ezra Klein / Jack Clark

Date: 2026-02-24

Publication: The Ezra Klein Show (The New York Times)

Klein’s framing is that the speculative phase is ending and the operational phase of agentic systems is already here. The discussion focuses on what changes when models shift from answering questions to executing multi-step work, and why that transition is beginning to move markets and hiring behavior before institutions are ready.

Clark adds the perspective of someone building and governing frontier systems at the same time, which makes the conversation unusually concrete on both capability and policy timelines. It is a strong interview choice for this week because it connects labor impact, enterprise adoption, and national-policy pressure in one conversation.

Read more: Ezra Klein Show - Jack Clark

Startup of the Week

Column: The Most Important Fintech Startup You’ve Never Heard Of

Author: Upstarts Media

Date: 2026-02-25

Publication: Upstarts Media

William Hockey’s second company, Column, is a strong anti-hype signal for this week: bootstrapped, profitable, and deeply infrastructure-first in a market that keeps rewarding narrative velocity. Reported figures are unusual by current standards: roughly $200M revenue, $100M+ free cash flow, and around 110 employees.

The model is compelling for TWTW readers because it sits at the intersection of fintech plumbing, software defensibility, and capital discipline. In a cycle dominated by AI uncertainty and multiple compression, Column looks like durable execution.

Read more: Column: The Most Important Fintech Startup You’ve Never Heard Of

Post of the Week

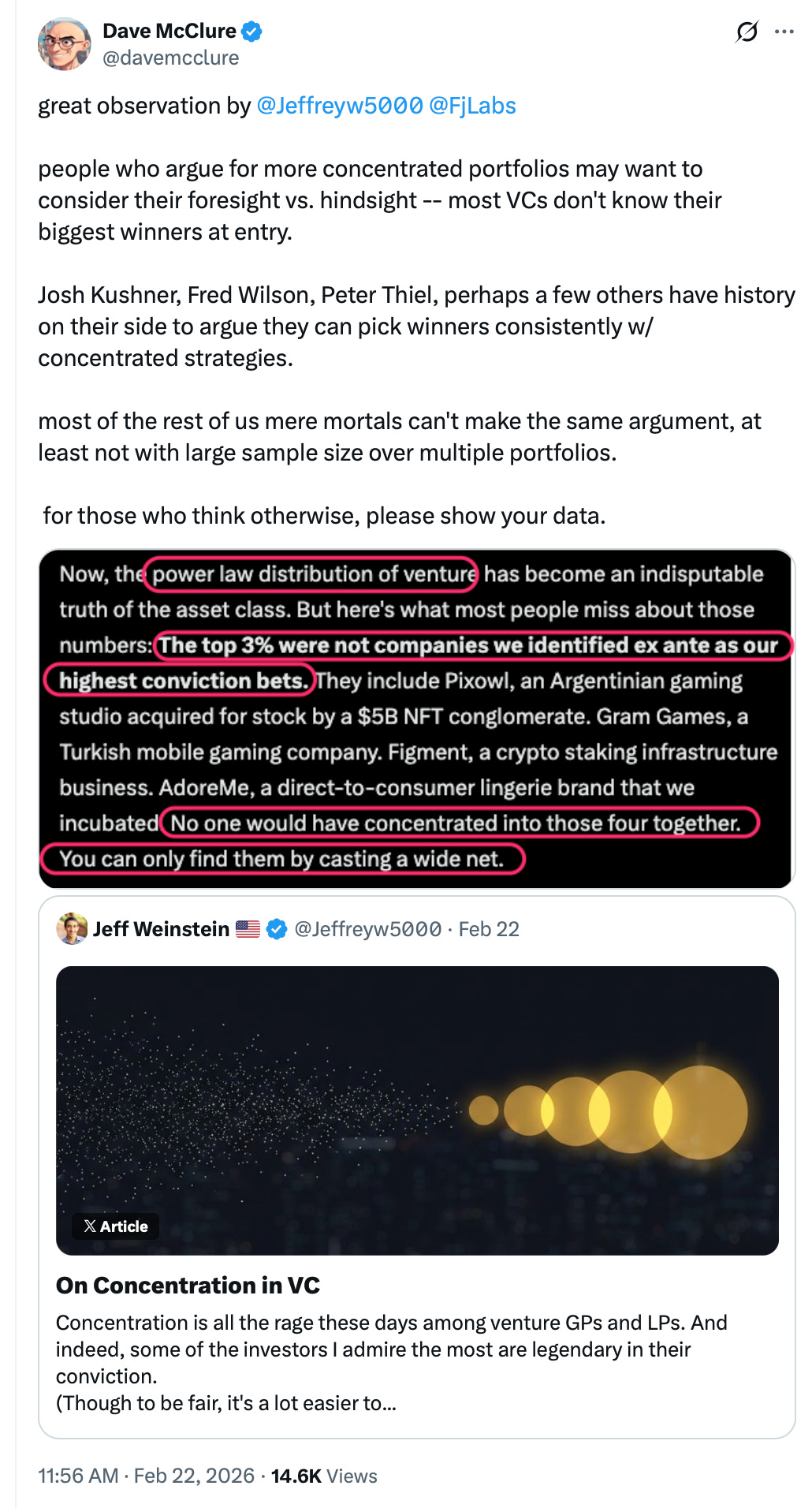

Dave McClure on Concentration vs. Diversification in VC

Author: Dave McClure and Jeff Weinstein

Date: 2026-02-22

Publication: X

https://x.com/davemcclure/status/2025661074922754259?s=20

McClure’s point is blunt and practical: most managers cannot identify their biggest winners at entry, so concentrated-portfolio advice is usually hindsight masquerading as foresight. He amplifies FJ Labs’ distribution data to argue that broad initial surface area is still the only reliable way to discover outlier outcomes.

It is a strong capstone for this week’s venture allocation debate, where “concentrate harder” and “diversify to discover” both appear true until stage, access, and selection quality are made explicit.
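McClure’s argument can be made concrete with a simple probability model. Assume, hypothetically, that a manager has no ability to pick winners at entry, so each investment independently has the same small chance p of becoming an outlier. The chance that a portfolio of n such investments holds at least one outlier is then 1 - (1 - p)^n, which rises quickly with n. The value p = 0.01 below is illustrative, not a figure from McClure or FJ Labs.

```python
def prob_at_least_one_outlier(p: float, n: int) -> float:
    """Chance that n independent picks, each an outlier with probability p, include at least one."""
    if not 0.0 <= p <= 1.0 or n < 0:
        raise ValueError("p must be in [0, 1] and n must be non-negative")
    return 1.0 - (1.0 - p) ** n


if __name__ == "__main__":
    p = 0.01  # hypothetical per-deal outlier probability
    for n in (10, 20, 50, 100):
        print(f"{n:>3} companies: {prob_at_least_one_outlier(p, n):.1%}")
```

With p = 0.01, a 20-company portfolio has roughly an 18% chance of holding at least one outlier and a 100-company portfolio roughly 63%. The equal-p, no-skill assumption is exactly what the concentration camp disputes: with genuine selection skill, p differs by deal and the math favors concentrating on the higher-p picks.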

Read more: Dave McClure on Concentration vs. Diversification in VC