This week’s video transcript summary is here. You can click on any bulleted section to see the actual transcript. Thanks to Granola for its software.

Editorial

Stewart Alsop has been watching tech for nearly five decades. When he says Sam Altman is one of the worst CEOs he’s observed — that Altman “wouldn’t recognize a strategy if it hit him in the face” — it’s worth taking seriously. The evidence he marshals is real: $180 billion raised, Sora canceled, no coherent executive team, a podcast network acquired for reasons nobody can explain.

I don’t want to argue with the observations. I want to argue with the framing.

The personality critique is the oldest and laziest form of tech journalism. It’s satisfying in the moment and explains almost nothing. If OpenAI replaced Altman tomorrow with the most disciplined, operationally rigorous CEO in the industry, would the fundamental dynamics change? Would the capital stop flowing? Would the valuation compress? Would the strategic incoherence (which is partly a matter of how early-stage the company is, partly structural, partly a function of OpenAI’s bizarre nonprofit/for-profit hybrid) suddenly resolve?

The real story isn’t Sam Altman. The real story is what kind of market produces an $850 billion valuation for a company with no coherent strategy. That’s a story about capital behaving as if it’s buying the next big thing before it is big. It’s a story about a moment so large, and yet so uncertain, that investors are more terrified of being left out than worried about being wrong. This is rational behavior for such a game-changing moment.

Alsop contrasts Altman unfavorably with Anthropic’s discipline and Musk’s delivery record. But comparisons are not fair in this fast-changing ecosystem.

The Economist asked this week how to control “the five men” who control AI. It is the wrong question.

Altman, Amodei, Hassabis, Musk and Zuckerberg are not the right focus. The actual technology, tools and capabilities are.

The cult of personality — positive or negative — is how commenters avoid the harder questions. It’s more comfortable to say “bad CEO” than to ask why the market rewards him anyway. That question is more interesting, more unsettling, and more useful.

That’s what this week is actually about.

John Thornhill’s FT piece adds a sharper diagnosis than most of the week’s commentary manages. The AI leaders now calling for calmer rhetoric didn’t just fail to prevent public anxiety — they engineered it.

The existential risk narrative was a deliberate communications strategy, optimized for credibility in Washington and credibility with investors. It worked on those audiences. It also landed, inevitably, in the broader public imagination as confirmation that the thing being built is genuinely dangerous. “We are dangerous, therefore we are important” does not land. The public only hears the first part of the sentence.

That is more than irony. It is a leadership failure dressed up as responsibility. The people who most needed a positive, honest account of what AI means for their lives (younger workers facing credential inflation, gig workers watching their market compress, communities living beside the data centers) got “we might be building something dangerous, and we need to be regulated.” That is not a message. It is a bet that safety would become a moat.

The same leaders now tasked with undoing the dread are the ones who built it. Thornhill circles that point. This editorial says it directly.

Thornhill’s other piece this week, the Lunch with the FT profile of Dario Amodei, completes the picture. Amodei has warned that AI will eliminate half of all entry-level white-collar jobs within five years. He is simultaneously raising $30 billion to build the technology doing it, funding PACs for stricter safety regulation, and coordinating with Amazon, Apple, and Microsoft on frameworks for how frontier models get released.

He describes the open-source community and smaller competitors as “chaotically oriented actors.” He says he wants AI regulated like cars and aeroplanes. Both things are true, and both serve the same interest: regulation written at Anthropic’s scale, meeting Anthropic’s compliance costs, enforced on a market where Anthropic already has a $380 billion valuation and a seat at every table.

This is not conspiracy. Sadly, Amodei and Altman, and certainly Musk and Hassabis, all believe that AI might be unsafe. On the face of it that is bizarre. AI is software operating within human-run systems, deployment controls, and institutional constraints; the real risks are mostly misuse, concentration of power, and bad governance, not science-fiction autonomy. But insofar as there are risks, the right narrative is a positive one about what the platforms can do and are doing to maximize AI’s utility while minimizing those risks. Not “help, it’s dangerous” but “wow, it’s awesome, and we are making it safe.”

It is the normal logic of incumbency: build a moat, then legislate it into a monopoly or oligopoly. The people digging AI’s grave are the same ones insisting they’re the only responsible diggers. The self-defeating prophecy (existential risk as marketing, regulation as competitive strategy) runs all the way down.

Do you want to lead, or narrate yourself as a victim? You cannot do both for long.

When I started writing this editorial I wanted to accuse the observers, and especially the critical observers of AI, of creating a cult of personality aimed at demonizing the CEOs. On reflection, it is clear that those leaders are placing themselves in the spotlight as responsible for possibly bad outcomes. They have created their own cult-like platform. In that context it is little wonder they are being demonized by listeners.

How to change that? We need real technical, business and social leadership that champions solutions and gains. Leave others to do the demonizing. Self-demonization is not just a bad look. It is a bad strategy. Maybe look at Nvidia’s Jensen Huang for a clue about how to be an AI leader.

Contents

Editorial: The Cult of Personality: AI is not about The CEOs

Essays

I Don’t Think Sam Altman Lies - Stewart Alsop

A reflective note on AI rhetoric, conflict, and responsibility - Sam Altman

Make AI safe again - John Thornhill, FT

Anthropic chief Dario Amodei: ‘I don’t want AI turned on our own people’ - John Thornhill, FT

The inevitable need for an open model consortium - Nathan Lambert

We Are Not Hard Tech Pilled Enough - Shomik Ghosh

What if a few AI companies end up with all the money and power? - Noah Smith

AI Populism’s Warning Shots - Jasmine Sun

The Internet’s Most Powerful Archiving Tool Is in Peril - Kate Knibbs

It’s not “bad marketing” from A.I. companies - Matt Yglesias

Venture

Q1 venture capital in a nutshell - Yoram Wijngaarde, Dealroom

Accel raises $5B for late-stage AI bets and expansion capital - Bloomberg

Josh Wolfe on the coming extinction of subscale VC funds - Josh Wolfe

New leaders, new fund: Sequoia has raised $7B to expand its AI bets - TechCrunch

AI

Vibe check from AI industry HumanX: Anthropic is talk of the town - Ashley Capoot, CNBC

Claude Mythos #3: Capabilities and Additions - Zvi Mowshowitz

Read OpenAI’s latest internal memo about beating the competition — including Anthropic - The Verge

Databricks: Only 19% of Organizations Have Deployed AI Agents - SaaStr

2026 AI Index Report - Stanford HAI

The Beginning of Scarcity in AI - Tomasz Tunguz

Sam Altman’s second thoughts - Casey Newton, Platformer

Anthropic’s rise is giving some OpenAI investors second thoughts - TechCrunch

Our 1.25 Humans + 20 AI Agents Closed 140% of What Our All-Human Sales Team Did Last Year - Jason Lemkin, SaaStr

LinkedIn data shows AI isn’t to blame for hiring decline… yet - TechCrunch

Claude Opus 4.7: Literally One Step Better in Every Dimension - Latent Space

OpenAI takes aim at Anthropic with beefed-up Codex - TechCrunch

The Mythos Moment - The Economist

Regulation

Infrastructure

Meta Partners With Broadcom to Co-Develop Custom AI Silicon - Meta Newsroom

Interview of the Week

Agency, Agency, Agency - Keen On (Sophie Haigney)

Startup of the Week - Nvidia-backed SiFive hits $3.65 billion valuation for open AI chips

Post of the Week - The Age of Consensus Capital - Nico Wittenborn

Essays

I Don’t Think Sam Altman Lies

Alsop Louie Partners · Apr 15, 2026 · Tags: AI, OpenAI, Leadership, Strategy

Stewart Alsop’s verdict after nearly five decades covering tech: Sam Altman is intelligent, well-intentioned, and completely unable to run a company. “He wouldn’t recognize a strategy if it hit him in the face.” The evidence is not abstract — OpenAI has raised $180 billion at an $850 billion valuation while canceling Sora, acquiring a podcast network with no coherent rationale, and building no durable executive team to show for it.

Alsop uses Anthropic’s disciplined enterprise focus and Musk’s concrete delivery record as counterpoints, though neither comparison fully holds. The question the piece raises and leaves hanging: how does a company heading toward public markets get there when its CEO has never built a high-performance team — and the board either can’t see it or won’t act?

Read more: Alsop Louie Partners

A reflective note on AI rhetoric, conflict, and responsibility

Sam Altman Blog · Apr 11, 2026 · Tags: AI, OpenAI, Narrative, Safety

Altman argues that AI competition has entered a phase where narrative warfare and political framing are beginning to shape real-world safety outcomes. The piece is partly personal — written in the wake of threats against AI executives — but its core claim is institutional: that public escalation in AI rhetoric compounds actual risk during a period of rapid capability growth. He is, in other words, asking people to turn down a temperature that he and his colleagues spent years turning up.

The uncomfortable read is not that the concern is wrong. It is that the same people most responsible for framing AI as an existential force are now surprised to find the public treating it as one. This is where the self-accounting attempt lives.

Read more: Sam Altman Blog

Make AI safe again

Financial Times · John Thornhill · Apr 2026 · Tags: AI, Safety, Narrative, Leadership

Thornhill’s argument is that the AI industry has a trust problem of its own making. The leaders who spent years amplifying existential risk narratives — framing AI as potentially dangerous to humanity, positioning themselves as the responsible stewards of something that might kill everyone — have now discovered that the public believed them. The safety discourse was not primarily about informing citizens. It was optimized for Washington credibility and investor signaling. It landed, as such messages do, in the broader imagination as confirmation that the technology poses real danger.

The piece identifies the gap without quite naming it directly: the people who most needed a compelling positive vision of what AI could mean for their lives — younger workers navigating a tightening labor market, gig workers watching their incomes compress, communities subsidizing data center electricity bills — received “we might be building something dangerous, but we’re the responsible ones doing it.” That is not a reassurance. It is a burden-transfer. The irony Thornhill documents is structural: the same leaders now calling for calmer rhetoric are the ones who built the dread. Undoing it will require more than a blog post asking people to dial down the temperature.

Read more: Financial Times

Anthropic chief Dario Amodei: ‘I don’t want AI turned on our own people’

Financial Times · John Thornhill · Apr 17, 2026 · Tags: AI, Anthropic, Regulation, Leadership

Dario Amodei is the man who says AI could eliminate half of all entry-level white-collar jobs within five years, and who is also raising $30 billion to accelerate the technology doing it. Over lunch at Cotogna in San Francisco, the Anthropic CEO presents himself as the responsible adult in the room: willing to fight the Pentagon over domestic surveillance, funding PACs for stricter safety regulation, and calling for AI to be governed like cars and aeroplanes. The framing is coherent and impeccably positioned. It is also a regulatory strategy that would entrench incumbents and raise barriers high enough to freeze out challengers. The “chaotically oriented actors” he describes are mostly the open-source community, which cannot afford his compliance costs.

The clearest signal is buried in the Project Glasswing announcement: Anthropic has now pulled Amazon, Apple, and Microsoft into a coordinated framework for how frontier models are released. Amodei calls it “a good first step.” It is also a template for who gets to set the standards — and who has to meet them. The moat, built at $380 billion, looks better with a guardrail around it.

Read more: Financial Times

The inevitable need for an open model consortium

Interconnects AI · Apr 11 · Tags: AI, Open Models, Economics

Nathan Lambert argues that near-frontier open models are drifting beyond the financial reach of any single idealistic lab, which means the open ecosystem will likely need a consortium model if it wants durable access to serious capability. The logic is straightforward and uncomfortable. Training costs keep rising, closed deployment keeps getting more profitable, and the set of organizations willing to give away their best work will keep shrinking unless the funding model changes.

The essay reframes the open-versus-closed debate as an industrial organization problem rather than a moral one. If open models matter for safety research, bargaining power, and ecosystem health, then somebody has to pay for them at scale. Lambert’s answer is not romantic, but it is probably where the real fight is headed.

Read more: Interconnects AI

We Are Not Hard Tech Pilled Enough

On The Frontier · Apr 12 · Tags: AI, Infrastructure, Energy

Shomik Ghosh’s argument is that the AI boom is being misread as a software story when it is increasingly an atoms story. Compute is constrained by power, metals, manufacturing capacity, and geopolitics, which means the companies and sectors usually treated as boring industrial backwaters may end up capturing a disproportionate share of the value. He runs through the bottlenecks plainly: EUV, rare earths, turbines, nickel, grid strain, and a world that is moving away from easy assumptions about globalized supply.

The essay reframes AI infrastructure as a portfolio and policy question, not just a data-center capex race. If software optimism depends on energy abundance and secure materials chains, then the real leverage may sit with the people building the physical substrate — a sharp corrective to the idea that hard tech has already become consensus.

Read more: On The Frontier

What if a few AI companies end up with all the money and power?

Noahpinion · Apr 13 · Tags: AI, Power, Markets, Inequality

Noah Smith argues that the recent surge in agentic coding revenue may have broken the comforting idea that AI will be strategically important but economically low-margin. If frontier model makers can dominate adversarial domains like cybersecurity, then capability does not just become a product advantage. It becomes a moat. Defenders may have little choice but to buy from the frontier, which means technical leadership can harden into pricing power and, eventually, political power.

Some of the downstream fears are speculative, but the frame is where the value lies. If AI profits concentrate in a few companies because the most important use cases reward the best models disproportionately, then the next debate is not just about innovation or safety. It is about who captures the money, who accumulates leverage, and what happens when technical superiority starts to look like structural power.

Read more: Noahpinion

AI Populism’s Warning Shots

Jasmine Sun · Apr 13, 2026

Jasmine Sun’s argument is that the attacks on AI executives are not isolated incidents of deranged violence. They are early data points in a predictable political transition. AI has moved from a domain of technical experts arguing about benchmarks and safety frameworks to a populist issue shaped by voters, electoral incentives, and economic fear. Her thesis is that once a technology gets coded as an elite project that displaces workers, democratic resistance is not a communications failure to be corrected. It is a structural condition to be managed.

The killer detail is the speed of the DC policy shift. Within weeks, conversations moved from policymakers lacking the political will to address AI at all, to everyone scrambling to design AI agendas — including OpenAI publishing a surprisingly progressive policy whitepaper. That is not a reaction to a safety argument. It is a reaction to a political signal.

Sun grounds the pattern in Carl Benedikt Frey’s The Technology Trap: when technologies take the form of capital that replaces workers rather than tools that augment them, organized resistance follows. The question is not whether opposition will grow, but whether the industry will move toward a grand bargain that directly addresses job displacement and redistributes AI’s gains. Without one, Sun argues, the escalation logic is already in place — and young people facing economic instability have no shortage of targets to blame.

Read more: jasmi.news

The Internet’s Most Powerful Archiving Tool Is in Peril

Kate Knibbs · Apr 13, 2026

Kate Knibbs argues that the fight over AI scraping is now endangering one of the web’s core public-memory institutions. The Wayback Machine is not just a convenience for nostalgists. It is infrastructure for accountability, used by reporters, researchers, and organizers to prove what governments, companies, and institutions once said before those records disappear or are quietly rewritten. As publishers escalate their defenses against AI crawlers, the thesis is that preservation itself is becoming collateral damage.

The killer detail is the asymmetry. USA Today used Wayback Machine records to report on changes in ICE detention data even while its parent company blocks the Internet Archive’s crawler, and Originality AI found 23 major news sites now blocking the archive bot altogether. That turns the archive from a shared civic utility into a shrinking patchwork shaped by private fear of downstream AI use. The pull is that if preservation gets treated as indistinguishable from scraping, the web does not simply get less open. It gets harder to verify the recent past at all.

Read more: Source

It’s not “bad marketing” from A.I. companies

Slow Boring / Matt Yglesias · Apr 17, 2026 · Tags: AI, Narrative, OpenAI, Leadership, Safety

Matt Yglesias addresses the week’s central argument head-on: the widespread claim that AI leaders’ alarming rhetoric — extinction risk, mass disemployment — is a communications strategy gone wrong. His answer is that it isn’t. These are not people who chose a scary frame to pitch investors and accidentally frightened the public. OpenAI was founded by people who sincerely held these beliefs long before GPT-2. Anthropic was founded by former OpenAI employees who thought the original company was too commercially focused and not sufficiently alert to the existential stakes. The comms teams understand that more reassuring messaging would play better. The founders don’t use it because they don’t believe it.

Yglesias tightens the frame: the surprise is real because the belief is real. If you’re trying to understand why OpenAI’s CEO keeps saying things that alarm people, the answer isn’t that he miscalculated the audience. It’s that he means it. Whether that makes the public dread more or less legitimate is a separate question. But the good-faith read of these founders is a necessary corrective to the cynicism-as-explanation that most of the week’s criticism has relied on.

Read more: Slow Boring

Venture

Q1 venture capital in a nutshell

X / Yoram Wijngaarde · Apr 16, 2026 · Tags: Venture, Data, AI, Funds

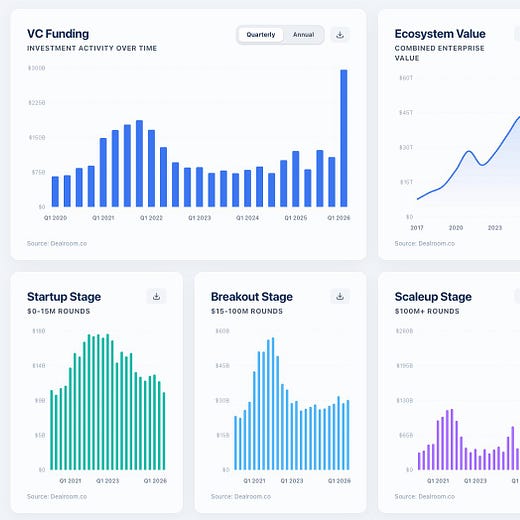

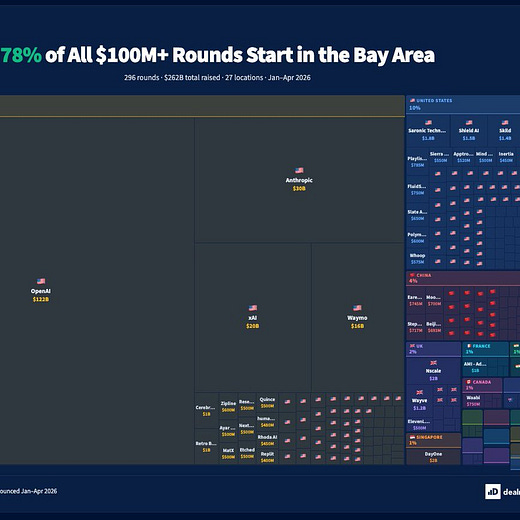

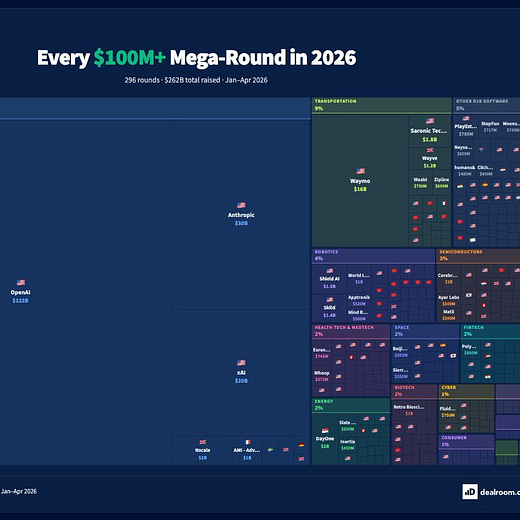

Dealroom’s Yoram Wijngaarde posted the single most clarifying chart of the quarter: Q1 2026 VC funding spiked to roughly $300 billion — more than double any quarter in the past two years. Ecosystem combined enterprise value is approaching $45 trillion. The chart breaks the story down by stage, and that is where it gets precise. Startup rounds ($0–15M) and Breakout rounds ($15–100M) are flat. The entire spike is in Scaleup rounds ($100M+), which hit ~$250 billion in Q1 alone.

This is not a broad market recovery. It is a concentration event. Capital is not flowing back into the ecosystem — it is flowing into the top of it at historic scale. Money is available in extraordinary quantities, but only for the companies and funds already at the top of the stack.
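
As a rough check on the concentration claim, the two headline figures can be compared directly. The sketch below uses only the approximate numbers quoted above; the variable and function names are illustrative and do not come from Dealroom.

```python
# Approximate figures quoted in the summary above, in billions of USD.
TOTAL_Q1_FUNDING_BN = 300.0  # "roughly $300 billion" across all stages
SCALEUP_ROUNDS_BN = 250.0    # "~$250 billion" in rounds of $100M or more


def share_of_total(part: float, total: float) -> float:
    """Return `part` as a fraction of `total`."""
    if total <= 0:
        raise ValueError("total must be positive")
    return part / total


scaleup_share = share_of_total(SCALEUP_ROUNDS_BN, TOTAL_Q1_FUNDING_BN)
remainder_bn = TOTAL_Q1_FUNDING_BN - SCALEUP_ROUNDS_BN

print(f"Scaleup share of Q1 funding: {scaleup_share:.0%}")
print(f"Left for all rounds under $100M: ~${remainder_bn:.0f}B")
```

On these rounded inputs, rounds of $100M or more account for roughly five-sixths of the quarter's total, which is the concentration the chart shows.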

Read on X: @yoramdw

Accel raises $5B for late-stage AI bets and expansion capital

Bloomberg · Apr 15, 2026 · Tags: Venture, AI, Funds

Accel closed a $5 billion raise — including a $4 billion Leaders fund — positioning itself for concentrated, later-stage AI exposure rather than broad early-seed optionality. The firm has backed Anthropic and Cursor, and the scale of the raise signals that large venture shops are betting the next phase of AI value creation will consolidate into a small number of breakout companies rather than diffuse across the market.

In practice that means mega-funds writing bigger checks into fewer names at the top, with pressure and disappearance in the middle.

Read more: Bloomberg

Josh Wolfe on the coming extinction of subscale VC funds

X / Josh Wolfe · Apr 10 · Tags: Venture, Funds, Consolidation

Josh Wolfe argues that venture is heading into another consolidation cycle. His claim is blunt: most subscale funds under $400 million will disappear, while a smaller number of mega-firms keep raising assets, broadening their product lines, and positioning themselves more like durable financial institutions than traditional boutiques.

The post shifts the lens from startup valuations to venture-firm structure, suggesting a broader shape for the market: more excess at the top, more pressure in the middle, and more power concentrating in firms with scale. Even as a short post, it is a clean signal for where the industry conversation may be heading.

Read more: X / Josh Wolfe

New leaders, new fund: Sequoia has raised $7B to expand its AI bets

TechCrunch · Apr 17, 2026 · Tags: Venture, AI, Funds

Sequoia raised roughly $7 billion for a new expansion-strategy fund — nearly double its last comparable vehicle ($3.4 billion in 2022) — positioning itself for late-stage AI investing across the US and Europe. The firm has backed both OpenAI and Anthropic, and with both reportedly eyeing public listings in 2026, the fund sizing implies Sequoia expects a significant payday and wants dry powder to stay in the game.

The details that matter: this is also the first major capital raise under Sequoia’s new co-stewards Alfred Lin and Pat Grady, signaling continuity of strategy under new leadership. With multiple large late-stage AI firms all expanding at historic scale at the same moment, concentrating into the same handful of companies, the question the fund size doesn’t answer is what happens after the IPOs.

Read more: TechCrunch

AI

Vibe check from AI industry HumanX: Anthropic is talk of the town

CNBC · Ashley Capoot · Apr 11, 2026

HumanX’s clearest signal was not another model benchmark or product launch. It was a market narrative shift. Ashley Capoot reports that among the 6,500 executives, founders, and investors at the conference, Anthropic, not OpenAI, was the company shaping the room. The thesis is that AI’s center of gravity is moving away from the general chatbot story and toward enterprise coding, where Claude Code has become the product other builders now measure themselves against.

The killer detail is Arvind Jain’s line that Claude has “become a religion,” paired with concrete operational fallout inside companies already reorganizing around coding agents. Decagon says work that once took four or five engineers can now be handled by two, while Cisco’s Jeetu Patel describes future scrum teams as two people plus six agents, or even infinite agents. That moves the conversation from tool adoption to org design.

The pull here is that momentum in AI now looks less like consumer mindshare and more like workflow capture. If the conference mood is right, the next phase of the race will be decided by which company becomes the default digital coworker inside the enterprise.

Read more: Source

Claude Mythos #3: Capabilities and Additions

Don’t Worry About the Vase · Apr 14, 2026 · Tags: AI, Anthropic, Security, Capabilities

Zvi Mowshowitz goes beyond the headline cybersecurity claims and works through what Mythos’s broader capability profile actually implies for deployment decisions, guardrails, and institutional response. The analysis refuses to treat the model release as isolated news — it connects specific capability deltas to concrete risk-management choices that organizations and regulators will need to make in the near term. If the policy arguments are about who gets access and under what controls, Mowshowitz is doing the harder work of saying what those controls actually need to govern.

Read more: Don’t Worry About the Vase

Read OpenAI’s latest internal memo about beating the competition — including Anthropic

The Verge · Apr 13 · Tags: AI, OpenAI, Anthropic, Enterprise, Competition

OpenAI chief revenue officer Denise Dresser sent a four-page internal memo to employees on Sunday laying out the company’s enterprise strategy — and it was promptly leaked to The Verge. The memo is worth reading in full, but the most consequential claim is a specific accounting attack on Anthropic’s stated $30 billion run rate. Dresser argues the number is inflated by roughly $8 billion because Anthropic grosses up its revenue-share arrangements with Amazon and Google, while OpenAI reports its Microsoft rev-share net. Both companies are reportedly planning IPOs this year, which makes that $8 billion gap more than a competitive talking point.

The memo also hits Anthropic on compute (“their strategic misstep to not acquire enough compute is showing up in the product”; customers, it says, “feel it through throttling, weaker availability”), on strategic narrowness (“you do not want to be a single-product company in a platform war”), and on narrative (“their story is built on fear, restriction, and the idea that a small group of elites should control AI”). Anthropic has been winning the conference-room mood; OpenAI is doing the math to dispute whether the underlying business warrants it.

Read more: The Verge

Databricks: Only 19% of Organizations Have Deployed AI Agents. But They’re Already Creating 97% of Databases.

SaaStr · Apr 11 · Tags: AI, Agents, Data, Enterprise

The headline number here is not that most companies have deployed agents. They have not. The more interesting signal is that once agents do get into production, they immediately start absorbing narrow but highly consequential infrastructure work. Databricks says only 19% of organizations have deployed AI agents, yet agents are already creating 97% of database branches and 80% of databases on Neon. That is a startlingly fast transfer of routine operational work from humans to machines.

The evidence cuts through the agent hype cycle. Enterprise adoption is still shallow at the organizational level, but where the loop closes, it closes hard. The practical story is not that agents are everywhere. It is that in the right workflows they become default operators much faster than management narratives usually admit.

Read more: SaaStr

2026 AI Index Report

Stanford HAI · Apr 13 · Tags: AI, Research, Data, Policy

AI capability is not plateauing — it is accelerating while the frameworks meant to govern it fall further behind. Stanford’s annual 400-page data census of the field documents a year in which coding benchmark performance went from 60% to near 100% on SWE-bench Verified, organizational AI adoption hit 88%, and generative AI reached 53% of the global population in just three years, faster than the PC or the internet ever did.

The sharpest detail is the jagged frontier: the same models that earned a gold medal at the International Mathematical Olympiad read analog clocks correctly only 50.1% of the time. The US-China performance gap has effectively closed, with Anthropic’s best model now leading DeepSeek by just 2.7%. US private AI investment hit $285.9 billion, more than 23 times China’s. Yet the number of AI researchers and developers moving to the United States has dropped 89% since 2017, with 80% of that decline happening in the last year alone.

The public-expert trust gap may be the most consequential number in the report: 73% of AI experts expect a positive impact on how people do their jobs. Among the general public, that figure is 23%.

Read more: Stanford HAI

The Beginning of Scarcity in AI

Tomasz Tunguz / Theory Ventures · Apr 13 · Tags: AI, Infrastructure, Compute, Venture

Tomasz Tunguz argues that the era of abundant, cheap AI compute is over — and the data backs it up hard. Nvidia Blackwell GPU rental prices jumped 48% in sixty days, from $2.75 to $4.08 per hour. CoreWeave raised prices 20% while extending contract minimums from one year to three. Anthropic’s newest model is accessible to roughly 40 organizations globally. OpenAI’s own CFO said the company is making “tough trades” on what not to build because compute is simply not there.

The underlying constraint behind enterprise competition, infrastructure attacks, and concentration-of-power arguments is the same. Scarcity is not a temporary bottleneck. It is the new structural reality, and it will determine who can play at the frontier for years.
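
The size of the Blackwell rental increase can be checked from the two hourly prices quoted above. This is a minimal sketch, assuming simple percentage change is the intended measure; the function name is hypothetical.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new`."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100


# Hourly Blackwell rental prices quoted in the summary above (USD).
OLD_PRICE_PER_HOUR = 2.75
NEW_PRICE_PER_HOUR = 4.08

blackwell_jump = pct_change(OLD_PRICE_PER_HOUR, NEW_PRICE_PER_HOUR)
print(f"Blackwell rental price change: {blackwell_jump:.1f}%")
```

The result is consistent with the 48% figure cited in the piece.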

Read more: Tomasz Tunguz

Sam Altman’s second thoughts

Platformer / Casey Newton · Apr 14 · Tags: AI, OpenAI, Safety, Narrative

Casey Newton’s argument is simple and uncomfortable: OpenAI’s leaders spent years warning that AI poses existential risks, and now they are surprised that the public is anxious about it. After a series of violent incidents targeting AI executives, Altman called for calmer rhetoric. Newton points out that Altman himself has previously described the development of superhuman intelligence as “probably the greatest threat to the continued existence of humanity.” The concern he is now asking people to dial down is, in large part, concern he helped create.

The killer detail is the policy contradiction. The same company urging de-escalation actively lobbied against AI safety legislation in California and worked to limit EU AI regulation. The public surveys now showing majorities worried about AI speed are not irrational — they are downstream of the narrative OpenAI chose to build its brand on. Newton’s piece does not resolve the tension, but it names it with unusual precision.

Read more: Platformer

Anthropic’s rise is giving some OpenAI investors second thoughts

TechCrunch · Apr 15 · Tags: AI, Anthropic, OpenAI, Venture, Valuation

The FT is reporting that some investors who have backed both OpenAI and Anthropic are starting to reweight toward the latter. The telling detail: one shared investor said justifying OpenAI’s most recent fundraising required underwriting an IPO valuation of $1.2 trillion or more, making Anthropic’s current $380 billion valuation look like the relative bargain. That is a remarkable inversion from a year ago, when Anthropic was the smaller challenger scrambling for compute and enterprise credibility.

If sophisticated crossover investors are quietly repositioning before two major IPOs, the shift in conference-room mood is not just sentiment — it is starting to show up in how money is being placed.

Read more: TechCrunch

Our 1.25 Humans + 20 AI Agents Closed 140% of What Our All-Human Sales Team Did Last Year. But I’m Not Sure That’s the Real Story.

SaaStr / Jason Lemkin · Apr 15 · Tags: AI, Agents, Sales, Enterprise

Jason Lemkin replaced most of his human sales team with AI agents, kept 1.25 humans, and in Q1 closed 140% of the prior year’s all-human revenue. He then spends the rest of the essay refusing to let that headline do all the work, and that’s what makes it worth reading. His honest accounting: AI agents gave the team two things that had nothing to do with intelligence. First, 100% lead coverage at any hour — versus the 40% or less that humans bothered to respond to. Second, lead concentration in his best closers, because with fewer humans, every qualified inbound went to the people who actually knew how to close. Lemkin estimates those two structural effects — coverage and concentration — may account for most of the gain. The AI “smarts” part is real but may be the smaller variable.

The value of AI in the workflow is often mundane, structural, and large: you show up to everything, and you stop diluting your best people.

Read more: SaaStr

LinkedIn data shows AI isn’t to blame for hiring decline… yet

TechCrunch · Apr 15 · Tags: AI, Workforce, Labor, Data

LinkedIn’s chief global affairs officer confirmed at the Semafor World Economy summit that the company’s billion-member economic graph shows hiring down roughly 20% since 2022 — but says the data does not point to AI as the cause. The expected impact zones (customer support, admin, marketing) are not declining faster than the rest. College entrants aren’t getting hit harder than mid-career workers. The most likely culprit, he said, is rising interest rates. The “yet” in the headline is doing significant work: LinkedIn is looking at models that have been widely deployed for, at most, eighteen months. The lag between capability deployment and measurable workforce reorganization may be longer than headlines assume.

AI may be reorganizing work faster in some narrow domains than the aggregate numbers suggest — and the aggregate may look benign right up until it doesn’t.

Read more: TechCrunch

Claude Opus 4.7: Literally One Step Better in Every Dimension

Latent Space · Apr 17, 2026 · Tags: AI, Anthropic, Models, Capabilities

Anthropic launched Claude Opus 4.7 this morning, and the benchmark story is unusually clean: 4.7-low is strictly better than 4.6-medium, 4.7-medium is strictly better than 4.6-high, 4.7-high beats 4.6-max, and a new xhigh effort level now becomes the default for Claude Code. On SWE-Bench Pro, the coding benchmark that matters most for enterprise adoption, Opus 4.7 is 11 points higher than its predecessor. List pricing is unchanged at $5/$25 per million input/output tokens.
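
For a sense of what the unchanged list price means per request, here is a minimal arithmetic sketch. The two prices are the ones quoted above; the function name and the example token counts are hypothetical, and the calculation ignores any discounts, caching, or batch pricing a provider might offer.

```python
# List prices quoted above, in US dollars per million tokens.
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost in dollars of a single request."""
    return (
        input_tokens * INPUT_PRICE_PER_MTOK
        + output_tokens * OUTPUT_PRICE_PER_MTOK
    ) / 1_000_000


# Hypothetical workload: a 200,000-token prompt and a 20,000-token reply.
print(f"${request_cost(200_000, 20_000):.2f}")  # prints $1.50
```

Output tokens cost five times as much as input tokens at these rates, so long agentic runs that generate a lot of text are where the bill grows fastest.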

The capability addition that isn’t just a benchmark number: vision. Opus 4.7 accepts images up to 2,576 pixels on the long edge — roughly 3.75 megapixels, more than three times prior Claude models — opening up computer-use agents, dense screenshot reading, and precision visual workflows that previously required workarounds. The week ends with Anthropic holding the model quality lead it spent the previous five days defending against OpenAI’s competitive pressure. The question is whether compute scarcity, Anthropic’s API reliability track record, and enterprise availability can keep pace with what the benchmarks now show.

Read more: Latent Space

OpenAI takes aim at Anthropic with beefed-up Codex that gives it more power over your desktop

TechCrunch · Apr 16, 2026 · Tags: AI, OpenAI, Anthropic, Coding, Agents

OpenAI revamped Codex to run as a background agent on your Mac — opening apps, clicking, typing — while you continue working in other windows. The direct comparison is Claude Code, which the TechCrunch piece frames openly: “there is currently a low-grade war between OpenAI and Anthropic over who can release the most convenient and powerful AI coding tools, and so far Anthropic seems to be winning.” Codex now lets you deploy multiple parallel agents on your desktop, all running background tasks without interfering with active work.

The enterprise mindshare contest has favored Anthropic in conference-room sentiment and investor repositioning; OpenAI’s Codex revamp is the product response. Whether parallel desktop agents are the right answer to the competitive gap, or whether the gap has now widened further with the Opus 4.7 launch, is a question the next few weeks will answer.

Read more: TechCrunch

The Mythos Moment — Economist Insider

The Economist · Apr 2026 · Tags: AI, Safety, Regulation, Anthropic, China, US Politics

The Economist’s Editor-in-Chief Zanny Minton Beddoes convenes her masthead — Deputy Editor Edward Carr, AI writer Alex Frantz, US editor John Prideaux, and China correspondent Corbyn Duncan — for a 46-minute reckoning with what Anthropic’s Mythos model means for AI governance, US-China competition, and the fast-deteriorating public trust in AI.

The technical baseline comes from Frantz: Mythos is not a modest capability increment. The previous top-tier Anthropic model produced two working exploits out of hundreds of attempts against a known Firefox vulnerability. Mythos produced 181 — and 27 of those went further, building full attack chains that reached the Windows registry. Anyone with access becomes, effectively, a tier-one hacker regardless of their own technical background. Anthropic’s response was to release it to only 11 pre-vetted partners (Apple, Microsoft, the Linux Foundation among them) — what the panel calls the Glasswing model — while charging those partners heavily for compute and positioning them as defenders who can patch vulnerabilities before the model reaches the public.

The political reaction in Washington, reported by Minton Beddoes from her week of meetings, was sharp and fast. Treasury Secretary Scott Bessent and Fed Chair Jay Powell convened an emergency meeting with major banks within days of the announcement. The Trump administration, which arrived in office explicitly accelerationist and dismissive of the Biden safety framework, has done what Prideaux calls “a 180.” Minton Beddoes’s read: Mythos may have been the wake-up call that, in her prior analysis, she had assumed would require an actual AI disaster to produce.

Three other threads run through the discussion. First, the Pew data: 50% of Americans report feeling more concerned than excited about AI, and the US leads the world in that metric — a striking inversion for a country that built the technology. The public trust deficit predates Mythos and will outlast it. Second, the China question: Chinese labs are compute-constrained and cannot yet fast-follow a model that was never released publicly, but that lead is measured in months, not years. Corbyn Duncan’s reporting suggests China is simultaneously optimistic about AI applications and beginning to suppress domestic anxiety about job displacement. Third, Minton Beddoes identifies the policy vacuum that the panel finds most alarming: Congress cannot pass meaningful legislation before 2028, by which time the capability curve will have already arrived at bioweapons territory — where, as Frantz notes, there is no patch for human biology and no Glasswing-style managed release. You either don’t build it, or it’s out.

The constructive note, and the panel pushes for one, is that Anthropic’s choice to restrict Mythos was not inevitable. That the first lab to cross this threshold voluntarily held its model back is itself a data point, and it could easily have gone the other way. The question is whether the leaders who built the dread, raised the capital, and set the narrative can now build the institutions to govern what they’ve made. The Economist’s answer, delivered with Minton Beddoes’s characteristic optimism, is: possibly, and the window is shorter than anyone wants.

Watch: Economist Insider

Regulation

Uncovering Webloc: An Analysis of Penlink’s Ad-based Geolocation Surveillance Tech

Citizen Lab · Apr 11, 2026 · Tags: Regulation, Surveillance, Privacy, AI

Citizen Lab documents how ordinary advertising data exhaust — the kind generated by every app on every phone — is being commercially packaged into persistent geolocation intelligence for law enforcement and intelligence agencies. Penlink’s Webloc tool recombines ad-tech signals at scale without the legal safeguards that typically govern direct telecom surveillance, creating a shadow surveillance layer that operates largely outside existing regulatory frameworks.

The same data infrastructure that makes targeted advertising work also makes persistent human tracking routine and cheap. That is not a future risk. It is the current operating condition.

Read more: Citizen Lab

Two Cyber Models, Two Opposite Bets. The Subsidy Era Ends.

Implicator.ai · Apr 15 · Tags: Regulation, AI, Security, Access

OpenAI and Anthropic launched AI cybersecurity models within one week of each other, and the gap between their distribution strategies is the story. OpenAI’s GPT-5.4-Cyber goes to thousands of verified defenders — anyone who can prove they work in security. Anthropic’s Mythos Preview goes to roughly 40 vetted organizations. Same capability class, opposite bets. OpenAI is wagering that auditing identity at scale is more effective than gatekeeping access. Anthropic is betting that tight restriction is the more defensible posture for a dual-use tool that can find vulnerabilities as easily as it can report them.

The killer detail is operational rather than philosophical. Anthropic’s API uptime over the past 90 days sat at 98.95% — well below the 99.99% standard enterprise contracts typically require. Retool’s founder migrated to OpenAI despite acknowledging model quality parity. That means Anthropic is restricting access on principle while also struggling to deliver reliable access to the organizations already inside the gate. Marcus Schuler’s deeper argument is that both distribution decisions signal the end of the subsidized AI era: as compute costs force providers toward usage-based pricing and tighter controls, the free-floating “ship it and see” phase is closing. The labs are now being forced to make explicit bets about who gets what, and those bets will define the cybersecurity landscape.
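
To make the gap between those two uptime figures concrete, here is a small arithmetic sketch. The percentages and the 90-day window come from the paragraph above; the function name is hypothetical, and the calculation assumes uptime is measured simply as a share of wall-clock time over the window.

```python
HOURS_PER_DAY = 24


def downtime_hours(uptime_percent: float, window_days: int = 90) -> float:
    """Convert an uptime percentage into hours of downtime over a window."""
    total_hours = window_days * HOURS_PER_DAY
    return total_hours * (100.0 - uptime_percent) / 100.0


reported = downtime_hours(98.95)  # figure cited for Anthropic's API
target = downtime_hours(99.99)    # standard cited for enterprise contracts

print(f"98.95% uptime: about {reported:.1f} hours down in 90 days")
print(f"99.99% uptime: about {target * 60:.0f} minutes down in 90 days")
```

Under that assumption, 98.95% works out to a little under 23 hours of downtime in a quarter, against roughly 13 minutes at 99.99%, a difference of about a hundredfold.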

Read more: Implicator.ai

Infrastructure

Meta Partners With Broadcom to Co-Develop Custom AI Silicon

Meta Newsroom · Apr 14 · Tags: Infrastructure, AI, Chips, Silicon

Meta announced an expanded partnership with Broadcom to co-develop multiple generations of its MTIA (Meta Training and Inference Accelerator) chip — the custom silicon that powers AI across all of Meta’s apps. The deal covers chip design, advanced packaging, and networking, with four new MTIA generations planned over the next two years to handle ranking, recommendations, and generative AI workloads at scale.

The strategic point is supply chain sovereignty. Rather than relying entirely on Nvidia for inference at Meta’s volume, the company is building a proprietary silicon layer for the workloads it can predict and control. With GPU rental prices up 48% in sixty days and CoreWeave extending contract minimums to three years, Meta’s move illustrates how the largest AI consumers are now treating chip access as a long-term structural problem, not just a procurement cycle.

Read more: Meta Newsroom

Interview of the Week

Agency, Agency, Agency

Source: Keen On · Published: Apr 15, 2026

“Dictators are quite high agency.” Sophie Haigney’s New York Times op-ed landed on April Fools’ Day but was not a joke: “agency” has become the defining buzzword of Silicon Valley bro culture, promoted for its own sake by the very people building technologies designed to rob everyone else of theirs. Haigney — former web editor of The Paris Review — argues that Altman, Zuckerberg, and the broader tech leadership class keep demanding that people become “high agency” while deploying addictive, attention-capturing, and decision-automating systems that do the opposite. Keen’s interview draws out the core contradiction sharply: agency without a value system attached to it isn’t empowerment. It’s just power, and the people who talk about it most insistently tend to be the ones accumulating it.

This is the sharpest cultural critique of the week’s underlying theme. The editorial opens by asking what kind of market rewards strategic incoherence with an $850 billion valuation. Haigney’s answer is structural: a culture that prizes high agency as a terminal value, divorced from any accountability for what that agency does, produces exactly the leaders and companies we’re now arguing about.

Listen: Keen On

Startup of the Week

Nvidia-backed SiFive hits $3.65 billion valuation for open AI chips

Source: TechCrunch · Published: Apr 11, 2026

SiFive raised an oversubscribed $400 million round at a $3.65 billion valuation, and the interesting part is not just the size. It is the stack position. SiFive licenses open RISC-V CPU designs rather than selling chips directly, which gives it a shot at becoming a neutral architectural layer inside the AI infrastructure buildout. Nvidia joining the round matters because it suggests the dominant GPU company would rather expand the ecosystem around its software and interconnect standards than force every customer into the same proprietary CPU path.

That makes SiFive a strong startup pick for this issue because it sits exactly where several of this week’s threads meet: open versus closed systems, the scramble for AI infrastructure, and the growing importance of physical stack leverage beneath the model layer. If AI demand keeps pulling the industry downward into chips, power, and interconnects, SiFive is well positioned to matter.

Read more: TechCrunch

Post of the Week

The Age of Consensus Capital

@ncsh (Nico Wittenborn) · Apr 15

Editor’s Note: Two numbers. One devastating diagnosis of what venture capital has become. Wittenborn’s post landed like a fire alarm on Tuesday, drawing in Turner Novak (“turns out VCs were the ChatGPT wrappers all along”), veteran investor @honam warning LPs that this “creates systemic risk,” and @robgo tracing the root cause to the collapse of mid-size exits: when there are no diamonds-in-the-rough stories to tell, everyone chases the same five names.

The pessimism is earned. But there are two reasons for optimism that the doom-posters are missing.

First, concentration creates its own antidote at the seed level. When 75% of money crowds into five companies, the founders being ignored are exactly the ones a good seed investor should be finding. That has always been the job — what changes now is the scale of the opportunity. The more extreme the consensus at the top, the more overlooked the outliers below it.

Second, if that concentrated capital generates even a 2x return, the cash flowing back to LPs will be enormous. History says LPs mostly redeploy winnings into the same large funds that delivered — institutional inertia is real. But this cycle is different in scale. If $500B+ flows back into LP portfolios from AI, even a small percentage shift toward emerging managers is a massive absolute number. The seed opportunity doesn’t require a structural change in LP behavior. It just requires a few smart LPs to notice that the next OpenAI won’t look like OpenAI when it’s raising a seed round.

The age of consensus capital is real. So is its upside — for those positioned to catch what it’s missing.

Read on X: @ncsh